Pinned

Welcome to Clief Notes. Here's where to start.

1. Watch the intro video and introduce yourself in the intro post here.
2. Start with The Foundation (free course). Concepts, folder architecture, prompting framework. Everything else builds on this.
3. Check in at the bottom of each lesson. Polls, discussion posts, other members working through the same stuff. Use them.
4. When you're ready to build real things, move to Implementation Playbooks (Level 2). When you're ready to build your own tools, Building Your Stack (Level 3).
5. Post your work. Ask questions. Help others when you can.

What are you here to build?

Poll

4417 members have voted

Pinned

🌶️ CINCO DE MAYO FIRESALE — STARTS NOW 🌶️

Locked in for the next 5 days only. Ends May 5th at 10:00 AM EST. No exceptions.

🎉 Premium: $27 → $14/mo
🎉 VIP: $97 → $67/mo

The closest you'll get to our original launch pricing. We're doing this because the community has shown up for us, and we want to show up back. 🤝

🔥 Already a member? Read this carefully. To lock in the new rate, you need to:

1. Cancel your current plan
2. Re-sign under the new price

That's the only way the system can apply the new rate. We have way too many members for manual refunds, so we can't refund anyone who just signed up at current pricing. But the savings stack month over month, so if you plan to stick around (and you should 😁), the math works out fast.

🚫 A few ground rules: Please do not DM me or Jake about pricing, exceptions, or extensions. We love you, but we're a small team and we need to stay focused on building. Everyone gets the same window. Everyone gets the same deal. If you miss it, you miss it. We'll do more things for the community down the road.

⏰ The clock:
🟢 LIVE NOW
🔴 Locks May 5th, 10:00 AM EST

- Premium gets you The Vault and Afternoon Tea calls.
- VIP gets you The Drawing Room, High Tea, and bespoke folder builds from Jake himself.

If you've been on the fence, this is the moment. 🚀 Tag a friend who needs to be in here. Let's make Cinco a movement. 🎊

🌶️🌶️🌶️

Pitching a 4-Day-to-1-Hour Automation to our Global Head of AI

I just landed a meeting that feels both terrifying and incredibly exciting. Tomorrow, I’m sitting down with the Head of AI and Implementation for the entire global group I work for, a corporation with 14,000 employees across 72 countries.

The Backstory

If you’ve followed my previous posts, you know I’ve been fighting "manual hell" in our D365 F&O system using Claude Code and Playwright. Since those posts, I’ve evolved the concept. It’s no longer just a one-off script; it’s a scalable framework that can handle almost any repetitive task in D365.

The Bold Email

Yesterday, I took a chance and sent a direct email to the Head of AI: "I was wondering if we will be able to use Claude Code in the near future? We have Copilot, but what about the rest? For me, this is about automating the boring, repetitive tasks in finance that a monkey could do."

His response came today: "We are procuring Claude Code for developers now... Let’s have a short call. My calendar is open."

The "Finance Guy" Advantage

My boss, who has been with the company for 15 years, is skeptical. Her experience is that "high-level" AI projects rarely trickle down to the people doing the actual work. Corporate roadmaps are often too busy with the big picture to notice the daily grind.

But that’s my edge. I’m not an AI specialist looking for a problem to solve. I’m a Finance Manager who is the problem. I have the domain insight they lack. I know exactly where the work hurts because I’m the one doing it. The central AI team has a roadmap to follow, but they aren't necessarily looking at the daily operations at the bottom of the ladder.

The Goal: 4 Days down to 1 Hour

The project I’m presenting tomorrow is a massive upgrade of my previous work. I’m aiming to take a task that currently takes 3 to 4 days of manual labor and cut it down to 1 hour. It’s 100x more precise than my first version, and because our processes are standardized, it’s scalable across all 72 countries.

I’m heading into this meeting with the support of my boss and the time to make it happen.

My AI Operational workflow

Hello all, this is a mixture of two AI outputs based on the AI operational workflow I use for my personal projects. Sharing as food for thought and discussion. There's a lot to read here, and I'm not sure how well the formatting will hold up. Any feedback is welcome. I have lots more ideas in the works to take this further, but as of now it's performing very well, even with lower-end models (in fact, I've been testing and improving it by feeding the outputs of weak local models back to frontier models, which adjust the setup so the same issues don't occur again).

The approach is to make agent work explicit, bounded, and evidence-driven.

Agents are given:

- A map.
- A workflow.
- A small reading list.
- Known pitfalls.
- Specialist help.
- Verification gates.
- Review expectations.
- Examples of good output.

Humans get:

- More predictable agent behavior.
- Cleaner handoffs.
- Easier review.
- Less repeated explanation.
- A mechanism for turning mistakes into durable process improvements.

The most important shift is cultural: stop treating AI assistance as a chat transcript and start treating it as an operational system. The files, prompts, rubrics, checklists, and gotchas are the system. The agent is just the worker moving through it.

# Generic AI Agent Setup Overview

This document describes a reusable, high-level approach for setting up a repository so AI agents can work inside it reliably. It intentionally avoids product-specific, stack-specific, and implementation-specific details. The focus is the operating model: how agents discover context, choose the right workflow, avoid repeated mistakes, produce evidence, and hand work back to humans in a reviewable state.

The core idea is to treat AI agents like fast but context-limited contributors. They need a clear map of the repository, explicit rules for what they may and may not change, task-specific reading lists, examples of good finished work, and gates that force them to prove outcomes rather than merely assert confidence.

---

## 1. The Overall Philosophy

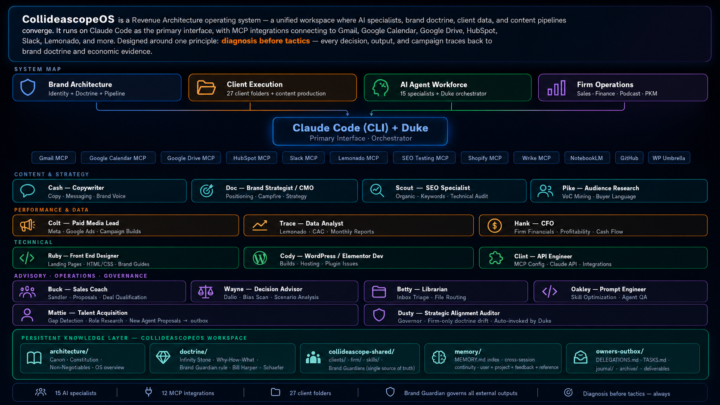

The Folder System Became My Agency

Twenty-four days ago I posted about Jake's folder system video. This is what happened next. Same foundation: markdown files, orchestration prompts, clear roles. I just kept building. Fifteen named specialists. Each one with a soul file, guardrails, and a playbook. Duke orchestrates. Cash writes. Trace pulls the data. Hank runs the financials. Clint handles the MCP integrations. Behind each one is either a human counterpart doing the real work alongside them or a role I can't afford to hire yet. Katie, who's been with me for 18 years, now has her own orchestrator running the same system. Twenty-seven client folders. Twelve live MCP integrations. One shared repo. The folder system isn't replacing my agency. It's becoming my agency. Jake gave me the unlock. This is how it's going.

skool.com/quantum-quill-lyceum-1116

Jake Van Clief, giving you the Cliff notes on the new AI age.