
How I Turned SKOOL Docs Into a Working AI System

Bottom line: Two hours. Jake's frameworks went from a folder to live tools running in my workspace.

Most community content has a 48-hour half-life. You read it, save it, and it ends up somewhere it influences nothing. The content is fine. The structure is the problem.

Here's what I did:

--Organized the vault: Cleaned up 30+ scattered files, classified by type, split into five sections. A file you can't find in 15 seconds doesn't exist.
--Built an auto-ingestion pipeline: Scheduled task runs nightly. Drop anything new into _Inbox, it classifies and routes itself. New content stays in its lane.
--Converted three frameworks into live skills:
- Council of 5: runs on command. Five advisor perspectives on any decision, simultaneously.
- 60/30/10 Triage Rule: installed into workspace operating rules. Applies to every task without prompting.
- Discovery Call SOP: no longer a document you read mid-call. Phases through pre-call, live support, and debrief automatically.
--Audited workspace documentation: Cut 30-40% from routing and context files. Tighter files, faster responses.

Before: Jake's frameworks lived in a folder. After: three of them are running.

The best part is that it compared the content against my current business needs and filtered out resources that weren't relevant to me (yet). For example, @Curtis Hays' full agency or @Roc Lee and his awesome conference talk engine.

If you want to replicate it, I've attached a step-by-step guide with the exact prompts I used across all five sessions.

Thank you ALL for your inspiration, and thank you to @Jake Van Clief for building this incredible community.
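For anyone curious what the auto-ingestion step in this post might look like outside an AI workspace, here is a minimal sketch in Python. It is not the author's pipeline: the keyword rules, the section names (`SOPs`, `Frameworks`, `Transcripts`, `Unsorted`), and the function names are all assumptions for illustration. Only the `_Inbox` folder name comes from the post. A nightly scheduler such as cron would be responsible for calling `route_inbox`.

```python
from pathlib import Path
import shutil

# Hypothetical keyword -> section rules. The post mentions five sections
# but does not name them, so these are placeholders.
RULES = {
    "sop": "SOPs",
    "framework": "Frameworks",
    "transcript": "Transcripts",
}
DEFAULT_SECTION = "Unsorted"  # assumed fallback for unmatched files


def classify(filename: str) -> str:
    """Pick a section from keywords in the file name."""
    lowered = filename.lower()
    for keyword, section in RULES.items():
        if keyword in lowered:
            return section
    return DEFAULT_SECTION


def route_inbox(vault: Path) -> list[tuple[str, str]]:
    """Move each file in vault/_Inbox into its section folder.

    Returns (file name, section) pairs for the files that were moved.
    Files whose name already exists in the target section are left in place.
    """
    inbox = vault / "_Inbox"
    moved: list[tuple[str, str]] = []
    if not inbox.is_dir():
        return moved
    for item in sorted(inbox.iterdir()):
        if not item.is_file():
            continue
        section = classify(item.name)
        target_dir = vault / section
        target_dir.mkdir(parents=True, exist_ok=True)
        target = target_dir / item.name
        if target.exists():
            continue  # never overwrite an existing note
        shutil.move(str(item), str(target))
        moved.append((item.name, section))
    return moved
```

Classifying by file name is the simplest possible rule. The post implies the classification is done by the AI workspace itself, so treat `classify` as a stand-in for whatever does that judgment.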

Cinematic prompt methodology, as an installable Claude workspace

Most AI image work fails at the brief, not the model. Three lines in. Generic out. People blame the tool.

I shipped a fix. Pushing-creation installs into Claude. Drop reference images into refs/. Run /frames-brainstorm. Claude reads your refs, runs a DP-style interview, and writes the style pack live as you answer. /frames-shotlist drafts the full storyboard. /frames-shot polishes individual frames.

Output is markdown. Drop it into PUSHING FRAMES, Midjourney, Sora, or any tool.

→ github.com/PUSHINGSQUARES/pushing-creation

To install, paste this into Claude: "Set me up with pushing-creation from github.com/PUSHINGSQUARES/pushing-creation. Read INSTALL_WITH_CLAUDE.md and walk me through it."

You don't get better output by prompting harder. You get better briefs by treating the model like a director of photography. Specificity transfers craft.

Stop prompting. Start defining outcomes.

Read the deep-dive: https://aris-space.com/documents/workspaces/pushing-creation

Every session is an audition

Most AI sessions complete a task and die. The system doesn't get smarter, it gets used.

I'd been noticing this for months. Every new chat starts cold. Same context re-explained. Same one-off scripts rebuilt by hand. Strong reasoning lost. Repeated failure modes rediscovered.

The fix isn't a better memory system. It's a mindset shift, encoded into how every session runs.

The shift

Don't do the task. Build the workflow that does this task and every future one like it. That's it.

Two jobs per session, not one. Job one is the thing I asked for. Task, content, feature, fix. Job two is inheritance. Did the session spot a repeatable pattern? Did it ship the permanent fix, or at least flag the opportunity? Did it leave the system in a better state than it found it?

Two trigger surfaces

1. Repeated tasks. Same operation done three or more times. Stop doing it manually. Ship the workflow.
2. Recurring failure modes. Patterns I keep re-correcting belong in a guardrail, not a re-prompt. The session that finds a new failure mode is the one that encodes it.

The session doesn't have to act on every signal. But it should notice and ask whether it's worth formalizing.

The reversibility floor

Auto-promotion only works if undo is clean. Anything shipped this way lands as one isolated artifact. One commit. If it goes wrong, undoing it is a single operation, and the artifact moves to a trash folder rather than being deleted. Record stays.

Reversibility is what makes aggressive shipping safe. Without it every proposed upgrade needs manual review, which defeats the point.

The success moment

One line back in chat: I noticed X, found a better way. The system just got an upgrade.

Not a transcript. Not a report. One line. Green-light or kill.

The takeaway

Sessions don't get re-summoned. But they can leave inheritance. Every session is an audition. Not for the model's job security. For the infrastructure that the next hundred sessions will inherit.

Full breakdown.
The mindset shift, the trigger surfaces, the reversibility floor, and what it changes about how I run AI workflows, all live here:
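Separately from the linked breakdown, two mechanics from this post are small enough to sketch in Python: the "three or more times" trigger and the trash-folder undo. This is an illustration under assumptions, not the author's setup. The function names, the task-log format, and the timestamped trash naming are all hypothetical, and the post's "one commit" undo is modeled here as a plain file move rather than a version-control operation.

```python
from collections import Counter
from pathlib import Path
import shutil
import time

REPEAT_THRESHOLD = 3  # "three or more times", per the post


def workflow_candidates(task_log: list[str]) -> list[str]:
    """Return tasks that appear often enough to deserve a workflow."""
    counts = Counter(task_log)
    return sorted(task for task, n in counts.items() if n >= REPEAT_THRESHOLD)


def retire(artifact: Path, trash_dir: Path) -> Path:
    """Undo a shipped artifact by moving it to a trash folder, not deleting it."""
    trash_dir.mkdir(parents=True, exist_ok=True)
    # Prefix with a timestamp so the record of what was retired, and when, stays.
    target = trash_dir / f"{int(time.time())}-{artifact.name}"
    shutil.move(str(artifact), str(target))
    return target


def restore(trashed: Path, original: Path) -> Path:
    """Bring a retired artifact back to its original location."""
    original.parent.mkdir(parents=True, exist_ok=True)
    shutil.move(str(trashed), str(original))
    return original
```

Because `retire` and `restore` are each a single move, the undo stays a single operation, which is the property the reversibility floor depends on.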

[FREE GUIDE] Built AI versions of 15 of my favorite creators over the last 3 months.

Built AI versions of 15 of my favorite creators over the last 3 months. Mostly for myself. Yesterday someone asked me how. Today a few more people did. So here's the entire blueprint, free.

Pick any YouTube channel. Run Claude Code. Paste one message. Wait 30 minutes. You now have an AI tutor that's read every video on that channel and can answer questions like the creator would.

Hormozi-bot for pricing. VanClief-bot for whatever you've been meaning to ask Jake at 2am (Spoiler Alert: this happens a LOT). Your favorite teacher, on tap.

Repo: https://github.com/aaronb458/youtube-tutor-template

Free version runs on your laptop. Paid version (~$5-10/mo, with $200 in free Deepgram credits to start) lives in the cloud and plugs into Claude.ai as a permanent tool. Both versions are walked through end-to-end by Claude itself. You don't need to know what an API key is, what a database is, or what "deploy" means. You click links. You paste things back. That's it.

Posting it here because @Jake Van Clief's content rewired how I think about creative + business + software being one unified system. The only way I know how to repay that is to make sure people in this community can mainline it the same way.

Try it. Break it. Tell me what's confusing. I'll fix it.

![[FREE GUIDE] Built AI versions of 15 of my favorite creators over the last 3 months.](https://media0.giphy.com/media/v1.Y2lkPTQ3ZDA4Y2UwNnY4aDFkcjZ6MjhkMGJ4dG14dmRmd3ptM3NkeHBtNjRvOHV1ZXgyayZlcD12MV9naWZzX3NlYXJjaCZjdD1n/YAlhwn67KT76E/100.gif)

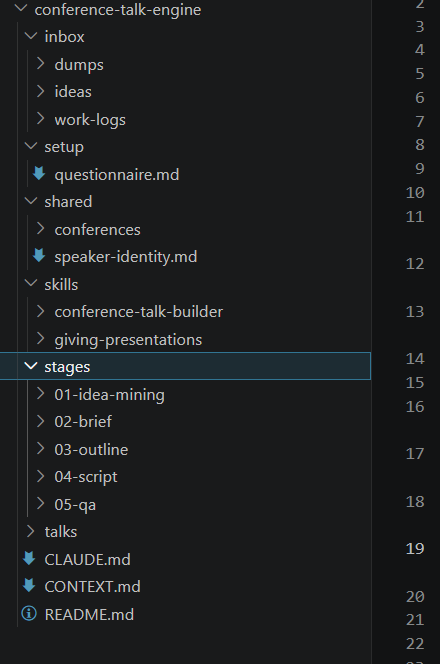

How to design a workflow - Conference Talk Engine (WIP) - update 5/3/2026

I'm practicing building in public and wanted to share what I've built with help from my other writing brainstorming thread, with input from @Deacon Wardlow, @Siv Darmalingum, @E G, and @David Trammel.

Using some of their ideas, I researched the writers and topics they suggested using NotebookLM. I synthesized that research into digestible artifacts for Claude to extract themes and guidelines for developing conference talks. I then described to Claude the workflow I wanted to build (I can share that prompt if people are interested). I also used the workspace-builder as a model for the scaffolding of the conference-talk-engine, and my own workspace as a model for the schema in the Claude and Context mds. The output of all of this was a spec document to build the workspace.

I incorporated guidelines to use SOLID principles for coding, so that a built workspace can be extended and improved over time without rebuilding the whole thing. I think it's better to build a prototype fast and then iterate than to aim for 100% perfect. You want to spend time making sure the spec doesn't produce errors, but some things will only show up with actual use cases.

I then researched existing skills relevant to my use case and came up with 2: conference-talk-builder and giving-presentations. I asked Claude to evaluate my research and the existing skills to see what gaps could be improved in the existing skills. I then asked it to write the spec. After the first draft of the spec I ran a reader-test, which is a custom skill derived from @Ari Evergreen's 6 phase workflow.

A rough breakdown would be:
1) research data inputs and relevant skills, and compile any useful context relevant to your workflow;
2) analyze inputs with Claude;
3) describe your ideal workflow and any models you want to emulate, and make sure to mention you are building a spec first;
4) review the plan for the spec;
5) draft the spec using Claude;
6) reader-test to QA the spec.
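If it helps to keep track of where a build is in the six steps above, the breakdown can be written down as an ordered checklist. This is only a sketch: the phase labels are paraphrased from the list, and `PHASES` and `next_phase` are hypothetical names, not part of the conference-talk-engine or any existing skill.

```python
from typing import Optional, Set

# Phase labels paraphrased from the six-step breakdown in the post.
PHASES = [
    "research inputs and relevant skills",
    "analyze inputs with Claude",
    "describe the ideal workflow and models to emulate",
    "review the plan for the spec",
    "draft the spec with Claude",
    "reader-test the spec",
]


def next_phase(completed: Set[str]) -> Optional[str]:
    """Return the first phase not yet completed, or None when all are done."""
    for phase in PHASES:
        if phase not in completed:
            return phase
    return None
```

Keeping the phases in one list means a new step can be added in a single place without touching the function that walks them, which is in the spirit of the extend-without-rebuilding goal described in the post.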


skool.com/quantum-quill-lyceum-1116

Jake Van Clief, giving you the Cliff notes on the new AI age.
