Memberships

Clief Notes

23.6k members • Free

The Northbound Insider's Club

733 members • Free

Facilitator Club (Free)

9.9k members • Free

37 contributions to Clief Notes

Why are you here?

Jake talks about building systems that last a decade. That's a long time. What is the one thing you’re actually hoping to solve or scale with AI so you can focus on bigger things over the next 10 years? For me, it’s about mastering the technical logic so I’m not constantly chasing the next "hype" tool every six months. I want the systems to do the heavy lifting so I can reclaim my time.

1 like • 5d

It’s a consistent, repeatable working model where I can tweak a few things and get the expected results. We see all the time that moats are getting narrower. How might we develop a truly unique value proposition that lasts for decades instead of depending on the latest fad? Plus, this shit is cool. 😂

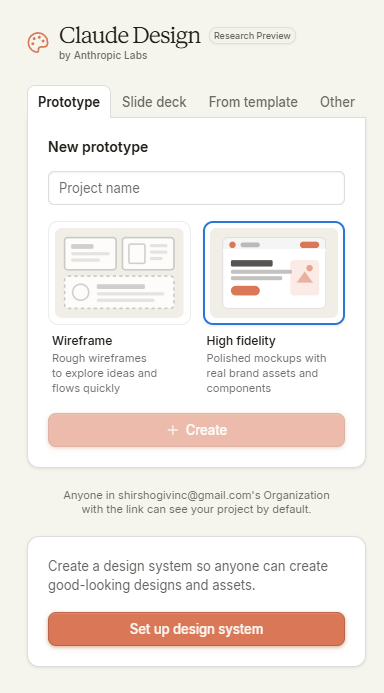

Claude Design is here, and no, it's not a skill.

So, rivaling SaaS products like Lovable, Anthropic very recently launched Claude Design, a dedicated platform for designing anything you need, with three main options. It's available at https://claude.ai/design on any of the Claude plans. You get Prototypes, Slide Decks, and From a template; templates work from past projects you've already made. You set up a design system and, with the power of Opus 4.7, it makes you a wireframe or a high-fidelity prototype. Lots of exciting new things have been coming out recently, even with the new OpenAI Codex improvements. How far will the AI race go? Who knows. There have also been a lot of theories that Claude Opus 4.7 is just prime Opus 4.6. (Quick context: when Opus 4.6 first released, everyone loved it, but as time went on, maybe due to Mythos, its performance degraded while the costs stayed the same. Now Opus 4.7 is much more expensive and only slightly better than prime 4.6.)

3 likes • 5d

I mean, to be fair… this isn’t new. Claude could do these things before; they’ve just named it intentionally now. Again, we go back to the tale as old as time: what is the moat for these businesses? The problem with this is that they’re demanding loyalty to a single model. What Anthropic is doing is, honestly, spreading itself way too thin to cover every base and use case for its model while also excommunicating tons of users at the same time. (Love it or hate it, OpenClaw generated huge swaths of cash flow for them.) Lovable users aren’t going to run over to Anthropic just because. And the trust deficit from the constant miscommunication and lack of transparency keeps growing. I think my bigger problem here is that they’re doing everything and it’s kinda just mediocre. Look at Cowork and Dispatch. With this newest release, I’d even argue Code has degraded: they removed extended thinking abilities and handed full judgment over to the model to decide how long it thinks. I dunno. I’m a big fan and advocate of Claude… but this release has kinda left me underwhelmed and irritated.

0 likes • 5d

@Shirsho Guha I definitely think we’re going to see how entrenched Lovable users are. As for Figma, that’s not going anywhere anytime soon. Way too many enterprises have long-term contracts and infrastructure with them, and the models have too many guardrails that would likely make Claude Design unusable. Agreed. I’m hoping they hit the pause button and iterate on what they’ve already launched. Frankly, I don’t see OpenAI surviving much longer given its investment requirements, who’s investing, and its incestuous learning model. We’ll see, though.

🏁 Foundations 3.2 Check-In

You just saw how the folder structure adapts to different use cases. Vote below, then drop your customized setup in the comments. What did you name your workspaces and why?

Poll

264 members have voted

It’s the weekend! What’s everyone working on?

One thing I’ve found is there’s no time for true work during the week. So I find myself binging this community, working on side projects, and building out my architecture on the weekends. Would love to hear about some projects you folks are working on! What’s going down this weekend?

Opus 4.7 dropped. The real test isn't the benchmark, it's whether your scaffolding survives the upgrade.

Anthropic shipped Claude Opus 4.7 yesterday. Same price as 4.6. 13% lift on their internal coding benchmark. Vision input up to 3.75 megapixels. Stronger long-context reasoning.

The headline most people will pull is the coding score. The line buried in the announcement is the one that matters: "Substantially improved instruction adherence, which means existing prompts may need retuning."

Translation: the model now does what you actually said, not what you sloppily meant. Every lazy prompt you've been getting away with for six months is about to behave differently.

This is the upgrade test, and it sorts everyone into two camps.

Camp 1. Workflow lives in a chat window

You upgrade. The model stops filling in your gaps. Outputs drift. You spend a week re-tuning prompts you can barely remember writing. The "AI got worse" tweets start. It didn't. Your contract was vague and the new model stopped guessing.

Camp 2. Workflow lives in a folder

You upgrade. The model reads the same _config/ files, the same stage briefs, the same compliance checks. The constraints were already explicit because the system needed them to be portable. The new model is more obedient, so it follows them harder. Outputs get tighter, not wronger.

That's the whole difference. Not which model you use, but whether your instructions are written down somewhere a model upgrade can land cleanly on top of.

What I actually changed today

Nothing in the workflow. I swapped the model name in one config file and re-ran the same content pipeline I ran last week: carousel mining, draft, render, QC. All four stages produced cleaner output because the constraints in _config/voice.md and _config/compliance.md were already specific. The model just executed them more literally.

The new self-verification behaviour is the other quiet change. Opus 4.7 checks its own outputs before reporting back. That changes how I write stage acceptance criteria: less "produce X," more "produce X and confirm Y, Z, and W are true."
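For anyone wondering what "workflow lives in a folder" can look like in practice, here is a minimal sketch, assuming Python and a stubbed-out model call. It keeps the model name in one config value, layers every stage brief on top of the same _config/voice.md and _config/compliance.md files, and phrases acceptance criteria as "produce X and confirm Y, Z, and W." Everything named here (`PIPELINE_CONFIG`, `Stage`, `run_pipeline`, `demo`, the stage names, and the placeholder model label "opus-4.7") is hypothetical, not the author's actual pipeline or any real API. The checks are also re-run in code rather than relying only on the model's self-verification.

```python
import tempfile
from dataclasses import dataclass, field
from pathlib import Path
from typing import Callable

# Hypothetical single source of truth for the model. On an upgrade, this
# string is the only thing that changes. "opus-4.7" is a placeholder label,
# not a real API model identifier.
PIPELINE_CONFIG = {"model": "opus-4.7"}

# The constraint files every stage reads (names taken from the post).
CONSTRAINT_FILES = ("voice.md", "compliance.md")


@dataclass
class Stage:
    name: str
    brief: str  # "produce X"
    # "confirm Y, Z, and W are true": each named check is a predicate on the output.
    checks: dict[str, Callable[[str], bool]] = field(default_factory=dict)


def load_constraints(config_dir: Path) -> str:
    """Concatenate the explicit constraint files from the _config/ folder."""
    return "\n\n".join((config_dir / name).read_text() for name in CONSTRAINT_FILES)


def build_prompt(stage: Stage, constraints: str, previous_output: str) -> str:
    """Shared constraints first, then the stage brief, input, and criteria to confirm."""
    confirm = "\n".join(f"- {name}" for name in stage.checks)
    return (
        f"{constraints}\n\n## Stage: {stage.name}\n{stage.brief}\n\n"
        f"## Input from previous stage\n{previous_output}\n\n"
        f"## Before reporting back, confirm each of the following is true:\n{confirm}"
    )


def run_pipeline(
    stages: list[Stage],
    config_dir: Path,
    call_model: Callable[[str, str], str],  # (model, prompt) -> text; stubbed below
) -> dict[str, str]:
    constraints = load_constraints(config_dir)
    outputs: dict[str, str] = {}
    previous = ""
    for stage in stages:
        prompt = build_prompt(stage, constraints, previous)
        output = call_model(PIPELINE_CONFIG["model"], prompt)
        # Don't rely on the model's self-report alone: re-check the criteria in code.
        failed = [name for name, check in stage.checks.items() if not check(output)]
        if failed:
            raise ValueError(f"{stage.name} failed acceptance checks: {failed}")
        outputs[stage.name] = output
        previous = output  # each stage feeds the next
    return outputs


def demo() -> dict[str, str]:
    """Run the pipeline against a throwaway _config/ folder and a fake model."""
    with tempfile.TemporaryDirectory() as tmp:
        config_dir = Path(tmp) / "_config"
        config_dir.mkdir()
        (config_dir / "voice.md").write_text("Plain, direct, no hype words.")
        (config_dir / "compliance.md").write_text("No performance guarantees.")

        stages = [
            Stage("carousel_mining", "List three post ideas."),
            Stage("draft", "Draft slide copy for idea 1.",
                  {"under 500 characters": lambda out: len(out) < 500}),
            Stage("render", "Describe the slide layout."),
            Stage("qc", "Review the draft against the constraints.",
                  {"never says 'guaranteed'": lambda out: "guaranteed" not in out.lower()}),
        ]

        def fake_model(model: str, prompt: str) -> str:
            # Stand-in for a real API call: echoes the configured model name.
            return f"[{model}] ok"

        return run_pipeline(stages, config_dir, fake_model)


if __name__ == "__main__":
    print(demo()["qc"])  # prints "[opus-4.7] ok"
```

In this setup, a model upgrade means editing only `PIPELINE_CONFIG`; the briefs, constraint files, and acceptance checks stay put, which is the Camp 2 property the post describes.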

1 like • 5d

I’m trying to understand why taking things more literally is a good thing for the model’s outputs. The way it’s explained, it feels like a step backward in reasoning, not a step forward. Maybe I’m misunderstanding the intention. But I agree with your assessment that any new model is going to test the strength of the architecture and how much the user relies on the model versus the infrastructure.


@justin-solomon-9449

Human-Centered Design Practitioner | AI × Design Thinking | Certified Facilitator | Designing How Organizations Change, Not Just What They Build

Active 5h ago

Joined Mar 24, 2026

Atlanta, GA
