

Welcome to Clief Notes. Here's where to start.

1. Watch the intro video and introduce yourself in the intro post here.
2. Start with The Foundation (free course). Concepts, folder architecture, prompting framework. Everything else builds on this.
3. Check in at the bottom of each lesson. Polls, discussion posts, other members working through the same stuff. Use them.
4. When you're ready to build real things, move to Implementation Playbooks (Level 2). When you're ready to build your own tools, move to Building Your Stack (Level 3).
5. Post your work. Ask questions. Help others when you can.

What are you here to build?



🌶️ CINCO DE MAYO FIRESALE — STARTS NOW 🌶️

Locked in for the next 5 days only. Ends May 5th at 10:00 AM EST. No exceptions.

🎉 Premium: $27 → $14/mo
🎉 VIP: $97 → $67/mo

This is the closest you'll get to our original launch pricing. We're doing this because the community has shown up for us, and we want to show up back. 🤝

🔥 Already a member? Read this carefully. To lock in the new rate, you need to:
1. Cancel your current plan
2. Re-subscribe at the new price

That's the only way the system can apply the new rate. We have way too many members to handle manual refunds, so we can't refund anyone who just signed up at current pricing. But the savings stack month over month, so if you plan to stick around (and you should 😁), the math works out fast.

🚫 A few ground rules: Please do not DM me or Jake about pricing, exceptions, or extensions. We love you, but we're a small team and we need to stay focused on building. Everyone gets the same window. Everyone gets the same deal. If you miss it, you miss it. We'll do more things for the community down the road.

⏰ The clock:
🟢 LIVE NOW
🔴 Locks May 5th, 10:00 AM EST

- Premium gets you The Vault and Afternoon Tea calls.
- VIP gets you The Drawing Room, High Tea, and bespoke folder builds from Jake himself.

If you've been on the fence, this is the moment. 🚀 Tag a friend who needs to be in here. Let's make Cinco a movement. 🎊 🌶️🌶️🌶️

🏁 Foundations 2.4 Check-In

You just learned the book, movie, video game framework. Vote below, then tell us in the comments: name one thing in your work that you now realize is on the wrong layer.


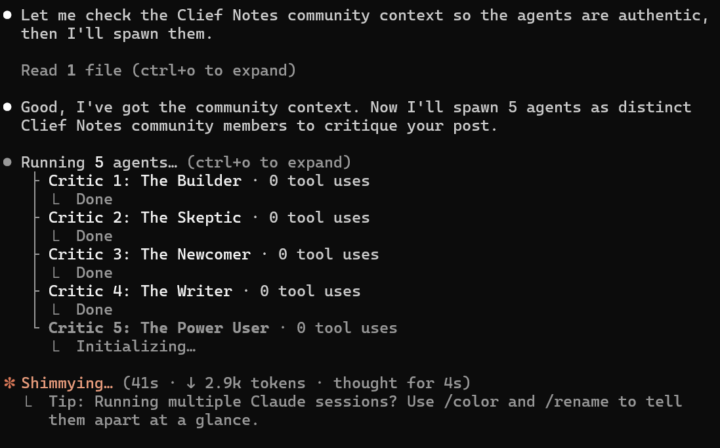

Spawn Your Critics - Claude Code / Cowork

This is a quick one. I took for granted that you guys already knew this. But maybe you don't? It's pretty great.

In Claude Code / Cowork, have Claude spawn agents to critique your code, writing, anything. You can even define who they are. You'll get a much better idea of what you've written from several points of view. I get WAY less sycophantic feedback from the agents. They will even find code problems and writing inconsistencies that default Claude won't. And they can get feisty!

For instance, you can give your Clief Notes post to Claude and say, "Spawn 5 agents that represent the Clief Notes community. They should all have strong opinions. Have them critique this post."

I gave everything above this line to Claude and then ran the agents, so they don't know THEY are the examples. haha ;) Here's what they said:

---

Agent 1 -- The Builder: Solid concept, but this reads like a tweet stretched into a post. You're telling us that it works without showing us how it works or what it actually looks like. Drop in a real example -- show the prompt you used, show one of the agent responses, let people see the feisty feedback you're bragging about. "Spawn 5 agents" is doing a lot of heavy lifting with zero specifics on what makes a good critic prompt versus a bad one. The idea has legs, but right now it's a teaser for a post you haven't written yet.

Agent 2 -- The Skeptic: You're telling me agents "get feisty" and "find code problems" -- show me. One before/after example would do more than five sentences of hype. Also, "spawn 5 agents" is doing a lot of heavy lifting here -- are you prompting each one with a distinct persona, or just asking Claude to roleplay five slightly different yes-men? Because without deliberate persona design, you're just getting the same model talking to itself in different fonts. The idea is legit -- adversarial feedback loops are genuinely useful -- but this post asks me to take your word for it instead of proving it.

Agent 3 -- The Newcomer:

Stop tuning the model. The harness rewrites itself.

jcode boots Claude Opus in 14 ms, runs at 27.8 MB, and edits its own Rust source mid-session. Same model inside. I believe this is the start of a sub-genre nobody is naming yet.

Quick context: The first era of LLMs was prompt engineering. Era two was context engineering. Era three is what we've all been arguing about all year: model picking, Opus 4.7 vs Gemini 3 Pro vs Kimi K2.6. Now someone has rebuilt the wrapper around Claude in Rust, and the gap on cold start, RAM, and per-session scaling is bigger than any model gap I've seen this year.

What jcode actually is:
- Coding agent harness, 94% Rust
- Custom terminal called handterm, custom Rust Mermaid renderer
- Native logins for Claude, OpenAI, Gemini, Copilot, Azure, plus aggregator providers
- MCP works out of the box. Falls back to your existing .claude/mcp.json, so anything you've already wired up keeps running
- Install via brew, curl, or cargo build. Single binary
- 3.3k stars on GitHub, 57 releases, actively maintained

The numbers (jcode vs Claude Code, from the README):
- Cold start: 14 ms vs 590 ms to 3.4 seconds, i.e. 42 to 245 times faster
- Idle RAM with local embedding off: 27.8 MB
- 10 sessions in parallel: 260 MB total vs 334 MB to 3.2 GB for Claude Code
- Per added session: 9.9 MB vs 76 to 318 MB
- Custom Mermaid renderer the author claims is 1800x faster than browser-based versions

That last one is the kind of detail that tells you what they're really doing. Someone is going through every layer of the Claude Code experience and rebuilding it natively, and the gains compound.

What's actually different at the harness layer:
- Self-dev mode. Agents inside jcode can edit the harness's own Rust source, run cargo build, and hot-reload the binary across active sessions without dropping you. The wrapper is recursively modifiable from inside the agent loop.
- Memory as semantic vectors per turn. Recall is automatic via cosine similarity, not "remember to update CLAUDE.md". There's an ambient mode that consolidates memory and resolves conflicts in the background while you work.
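A quick sanity check of the multipliers in the jcode numbers, using only the rounded figures quoted in the post (no new measurements). With 14 ms as the jcode cold start, the upper end works out to roughly 243x rather than 245x. The README presumably divides unrounded timings, so the small gap looks like rounding rather than an error. The helper name `speedup` is just for illustration.

```python
# Ratios recomputed from the rounded figures quoted in the post (jcode vs Claude Code).
# These are the post's numbers, not independent benchmarks.

def speedup(baseline: float, candidate: float) -> float:
    """How many times smaller `candidate` is than `baseline` (same units)."""
    return baseline / candidate

JCODE_COLD_START_MS = 14.0
CLAUDE_CODE_COLD_START_MS = (590.0, 3400.0)  # quoted range: 590 ms to 3.4 s

JCODE_PER_SESSION_MB = 9.9
CLAUDE_CODE_PER_SESSION_MB = (76.0, 318.0)  # quoted range per added session

cold_start = [speedup(b, JCODE_COLD_START_MS) for b in CLAUDE_CODE_COLD_START_MS]
per_session = [speedup(b, JCODE_PER_SESSION_MB) for b in CLAUDE_CODE_PER_SESSION_MB]

if __name__ == "__main__":
    print(f"cold start: {cold_start[0]:.0f}x to {cold_start[1]:.0f}x")
    print(f"per added session: {per_session[0]:.1f}x to {per_session[1]:.1f}x")
```

Running it prints `cold start: 42x to 243x` and `per added session: 7.7x to 32.1x`. So by the post's own figures, the per-session memory gap is roughly 8x to 32x.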
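To make "recall is automatic via cosine similarity" concrete, here is a minimal, generic sketch of per-turn vector memory. It is not jcode's implementation, which is in Rust and isn't described in detail here. `MemoryStore`, `remember`, `recall`, and the `min_score` cutoff are hypothetical names. The embeddings are hand-written toy vectors standing in for whatever embedding model a real harness would call. The point is that every turn's query vector gets scored against stored vectors, and the closest matches come back without anyone having to edit a file like CLAUDE.md.

```python
import math
from dataclasses import dataclass, field

def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)

@dataclass
class MemoryStore:
    """Hypothetical per-turn memory: each entry is (text, embedding)."""
    entries: list[tuple[str, list[float]]] = field(default_factory=list)

    def remember(self, text: str, embedding: list[float]) -> None:
        # Assumption: the embedding was already computed by some model.
        self.entries.append((text, embedding))

    def recall(self, query: list[float], k: int = 2, min_score: float = 0.5) -> list[str]:
        """Return up to k stored texts whose cosine similarity to query is >= min_score."""
        scored = [(cosine_similarity(query, emb), text) for text, emb in self.entries]
        scored = [pair for pair in scored if pair[0] >= min_score]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [text for _, text in scored[:k]]

if __name__ == "__main__":
    store = MemoryStore()
    store.remember("Project uses cargo workspaces", [1.0, 0.0, 0.2])
    store.remember("User prefers tabs over spaces", [0.0, 1.0, 0.0])
    store.remember("Build with cargo build --release", [0.9, 0.1, 0.3])
    print(store.recall([1.0, 0.0, 0.25]))
```

With these toy vectors, the query recalls the two cargo-related entries, with the workspaces note ranked first, and skips the unrelated formatting preference. A real system would also need the consolidation and conflict-resolution step the post mentions, which this sketch leaves out.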


skool.com/quantum-quill-lyceum-1116

Jake Van Clief, giving you the Cliff notes on the new AI age.
