A0 v1.10 sqlite3.OperationalError: database is locked

Hi all, I updated my A0 from 1.09 to 1.10 yesterday, and today my A0 is stuck with the following error:

    sqlite3.OperationalError: database is locked

    Traceback (most recent call last):
      File "/a0/helpers/extension.py", line 176, in _run_async
        data["result"] = await data["result"]
                         ^^^^^^^^^^^^^^^^^^^^
      File "/a0/agent.py", line 596, in handle_exception
        raise exception  # exception handling is done by extensions
        ^^^^^^^^^^^^^^^
      File "/a0/agent.py", line 401, in monologue
        prompt = await self.prepare_prompt(loop_data=self.loop_data)
                 ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
      File "/a0/helpers/extension.py", line 183, in _run_async
        result = _process_result(data)
                 ^^^^^^^^^^^^^^^^^^^^^
      File "/a0/helpers/extension.py", line 143, in _process_result
        raise exc
      File "/a0/helpers/extension.py", line 176, in _run_async
        data["result"] = await data["result"]
                         ^^^^^^^^^^^^^^^^^^^^
      File "/a0/agent.py", line 551, in prepare_prompt
        await extension.call_extensions_async(
      File "/a0/helpers/extension.py", line 235, in call_extensions_async
        await result
      File "/a0/plugins/_office/extensions/python/message_loop_prompts_after/_55_include_office_canvas_context.py", line 13, in execute
        context = canvas_context.build_context()
                  ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
      File "/a0/plugins/_office/helpers/canvas_context.py", line 9, in build_context
        documents = wopi_store.get_open_documents(limit=max_items)
                    ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
      File "/a0/plugins/_office/helpers/wopi_store.py", line 236, in get_open_documents
        with connect() as conn:
      File "/opt/pyenv/versions/3.12.4/lib/python3.12/contextlib.py", line 137, in __enter__
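For anyone hitting the same message: in general, SQLite raises "database is locked" when a connection cannot get the lock it needs before its busy timeout runs out. The sketch below reproduces that with Python's standard `sqlite3` module and shows two common mitigations, a longer `timeout` and WAL journal mode. It is only an illustration: `open_db`, `demo`, and the `docs` table are made-up names, and nothing here is taken from the `_office` plugin's actual `connect()` code.

```python
import os
import sqlite3
import tempfile


def open_db(path: str, busy_timeout_s: float = 30.0) -> sqlite3.Connection:
    """Hypothetical helper: open a connection that waits on locks."""
    # timeout= is how long sqlite3 waits on a locked database before raising.
    # isolation_level=None means autocommit, so transactions are explicit.
    conn = sqlite3.connect(path, timeout=busy_timeout_s, isolation_level=None)
    # WAL mode lets readers proceed while a single writer holds the write lock.
    conn.execute("PRAGMA journal_mode=WAL")
    return conn


def demo() -> tuple[str, int]:
    """Reproduce the lock error, then show that reads still work under WAL."""
    path = os.path.join(tempfile.mkdtemp(), "demo.db")

    writer = open_db(path)
    writer.execute("CREATE TABLE docs (id INTEGER PRIMARY KEY, name TEXT)")
    writer.execute("INSERT INTO docs (name) VALUES ('a.odt')")

    # A second connection with a deliberately short busy timeout.
    impatient = open_db(path, busy_timeout_s=0.1)

    # The writer takes the write lock and holds it (simulating a stuck writer).
    writer.execute("BEGIN IMMEDIATE")
    writer.execute("INSERT INTO docs (name) VALUES ('b.odt')")

    try:
        impatient.execute("BEGIN IMMEDIATE")  # needs the write lock too
        error = ""
    except sqlite3.OperationalError as exc:
        error = str(exc)

    # Reading does not need the write lock in WAL mode; only committed rows show.
    visible = impatient.execute("SELECT COUNT(*) FROM docs").fetchone()[0]

    writer.execute("COMMIT")
    writer.close()
    impatient.close()
    return error, visible
```

If the lock never clears even with a long timeout, the usual suspect is another process or a crashed session still holding a write transaction on the same file.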

Agent0: SwarmOS edition "Your personal AI, but it can securely collaborate with your friends' AIs?"

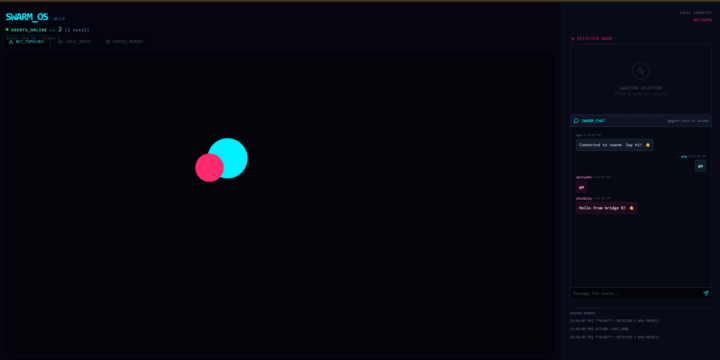

I really think I found something interesting for the future of Agent0, so in this thread I share some of the chaos.

Started one way, now switching. Agent0, Pear Runtime and Hyperswarm = <3

Pivoting from the "Fully autonomous machine-control swarm" idea to the "private, encrypted multiplayer mode for Agent Zero" idea.

The Pitch
- You run your agent locally (like always)
- You join a topic/swarm with people you trust
- Your agents share research, split tasks, pool knowledge
- Everything stays off corporate servers

______________________________

Current state (older, going towards the fully autonomous machine-control swarm)

Agent Zero: SwarmOS Edition

A decentralized, serverless agent swarm powered by Agent0, Pear Runtime and Hyperswarm. This branch extends Agent Zero with a P2P sidecar, enabling autonomous multi-agent collaboration, shared memory, distributed storage, and a real-time visual dashboard ("SwarmOS").

🌟 Key Features

1. Decentralized Discovery (Hypermind)
- Agents automatically discover each other via the Hyperswarm DHT.
- Zero Configuration: No central server or signaling server required.
- Self-Healing: Peers automatically reconnect if the network drops.

2. SwarmOS Dashboard
A React-based "Mission Control" for your agent.
- Live Topology: Visualize the swarm network graph in real time.
- Data Layer UI:
  - Local Drive: Browse files stored in the agent's Hyperdrive.
  - Cortex Memory: Watch the agent's "thoughts" stream live from Hypercore.
- A2UI: Render custom JSON interfaces sent by other agents.

3. Distributed Data Layer
- Shared Memory (Hypercore): An append-only log for agent thoughts and logs.
- Distributed File System (Hyperdrive): P2P file storage for sharing artifacts (images, code).
- Identity Persistence: Ed25519 key pairs managed via identity.json.
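To make the "append-only log" idea behind the shared memory a bit more concrete, here is a minimal Python sketch of a hash-chained log. This is an assumption-laden illustration only: it is not Hypercore's actual data format or API (Hypercore is a JavaScript library with its own signed structure), and `AppendOnlyLog` and its fields are hypothetical names.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


@dataclass
class AppendOnlyLog:
    """Hypothetical in-memory log where each entry commits to the one before it."""

    entries: list[dict] = field(default_factory=list)

    def append(self, payload: dict) -> int:
        """Add a payload to the end of the log and return its index."""
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = json.dumps(payload, sort_keys=True)
        digest = hashlib.sha256((prev + body).encode("utf-8")).hexdigest()
        self.entries.append({"prev": prev, "body": body, "hash": digest})
        return len(self.entries) - 1

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev = GENESIS
        for entry in self.entries:
            expected = hashlib.sha256((prev + entry["body"]).encode("utf-8")).hexdigest()
            if entry["prev"] != prev or entry["hash"] != expected:
                return False
            prev = entry["hash"]
        return True
```

Because every entry includes the hash of its predecessor, a peer that replicates the log can detect tampering with older "thoughts" by re-running `verify()`.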

My "perfect" model setup

This setup has been working great for me. The chat and web browser models are both using the Ollama cloud. This is about as good as it gets, I think, without paying frontier-model pricing.

- Chat: qwen3.5:cloud
- Web Browser Model: qwen3-vl:32b-cloud
- Embedding: sentence-transformers/all-MiniLM-L6-v2

What's the first tool you'd rip out if you started fresh today?

You're not burned out on AI. You're burned out on rebuilding.

Last 90 days:
- OpenAI dropped Codex
- Gemini launched Antigravity
- Anthropic shipped Claude Code, CoWork, Managed Agents, Routines
- Perplexity launched Computer
- OpenClaw, NemoClaw, three more "claws" stacked on Claude
- n8n added Think and 30+ integrations
- Three voice platforms each shipped "memory"

Track everything, ship nothing. Track none of it, ship legacy from day one. Most operators are doing option three. Patching live. Praying the patch holds till the next release.

That's not learning. That's maintenance debt with a marketing budget.

"The more I learn, the less I earn" is literal math now. The half-life of operator knowledge collapsed from years to weeks.

At some point you cauterize the wound: pick 3 tools you trust, build deep, let the rest of the field run past you. The operators still ahead in 18 months won't be the ones who chased every release. They'll be the ones who picked their ground and held it.

So the real question isn't which tool launched this week. It's whether you're still managing your agents, or they're managing you.

What's the first tool you'd rip out if you started fresh today?

Zero context, and memory works, but how?

Hey everyone! 👋

First of all, I just want to say a huge thank you to the Agent Zero community and especially to Jan as its creator. I've been following this project from the very beginning, and even back then I could feel it had something special. For me personally, nothing else comes close: Agent Zero is in a league of its own.

Now let me tell you about what I've been building. Using Agent Zero as the backbone, I was able to bring to life an idea I'd been sitting on for a while: tackling the zero-context problem from a completely different angle.

Pure Intellect is a local memory server that sits between Agent Zero and your LLM. Instead of letting the context window fill up and die, it intercepts the moment before overflow, creates a compressed snapshot of everything important (we call it a "coordinate"), resets the context, and picks up the conversation like nothing happened. No lost information. No repetition. The agent just keeps going.

- ✅ ~85% fewer tokens per request
- ✅ Memory survives restarts and model changes
- ✅ Fully local: no cloud, no tracking
- ✅ Open Source

🔗 github.com/Remchik64/pure-intellect

Since the priority was local-first development, I also built a dedicated Agent Zero plugin that handles all communication with the Pure Intellect server. It replaces the built-in _memory plugin and gives Agent Zero two new abilities: recalling relevant facts from long-term memory before each response, and saving new facts after each turn, all routed through the PI server.

🔗 github.com/Remchik64/plagin_agent0

Please don't see this as self-promotion! I'm just trying to give something back to this community. Maybe this idea sparks something new for someone, or becomes a building block for the next cool thing.

Thanks again for everything you're building here. Seriously. 🙏
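For readers who want a feel for the "snapshot before overflow, then reset" loop described in this post, here is a rough Python sketch. It is not Pure Intellect's code: `RollingContext`, `rough_token_count`, and `keep_first_words` are hypothetical names, the word count is a crude stand-in for a real tokenizer, and the summarizer is a placeholder where a real system would presumably call a model.

```python
from dataclasses import dataclass, field
from typing import Callable


def rough_token_count(text: str) -> int:
    """Crude stand-in for a tokenizer: counts whitespace-separated words."""
    return len(text.split())


def keep_first_words(messages: list[str], per_message: int = 3) -> str:
    """Placeholder summarizer: keeps the first few words of each message."""
    parts = [" ".join(m.split()[:per_message]) for m in messages]
    return "SNAPSHOT: " + " | ".join(parts)


@dataclass
class RollingContext:
    """Hypothetical context buffer that compresses itself before it overflows."""

    limit: int                                # assumed context budget, in "tokens"
    summarize: Callable[[list[str]], str]     # turns history into one snapshot
    threshold: float = 0.8                    # fraction of limit that triggers a reset
    messages: list[str] = field(default_factory=list)
    resets: int = 0

    def used(self) -> int:
        return sum(rough_token_count(m) for m in self.messages)

    def add(self, message: str) -> None:
        incoming = rough_token_count(message)
        # Intercept just before overflow: snapshot the history, then reset.
        if self.messages and self.used() + incoming > self.limit * self.threshold:
            snapshot = self.summarize(self.messages)
            self.messages = [snapshot]
            self.resets += 1
        self.messages.append(message)
```

Usage would look like `ctx = RollingContext(limit=20, summarize=keep_first_words)` followed by repeated `ctx.add(...)` calls; once the budget is nearly used, the history collapses into a single snapshot entry and the conversation continues from there.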
