
44 contributions to Agent Zero

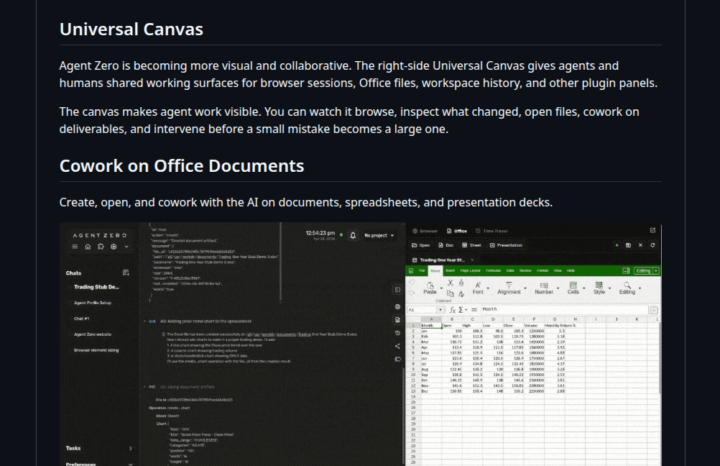

A0 v1.10 is HUGE! (Native Browser, Time Travel, Office Canvas) ⚡

Hey everyone, Version 1.10 is finally here, and it fundamentally changes how you interact with Agent Zero.

What’s new?

- The Universal Canvas: A brand new right-side panel where you can cowork with your agent.
- Native Browser: Watch the agent browse the web live. You can even use the new Annotation Mode to visually select UI elements and tell the agent to fix them.
- Office Magic: Work on Word, Excel, and PowerPoint files natively. The agent can even generate real Excel charts for you.
- Time Travel: Messed up a file? The workspace now has a snapshot history. Diff, preview, and revert changes easily. Powered by Agent Zero's Space Agent.
- OpenAI OAuth: Connect your existing OpenAI/Codex subscription so you don't have to burn API credits.

Don't forget the CLI Connector 💻

If you want the agent to work on your local machine's files while keeping its code execution safely inside Docker, install the CLI (requires v1.9+):

- Mac/Linux: `curl -LsSf https://cli.agent-zero.ai/install.sh | sh`
- Windows: `irm https://cli.agent-zero.ai/install.ps1 | iex`

(Ask the agent to use the a0-setup-cli skill if you need help.)

Update Agent Zero: Settings > Update > Open Self Update > Restart and Update

Go build something awesome. See you all tomorrow, April 29th, at 3PM UTC in the Skool Community Call. Join us here: https://www.skool.com/live/DlyvNKHbyWw

I need help installing Agent Zero

Hello everyone. I need help installing Agent Zero AI. If someone would be kind enough to help, I would be grateful. Here is the link: irm https://ps.agent-zero.ai | iex

It’s not even close.

Seeing the Openclaw updates, I can say that Agent Zero has been years ahead of the new crew. Agent Zero’s most recent updates are huge and necessary, but the capabilities were mostly always there.


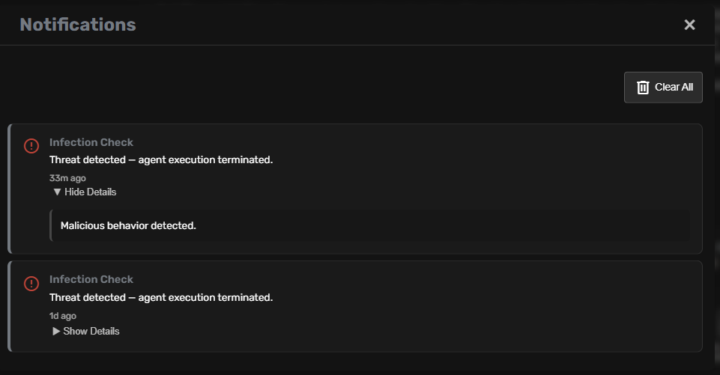

How to get rid of "Infection check: TERMINATED"?

How do I get rid of "Infection check: TERMINATED"? Should I keep everything in the "secret store"? Even then, it recalls the secret and fails to use it. Can I bypass it somehow?

Very Small Local Setup - "no valid tool request found"

So I have an interesting setup: a big Unraid server with a very small 5 GB Nvidia Quadro P2200. My Ollama VM has 100 GB of RAM and the Quadro P2200 passed through to it. I then have an Agent Zero Docker container (latest as of yesterday). The models are:

- Chat: qwen2.5:3b
- Utility: qwen2.5:1.5b

When I ask it to create an ebook, I get the following error:

    ValueError: Tool request must have a tool_name (type string) field
    Traceback (most recent call last):
    Traceback (most recent call last):
      File "/a0/helpers/extension.py", line 176, in _run_async
        data["result"] = await data["result"]
                         ^^^^^^^^^^^^^^^^^^^^
      File "/a0/agent.py", line 572, in handle_exception
        raise exception # exception handling is done by extensions
        ^^^^^^^^^^^^^^^
      File "/a0/agent.py", line 485, in monologue
        tools_result = await self.process_tools(agent_response)
                       ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
      File "/a0/helpers/extension.py", line 183, in _run_async
        result = _process_result(data)
                 ^^^^^^^^^^^^^^^^^^^^^
      File "/a0/helpers/extension.py", line 143, in _process_result
        raise exc
      File "/a0/helpers/extension.py", line 176, in _run_async
        data["result"] = await data["result"]
                         ^^^^^^^^^^^^^^^^^^^^
      File "/a0/agent.py", line 854, in process_tools
        await self.validate_tool_request(tool_request)
      File "/a0/helpers/extension.py", line 183, in _run_async
        result = _process_result(data)
                 ^^^^^^^^^^^^^^^^^^^^^
      File "/a0/helpers/extension.py", line 143, in _process_result
        raise exc
      File "/a0/helpers/extension.py", line 176, in _run_async
        data["result"] = await data["result"]
                         ^^^^^^^^^^^^^^^^^^^^
      File "/a0/agent.py", line 956, in validate_tool_request
        raise ValueError("Tool request must have a tool_name (type string) field")


Larger modern models can handle the turns needed to navigate Agent Zero’s system prompt effectively. If you start with cloud models, you may be able to prime it for later use with smaller local models, but those models still have to be able to act on what they know.
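The traceback in the question ends in `validate_tool_request`, which rejects a model reply that lacks a string `tool_name` field. Below is a minimal sketch of that kind of check, written for illustration only: the helper `parse_tool_request` is hypothetical, and the reply shape (`thoughts`, `tool_name`, `tool_args`) is an assumption about what the agent expects, not code taken from Agent Zero's source.

```python
import json
from typing import Any, Dict


def parse_tool_request(raw: str) -> Dict[str, Any]:
    """Hypothetical validator: parse a model reply and require a string tool_name.

    Illustrative sketch only; Agent Zero's real parsing may be more lenient.
    """
    try:
        request = json.loads(raw)
    except json.JSONDecodeError as exc:
        # Plain prose instead of JSON fails here
        raise ValueError(f"Reply is not valid JSON: {exc}") from exc
    if not isinstance(request, dict):
        raise ValueError("Tool request must be a JSON object")
    if not isinstance(request.get("tool_name"), str):
        # Same condition the error message in the traceback describes
        raise ValueError("Tool request must have a tool_name (type string) field")
    if not isinstance(request.get("tool_args", {}), dict):
        raise ValueError("tool_args must be a JSON object")
    return request


# Assumed shape of a well-formed reply (field names are an assumption)
good_reply = json.dumps({
    "thoughts": ["The user wants an ebook; start with an outline."],
    "tool_name": "response",
    "tool_args": {"text": "Here is a draft outline..."},
})

# A malformed reply of the kind a very small model might produce: wrong key names
bad_reply = json.dumps({"tool": "response", "args": {"text": "..."}})

print(parse_tool_request(good_reply)["tool_name"])  # prints "response"
try:
    parse_tool_request(bad_reply)
except ValueError as err:
    print(err)  # prints the tool_name error message
```

Under these assumptions, a 1.5B to 3B model that drifts from the expected JSON shape over several turns would trip exactly this check, which fits the reply's point that larger models cope better with the system prompt.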



Joined Jul 17, 2025
