Pinned

A0 v1.10 is HUGE! (Native Browser, Time Travel, Office Canvas) ⚡

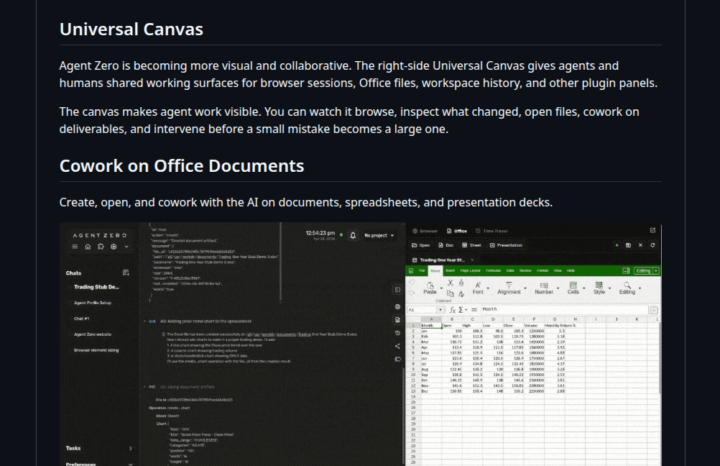

Hey everyone,

Version 1.10 is finally here, and it fundamentally changes how you interact with Agent Zero.

What’s new?

- The Universal Canvas: A brand new right-side panel where you can cowork with your agent.
- Native Browser: Watch the agent browse the web live. You can even use the new Annotation Mode to visually select UI elements and tell the agent to fix them.
- Office Magic: Work on Word, Excel, and PowerPoint files natively. The agent can even generate real Excel charts for you.
- Time Travel: Messed up a file? The workspace now has a snapshot history. Diff, preview, and revert changes easily. Powered by Agent Zero's Space Agent.
- OpenAI OAuth: Connect your existing OpenAI/Codex subscription so you don't have to burn API credits.

Don't forget the CLI Connector 💻

If you want the agent to work on your local machine's files while keeping its code execution safely inside Docker, install the CLI (requires v1.9+).

- Mac/Linux: curl -LsSf https://cli.agent-zero.ai/install.sh | sh
- Windows: irm https://cli.agent-zero.ai/install.ps1 | iex

(Ask the agent to use the a0-setup-cli skill if you need help.)

Update Agent Zero: Settings > Update > Open Self Update > Restart and Update

Go build something awesome.

See you all tomorrow, April 29th at 3PM UTC, in the Skool Community Call. Join us here: https://www.skool.com/live/DlyvNKHbyWw

Pinned

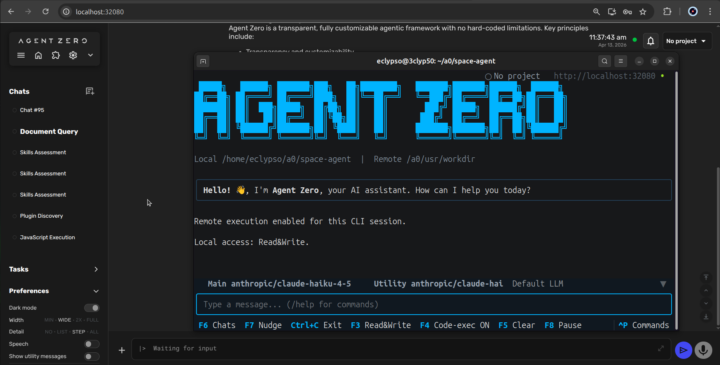

🧑‍🚀 Meet Space Agent, our newest creation!

Hello everyone,

Big announcement today. We are publicly releasing our newest creation: the Space Agent. This agent can completely change the way you use and experience AI agents.

Claim your Space and try it now. No install. No configuration. Just your API key or local inference, and that's it -> https://space-agent.ai

If you like Agent Zero, share and give us a Star on GitHub, or subscribe on YouTube to keep the momentum going!

Pinned

Welcome to the Agent Zero community

And thank you for being here. If you have a minute to spare, say hi and feel free to introduce yourself. Maybe share a picture of what you've accomplished with A0?

Agent Zero v1.9 is live! Meet the A0 CLI Connector 🖥️

Hey everyone,

v1.9 just dropped, and it comes with a brand new tool: the A0 CLI Connector.

What it does: It's a CLI that connects your local machine to your Agent Zero instance. It can view and edit files directly on your host machine, while all code execution stays safely inside Docker. Best of both worlds.

The only requirement is Agent Zero version 1.9. Previous versions don't support the CLI Connector.

Install it now:

- Mac/Linux: curl -LsSf https://cli.agent-zero.ai/install.sh | sh
- Windows: irm https://cli.agent-zero.ai/install.ps1 | iex

Or ask your agent to use the a0-setup-cli skill to guide you.

🔒 Security Note: This release also patches two CVEs (SSRF and Path Traversal). Please update your instances.

Other highlights:

- Browser Agent can now use its own dedicated model preset.
- /project and /config commands work directly in Telegram, WhatsApp, and Email.
- Redesigned messaging settings with clearer setup flows.

Update Agent Zero: Settings > Update > Open Self Update > Restart and Update
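
On the version requirement above: if you script your setup and want to check it before installing the CLI, here is a minimal sketch. It is not part of Agent Zero. The helper names (`parse_version`, `supports_cli_connector`) are hypothetical, and it assumes you already have the version as a string such as "v1.9" or "1.10". The point is to compare versions as numbers, since a plain string comparison would rank "1.10" below "1.9".

```python
import re

# From the post: the CLI Connector needs Agent Zero v1.9 or newer.
MIN_CLI_VERSION = (1, 9)


def parse_version(text):
    """Turn a string like 'v1.10' or '1.9.2' into a tuple of ints, e.g. (1, 10)."""
    match = re.fullmatch(r"v?(\d+(?:\.\d+)*)", text.strip())
    if match is None:
        raise ValueError(f"unrecognized version string: {text!r}")
    return tuple(int(part) for part in match.group(1).split("."))


def supports_cli_connector(version_text):
    """Hypothetical helper: True if the version meets the v1.9 minimum."""
    return parse_version(version_text) >= MIN_CLI_VERSION


if __name__ == "__main__":
    for version in ("v1.8", "v1.9", "v1.10"):
        print(version, supports_cli_connector(version))
```

Tuples of integers compare element by element, so (1, 10) correctly sorts after (1, 9).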

🔴 Community Call Recording (April 29th)

Two hours of demos, Q&A, and new features. Thanks @Leigh Phillips for your Superordinates plugin showcase. As always, we really appreciated having you. Thanks, everyone, for dropping by! If you missed the call or want to rewatch any part of it, the full recording is now available 🎥
