Memberships

Agent Zero

2.4k members • Free

11 contributions to Agent Zero

Need Suggestions

Hello everyone, I recently set up Agent Zero using Docker and LM Studio. I don’t have much experience yet and I’m still trying to understand what I can do with it. It would be really helpful if you could share some ideas or examples of how you use it. Also, please suggest some models that work well. I’m using an RTX 5070 Ti (16GB VRAM) and 32GB RAM. Thanks a lot! 😊

2 likes • 7d

Hi @Jubaer Utshob, that’s a great question. "Unlimited" things =). I think of it as an agentic LLM orchestrator. Because it’s running with full control of its own environment (Kali Linux), it isn’t limited to writing text: it can actually execute commands, manage system files, and interact with the OS just like a human developer would. Here is a quick look at what it can handle:

- Web & Software Development: It can write code, build out project structures, and handle the deployment process directly from the terminal.
- System Automation: It can manage scheduled tasks, monitor background processes, and automate repetitive system maintenance so you don't have to.
- Security & Diagnostics: Given its home on Kali Linux, it’s well-positioned to assist with network diagnostics, security scanning, and auditing tasks.
- Workflow Orchestration: Instead of just answering a question, it can link multiple steps together, for example searching for info, writing a script to process it, and executing that script to get you a final result.
- Extensibility (Coming Soon): With custom Skills and Plugins on the way, you'll be able to teach it new tricks and specialize its behavior for your specific needs.

Basically, if you can define the logic or the script for a task, a0 can take the wheel and run it for you. What do you want to do or build with it?

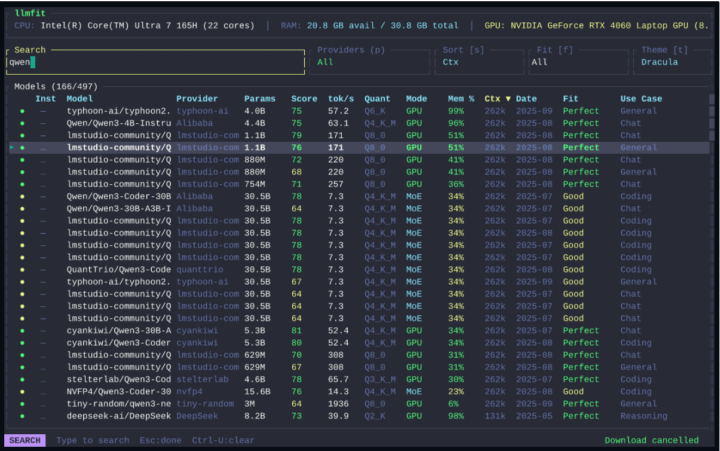

Working With Local LLMs

My setup uses local models only. I've had great success with the latest Qwen3.5 family (vision support in all of them). The latest GLM models (4.7 and 5) are pretty good too. I also wanted to quickly share a tool I came across that can save you time testing which models will run on your PC: LLMFIT https://github.com/AlexsJones/llmfit
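For a quick sanity check before downloading anything, a back-of-the-envelope estimate of weight memory can help. The sketch below is only a rule of thumb and is not how LLMFIT works: the function names, the 4-bits-per-weight figure, and the 2 GiB allowance for context and runtime overhead are all assumptions made for illustration. Real quantized files often use somewhat more than 4 bits per weight, and long contexts need more headroom.

```python
def estimate_weights_gib(params_billion: float, bits_per_weight: float) -> float:
    """Approximate memory for the model weights alone, in GiB."""
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 1024**3


def fits(params_billion: float, bits_per_weight: float,
         vram_gib: float, overhead_gib: float = 2.0) -> bool:
    """True if weights plus an assumed fixed overhead fit in the given VRAM.

    overhead_gib is a placeholder for KV cache and runtime buffers (assumption).
    """
    return estimate_weights_gib(params_billion, bits_per_weight) + overhead_gib <= vram_gib


# Example: a 32B-parameter model at an assumed 4 bits per weight.
print(f"{estimate_weights_gib(32, 4):.1f} GiB of weights")
print("fits in 16 GiB:", fits(32, 4, 16))
print("fits in 32 GiB:", fits(32, 4, 32))
```

Under these assumptions a 32B model is a tight squeeze on a 16GB card, while an 8B model leaves plenty of room for context.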

A0 is really slow, any optimizations?

I just started using Agent Zero and its responses are painfully slow. Even basic queries sometimes take five, ten, or twenty minutes to get a response. Does anybody have any suggestions to speed things up and optimize the platform? My system and models are below.

- GPU: RTX Pro 4500 Blackwell with 32GB VRAM
- Chat: qwen2.5-coder:32b
- Utility: qwen3:8b
- Embedding: mxbai-embed-large
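One way to narrow this down is to measure the raw generation speed of the model server on its own, outside Agent Zero. The sketch below assumes the models are served by Ollama on its default local port, which the post does not actually state (the model tags only look like Ollama tags). It reads the `eval_count`, `eval_duration`, and `load_duration` fields that Ollama's `/api/generate` endpoint returns, with durations in nanoseconds. The helper names are made up for illustration.

```python
import json
import urllib.request


def tokens_per_second(resp: dict) -> float:
    """Generation speed from an Ollama /api/generate response (durations are in ns)."""
    return resp["eval_count"] / (resp["eval_duration"] / 1e9)


def load_seconds(resp: dict) -> float:
    """Time spent loading the model into memory, in seconds (0 if not reported)."""
    return resp.get("load_duration", 0) / 1e9


def generate(model: str, prompt: str, host: str = "http://localhost:11434") -> dict:
    """Send one non-streaming request to a local Ollama server (assumed backend)."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode()
    req = urllib.request.Request(
        f"{host}/api/generate",
        data=body,
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req) as r:
        return json.load(r)


# Usage (requires a running Ollama server, so it is left commented out):
# resp = generate("qwen2.5-coder:32b", "Say hello in one sentence.")
# print(f"{tokens_per_second(resp):.1f} tok/s, load {load_seconds(resp):.1f} s")
```

If the direct numbers look healthy but Agent Zero is still slow, the time is more likely going into long prompts, model swapping between the chat and utility models, or multi-step agent loops than into raw generation.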

0 likes • 15d

Thanks for sharing @Dale Thomas. I'm tinkering on my Azure VM and trying a hybrid approach, but the web model isn't really working for some reason; it gets stuck.

- Chat Model: Cloud/Web, e.g. Kimi K2.5
- Utility Model: qwen3:8b
- Web model: qwen3:8b
- Embedding Model: the out-of-the-box one or nomic-embed-text:latest

It would be great to get Agent Zero on the ThursdAI pod

Link: https://x.com/thursdai_pod Great for visibility in the era of agentic AI frameworks. 😎

24h time?

Just something stupid, but how can I change the time to 24h format? I don’t see anything in the setup to change it. Thank you.

Active 11h ago

Joined Feb 21, 2026
