Pinned

🎉 We have our FIRST graduate of the 7-Day Challenge!

Huge congrats to @Antra Verma for being the first to cross the finish line 👏

To celebrate, we're hooking her up with a FREE AIS shirt, and her official completion certificate is attached below 🏆

Let's give her a massive round of applause in the comments, she set the bar! Can't wait to see more of you submit your projects and join the graduate club.

👉 Want to take on the challenge? Head to the Classroom section or jump in HERE

👕 And if you want to grab some AIS merch for yourself, check it out HERE

Cheers everyone!
- Nate

Pinned

🚀 New Video: 32 Claude Code Hacks in 16 Mins

I went from complete beginner to mass-producing workflows, websites, and AI agents in real time. This video covers 32 Claude Code hacks I actually use, sorted from beginner to pro. The best ones are saved for the end.

Pinned

🏆 Weekly Wins Recap | Apr 18 – Apr 24

From high-ticket deals and agency SaaS launches to client systems, websites, and real-world automations - this week inside AIS+ was packed with serious builder energy.

🚀 Standout Wins of the Week

👉 Michael Wacht closed a $10K AI Readiness Assessment deal, sponsored by finance with training and system-integration readiness included.
👉 @Uros Pesic signed a £9K UK agency client for a 3-month ops audit and used multi-agent Claude Code to prep 20+ interviews in parallel.
👉 @Fernando Gómez turned a corporate social-media automation system into an agency SaaS with €2.5K setup + €100/month per client.
👉 @George Mbajiaku closed his first $1,300 client by shifting his pitch from “n8n builder” to “problem solver.”
👉 @Josh Holladay wrapped a 30-day client sprint and earned a retainer offer for ongoing strategy, builds, and AI education.

🎥 Super Win Spotlight | Balaji Iyer

Balaji joined AIS+ knowing he could build something useful - but he needed structure, clarity, and confidence. Since joining, he has:

• Set up his own cloud instance, Docker, Postgres, and self-hosted n8n
• Built a real backend workflow from scratch
• Created an app he now improves daily
• Moved from “Can I really do this?” to “How can I make this better?”

His biggest shift? Going from sitting on the sidelines → to finally building something he’s proud of. Balaji’s journey is proof that once you take the first step, momentum starts to build.

🎥 Watch Balaji’s story 👇

✨ Want to see wins like this every week? Step inside AI Automation Society Plus and start building assets that compound 🚀

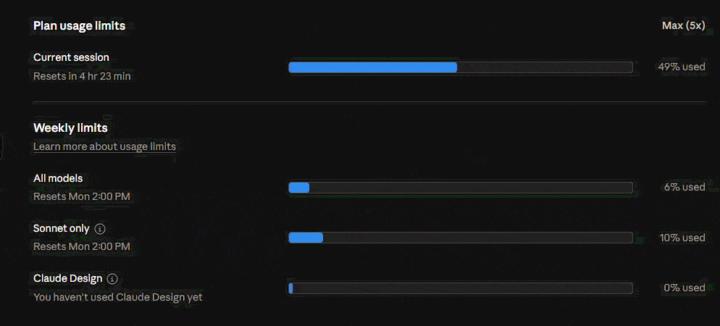

🚨 URGENT: Claude Code is silently burning my session usage. Has anyone found a fix?

I’ve got a client call today and I’m effectively blocked because Claude Code is unusable right now. I’m on Max 5x.

As you can see in the attached GIF, my current session usage was at 49% and it keeps creeping up silently in the background. So the issue doesn’t look like normal weekly exhaustion. It looks like something inside the current Claude Code session is burning through tokens extremely fast.

I’ve disconnected MCP servers, tried reducing background activity, and waited for my weekly limits to reset, but the same issue keeps coming back. Even when I’m barely doing anything, the session usage climbs very quickly.

I’m trying to work out whether this is caused by bloated CLAUDE.md files, duplicated project context, hidden MCP activity, background indexing, prompt injection, or something else repeatedly loading into context.

Has anyone built a practical way to audit what Claude Code is actually consuming? I’m not looking for generic advice like “wait for your limits to reset” or “upgrade the plan”. I need to understand what is consuming the tokens so I can fix the setup and get back to client work.

Any transparent breakdown, diagnostic approach, or tool/plugin idea would be really appreciated.

We built an n8n alternative because AI workflows kept breaking our stack — open sourced it today

Hey everyone,

For the past several months we've been building something that came out of a frustration most of you probably know well. We love n8n for what it does. But the moment a workflow needed real agent behavior, document retrieval, approval checkpoints, and full observability in the same place, we kept fighting the platform instead of building. It wasn't designed for that, and that's not a criticism, just a different problem.

So we built Heym. Self-hosted, source-available, visual canvas for AI-native workflows. Multi-agent orchestration, built-in knowledge retrieval, human review checkpoints, MCP support. Launched today on Product Hunt and the repo just went public.

We're two engineers and genuinely curious: for those of you building real AI workflows with agents and orchestration, what's the part that breaks down most often in your current stack?

github.com/heymrun/heym

skool.com/ai-automation-society

Learn to get paid for AI solutions, regardless of your background.
