
Memberships

Clief Notes

29.1k members • Free

36 contributions to Clief Notes

A planning tool for us newbies

Meet the prompt queen 🫅

A quick and dirty framework to get Claude to nail your goal the first time around and save wasted time going back and forth.

https://github.com/donroy26/prompt-queen

As someone who is still exploring this space heavily, I often enter a task not knowing where I am going, and I let the AI guide me until I have an idea of the destination. The issue is that Claude often likes to jump the gun and go straight to planning or implementation before we've nailed down where we are going. This often leads to me saying "no, change this, this is what I wanted, now try this". The prompt queen gives checkpoints so Claude will work toward getting you a true plan that you can then hand off to be implemented.

This is very bare bones and simple. I took inspiration from @Ari Evergreen's workflow (hers is far more impressive and advanced, please go check it out) https://www.skool.com/cliefnotes/i-run-four-phases-before-any-ai-builds-anything?p=fb57ee52 but I developed my own tool as my needs are far simpler at this time.

@Yucky Yuckyyyy I also introduced the queen to brofessor. She was slightly bloated so he set her straight. Everyone go check out this tool Yucky just shared: https://www.skool.com/cliefnotes/class-meet-brofessor?p=30ca93bd

For anyone new, to give you an idea of how to get going on similar tasks of your own: I built this by copying references to Jake's ICM methodology, along with Ari's post on her process, into a project and asked Claude to walk through implementing something similar for me. I gave my intent on what I wanted and Claude did the rest. I did not write any of these documents; I merely gave them a read afterward and poked for any final tweaks. Find something you want to recreate or are inspired by and let Claude be inspired by it as well. Just dive in, you almost can't fail.


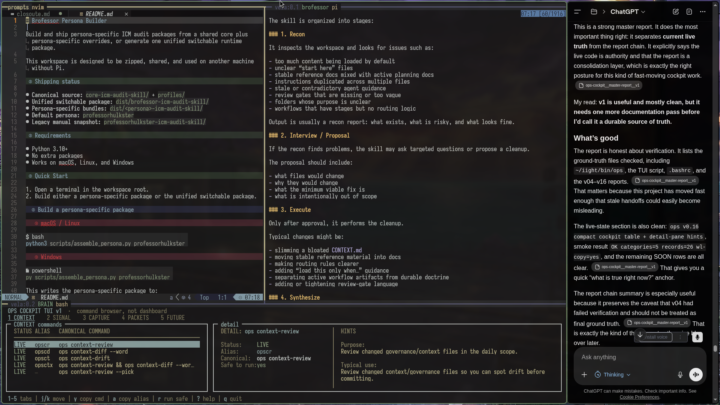

Class, meet Brofessor.

TL;DR

/brofessor is an ICM-based skill that helps clean up AI-agent workspaces. It's also a small persona factory: the audit engine stays the same, but you can switch and create personas, and even tune them with a CONFIG.md.

It checks whether your docs, routing, stages, review gates, and context-loading patterns make sense. It finds bloat, confusion, contradiction, and overbuilt process junk. Then it proposes the smallest safe fix, waits for approval, executes only what you approve, and wraps up with a clear synthesis.

It is half workspace auditor, half context janitor, half theatrical menace. Yes, that is three halves. The math is fine. Keep moving.

Grab it, run it on a messy workspace, and let it bully your docs into behaving.

- Brofessor

iight now that that's out of the way, I'm going to explain why this shit actually bangs - actually fuck it imma let him explain this too.

ps - this skill was made in 3 prompts, I can show you how I did it if anyone's curious...

- yuckyyy

Yep, replace the longer **“How It Works”** section with this:

```md
## How It Works

Brofessor works because the prompt is built like a layered workspace auditor, not a generic “clean up my docs” request.

### 1. Core Directive: Treat the Workspace Like a Factory

The prompt frames the repo as a multi-stage context system:

- each stage has inputs
- each stage produces outputs
- each stage loads only what it needs
- stable rules live outside active work
- review gates control movement between stages

So Brofessor is not asking, “Are these files tidy?” It is asking, “Can agents move through this workspace predictably?”

### 2. Layer Model: Give Every File a Job

Brofessor audits through five layers:

- **Layer 0:** workspace identity
- **Layer 1:** map/orientation
- **Layer 2:** routing/context loading
- **Layer 3:** stable rules, contracts, criteria
- **Layer 4:** active products and outputs

This prevents the classic markdown soup problem where `CLAUDE.md`, `CONTEXT.md`, plans, rules, drafts, and review notes all start pretending to be the boss.
```

🧪 New benchmark out

New benchmark out of Meta FAIR, Stanford, and Harvard called ProgramBench.

The setup: you get a compiled executable plus its docs. Source code stripped. Rebuild the program from scratch in any language you want. Tests check input/output behavior against the original binary. 200 tasks, from small CLI tools up to FFmpeg, SQLite, and the PHP interpreter.

📊 Results across 9 models: Zero tasks fully solved. Opus 4.7 was the best, passing 95% of tests on only 3% of tasks. GPT 5.4, Gemini 3.1 Pro, and Haiku 4.5 hit 0% in that bucket.

The interesting part is section 5. Even the model solutions that "worked" looked nothing like the human reference. Median 1,173 lines vs 3,068 in the original. Flat directories. Fewer functions, each one longer. GPT 5.4 wrote 96% of its final code in a single turn on most tasks and never modified existing files on roughly 40% of runs.

🎯 Why it matters for us: The benchmark separates writing code from designing software. Models can produce syntax all day. They cannot yet decompose a real system into coherent modules, pick the right abstractions, or organize a codebase the way a working engineer would. That gap is what computational orchestration points at. It is also where the durable value lives.

🛠 Try it: Pick an easier task from the repo (the paper flags nnn, fzf, gron, and jq as more tractable). Run it against Claude or your model of choice. Watch where you and the model split. Note the design decisions you make that the model never even raises. Post your runs and attempts to create a harness that would allow the model to do it. Wins, failures, weird outputs, all of it.

📍 Paper and Repo: ProgramBench

I'm building something on top of this right now. More soon.
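For anyone starting on the harness idea, here is a minimal sketch of the "tests check input/output behavior against the original binary" step. It is not ProgramBench's actual test runner; the names `run`, `compare`, `pass_rate`, and `CaseResult` are made up for illustration, and it assumes the programs read stdin and write stdout, comparing only exit code and stdout.

```python
from __future__ import annotations

import subprocess
import sys
from dataclasses import dataclass


@dataclass
class CaseResult:
    """Outcome of one input fed to both programs (hypothetical structure)."""
    stdin_text: str
    passed: bool
    expected: str
    actual: str


def run(cmd: list[str], stdin_text: str, timeout: float = 10.0) -> tuple[int, str]:
    """Run a command with the given stdin and return (exit code, stdout)."""
    proc = subprocess.run(
        cmd, input=stdin_text, capture_output=True, text=True, timeout=timeout
    )
    return proc.returncode, proc.stdout


def compare(
    reference_cmd: list[str], candidate_cmd: list[str], inputs: list[str]
) -> list[CaseResult]:
    """Feed each input to both programs; a case passes when exit code and stdout match."""
    results = []
    for stdin_text in inputs:
        ref = run(reference_cmd, stdin_text)
        cand = run(candidate_cmd, stdin_text)
        results.append(CaseResult(stdin_text, ref == cand, ref[1], cand[1]))
    return results


def pass_rate(results: list[CaseResult]) -> float:
    """Fraction of cases where the candidate matched the reference."""
    return sum(r.passed for r in results) / len(results) if results else 0.0
```

To use it, point `reference_cmd` at the original executable and `candidate_cmd` at your rebuild, then pass a list of stdin strings. A real harness would probably also need to cover command-line arguments, stderr, and files written to disk, which this sketch ignores.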

0 likes • 21h

@Jake Van Clief you’re probably right my man. I guess I already know this and am trying to confirm I’m not on the wrong path. I do genuinely believe that, no matter what, as long as I’m learning the path will reveal itself, but it’s hard not to try to make sure I’m optimizing for growth given how proficient this community is.

0 likes • 18h

@Alain Grignon in general I do agree, but I’ve built some things that I’m not sure can replace current tools even if they work better, because I have no way to confirm their longevity and scaling will be reliable. And for a company that is not software based, losing days to a system going down could be pretty bad. I realize AI can likely solve all of the issues I just listed, but it’s a risk that I can’t confirm for certain won’t happen.

This is a completely different discussion, but why not?

Just posted a comment in a lesson post, but I actually thought it would be amazing to read everybody's thoughts about it. It goes more towards the philosophical conversation, but I think it is an interesting one. So, this is my hypothesis: What if we actually are just a more evolved type of LLM, trained since birth, with thousands and thousands of years of development, with more input sources (5 senses, so 5x different languages combined), and what we call consciousness is just a more sophisticated form of programming? Would love to hear your ideas ❤️

0 likes • 2d

I haven’t put a ton of thought into it other than the fleeting ones drifting into mind. It ultimately comes down to what consciousness is and there’s endless discussion and research on that topic, to no real conclusion as of today at least. At the end of the day what makes us human is that we are human. All the amazing things and all the imperfections. The AI will be an amazing tool and may even approach consciousness if not achieve it but it will never be us.

0 likes • 22h

@Josh Harper I definitely agree there may be a point where through audio or chat it is truly indistinguishable from a person. Even with regards to context limitations humans obviously can only retain so much immediate memory related to a task while actively working on it. There are a lot of parallels.

What Do You Put In Your Database?

First post here, so be gentle, lol. I'm having a hard time wrapping my head around what kind of data you can, or should, put into your database for your AI memory.

I've been messing around with computers, and by extension data manipulation, since the 70s. I have a good understanding of how a relational database runs. But we're not building that kind of ecosystem(?), are we? We can go bigger. Before I dug deeper, I always pictured an LLM like ChatGPT as having this huge massive brain, which held all of the Internet, and when I asked would wave its virtual hands to say "Here it is". I know that's incorrect.

I'm using AI as a research assistant and junior co-writer. I'm doing a non-fiction book on what kind of skills you'll need to make money in the next 20 years. I'm doing a ton of digging for trends and possibilities and...all of you have a good idea of what that means, I'm sure.

I went into this thinking it would be more like a Wiki compiled from all my research and conclusions, but is that what I want? Seems like there's so much more than just a glorified book list. I do want to have a folder style system, with the full transcripts, complete articles, or other important documentation that I need. That's doable too.

But recently, I wanted to start at least collecting the base data. If I don't start doing that, I'm just digging my hole deeper. So I laid down a basic schema, and then asked GPT to pull me 5-6 highlights that it thought should go into the database. Once I get the workflow built, I'm pretty sure I'll try to automate it, so as I research, the AI formats and stores any highlighted data I come across.

It pulled those six, then another three from our discussions on the first six, and we were in a side chat at the time, the main chat was on markdown files. We got 10 entries from that. So I had nineteen.

I haven't done any more, but when I look at what I have, I get this weird vibe that the majority of them aren't on the book subject, but are more about how the LLM views the way I work? That's a poor description of it, I hope it works.
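One way to make that "weird vibe" checkable is to tag every entry with what it is about, so book research and notes about working style can be counted and filtered separately. A rough sketch follows, using Python's built-in `sqlite3`. The poster's actual schema is not shown, so the table, the column names, and the two `kind` values are all assumptions for illustration.

```python
import sqlite3

# Hypothetical schema: every highlight must declare what it is about.
SCHEMA = """
CREATE TABLE IF NOT EXISTS highlights (
    id INTEGER PRIMARY KEY,
    kind TEXT NOT NULL CHECK (kind IN ('book_research', 'working_style')),
    topic TEXT NOT NULL,
    summary TEXT NOT NULL,
    source_path TEXT  -- optional pointer into the folder of transcripts/articles
);
"""


def open_db(path=":memory:"):
    """Open (or create) the database and make sure the table exists."""
    conn = sqlite3.connect(path)
    conn.execute(SCHEMA)
    return conn


def add_highlight(conn, kind, topic, summary, source_path=None):
    """Insert one highlight and return its row id."""
    cur = conn.execute(
        "INSERT INTO highlights (kind, topic, summary, source_path) VALUES (?, ?, ?, ?)",
        (kind, topic, summary, source_path),
    )
    conn.commit()
    return cur.lastrowid


def count_by_kind(conn):
    """Return {kind: number of highlights} to show where the entries cluster."""
    rows = conn.execute("SELECT kind, COUNT(*) FROM highlights GROUP BY kind").fetchall()
    return dict(rows)
```

Running `count_by_kind` over the nineteen entries would show at a glance how many are about the book and how many are about the workflow. The `CHECK` constraint is a design choice: it forces whoever writes the row, human or AI, to pick a category up front instead of leaving it ambiguous.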

1 like • 1d

I have occasionally had annoying interference from memory that the LLM thinks is relevant to a current task when it’s not. The options are really to open an isolated instance without memory (this is accomplished in slightly different ways among the major providers), or to manually control your AI memory or remove it entirely. If you are working in a project, you can always try adding a note to the CLAUDE.md and context files saying that the agent should ignore all memory files and act only on the data in the project.


Active 2h ago

Joined Mar 22, 2026
