Memberships

Clief Notes

27.9k members • Free

51 contributions to Clief Notes

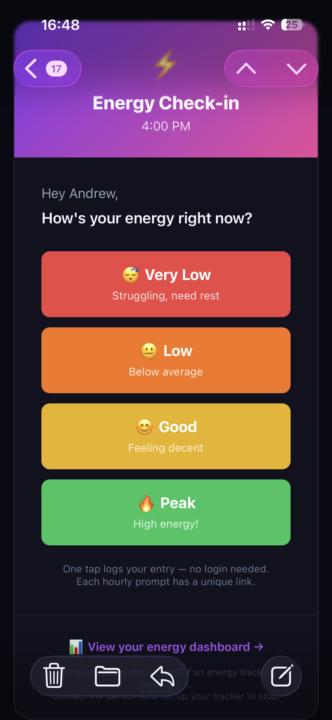

My first ICM project: an Energy Tracker for Neurodivergent minds

The project started with a conversation with a trainee ADHD coach who was having friction engaging some of her participants. I mentioned to her a DIY energy tracker I'd used years ago, built from instructions I found online. It was crude but did the job as a great tool for engaging a young team I was managing at the time. It surfaced something they were potentially unaware of and gave them something to work with.

The thought: could I use @Jake Van Clief's ICM to build something quick and dirty as a proof of concept to demonstrate what we were discussing? I found my original notes from 27 March 2017, 09:24, and set to work with Jake's Workspace Builder.

Onboarding: Workspace Builder

Q1: Neurodivergent-Centric Interactive Tool Development.

Q2: Transform complex neurodivergent challenges into simplified, interactive visual snapshots and tracking tools through a process of concept ideation, cognitive load mapping, and accessible UI assembly.

Q3: The primary user is a creative developer specialising in ADHD/Neurodivergent solutions with an intermediate-to-advanced skill level in AI-guided coding and Google Antigravity environments.

Q4: 5 Stages

1. Context Mapping (defining the specific neurodivergent challenge)
2. Logic Structuring (data architecture)
3. UI/UX Generation (applying the "Snapshot" design system)
4. Interactive Testing (refining UX/feedback loops)
5. Deployment

The Logic Structuring stage is skippable for simpler, purely visual tools.

ICM Workspace built.

The Brief as entered: I want to build an online Energy Level Tracker like the one here: https://collegeinfogeek.com/track-body-energy-focus-levels/ by Thomas Frank. I want the website to ping someone on the hour and send a text or message with the form that they then enter their input. This is then tracked and a chart produced over time to give them their best time of day for energy levels. I would like it to be free.
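The "best time of day" step in the brief can be sketched in a few lines. This is only an illustration under stated assumptions, not the project's actual code: the function name `best_hours`, the 1-10 energy scale, and the sample entries are all hypothetical. It groups hourly check-ins by hour of day and ranks the hours by average score.

```python
from collections import defaultdict
from datetime import datetime
from statistics import mean


def best_hours(entries, top_n=3):
    """Rank hours of the day by average energy score.

    entries: iterable of (ISO timestamp string, energy score) pairs.
    The 1-10 scale used in the sample below is an assumption.
    """
    by_hour = defaultdict(list)
    for timestamp, score in entries:
        by_hour[datetime.fromisoformat(timestamp).hour].append(score)
    averages = {hour: mean(scores) for hour, scores in by_hour.items()}
    # Highest average first; ties go to the earlier hour.
    return sorted(averages.items(), key=lambda item: (-item[1], item[0]))[:top_n]


# Hypothetical check-ins, made up for illustration only.
sample = [
    ("2024-03-04T09:00", 7), ("2024-03-04T14:00", 4),
    ("2024-03-05T09:00", 8), ("2024-03-05T14:00", 5),
    ("2024-03-05T20:00", 6),
]

for hour, average in best_hours(sample):
    print(f"{hour:02d}:00 average energy {average}")
```

The same per-hour averages could feed whatever chart the tracker draws; the ranking is just the smallest piece that answers "when is my energy highest?".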

Introducing the Hermes-Stack

Consider this a thank you @Jake Van Clief for the inspiration and giving us the mindset and tools to grow our businesses.

***Disclaimer: this is an advanced setup. DO NOT use until you're fully comfortable with Jake's method***

Many of us eventually hit the limit with basic chat interfaces. The agent forgets context between sessions, data security starts to feel risky, and the setup creates more friction than value. This stack addresses those issues directly.

It combines Hermes Agent with Cognee as the memory engine, hosted on a simple DigitalOcean Droplet and secured through Cloudflare Tunnel. The entire deployment follows Jake Van Clief's Interpretable Context Methodology for clean, repeatable orchestration. The result is a private, self-improving AI agent that grows more capable over time while keeping your data and server fully under your control.

The components stay minimal and transparent: Hermes Agent as the autonomous gateway, Cognee for structured relational memory, and Cloudflare Tunnel for secure outbound-only access. You deploy once using the ICM workflow, then the system handles the repetitive memory management and self-improvement loops.

The agent becomes a genuine thinking partner instead of a one-off responder. That frees up your attention for the judgment and creative work only you can do.

If you are running a self-hosted agent setup or exploring similar private stacks, I would like to hear what you are using and what friction you have solved. Drop your thoughts below.

Build the workflow, not the feature. The variations come for free.

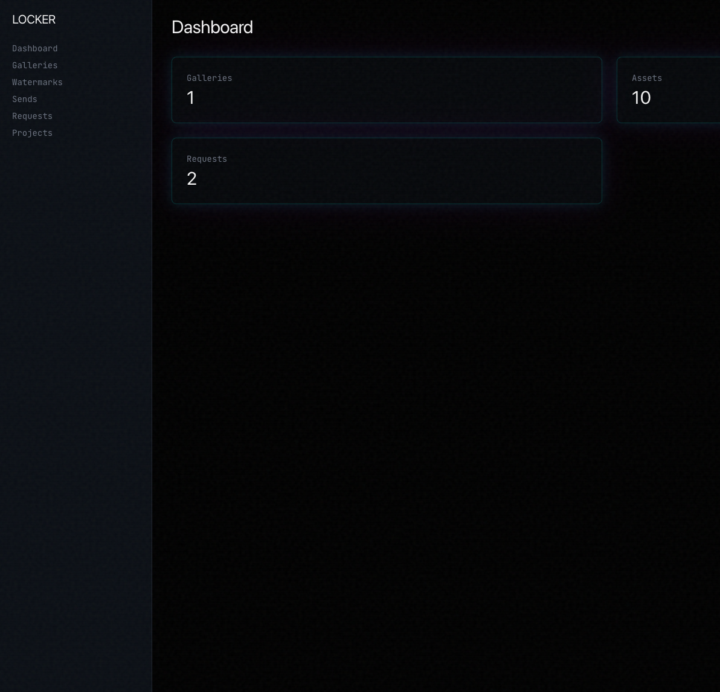

Shipped sends and the Locker into Beyond Content this afternoon. Third brand to get them. Took less than a day.

The first time I built them, on Pushing Squares, it was real work. Spec, schema, auth flow, billing, gated downloads, the full stack. Days of thinking, not just typing.

The second time, on creatioexnihilo.com, it was a port. Hours, not days. Same primitives, different brand surface.

The third time, on Beyond Content, it was an afternoon. Copy the package. Re-wire the manifest. Swap the brand tokens. Ship.

The pattern:

The work isn't the feature. The work is the workflow that produces the feature. When I built sends the first time, I wasn't really building sends. I was building:

- A schema shape that survives renaming
- A deploy contract that doesn't care which brand it's serving
- A Locker model that maps cleanly onto Stripe, Neon and R2 regardless of who owns the account
- A handover surface so the next brand only touches config, not code

Once that workflow existed, "ship sends to brand X" stopped being an engineering question. It became a config question.

The takeaway

If you find yourself building the same thing twice, the second build is the warning. Stop. Extract the workflow before the third one shows up. The first build pays for itself. The workflow pays forever.

//A<3
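One way to picture "the next brand only touches config, not code" is the rough sketch below. Everything in it is an assumption made for illustration: `BrandConfig`, `download_notice`, the placeholder brands and their `.example` domains are hypothetical and are not the author's actual manifest or package.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BrandConfig:
    """Hypothetical per-brand settings; the only thing a new brand supplies."""
    name: str
    domain: str
    accent_color: str


def download_notice(brand: BrandConfig, file_name: str) -> str:
    """Shared feature code: identical for every brand, reads only from config."""
    return f"[{brand.name}] {file_name} is ready at https://{brand.domain}/locker/{file_name}"


# Placeholder brands and domains, not real deployments.
BRANDS = {
    "brand-a": BrandConfig("Brand A", "brand-a.example", "#1f6feb"),
    "brand-b": BrandConfig("Brand B", "brand-b.example", "#d29922"),
}

for brand in BRANDS.values():
    print(download_notice(brand, "guide.pdf"))
```

Adding a third brand here means adding one entry to `BRANDS`; `download_notice` does not change.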

From "Manual Hell" to a Global Partnership: My Meeting with the Head of AI

Today was a massive win. I had my meeting with the Head of AI for our global group, and it went beyond anything I had imagined.

The Pitch: 132 Orders and a "Broken" System

I had the chance to present a real-world challenge: manually processing 132 sales orders in April. The workflow is a nightmare: open each order, find the amount, cross-check it with an Excel sheet, invoice it, and repeat. To make it worse, there is a known bug in our D365 environment where the amount column simply shows "0" in the grid, meaning I can't just export a list. It requires manual clicks. In a busy finance department, this takes days because of constant interruptions.

I presented my workflow and explained how this concept isn't just for one task; it's a framework for almost every repetitive monthly task we have. I knew from my previous Rebill Project that if I can automate the "friction," I can win back my time.

The Result: Skipping the Queue

When I told the Head of AI that this could turn a 3-4 day job into about 1 hour, his eyes lit up. Even though Claude Code is still stuck in corporate governance (it's currently with our CEO to decide on a global rollout), he didn't want me to wait. He immediately assigned me a Microsoft Copilot Studio license. These are highly restricted. Usually, there's a long waiting list, and if you don't use it for 30 days, you lose it. He bypassed the entire queue to get me started right away.

Moving the Needle with IT

To get "Copilot Cowork" talking to D365, I had to submit a technical IT ticket to enable the Model Context Protocol (MCP). I made sure to CC both the Head of AI and my own manager. The Head of AI jumped straight into the ticket with this comment:

"I talked to Allan today. He has an idea to speed up a process in finance and save days of work... The use of the MCP server for this would help him very much. Open for a call if needed or any other help for the team."
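The cross-check step described in the pitch (order amount versus the Excel sheet) can be sketched as a simple comparison. This is a hypothetical illustration only: it assumes both sets of amounts have already been read into dictionaries keyed by order ID, and it says nothing about how data would actually be pulled from D365 or the spreadsheet. The order IDs and amounts are made up.

```python
from decimal import Decimal


def reconcile(order_amounts, sheet_amounts):
    """Split order IDs into matched, mismatched, and missing-from-sheet."""
    matched, mismatched, missing = [], [], []
    for order_id, amount in order_amounts.items():
        if order_id not in sheet_amounts:
            missing.append(order_id)
        elif sheet_amounts[order_id] == amount:
            matched.append(order_id)
        else:
            mismatched.append(order_id)
    return matched, mismatched, missing


# Made-up sample data; Decimal avoids float rounding on currency amounts.
orders = {
    "SO-001": Decimal("120.00"),
    "SO-002": Decimal("75.50"),
    "SO-003": Decimal("10.00"),
}
sheet = {
    "SO-001": Decimal("120.00"),
    "SO-002": Decimal("57.50"),
}

matched, mismatched, missing = reconcile(orders, sheet)
print("ready to invoice:", matched)
print("needs review:", mismatched)
print("not on sheet:", missing)
```

Only the mismatched and missing orders would then need a human look, which is where the time saving in a flow like this would come from.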

Anthropic ships Claude design. OpenAI ships pets.

Whatever model you're using right now is good enough. The question isn't capability anymore. It's taste.

Capability has been commoditizing for eighteen months. The benchmarks plateaued in the territory where the difference stops mattering for most work. The model is no longer the lever.

Watch what the labs are shipping right now and notice the same thing from two directions.

Anthropic shipped Claude design. Identity, typography, layout, voice, the editorial spine the whole product runs on. The brand has a point of view and they're letting it carry the surface.

OpenAI shipped pets. Floating overlay. /pet command. Custom personality presets. The brand is leaning into character, presence, attachment.

Don't read these against each other. Read them together. Both labs are reaching for the same lever at the same time, in different registers. Both are admitting taste is now load-bearing.

Two flavors of the same lever

Editorial taste fits a power-user surface. Rigorous. Stable. A design system signals reliability.

Character taste fits a wider surface. Warm. Present. Pets signal companionship.

Neither is "better." They're aimed at different rooms. Picking which room you're in, and refusing to be a generic version of all rooms, is the work.

What this means for the rest of us

If the labs are now competing on taste, the same thing is happening one layer down. To everyone using them. When AI gives you all the tools, your taste is the differentiator. To some extent. Craft, distribution, relationships still matter. But the lever that just rotated for the labs is rotating for the rest of us too.

The model can't tell you what to make. Your judgment about what to do with all of it can.

The takeaway

The model is good enough now. The next leverage point isn't more capability. It's the judgment to use it well. Taste is the lever. For them. For us.

Full breakdown. The good-enough plateau, the two registers of taste, and what it means for makers, all live here: https://aris-space.com/documents/thoughts-and-scribbles/the-taste-transition


@andrew-carter-8893

Ideas to execution with AI 'factories'. Turning theory into practice with build, iterate, refine, learn.

Active 6h ago

Joined Apr 20, 2026
