Made a full teaser for my first AI feature film — based on my grandfather's real story (Gulag survivor, 1948)

I've been working for months on a teaser for my debut AI-generated feature film, and I'd love feedback from this community. The film is called "Brotherhood in the Bitter Cold" and is inspired by the true story of my grandfather, a Transylvanian Hungarian who survived a Soviet labor camp and walked home from Siberia in 1948. The project is part AI experiment, part memoir, part love letter to a man who rarely spoke about what he lived through.

WORKFLOW:
- Script and storyboard: written by me, based on my novel of the same name (not yet published)
- Character references, cloth references, environment generation: Nano Banana (Gemini) with family photo references for facial consistency
- Video generation: Seedance with detailed per-shot prompts
- Narration: ElevenLabs v3 with intention-based tags for an elderly voice
- Music: Suno

TECHNICAL NOTES:
- Maintained character consistency across shots using reference sheets generated in Nano Banana, then reinforced via Img2Vid in Seedance
- Developed custom prompt structures for every generation using Claude Desktop with dedicated Skills (one Skill per tool: Seedance director, Nano Banana reference-sheet builder, ElevenLabs voice director, etc.)
- Built custom character identity docs for each of the main characters to keep visual continuity across 40+ generations
- Aspect ratio 21:9

CHALLENGES:
- Facial consistency across long sequences remains the hardest problem
- Text generation (carved into wood, etc.) still fails reliably
- Period-correct wardrobe required heavy negative prompting (Seedance defaults wanted to add German/Alsatian half-timbering to Eastern European scenes)
- Seedance still denies a lot of prompts, especially images with faces; Higgsfield Cinema Studio 3.5 solves this quite well as an alternative
- Cost is significant: ~$300+ for 3 minutes of final output (teaser + prologue combined), which is steep for an independent creator. Much of that is re-generations and failed prompts, so the "visible cost" is only part of the total spend.

YouTube Culture & Trends Report

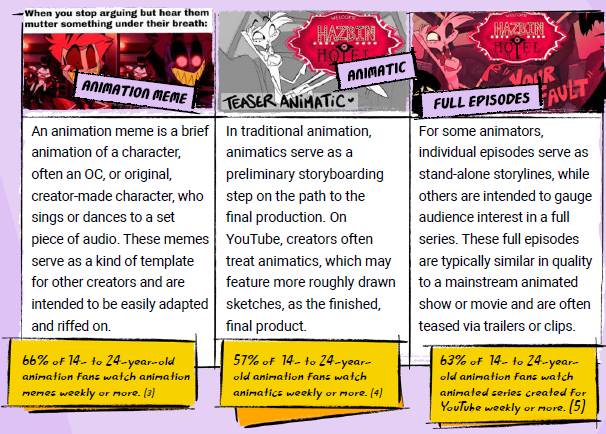

Creators often treat animatics as the finished product, and animatics are a hit with YouTubers: 57% of animation fans aged 14 to 24 watch animatics weekly or more. Independent creators on YouTube are more popular than the big animation studios.

Hello All 😊✨️💐👋

Hello 2D ANIMATORS 😊 I am very excited to join this community, and I sincerely hope I can learn from all of you and progress in my animation journey.

About me: I am a happy/busy father of two toddlers, a full-time engineer based in Switzerland, and a part-time YouTuber. I have been trying to animate for the last 2-3 years with very poor results. Certainly, I wasn't 100% focused, and I kept switching tools: Procreate, Procreate Dreams, Cartoon Animator 5, and now... AI. By joining this community, I am hoping to get some guidance 🙏

My main goal: make fun 2D animation for my YouTube channel, which is dedicated to toddler education. I want to be able to make a 2D character move on the screen, point to objects, and lip-sync.

I very much look forward to meeting you all, and thank you for reading 😊🙏💐✨️


Fixing slightly wrong aspect ratios

I keep getting frustrated by the fact that many models and application platforms do not strictly respect 16:9 aspect ratios. This is a problem because if you want to export your final cut in a true 16:9 and the source video is not exactly 16:9, you will get small but annoying black bars on two edges. At least this is the case in my film editor, Filmora. It's even worse if you are joining several videos which have different aspect ratios, because then you get shifting bars which are terribly distracting and ugly. Probably those of you who are experienced already know how to fix this. But for newbies who may be struggling, I decided to write up this simple guide.

The first rule is that you always want to EXPAND the 'too-short' direction rather than shrink the 'too-long' direction. This avoids problems with your post-processing software possibly getting confused.

The first step is to divide the number of horizontal pixels by the number of vertical pixels. This ratio should be 16/9 = 1.777777... If the ratio is less than this, the frame is too narrow and it must be expanded horizontally. To do this, multiply the number of vertical pixels by 1.77777 to get the required number of horizontal pixels. Divide the just-computed required size by the current size and multiply by 100 to get the percent horizontal scaling to enter into your production software. If the ratio exceeds 1.777777, the image is too short and must be expanded vertically using the same basic idea: divide the number of horizontal pixels by 1.77777 to get the required number of vertical pixels, then divide that by the current height and multiply by 100.

For example, suppose the image produced by the model is 2144 (horz) by 1216 (vert). The ratio is 2144/1216 = 1.763. It's too narrow. The required number of horizontal pixels is 1216 * 1.77777 = 2162. The horizontal expansion factor is 2162/2144*100 = 100.84 percent.

On the other hand, suppose the model's image is 1928 by 1072. The ratio is 1928/1072 = 1.7985. It's too short. The required height is 1928/1.77777 = 1084.5, or about 1084. The vertical expansion factor is 1084/1072*100 = 101.12 percent.

If you want slightly greater accuracy in the calculations, use the exact ratio 16/9 instead of 1.77777, and carry out all calculations to the full calculator precision rather than rounding.
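
If you'd rather not do this by hand each time, the same calculation can be sketched in a few lines of Python. This is only an illustration of the steps above, not anything built into Filmora or the models; the function name `expansion_percent` is made up for this example, and it uses exact fractions so nothing is rounded until the end.

```python
from fractions import Fraction

# Target aspect ratio, kept as an exact fraction (16/9) to avoid rounding.
TARGET = Fraction(16, 9)

def expansion_percent(width, height, target=TARGET):
    """Return (direction, percent) for stretching a frame to the target ratio.

    Always expands the 'too-short' direction, never shrinks the other one.
    (Hypothetical helper written for this guide.)
    """
    ratio = Fraction(width, height)
    if ratio < target:
        # Too narrow: compute the required width, then scale horizontally.
        required_width = height * target
        return "horizontal", float(required_width / width * 100)
    if ratio > target:
        # Too short: compute the required height, then scale vertically.
        required_height = width / target
        return "vertical", float(required_height / height * 100)
    # Already exactly on target: no scaling needed.
    return "none", 100.0

if __name__ == "__main__":
    for w, h in [(2144, 1216), (1928, 1072), (1920, 1080)]:
        direction, pct = expansion_percent(w, h)
        print(f"{w}x{h}: scale {direction} to {pct:.2f} percent")
```

For the two examples above, this gives about 100.83 percent horizontal and 101.17 percent vertical. These differ slightly from the hand-calculated 100.84 and 101.12 because the required sizes are not rounded to whole pixels first.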


Hybrid AI + Cartoon Animator

Hello my AI production friends - how are ya? I haven't caught up in the class yet, lagging way behind, but wanted to share my latest. I have not yet been able to do a full AI-generated production that I'm comfortable posting on my channel, but I am finding ways to use AI more and more in my productions.

This video is a compilation - the first video (Shark Hunt song) is the most recent. I always put the newest video first, then include approximately 20 minutes of additional videos to help increase average view duration and the number of ad breaks that run.

The main characters (Zack and Maya) are my IP, established characters on my channel. I animate them using Cartoon Animator 5. The shark and the octopus are characters from the Reallusion marketplace that I animated in CA5 as well.

Most of the backgrounds were generated using AI, and one thing I did differently this time was to use AI to ANIMATE the backgrounds instead of using still images. It worked well in this particular production, bringing the seaweed and corals to life. I started with still images (2D animation style), set those as the first frame, and prompted Seedance or Kling to animate the backgrounds. On several of them, I had Claude refine my prompts, which was very helpful. I also created the giant snapping clams, the eels, and the camera movement through "Octopus Alley" (at around 2:20) using AI. The camera motion through the coral reef was done in Seedance 2.

These steps saved me a LOT of time during the animation process. I post a new video every two weeks, so any step that can save time is helpful. That's why I signed up for this class! I will definitely continue animating the backgrounds using AI. It's subtle, but adds to the overall effect, in my opinion.

I used a range of tools and services to get all these different results, some from my galaxy subscription and some from my Freepik subscription, which honestly uses a lot of the same tools. I probably don't need them both, but I like the flexibility to switch back and forth, and I also like being able to start with a stock image in Freepik and edit it with AI tools. I did that with several of the backgrounds, and used many as style references.
