ANNOUNCING: What's working in AI in 2026 (real projects, real revenue)

Quick news: we're hosting our first virtual event, and the rule is simple. Every person on stage has to show their actual work. The actual projects they're selling. The actual outreach they're using to land clients. The actual numbers behind it. No theory. No tutorials. Just what's working in 2026, taught by the people doing it.

The waitlist is open. Get on it before tickets go live: What's working in AI in 2026 (real projects, real revenue)

PS: Annual AIS+ members get in for free. We'll be announcing discounts for monthly members. If you've been thinking about joining AIS+, now is a good time.

🚀 New Video: Every Level of Claude Explained in 21 Minutes

I've spent over 400 hours inside Claude, and I'm breaking down exactly what separates someone stuck on level 1 from someone running five parallel sessions while they sleep, with the cheat codes to jump between each stage. Hope you enjoy!

Cape Town AI Mastermind: Behind the Scenes

In February, I spent a week in Cape Town, South Africa with some of the top AI entrepreneurs in the space for a mastermind. Hundreds of community members joined us. I met some amazing people and left feeling energized and inspired, which is why I've been uploading almost daily lately, haha! Anyway, I just dropped a behind-the-scenes vlog if you're interested in checking it out. AIS plans to run big events and meetups regularly, so if this trip looked like fun, stay tuned for future events!

Day 5 of the AIS Challenge! 🚀

I officially built and deployed my business website for the very first time! It was a deep dive into the end-to-end process, and learning it from scratch has been a total game-changer.

Here's the live website: www.ofall.in

- The Workflow: I started by using Claude Design and my brand's design system to map out all the pages. Once the visuals were set, I handed everything over to Claude Code for the heavy lifting: fine-tuning the code and implementing changes. Finally, I pushed the repository to GitHub and deployed it via Vercel using my custom domain from Hostinger.
- The "Aha" Moment: I spent extra time ironing out glitches during the design phase to ensure a smooth launch. Building this way gave me much more control over the final product than a standard template would.
- Top Hack: The Screenshot Loop was a lifesaver. Claude understood the assignment perfectly and fixed visual bugs in one go. For the mobile version, which usually has more layout quirks, I used Puppeteer to take targeted screenshots of the issues. Feeding those back to Claude let it diagnose and fix the mobile responsiveness issues right away.

It feels incredible to have a live site built with such a powerful AI-driven stack! #AISChallenge

160 job leads before my first cup of coffee

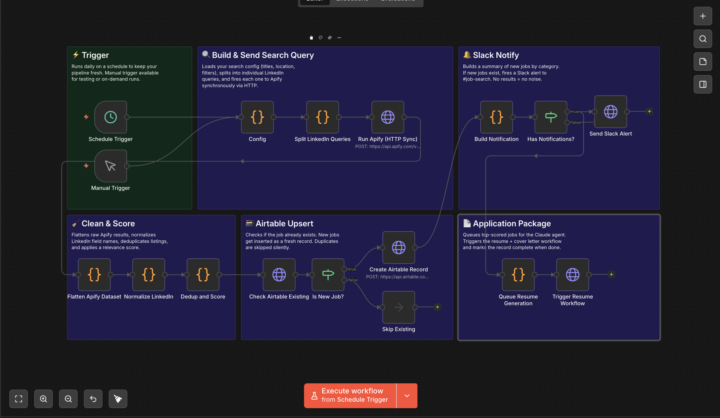

I got back on the job market last week. By day 2 I was already annoyed with the process, so I did what any of us would do and automated it. Sharing the full stack here because I think a few of you will appreciate the build.

🔧 WHAT I BUILT
A fully automated job-hunting pipeline that runs every morning and feeds me a curated, scored, deduplicated list of fresh job leads before I touch my keyboard.

⚙️ THE STACK
• n8n: orchestrates the entire workflow (schedule trigger, plus a manual trigger for testing)
• Apify: a custom LinkedIn scraper actor I built myself (more on this below)
• Airtable: job database with scoring, dedup logic, and status tracking
• Claude (Anthropic): reviews listings, scores them, and builds application packages
• Slack: notifies me by category when new jobs land, so I can review them

🔄 THE FLOW
1. n8n fires on a schedule across 4 search categories (engineer, architect, leadership, executive).
2. It calls my custom Apify actor to scrape LinkedIn job results.
3. It flattens and normalizes the dataset, deduplicates it, and scores relevance.
4. It checks Airtable: new jobs get inserted, existing ones get skipped.
5. A Slack alert fires per category: "New architect jobs added (58)"
6. The Claude agent picks up the queue, reviews each listing, scores it, and builds a tailored application package.
7. The Airtable field Application_Package_Created flips to true when done.

🎭 THE ACTOR
This is the part I'm most excited to share. I built my own LinkedIn job scraper actor on Apify, not a wrapper around an existing one. It's tuned specifically for job search queries, with clean output that maps directly into the n8n normalization layer. I'm publishing it to the Apify marketplace tomorrow alongside the GitHub repo.

📦 WHAT'S DROPPING TOMORROW
• n8n workflow JSON (import and go)
• Custom Apify actor (public on the marketplace)
• Airtable base schema
• Claude prompt chain
• Full setup docs

⚠️ Note on Airtable: the free tier caps at 1,000 records. That's fine to start, but you'll hit it fast if you're running multiple search categories daily. I'm building a MongoDB version this weekend, dropping Monday for anyone who wants to run this at scale without the limit.
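The workflow JSON and prompt chain aren't included in this post, so here's a minimal Python sketch of what flow steps 3 to 5 (normalize, dedupe, score, skip known jobs, per-category alert) could look like outside n8n. It is not the author's code. Everything specific in it is an assumption: the raw field names (`id`, `title`, `company`, `url`), the hypothetical `CATEGORY_KEYWORDS` lists used for a naive keyword score, and the in-memory `existing_ids` set standing in for the Airtable lookup.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Iterable

# Hypothetical keyword lists per search category. The post names the four
# categories but not the terms used for relevance scoring.
CATEGORY_KEYWORDS = {
    "engineer": ["engineer", "developer"],
    "architect": ["architect", "solutions"],
    "leadership": ["manager", "lead", "head of"],
    "executive": ["director", "vp", "chief"],
}


@dataclass(frozen=True)
class Job:
    job_id: str
    title: str
    company: str
    url: str
    category: str
    score: int


def normalize(raw: dict, category: str) -> Job:
    """Flatten one scraped record into a Job (raw field names are assumed)."""
    title = (raw.get("title") or "").strip()
    lowered = title.lower()
    # Naive relevance score: how many category keywords appear in the title.
    score = sum(kw in lowered for kw in CATEGORY_KEYWORDS[category])
    url = (raw.get("url") or "").split("?")[0]  # drop tracking query params
    return Job(
        job_id=str(raw.get("id") or url).strip(),  # fall back to the URL as an ID
        title=title,
        company=(raw.get("company") or "").strip(),
        url=url,
        category=category,
        score=score,
    )


def dedupe(jobs: Iterable[Job]) -> list[Job]:
    """Keep the first occurrence of each job_id within this run."""
    seen: set[str] = set()
    unique = []
    for job in jobs:
        if job.job_id and job.job_id not in seen:
            seen.add(job.job_id)
            unique.append(job)
    return unique


def split_new(jobs: list[Job], existing_ids: set[str]) -> tuple[list[Job], list[Job]]:
    """Stand-in for the Airtable check: new jobs get inserted, known ones skipped.

    New jobs are returned highest score first.
    """
    new = sorted((j for j in jobs if j.job_id not in existing_ids),
                 key=lambda j: j.score, reverse=True)
    skipped = [j for j in jobs if j.job_id in existing_ids]
    return new, skipped


def slack_messages(new_jobs: list[Job]) -> list[str]:
    """One summary line per category, mirroring the alert quoted in the post."""
    counts = Counter(j.category for j in new_jobs)
    return [f"New {cat} jobs added ({n})" for cat, n in sorted(counts.items())]


if __name__ == "__main__":
    raw = [
        {"id": "1", "title": "Solutions Architect", "company": "Acme",
         "url": "https://example.com/jobs/1?ref=a"},
        {"id": "1", "title": "Solutions Architect", "company": "Acme",
         "url": "https://example.com/jobs/1?ref=b"},
        {"id": "2", "title": "Cloud Architect", "company": "Beta",
         "url": "https://example.com/jobs/2"},
    ]
    jobs = dedupe(normalize(r, "architect") for r in raw)
    new, skipped = split_new(jobs, existing_ids={"2"})
    print(slack_messages(new))  # ['New architect jobs added (1)']
```

Deduping on a stable job ID inside the run, before the store lookup, means a listing that shows up twice in one scrape only costs one record, which matters when you're working against a hard record cap.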

skool.com/ai-automation-society

Learn to get paid for AI solutions, regardless of your background.
