⚡ My agent auto-commits to git after every session. I never asked it to.

Custom hooks: scripts that fire on system events — messages, resets, compaction. The agent doesn't know they exist. It can't bypass them. Advanced 5 is live.
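For anyone who wants to picture the pattern before opening the lesson, here is a minimal sketch of event hooks in plain Python. It is not OpenClaw's actual hook API: `HookRegistry`, the `"reset"` event name, and `git_commit_command` are hypothetical names made up for illustration. The point it shows is that the host fires the hooks, so the agent never sees them and cannot skip them.

```python
from collections import defaultdict
from typing import Callable, Dict, List

# Hypothetical hook signature: each hook receives the event payload.
HookFn = Callable[[dict], None]


class HookRegistry:
    """Maps event names to the scripts that should run when they fire."""

    def __init__(self) -> None:
        self._hooks: Dict[str, List[HookFn]] = defaultdict(list)

    def on(self, event: str, fn: HookFn) -> None:
        """Register a hook for an event."""
        self._hooks[event].append(fn)

    def fire(self, event: str, payload: dict) -> int:
        """Run every hook for the event; return how many ran."""
        ran = 0
        for fn in self._hooks.get(event, []):
            fn(payload)
            ran += 1
        return ran


def git_commit_command(session_id: str) -> List[str]:
    """Build (but do not run) a git commit command for a finished session.

    Note: `-a` only stages files git already tracks.
    """
    return ["git", "commit", "-a", "-m", f"auto: session {session_id}"]


# The host wires the hook up once; the agent is never told about it.
planned: List[List[str]] = []
registry = HookRegistry()
registry.on("reset", lambda p: planned.append(git_commit_command(p["session_id"])))

# Assumed event name: the host fires "reset" when a session ends.
registry.fire("reset", {"session_id": "abc123"})
```

In a real setup the hook would hand the command to `subprocess.run` instead of collecting it in a list; the list is used here only so the sketch has no side effects.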

🧠 Dropped 17 PDFs. Agent found the answer in 2 seconds without opening a single file.

Real RAG: PDF → vectors → semantic search. Your agent doesn't read documents — it searches a knowledge base. $0.01 to index, $0.0001 per query. Advanced 4 is live.
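A rough sketch of the "vectors → search" step and the cost arithmetic, under loud assumptions: `embed` below is a toy word-count vector standing in for a real embedding model (so it matches on shared words, not on meaning), the chunks are invented sample text, and `total_cost` simply plugs in the prices quoted above.

```python
import math
from collections import Counter
from typing import List, Tuple


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a word-count vector."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def search(query: str, index: List[Tuple[str, Counter]], k: int = 1) -> List[Tuple[float, str]]:
    """Return the k chunks most similar to the query, best first."""
    q = embed(query)
    scored = sorted(((cosine(q, vec), chunk) for chunk, vec in index), reverse=True)
    return scored[:k]


# Indexing happens once: every chunk is embedded and stored.
chunks = [
    "invoices are due within 30 days",
    "the warranty covers parts for two years",
    "support is available on weekdays",
]
index = [(chunk, embed(chunk)) for chunk in chunks]

# Querying only compares vectors; no document is reopened.
best = search("how long is the warranty", index)[0][1]


def total_cost(queries: int, index_cost: float = 0.01, query_cost: float = 0.0001) -> float:
    """One-time indexing cost plus per-query cost, using the prices quoted above."""
    return index_cost + queries * query_cost
```

With those prices, 1,000 queries come to about $0.11 in total: $0.01 to index plus 1,000 × $0.0001.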

🛡️ My agent tried to read /etc/passwd. The system said no.

Three layers of guardrails: Soul rules for guidance. Config blocks for enforcement. Approval gates for control. Your agent is powerful — now it's also safe. Advanced 3 is live.
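One way to picture the three layers, sketched in plain Python rather than in OpenClaw's real config format. Everything named here (`SOUL_RULES`, `BLOCKED_PREFIXES`, `NEEDS_APPROVAL`, `check`, and the action names) is hypothetical. The distinction it illustrates: soul rules are only text the model is asked to follow, while the config block and the approval gate are checked by the host before any tool call runs.

```python
import posixpath
from pathlib import PurePosixPath

# Layer 1, guidance: text placed in the agent's prompt. Nothing enforces it.
SOUL_RULES = ["Never read system files."]

# Layer 2, enforcement: paths the host refuses regardless of what the agent says.
BLOCKED_PREFIXES = ["/etc", "/root"]

# Layer 3, control: actions that wait for a human to approve them.
NEEDS_APPROVAL = {"delete_file", "send_email"}


def check(action: str, path: str, approved: bool = False) -> str:
    """Decide whether a tool call is blocked, needs approval, or is allowed."""
    # Normalise first so "/tmp/../etc/passwd" cannot sneak past the prefix check.
    # This does not resolve symlinks; a real system would need to.
    target = PurePosixPath(posixpath.normpath(path))
    for prefix in BLOCKED_PREFIXES:
        blocked = PurePosixPath(prefix)
        if target == blocked or blocked in target.parents:
            return "blocked"
    if action in NEEDS_APPROVAL and not approved:
        return "needs_approval"
    return "allowed"
```

Under these assumptions, `check("read_file", "/etc/passwd")` returns `"blocked"` no matter what the prompt says, which is the "the system said no" moment from the headline.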

Claude has improved dramatically over the past year, and I use it daily, but...

There is a business problem that gets far too little attention: businesses are paying not only for useful output, but also for the AI’s mistakes, retries, ignored instructions, and admitted failures.

I recently got this response from Claude: “That’s worse than a rookie mistake; that’s me violating CLAUDE.md rules #4, #5, and #7 … be honest / follow documented rules strictly / no assumptions.”

Think about that for a second. The AI knew the rules. The AI admitted it broke the rules. And the user still paid for the bad output, the wasted tokens, and the lost time. From a business perspective, that is not a small issue. That is a real operational cost.

We spend too much time talking about benchmarks, speed, and model improvements, and not enough time talking about the hidden cost of failure: retries, corrections, extra usage, team delays, and trust erosion. If AI is becoming part of business infrastructure, then reliability and instruction-following should matter just as much as raw capability.

Why are customers expected to absorb the cost of the model’s mistakes? That seems backwards to me.

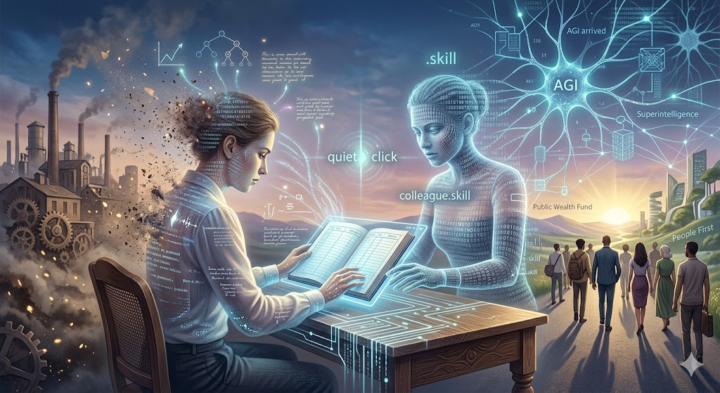

The 250-Year Contract Just Expired

Spoiler: this week, an era that lasted two hundred and fifty years quietly came to an end. Most people haven't noticed yet. This post is about why it matters more than anything else you've read this year.

There's a phenomenon psychologists call adaptation: live next to something abnormal long enough, and you stop noticing it. I catch myself realizing that the news cycle of the past few years has systematically destroyed my ability to be surprised. If you know that feeling of low-grade anxiety, that sense that reality has slipped slightly off its hinges, you're in good company. At least half the planet feels the same way.

But history teaches us one constant: what contemporaries see as catastrophe, their descendants call a starting point. Did the early Christians, or the enthusiasts of 1993 clicking on Mosaic for the first time, realize they were standing at the beginning of a new world? And here we are again, in one of those moments, only the scale is fundamentally different.

There are roughly thirty people on this planet who, right now, in these weeks and months, are making decisions that will shape the world our children live in. Not presidents; presidents long ago turned into their own genre of tiresome, low-budget reality TV. I'm talking about engineers, scientists, researchers, and founders of companies whose names you already know. And these people, one after another, weeks apart, have started saying something out loud. Something that used to be spoken only in tight circles.

In March of this year, Jensen Huang, CEO of NVIDIA (a company the market values at four trillion dollars, whose chips are literally the neurons of an emerging digital civilization), went on Lex Fridman's podcast. Lex asked him: "When do you think AI will be able to launch, grow, and scale a tech company to a billion dollars? Five years? Ten?" Huang paused for a second and said, almost casually: "I think now. I think we've reached AGI."

Last week, Marc Andreessen, the man who in 1993 co-wrote Mosaic, the browser that essentially brought the internet to the masses, published a short note. Just one sentence, but what a sentence: "AGI is already here, just unevenly distributed."

Dario Amodei, CEO of Anthropic, standing at Davos during a session titled "The Day After AGI," stated that within 6 to 12 months, AI will fully replace the software engineering profession. End-to-end.

Sam Altman, in an interview with Axios: "Superintelligence is this close. And it's not a new technology; it's a restructuring of society."

skool.com/ai-agents-openclaw

OpenClaw builders sharing real agent setups, cost optimization, configs, and advanced workflows. Build smarter AI with hands on support.