Owned by Michael

Build real-world AI fluency to confidently learn & apply Artificial Intelligence while navigating the common quirks and growing pains of people + AI.

Memberships

AI Automation Society Plus

3.5k members • $99/month

AI for Life

28 members • $297

59 contributions to AI for Life

When's the last time your in-person meeting actually needed to be in-person?

A 60-minute in-person meeting rarely costs 60 minutes. Five of them a week cost you a full working day of hidden overhead. Every week. Nobody audits that number, so nobody fixes it.

Default to video for execution work. Use in-person strategically.

Most organizations still treat in-person meetings as the standard and video as a fallback. Flip the default for execution-layer work and speed goes up the same week.

The operator case for video as baseline:

1. Meetings cost more than the meeting

A 60-minute in-person meeting is rarely 60 minutes. Add 30 to 90 minutes of travel, buffer time on either side, and the cognitive hit of leaving your workspace. Run the math across a week. Five meetings, two hours of hidden overhead each, ten hours back. A full working day reclaimed every week.

2. Scheduling becomes elastic (with discipline)

Video calls collapse to fit the work. Ten-minute syncs become viable. Reschedules stop cascading into lost half-days. The trap: video defaults can expand meeting volume instead of shrinking duration. Pair the elasticity with a protocol. Default length 15 minutes, agenda required, no agenda no meeting.

3. The cost structure runs deeper than fuel

Surface costs: gas, parking, vehicle wear. Real costs: opportunity cost of lost working hours, meeting room infrastructure, coordination overhead, travel reimbursements. Most of it is invisible on the books. Video removes nearly all of it with no drop in output on execution-layer work.

4. Decision velocity compounds

Pulling stakeholders together takes a calendar invite instead of a commute. Decisions happen in hours instead of days. Fewer blockers. Tighter feedback loops. Competitors still scheduling in-person reviews fall behind on iteration speed alone.

5. Meetings become assets, not ephemera

This is where the operator edge lives. A typical pipeline:

- Fathom or Fireflies records the call.
- Transcript drops into n8n.
- Claude or GPT extracts action items, owners, and deadlines.
- Output writes to Airtable, Notion, or the CRM.
- A Slack DM fires to each owner with their tasks.
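To make the tail end of that pipeline concrete, here is a minimal sketch of the step that sits between extraction and the Slack DM: grouping action items by owner and rendering one message per person. Everything in it is an assumption for illustration. The `ActionItem` schema, the function names, and the sample data are hypothetical, and the actual recording, model call, and Slack delivery are left out.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class ActionItem:
    """One task pulled from a meeting transcript (hypothetical schema)."""
    owner: str
    task: str
    deadline: str  # ISO date string, e.g. "2026-05-01"


def group_by_owner(items):
    """Bucket action items by owner so each person gets a single message."""
    grouped = defaultdict(list)
    for item in items:
        grouped[item.owner].append(item)
    return dict(grouped)


def format_dm(owner, items):
    """Render the plain-text body of a per-owner direct message."""
    lines = [f"Hi {owner}, your action items from today's call:"]
    # Earliest deadline first; ISO dates sort correctly as plain strings.
    for item in sorted(items, key=lambda i: i.deadline):
        lines.append(f"- {item.task} (due {item.deadline})")
    return "\n".join(lines)


# Made-up sample standing in for the model's extraction output.
extracted = [
    ActionItem("Dana", "Send revised proposal", "2026-05-04"),
    ActionItem("Lee", "Update CRM record", "2026-05-02"),
    ActionItem("Dana", "Book follow-up call", "2026-05-01"),
]

messages = {
    owner: format_dm(owner, items)
    for owner, items in group_by_owner(extracted).items()
}

for body in messages.values():
    print(body)
    print()
```

In a real build, each entry in `messages` would be handed to whatever node or client sends the DM; keeping the formatting step as a pure function makes it easy to test without touching Slack.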

Your Claude API call is eating 1.2 seconds. Here is when that stops being acceptable

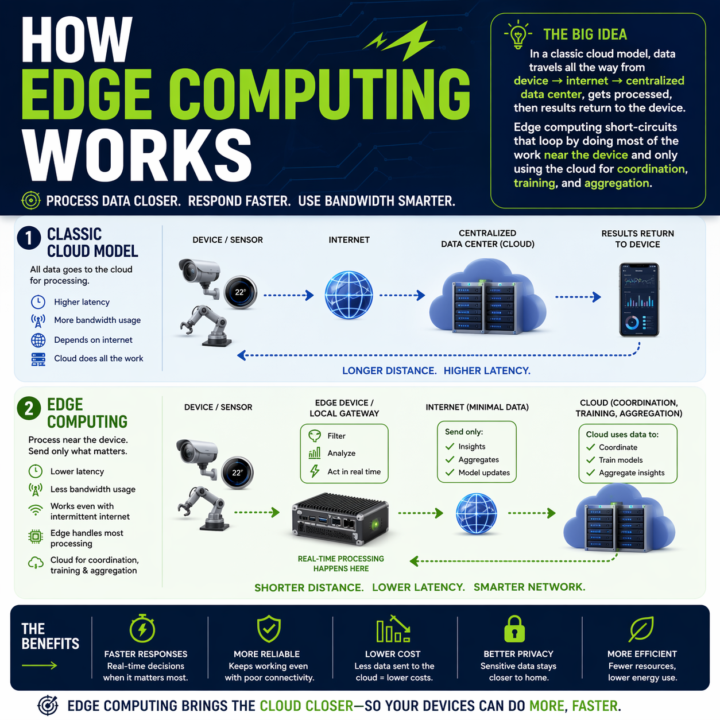

Every automation you build has a round trip baked in. Claude API, OpenAI call, n8n webhook, vector DB lookup. Data leaves the device, travels to a data center, gets processed, comes back. On a good day a cached Claude call runs 600ms to 1.2s. On a bad day you are watching a spinner.

Where that round trip breaks:

- Real-time perception in vehicles or robotics
- Industrial control loops that cannot wait on a network
- Anywhere connectivity is spotty or intermittent
- High-volume sensor data where shipping everything to the cloud burns bandwidth and budget

Edge changes the math. Compute runs where the data is generated. Local work stays local. Cloud only sees what needs cross-site context.

The split that is emerging:

- Edge: real-time inference, event detection, filtering, local control
- Cloud: model training, cross-site analytics, long-term storage, heavy compute

On-device SLMs are usable now. Llama 3.1 8B on an M-series Mac via Ollama hits sub-100ms first token. Haiku-class reasoning is running on phones with NPUs.

If your automation touches physical systems, live audio, live video, or anything latency-sensitive, you have a real placement decision to make: edge, cloud, or hybrid.

The pattern I keep reaching for: route by cost of latency. If a one-second delay breaks the experience, run it local. If the step needs cross-context memory or a frontier model, send it up. Cheap decisions at the edge, expensive ones in the cloud, with the edge doing the filter so the cloud only sees signal.

The architecture question has changed. Not "which cloud model do I call," but "where does each step of this pipeline need to run."

What is running in your stack right now that should not be making a cloud round trip? What is stopping you from moving it?
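One way to make "route by cost of latency" concrete is a small placement function that is run once per pipeline step. This is a sketch under stated assumptions, not a reference design: the `Step` fields, the `place` function, and the 1,200 ms cloud round-trip figure (borrowed from the upper end of the range quoted above) are all illustrative choices.

```python
from dataclasses import dataclass


@dataclass
class Step:
    """One pipeline step and the constraints that drive its placement."""
    name: str
    latency_budget_ms: int      # longest delay before the experience breaks
    needs_cross_context: bool   # needs memory or data from other sites/sessions
    needs_frontier_model: bool  # needs a large cloud-hosted model


# Assumed worst-case cloud round trip; tune this to your own measurements.
CLOUD_ROUND_TRIP_MS = 1200


def place(step: Step) -> str:
    """Return 'edge', 'cloud', or 'hybrid' for a single step."""
    latency_sensitive = step.latency_budget_ms < CLOUD_ROUND_TRIP_MS
    needs_cloud = step.needs_cross_context or step.needs_frontier_model
    if latency_sensitive and needs_cloud:
        # Conflict: respond locally, forward only the filtered signal upstream.
        return "hybrid"
    if latency_sensitive:
        return "edge"
    if needs_cloud:
        return "cloud"
    # No hard constraint either way: keep the cheap decision local.
    return "edge"


# Hypothetical steps for illustration.
steps = [
    Step("wake-word detection", 100, False, False),
    Step("weekly cross-site report", 60_000, True, False),
    Step("live video anomaly triage", 200, True, True),
]

placements = {s.name: place(s) for s in steps}
for name, where in placements.items():
    print(f"{name}: {where}")
```

The useful part is less the function than the inventory it forces: writing down a latency budget and a cloud dependency for every step usually exposes the one or two that should never have been making a round trip.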

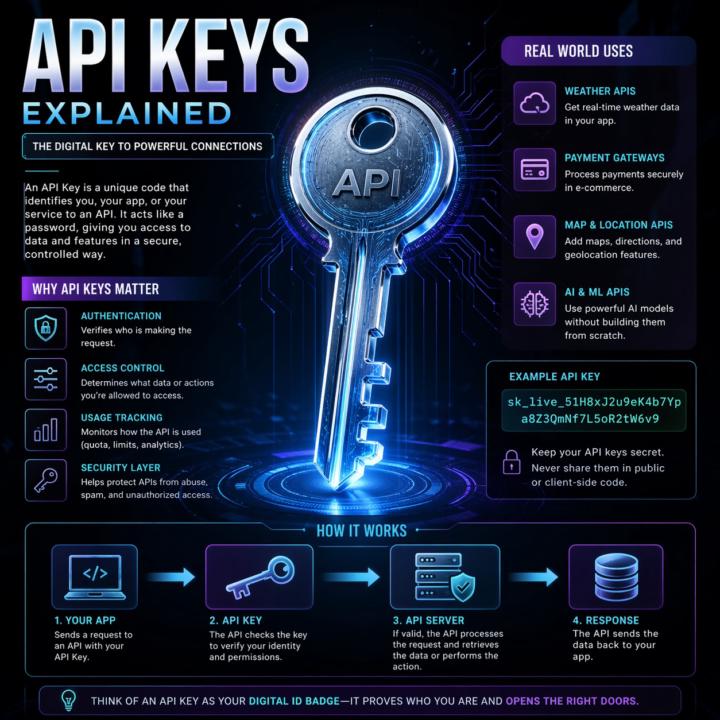

APIs, explained the way I explain them to clients.

Most automation problems I see trace back to a fuzzy mental model of what an API actually is. So here's the frame I use with clients.

An API is a remote control for software. Your app presses a button (sends a request). Another app does something and sends back a result (a response). You don't see how the other app works inside. You just follow the rules printed on the buttons (the docs). That's it. That's the whole concept.

Two analogies that work in client calls:

- Restaurant menu. The menu lists what you can order and how to ask for it. Kitchen is hidden. Meal is the response.
- Light switch. Flip the switch (request). Wiring, grid, power plant are hidden. Light turns on (response).

Same idea either way: clear inputs, clear outputs, hidden complexity.

The actual call pattern:

1. Client asks (your app, browser, script)
2. Request goes out with a URL, a method (GET, POST, etc.), and any data the server needs
3. Server does the thing
4. Response comes back, usually JSON

Break any of those rules and you get an error, not data.

Why this matters for builders:

- Reuse beats rebuild. Use Stripe's API instead of building payments from scratch.
- Complexity stays hidden. You don't need to know how Twitter stores tweets to pull the last 20.
- Access is controlled. APIs decide what's exposed, who can call it, and how often. Security still depends on the implementation, but the boundary exists by design.
- Apps mix APIs like ingredients. Maps, payments, email, auth, all stitched together.

When two pieces of software talk in a structured, agreed way, they're using an API. Every n8n node, every Claude Code tool call, every trigger. All APIs under the hood.

What analogy do you use when a non-technical client asks what an API is? Curious what lands for other builders.

Highly recommended related information: Check out @Michael Wacht's Daily Dose: https://www.skool.com/ai-automation-society-plus/ai-terms-daily-dose-api-use?p=5c08d0bf
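For builders who want to see the four-step call pattern in code, here is a minimal sketch using only Python's standard library. The endpoint `https://api.example.com/v1/orders`, the payload, and the sample response are made up for illustration; `build_request` and `send` are hypothetical helper names. Nothing is sent over the network unless `send()` is called.

```python
import json
import urllib.request


def build_request(base_url, payload):
    """Step 2: a URL, a method, and the data the server needs."""
    body = json.dumps(payload).encode("utf-8")
    return urllib.request.Request(
        url=f"{base_url}/orders",
        data=body,
        method="POST",
        headers={"Content-Type": "application/json"},
    )


def send(request, timeout=10):
    """Steps 3 and 4: the server does the work, then JSON comes back."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


# Step 1: the client decides what to ask for (hypothetical endpoint).
req = build_request("https://api.example.com/v1", {"item": "coffee", "qty": 2})
print(req.get_method(), req.full_url)
print(req.data.decode("utf-8"))

# What a parsed JSON response might look like (made-up sample, not real output).
sample_response = json.loads('{"order_id": 101, "status": "accepted"}')
print(sample_response["status"])
```

This maps onto the restaurant analogy: the URL and method are the menu item, the JSON body is the order, and the parsed response is the meal. A wrong URL, method, or body shape is where the "error, not data" outcome comes from.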

Opus 4.7: 10 things that actually matter

A practitioner read on the April 16, 2026 release. Numbers cited are from Anthropic’s system card or named partner benchmarks.

## 1. Coding is the real jump

SWE-bench Verified 80.8% → 87.6%. SWE-bench Pro 53.4% → 64.3%. CursorBench 58% → 70%. Anthropic’s internal 93-task benchmark reports a 13% lift across the suite. Rakuten’s partner eval claims 3x more production tasks resolved vs 4.6. On multi-file work, fewer back-and-forth loops and more one-shot fixes.

## 2. Agents run shorter and cleaner

Long-running loops reason more before acting. Notion AI reports ~14% improvement on multi-step workflows at one-third the tool errors. Box’s figure: average calls per workflow dropped from 16.3 (4.6) to 7.1 (4.7). Fewer, more decisive steps instead of noisy chatter.

## 3. Vision is finally usable for screenshots

Maximum resolution goes from 1,568px on the long edge (1.15MP) to 2,576px (3.75MP), roughly 3x the pixel count. XBOW visual-acuity 54.5% → 98.5%. OSWorld-Verified computer use 72.7% → 78.0%. This is the change that actually unlocks dense-UI automation, diagram parsing, and screenshot-based QA.

## 4. Still 1M context

Context window and output limits match 4.6. Pipelines built around long documents or extended chains don’t need architectural changes. Self-verification is better, so coherence over long multi-step runs holds up longer.

## 5. Honesty and safety moved the right direction

Reduced hallucinations and sycophancy, tougher against prompt injection. Good for client-facing systems. Note: 4.7 is also more conservative around offensive security work. Anthropic launched a Cyber Verification Program for approved red-team use cases.

## 6. Sharper codebase understanding

CodeRabbit reports more real bugs found, more actionable reviews, and better cross-file reasoning than any model they’ve evaluated. The model builds a more persistent internal map of a repo instead of brute-forcing every file. Claude Code also shipped a new `/ultrareview` command for dedicated review passes.

## 7. New xhigh effort tier

Claude Code just shipped /ultrareview. Here is the practitioner breakdown.

Anthropic dropped a new slash command called /ultrareview in Claude Code v2.1.111, and it quietly changes how I review my own code before I ship it. Here is what it does, when to use it, when to hold back, and the catch most people are glossing over.

What it actually is

/ultrareview runs a full code review in the cloud using parallel reviewer agents while you keep working locally.

- Type /ultrareview with no arguments. It reviews your current branch.
- Type /ultrareview 123. It pulls PR #123 from GitHub and reviews that.

By default it fires up 5 reviewer agents in parallel. Configurable up to 20. Each agent independently scans your diff for real bugs, and the command only surfaces a finding after it has been reproduced and verified. No "you might want to use const" noise. No lint-style nagging. Verified findings only.

When to pull the trigger

Spend a run when the cost of a missed bug is real:

- Payment code
- Auth changes
- Database migrations
- Large refactors touching many files
- Any pre-merge review on a business-critical branch

Do not burn a run on a one-line typo fix. The value lives in wide, high-stakes diffs where a human reviewer would take an hour and still miss something.

The catch

Users are reporting three free runs total on Pro and Max plans. Not three per month. Three, period. After that it meters against your plan. Treat them like good steakhouse reservations. You do not book one to show up and order a side salad.

How I am using it

1. Finish a feature branch.
2. Run my own tests locally.
3. Fire /ultrareview before I open the PR.
4. Read the findings. Fix what matters. Push.
5. Only then ask a human to review.

It does not replace a human reviewer. It does catch the things your eyes stopped seeing three hours ago.

Try it

Update Claude Code to 2.1.113 or later. Inside a git repo with real changes, type /ultrareview. Watch the fleet spin up. Come back in a few minutes.

Feel free to share your initial result in the comments. I’m curious to see what it revealed about the code you deemed clean.

@michael-wacht-9754

AI Bits and Pieces | Learn to Close Deals | Become an AI Standout

Joined Feb 19, 2026

Mid-West United States