
Owned by Joan

🚀 Community for entrepreneurs, CEOs, and marketers who want to master AI and automate their business. Save time, scale, and grow with 🧰 ToolBox!

Memberships

Design Turbo IA

3.9k members • Free

Ineffable.ai — Create with AI

531 members • $14/month

Run By AI

28 members • $27/month

Ninjas AI Automation

2k members • Free

SistemIA

6 members • $100,000

🦎 Camaleones Digitales

190 members • $10,000/month

Chase AI Community

60.2k members • Free

AI Marketing Hub Pro

147 members • $69/m

AI Automations by Jack

2.8k members • $77/month

111 contributions to AI Automation Society

PhD Student Paid Me $1,800 to Cut Literature Review From 120 Hours to 22 Hours 🔥

PhD student facing dissertation deadline in 4 months. Literature review: 6 months behind schedule already.

Required comprehensive review of 200+ academic papers. Extract methodology, findings, limitations from each. Synthesize into coherent narrative demonstrating research gap.

Manual approach: Read each paper carefully (45 minutes average), take detailed notes, extract relevant quotes, log complete citations properly. Estimated total time: 120+ hours minimum for thorough review.

Current progress after 2 months of dedicated work: 34 papers fully reviewed, 166 still remaining. At current pace: 8 additional months needed to complete.

Critical problem: Dissertation defense scheduled in exactly 4 months. Advisor already expressing serious concern about timeline viability.

She paid me $1,800 to build academic paper processing system that could accelerate this dramatically.

System functionality: Upload research paper PDF → Automatically extract key structured terms (title, authors, publication year, methodology type, sample size, key findings, stated limitations) → Generate concise one-paragraph summary → Auto-tag by research method category → Create fully searchable database.

Processing time per paper: 3 minutes average versus 45 minutes manual reading and note-taking.

Implementation timeline: Weekend 1 system development and testing. Weeks 1-3 systematically processed 247 papers (discovered more relevant papers than originally planned during search expansion). Total project time including setup: 22 hours from start to complete database.

Result: Comprehensive literature review completed in 3 weeks instead of projected 8 additional months.

Unexpected powerful benefit: Searchable database enabled sophisticated pattern analysis completely impossible with manual approach. Methodology breakdown became instantly visible: 87 studies used surveys, 34 used interviews, 18 used mixed methods.

Critical research gap identification emerged from simple database queries that would have required weeks of manual cross-referencing and analysis.
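The post does not show how the database was built, so the following is only a minimal sketch of the "structured fields → searchable database → methodology breakdown" idea, assuming SQLite as the store. The `PaperRecord` fields mirror the ones listed in the post; the table name, function names, and any records you load are hypothetical, and the extraction step (PDF to fields) is out of scope here.

```python
import sqlite3
from dataclasses import dataclass, astuple


@dataclass
class PaperRecord:
    # Field list follows the post; the names themselves are assumptions.
    title: str
    authors: str
    year: int
    methodology: str
    sample_size: int
    key_findings: str
    limitations: str


def build_db(records):
    """Load extracted records into an in-memory SQLite table."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE papers (title TEXT, authors TEXT, year INTEGER, "
        "methodology TEXT, sample_size INTEGER, key_findings TEXT, "
        "limitations TEXT)"
    )
    conn.executemany(
        "INSERT INTO papers VALUES (?, ?, ?, ?, ?, ?, ?)",
        [astuple(r) for r in records],
    )
    conn.commit()
    return conn


def methodology_breakdown(conn):
    """Count papers per methodology tag, e.g. surveys vs. interviews."""
    rows = conn.execute(
        "SELECT methodology, COUNT(*) FROM papers GROUP BY methodology"
    ).fetchall()
    return dict(rows)


def search_findings(conn, keyword):
    """Return titles whose key findings mention the keyword."""
    rows = conn.execute(
        "SELECT title FROM papers WHERE key_findings LIKE ? ORDER BY title",
        (f"%{keyword}%",),
    ).fetchall()
    return [title for (title,) in rows]
```

A breakdown like the one quoted in the post would come from a single `methodology_breakdown(conn)` call once every paper has a consistent methodology tag, which is why the auto-tagging step matters more than the summary step for gap analysis.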

🔥

3 likes • 3d

@Duy Bui, the pricing logic here is the part most people miss. You priced against the 98 hours saved, not the cost of running the pipeline. That's the whole game with academic clients.

Two things I'd add from doing similar research-heavy work:

1️⃣ The synthesis step is where the deliverable lives or dies. Extraction is the easy 70%. Mapping the research gap into a coherent narrative is where reviewers will smell automation if you skip the human pass.

2️⃣ Re-selling. One PhD with a strong thesis tends to know 5–10 more in the same lab who are 3 months behind on the same problem. Ask for the warm intro before you deliver the final draft, not after.

How are you handling source-checking when the model paraphrases a methodology section? That's the fail point I keep hitting 🔥

Leads and follow-ups.

I’ve been using an AI automation setup recently that completely changed how I handle leads and follow-ups. Before this, I was missing a lot of potential calls just because I couldn’t consistently follow up.

Now the system I’m using:

- captures leads automatically
- follows up without manual work
- helps turn conversations into booked calls more consistently

It’s not a complicated setup, but it removed a major bottleneck in my process. I broke it down here: https://14lzvlw.atoms.world

Curious: has anyone else here solved follow-up in a better way, or are you still doing it manually?

🔥

0 likes • 3d

@George Jacob, the part most "speed-to-lead" setups get wrong is the second touch, not the first. Sub-5-minute first reply is table stakes now. The booked-call lift comes from what happens at hour 24 and day 4.

What worked for me on a similar build:

1️⃣ First reply within 90s, but make it a real question, not "thanks for your interest."

2️⃣ Day 1 follow-up references something specific the lead said. If you can't, the model isn't ingesting the form properly.

3️⃣ Day 4 sends a one-line proof point (case study, screenshot), no ask. That's where booked calls jump.

What stack are you running it on? Curious whether you're going n8n + a CRM or keeping it inside one platform 💡
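The three-touch cadence described above (90 seconds, hour 24, day 4) can be sketched as a small scheduling table. This is an illustration only, not the stack used in either build: the touch names, `FOLLOW_UP_PLAN`, and both functions are hypothetical, and sending the messages is left to whatever platform you run.

```python
from datetime import datetime, timedelta

# Offsets follow the comment: first reply in 90s, then hour 24, then day 4.
# The touch names are made up for this sketch.
FOLLOW_UP_PLAN = [
    ("first_reply", timedelta(seconds=90)),
    ("day_1_follow_up", timedelta(hours=24)),
    ("day_4_proof_point", timedelta(days=4)),
]


def schedule_touches(lead_created_at):
    """Return (touch name, due time) pairs for one lead."""
    return [(name, lead_created_at + offset) for name, offset in FOLLOW_UP_PLAN]


def due_touches(lead_created_at, now, already_sent):
    """List touches that are due by `now` and have not been sent yet."""
    return [
        name
        for name, when in schedule_touches(lead_created_at)
        if when <= now and name not in already_sent
    ]
```

Keeping the cadence in one table makes it easy to test a different second or third touch without changing the sending logic.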

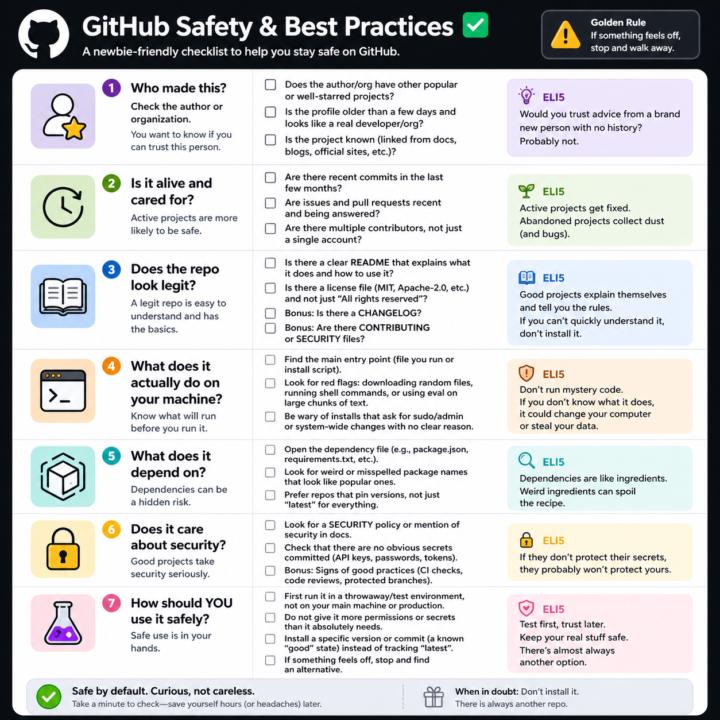

GitHub Safety and Best Practices Checklist

Here’s a simple, newbie‑friendly safety checklist you can run through every time you look at a GitHub repo.

## 1. Who made this?

- Does the author or org have other popular or well‑starred projects?
- Is the profile older than a few days and look like a real developer/org?
- Does the project feel “known” (linked from docs, blogs, official sites, etc.)?

If it’s a totally new account with one flashy repo, be extra careful.

## 2. Is it alive and cared for?

- Are there recent commits in the last few months?
- Are there recent issues and pull requests being answered?
- Do you see multiple contributors, not just a single throwaway account?

Abandoned projects aren’t always bad, but they age poorly from a security angle.

## 3. Does the repo look legit?

- There is a clear README that explains what it does and how to use it.
- There is a license file (MIT, Apache‑2.0, etc.), not just “All rights reserved”.
- Optional but nice: CHANGELOG, CONTRIBUTING, SECURITY files.

If you can’t quickly understand what it does, don’t install it.

## 4. What does it actually do on your machine?

- Find the main entry point (the file you run, or the install script).
- Look for obvious red flags: downloading random files, running shell commands, or calling `eval` on big chunks of text.
- Be wary of “install” steps that ask for sudo/admin or system‑wide changes with no clear reason.

If you don’t understand the install steps, don’t run them yet.

## 5. What does it depend on?

- Open the dependency file (like `package.json` for Node, `requirements.txt` for Python).
- Scan for weird or misspelled package names that look like popular ones.
- Prefer repos that pin versions (not just “latest everything forever”).

If the dependency list looks messy or huge for a simple tool, treat it carefully.

## 6. Does it care about security?

- Look for signs of security features: security policy, mention of security in docs, or badges for scans/CI.
- Check that there are no obvious secrets committed (API keys, passwords in plain text).
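As a rough illustration of the "prefer repos that pin versions" check in step 5, here is a minimal sketch that flags lines in a `requirements.txt` that are not pinned with `==`. The function name and the regex are assumptions made for this example; it ignores option lines such as `-r other.txt`, treats everything after `#` as a comment, and is not a substitute for a real dependency scanner.

```python
import re

# A requirement counts as pinned here only if it looks like "name==version"
# (optionally with extras, e.g. "name[extra]==1.2.3"). This is a deliberate
# simplification of the full requirements file syntax.
PIN_RE = re.compile(r"^[A-Za-z0-9][A-Za-z0-9._-]*(\[[^\]]*\])?\s*==\s*[^\s;]+")


def unpinned_requirements(text):
    """Return requirement lines that do not pin an exact version."""
    flagged = []
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line or line.startswith("-"):  # skip blanks and pip options
            continue
        if not PIN_RE.match(line):
            flagged.append(line)
    return flagged
```

Running it on the text of a repo's `requirements.txt` gives a quick list of entries worth a second look before installing.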

🔥

3 likes • 5d

Good checklist, @Matthew Sutherland. One I would add as point 8: scan the lockfile (package-lock.json / poetry.lock / Cargo.lock), not just the manifest. Most supply-chain attacks land via a transitive dependency that the manifest does not even mention. Tools like `npm audit`, `pip-audit`, `cargo audit` give you that signal in 30 seconds. The post-install scripts comment from @Nigel Vargas is on point too: those are where 80% of the actually malicious code lives. 🔥
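To make the lockfile point concrete, here is a small sketch that lists packages present in an npm `package-lock.json` but not declared as direct dependencies, plus the ones marked as having install scripts. It assumes the `packages` layout used by lockfile versions 2 and 3, where the `""` key is the root project and entries may carry a `hasInstallScript` flag; the function names are made up for this example, and it complements rather than replaces `npm audit`.

```python
import json


def load_lock(path):
    """Read a package-lock.json file into a dict."""
    with open(path, encoding="utf-8") as fh:
        return json.load(fh)


def _name_from_path(path):
    # "node_modules/a/node_modules/@scope/b" -> "@scope/b"
    return path.split("node_modules/")[-1]


def transitive_packages(lock):
    """Map package name -> version for entries the root does not declare."""
    packages = lock.get("packages", {})
    root = packages.get("", {})
    direct = set(root.get("dependencies", {})) | set(root.get("devDependencies", {}))
    found = {}
    for path, meta in packages.items():
        if not path:  # the "" key is the root project itself
            continue
        name = _name_from_path(path)
        if name not in direct:
            found[name] = meta.get("version")
    return found


def packages_with_install_scripts(lock):
    """Names of locked packages flagged as running install scripts."""
    return sorted(
        _name_from_path(path)
        for path, meta in lock.get("packages", {}).items()
        if path and meta.get("hasInstallScript")
    )
```

The first list shows how much of the installed tree never appears in the manifest; the second narrows down where to start reading code, since install scripts run automatically at install time.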

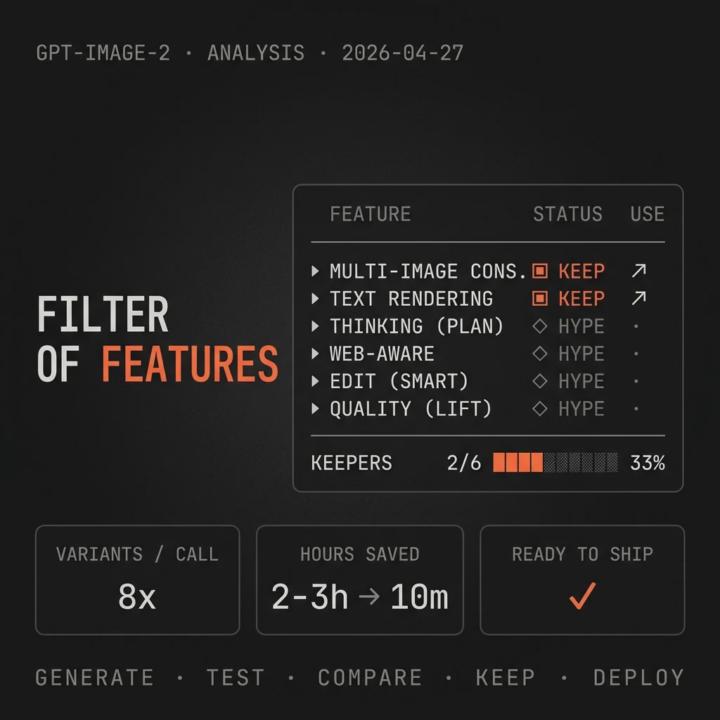

🎨 GPT Image 2: 6 hyped features, only 2 actually move the needle

🎨 GPT Image 2 just dropped. I reviewed the 6 announced features, and only 2 actually change my work.

OpenAI released ChatGPT Images 2.0 yesterday with 6 improvements. Most will stay in viral demos:

- Thinking before generating
- "Web-aware" images
- Higher quality outputs
- Smarter editing

The two that actually move the needle in real marketing work:

1️⃣ Multi-image consistency (up to 8 coherent variants in one call)

Until now, generating 8 visuals for a campaign meant 8 separate prompts, manual adjustments so they kept the same look, and around 2-3 hours of work. This reduces that cycle to about 10 minutes. For A/B testing creatives in ads, it is a transformation.

2️⃣ Serious text rendering (posters, slides, ads, infographics)

The biggest limitation of previous models was text: either it rendered poorly, or you needed Photoshop afterwards. If this delivers on the promise, we can generate final social cards without going through editing.

This week I am going to test both for:

- Blog hero image series (30+ posts holding the same brand language)
- Social card variants for LinkedIn ads

What feature are you testing first? 🔥


Question for those doing client automations!

How are your clients actually getting contacted by their customers? Every time I try to come up with new automation ideas, it all keeps collapsing into the same thing, Instagram and Facebook DMs as the main entry point. (Cuz that's what SMBs use the most right?) Is that just reality right now? Are most client workflows basically built around social media messaging? I was expecting more cases with website forms, funnels, inbound leads through landing pages, etc… but I’m barely running into those. Am I missing something, or is the market really this DM-heavy? Would appreciate real-world insights.

🔥

0 likes • 6d

In SMB you are right @Aleksa Igic — Instagram and Facebook messaging wins because that is where the customer already is. But it flips by industry: B2B / SaaS / professional services lean form-first because the buyer wants to compare before talking. In SEO/GEO automation for B2B clients we see ~70% inbound via website forms. What kind of clients are you mostly building for? 🚀


🔥

@joan-marquez-5213

🚀 Co-founder of 🧰 Tool Box | AI, Automation, and Growth 📈 | We turn manual processes into intelligent systems.

Active 13m ago

Joined Aug 15, 2025

Madrid
