
Owned by Guerin

Master AI use cases from legal & the supply chain to digital marketing & SEO. Agents, analysis, content creation--Burstiness & Perplexity from NovCog

Memberships

The Great AI Shift

3.4k members • Free

7 Figure Visionary Mastermind

195 members • $17/month

AI SEO | Rank & Rent Lead Gen

1.5k members • Free

Vibe Coder

420 members • Free

AI Money Lab

69.3k members • Free

Turboware - Skunk.Tech

31 members • Free

Ai Automation Vault

15.3k members • Free

AI Automation Society

333.7k members • Free

CribOps

51 members • $39/m

72 contributions to Burstiness and Perplexity

Opus 4.7 drops against backdrop of criticism

Anthropic shipped Opus 4.7 under pressure after weeks of community revolt over perceived performance regression in 4.6, including a viral GitHub post from an AMD senior director calling Claude "no longer reliable for complex engineering." The community reception on launch day was notably skeptical despite strong partner testimonials, with Hacker News commenters essentially saying "we'll believe it when we see it."

Key model card deltas to know:

- Knowledge cutoff jumped from May 2025 → January 2026
- Vision resolution tripled: 1.25MP → 3.75MP
- New xhigh effort tier (Opus 4.7 exclusive), now the default in Claude Code
- Extended thinking budgets removed: a hard API break
- Sampling parameters removed: another hard break that will catch people off-guard
- New tokenizer inflates effective token usage up to 35% despite unchanged nominal pricing

The Mythos shadow is the meta-narrative: Anthropic publicly concedes Opus 4.7 trails the unreleased Mythos Preview on every benchmark measured, and explicitly designed 4.7 as a safeguard testbed before any public Mythos rollout under Project Glasswing. That's a remarkable admission to lead a flagship release with.

Developer Reception on Launch Day

Despite pre-release skepticism, early-access partner testimonials (covering Cursor, Devin, Replit, Notion, Vercel, Ramp, and others) were uniformly strong. Aggregated themes from partner feedback:

- Coding autonomy: Multiple partners reported 10–15% task-success lifts with fewer tool errors and more reliable follow-through on validation steps.
- Self-correction: Opus 4.7 was consistently praised for catching its own logical faults during planning, not just execution, a behavior change developers called "new".
- Long-horizon reliability: Devin's team noted it "works coherently for hours, pushes through hard problems rather than giving up". Warp confirmed it "passed Terminal Bench tasks that prior Claude models had failed, and worked through a tricky concurrency bug Opus 4.6 couldn't crack".
- Dashboard and UI work: One early tester called it "the best model in the world for building dashboards and data-rich interfaces", a direct reference to the improved vision and creativity.

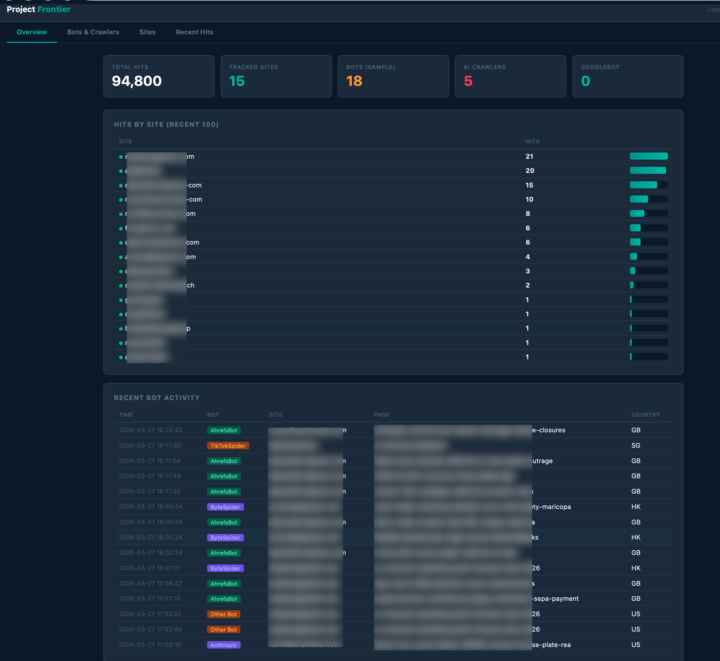

a learning content automation system

I built an automated content generation system that runs 24/7 on a Mac Mini in my house. No n8n. No Make. No Docker. No external orchestration dependencies. Pure Python, stdlib, launchd. It publishes across 18 sites daily. Every article is quality-scored against AP Style rubrics before it goes live.

Here's the part most automation builders skip: the scoring model had a bias problem. GPT-4.1-mini's safety training bleeds into quality scoring. Political content (elections, protests, international conflict) gets reflexively penalized 3-4 out of 10 regardless of actual writing quality. The fix was chain-of-thought scoring: force the model to reason about specific criteria (headline accuracy, factual coherence, structure, tone) before outputting a score. That eliminated the topic-sensitivity reflex entirely.

The quality gate rejects anything below 5.0/10. What passes gets a hero image generated via Fal.ai, publishes through the WordPress REST API, and distributes to Bluesky, Telegram, and Tumblr, all with viral scoring that tiers articles into boost, standard, or skip. Cost: $0.92/day. Budget-capped at $2/day, $10/week.

But the content generation is only half the system. Every article embeds a 1x1 tracking pixel from a Cloudflare Worker. That pixel tells me exactly which AI crawlers are ingesting the content and when. Within hours of publishing, I can see GPTBot, ClaudeBot, ByteSpider, Meta's external agent, all hitting the content. Not guessing. Measuring.

Last week we deployed a 10-article interlinked content series across the network. 500 pixel hits in the first window. Breakdown: 14% GPTBot, 10% Meta, 4% ByteSpider, 2% ClaudeBot, 42% human readers. The content entered at least four major AI training pipelines within hours of publishing.

The system improves daily without intervention. Quality scores trend upward because the rubric catches what the model misses.
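For anyone who wants to try the chain-of-thought scoring idea, here is a minimal sketch of how a criteria-first prompt and the 5.0 gate might fit together. It is not the actual code behind the system described here: the function names, the prompt wording, and the `SCORE:` reply format are all assumptions made for illustration, and the model call itself is left out.

```python
import re
from typing import Optional

# The four criteria and the 5.0 threshold come from the post; everything else is hypothetical.
CRITERIA = ["headline accuracy", "factual coherence", "structure", "tone"]
QUALITY_THRESHOLD = 5.0


def build_scoring_prompt(article_text: str) -> str:
    """Ask for per-criterion reasoning first and the numeric score only at the end."""
    steps = "\n".join(
        f"{i}. Assess {name} and cite specific evidence from the article."
        for i, name in enumerate(CRITERIA, start=1)
    )
    return (
        "Rate this article against AP Style on a 0-10 scale.\n"
        "Judge the writing, not the topic.\n"
        f"{steps}\n"
        "Only after all four assessments, end with one line: SCORE: <number>\n\n"
        f"ARTICLE:\n{article_text}"
    )


def parse_score(model_reply: str) -> Optional[float]:
    """Return the last 'SCORE: x' value in the reply, or None if missing or out of range."""
    matches = re.findall(
        r"^SCORE:\s*(\d+(?:\.\d+)?)\s*$", model_reply, flags=re.MULTILINE
    )
    if not matches:
        return None
    score = float(matches[-1])
    return score if 0.0 <= score <= 10.0 else None


def passes_quality_gate(model_reply: str) -> bool:
    """Reject replies with no usable score, and anything below the threshold."""
    score = parse_score(model_reply)
    return score is not None and score >= QUALITY_THRESHOLD
```

Treating a missing or malformed score as a rejection is a design choice in this sketch: it fails closed, so a garbled model reply never publishes by accident.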
Publishing cadence stays natural with randomized 13-23 minute intervals — no fixed pattern for crawlers to fingerprint. Every run logs to a SQLite database. A daily email report hits my inbox at 7:03am with per-site metrics, quality trends, cost tracking, and pixel data.
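The randomized cadence, SQLite run log, and daily budget cap could look roughly like the stdlib-only sketch below. The table layout, column names, and helper functions are hypothetical; only the 13-23 minute window and the $2/day cap come from the post.

```python
import random
import sqlite3

MIN_GAP_MINUTES = 13      # from the post
MAX_GAP_MINUTES = 23      # from the post
DAILY_BUDGET_USD = 2.00   # from the post


def next_gap_seconds(rng: random.Random) -> float:
    """Pick a delay uniformly between 13 and 23 minutes, in seconds."""
    return rng.uniform(MIN_GAP_MINUTES * 60, MAX_GAP_MINUTES * 60)


def open_run_log(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) the run log. The schema is an assumption for illustration."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS runs (
               id INTEGER PRIMARY KEY,
               site TEXT NOT NULL,
               quality_score REAL,
               cost_usd REAL NOT NULL,
               published INTEGER NOT NULL,
               logged_at TEXT NOT NULL DEFAULT (datetime('now'))
           )"""
    )
    return conn


def log_run(conn: sqlite3.Connection, site: str, quality_score: float,
            cost_usd: float, published: bool) -> None:
    """Record one pipeline run; the 'with' block commits on success."""
    with conn:
        conn.execute(
            "INSERT INTO runs (site, quality_score, cost_usd, published) "
            "VALUES (?, ?, ?, ?)",
            (site, quality_score, cost_usd, int(published)),
        )


def spend_today(conn: sqlite3.Connection) -> float:
    """Sum today's logged cost (UTC dates, matching SQLite's datetime('now'))."""
    row = conn.execute(
        "SELECT COALESCE(SUM(cost_usd), 0.0) FROM runs "
        "WHERE date(logged_at) = date('now')"
    ).fetchone()
    return row[0]


def within_daily_budget(conn: sqlite3.Connection, next_cost_usd: float) -> bool:
    """True if one more run of the given cost would stay under the daily cap."""
    return spend_today(conn) + next_cost_usd <= DAILY_BUDGET_USD
```

In a launchd-driven setup, a job might call `within_daily_budget` before each run and sleep for `next_gap_seconds(random.Random())` between publishes; that wiring is left out here.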

Your own ground truth

What gets measured gets managed.

This pixel system, which is devoted to tracking AI bots across entity graphs, is a gold mine of insights. It's not just a silly dashboard: two AI models independently review mine three times a day, looking for trends, deltas, and opportunity gaps.

There's no substitute for having your own independent ground truth data system at this moment. So build one. It can be run for free, or for $5/month for nearly unlimited data (thanks, CF).

If you need the cookbook, it's in the Hidden State Drift Mastermind (hiddenstatedrift[.]com). If you need one built for you, hit me up and we can talk about it.
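If you want a starting point for your own version, here is a small sketch of the analysis side: bucketing logged pixel hits by crawler and computing each source's share. It assumes the pixel endpoint already stores each request's User-Agent header. The substrings below are tokens these crawlers are commonly reported to send, so treat them as assumptions and check them against your own logs. The function names are hypothetical.

```python
from collections import Counter
from typing import Dict, Iterable

# Lowercased User-Agent substrings mapped to report labels (assumed; verify in your logs).
CRAWLER_TOKENS = {
    "gptbot": "GPTBot",
    "claudebot": "ClaudeBot",
    "bytespider": "ByteSpider",
    "meta-externalagent": "Meta",
}


def classify_user_agent(user_agent: str) -> str:
    """Return the crawler label for a User-Agent string, or 'other' if none match."""
    lowered = user_agent.lower()
    for token, label in CRAWLER_TOKENS.items():
        if token in lowered:
            return label
    # 'other' lumps together human browsers and any bot not listed above.
    return "other"


def share_by_source(user_agents: Iterable[str]) -> Dict[str, float]:
    """Percentage of hits per label, rounded to one decimal place."""
    counts = Counter(classify_user_agent(ua) for ua in user_agents)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {label: round(100 * n / total, 1) for label, n in counts.items()}
```

Substring matching on the User-Agent is easy to spoof, so it works as a first-pass signal. Separating real readers from unlisted bots inside the "other" bucket needs more than this sketch does.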


welcome to the Burstiness and Perplexity community

Our mission is to create a true learning community where an exploration of AI, tools, agents, and use cases can merge with thoughtful conversations about implications and fundamental ideas.

To get a deeper overview of this Skool, click on the Classroom tab above and enter the Welcome Classroom.

If you are joining, please consider engaging, not just lurking. Tell us about yourself, where you are in your life journey, and how tech and AI intersect with it.

For updates on research, models, and use cases, click on the Classroom tab and then find the Bleeding Edge Classroom.

1400+ APIs screened

There's an App for it. (2012)
There's an API for it. (2026)

Scan the list and see what you can integrate into your automations or workflows.

Need a roadmap? hiddenstatedrift.com


@guerin-green-9848

Novel Cognition, Burstiness and Perplexity. Former print newspaperman, public opinion & market research and general arbiter of trouble, great & small.

Active 22h ago

Joined Jan 20, 2025

Colorado
