
Owned by Diane

Under Construction

Memberships

Scaling Founders AI

10 members • Free

AI Automation Society

349.3k members • Free

AI for Life

28 members • $297

Synthesizer: Free Skool Growth

41.3k members • Free

Brand Sharks

501 members • Free

AI Automation Society Plus

3.5k members • $99/month

Skoolers

193.1k members • Free

🇺🇸 Skool IRL: Texas

432 members • Free

ACQ VANTAGE

912 members • $1,000/month

45 contributions to AI for Life

Claude Code Pro User Workflow

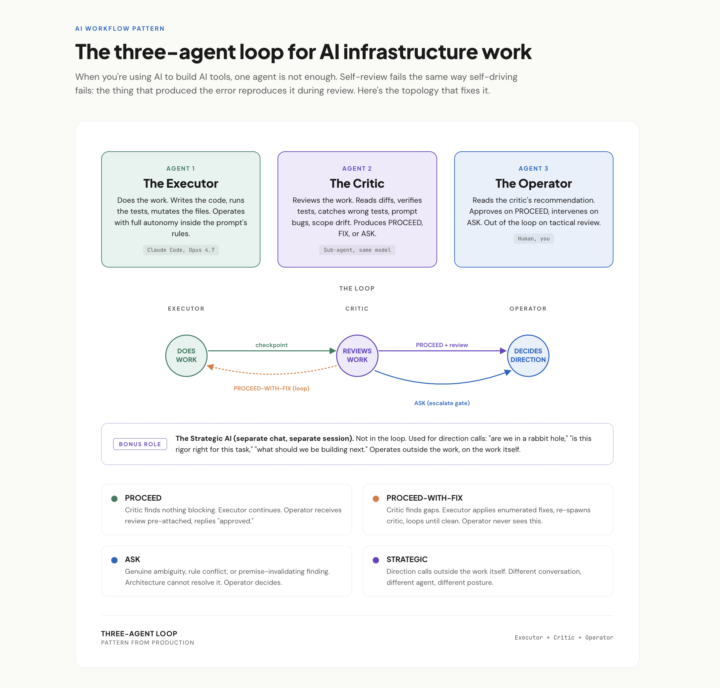

*Confidential. Use, but do not share.* Thank you!

Pattern from a real session this week. When you're using AI to build AI tools, one agent is not enough. Self-review fails the same way self-driving fails: the thing that produced the error reproduces it during review.

The fix is three roles, not two:

- The Executor does the work. Full autonomy inside clear rules. Stops only when it genuinely can't decide.
- The Critic reviews the work. Same model, different posture: read, verify, find what's wrong, recommend PROCEED, FIX, or ASK.
- The Operator (you) reads the critic's recommendation, not the raw work. Approves on PROCEED. Intervenes on ASK. Stays out of the loop on tactical review.

Bonus fourth role I didn't have a name for until this week: a Strategic AI in a separate chat, used for direction calls outside the work ("Are we in a rabbit hole?" "Is this level of rigor right?"). It operates on the work itself, not in it.

The unlock isn't capability. It's posture. Same model, three system prompts, three different jobs.
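One way to picture the Executor / Critic / Operator split is as a small control loop. This is a minimal sketch, not the author's actual setup: it assumes a generic `model(system_prompt, message)` function standing in for whatever chat API you use (no real SDK is called), and the prompt wording, `run_task`, `parse_review`, and the fix-round cap are hypothetical. The point it illustrates is the posture change: one model, two system prompts, and an Operator who only ever sees the Critic's verdict.

```python
import re
from dataclasses import dataclass
from enum import Enum
from typing import Callable

class Verdict(Enum):
    PROCEED = "PROCEED"
    FIX = "FIX"
    ASK = "ASK"

# Hypothetical system prompts: same model, different posture.
EXECUTOR_PROMPT = ("You are the Executor. Do the work autonomously within the rules. "
                   "Stop only if you genuinely cannot decide.")
CRITIC_PROMPT = ("You are the Critic. Read and verify the work, find what is wrong, "
                 "and end with exactly one verdict: PROCEED, FIX, or ASK.")

# Assumed interface: any function (system_prompt, message) -> reply text.
Model = Callable[[str, str], str]

@dataclass
class Review:
    verdict: Verdict
    notes: str

def parse_review(reply: str) -> Review:
    """Use the last uppercase verdict keyword in the Critic's reply; escalate (ASK) if none."""
    found = re.findall(r"\b(PROCEED|FIX|ASK)\b", reply)
    return Review(Verdict[found[-1]] if found else Verdict.ASK, reply)

def run_task(task: str, model: Model, max_fix_rounds: int = 3) -> tuple[str, Review]:
    """Executor works, Critic reviews; loop on FIX, stop on PROCEED or ASK."""
    work = model(EXECUTOR_PROMPT, task)
    review = parse_review(model(CRITIC_PROMPT, f"Task:\n{task}\n\nWork:\n{work}"))
    rounds = 0
    while review.verdict is Verdict.FIX and rounds < max_fix_rounds:
        work = model(EXECUTOR_PROMPT,
                     f"Task:\n{task}\n\nCritic notes:\n{review.notes}\n\nPrevious work:\n{work}")
        review = parse_review(model(CRITIC_PROMPT, f"Task:\n{task}\n\nWork:\n{work}"))
        rounds += 1
    if review.verdict is Verdict.FIX:
        # Fix budget exhausted: hand the decision to the Operator instead of looping forever.
        review = Review(Verdict.ASK, review.notes + "\n(Fix budget exhausted; escalating.)")
    return work, review

def operator_step(review: Review) -> str:
    """The Operator reads the Critic's recommendation, never the raw work."""
    if review.verdict is Verdict.PROCEED:
        return "approved"
    return "needs human input: " + review.notes

def fake_model(system_prompt: str, message: str) -> str:
    """Stand-in model for a dry run: first draft gets FIX, revised draft gets PROCEED."""
    if system_prompt == CRITIC_PROMPT:
        return "Verified; tests pass. PROCEED" if "draft v2" in message else "Off-by-one in loop bound. FIX"
    return "draft v2" if "Critic notes" in message else "draft v1"

if __name__ == "__main__":
    work, review = run_task("Fix the pagination bug", fake_model)
    print(work, review.verdict.name, operator_step(review))
```

Escalating to ASK when the fix budget runs out is a design choice in this sketch: it keeps the Executor and Critic from ping-ponging indefinitely and routes stuck cases to the human, which matches the "intervenes on ASK" rule.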

GSD 2.0: Read This Before You Pay for Tokens

There's a new tool making the rounds called GSD 2.0 (launched in early March 2026). The pitch sounds incredible: type one command, walk away, come back to a built project. Autonomous coding agent. Crash recovery. Cost tracking. The works.

I went deep on it this week. Here's the part the hype tweets are skipping.

## What GSD 2.0 actually is

The original GSD was a clever set of prompts you'd plug into Claude Code. It worked, but it was a prompt layer on top of Claude Code.

GSD 2.0 is a different beast. It's a standalone application that runs the AI agent itself. You don't run it inside Claude Code anymore; you run it instead of Claude Code. That's the headline change, and it's the detail getting lost in the excitement.

It manages git branches for you. It splits work into chunks small enough to fit in one conversation window. It retries when things break, recovers from crashes, and tracks every dollar you spend. On paper, it's serious engineering.

## Where the cost actually hits

GSD 2.0 charges you per token, the same way you'd pay if you used the raw Anthropic API.

If you have a Claude Max subscription (the $100 or $200 a month flat-rate plan that powers Claude Code), you already get very heavy usage for one fixed price. Developers have publicly reported racking up five figures' worth of API-equivalent usage in months that cost them $200 on Max. GSD 2.0 doesn't use your Max subscription. It bills directly to the API.

It gets worse. GSD 2.0 is structurally more expensive per task than Claude Code, by design. It throws away the conversation history between tasks to keep things "clean." That sounds great until you learn that reusing conversation history is exactly what makes Max so cheap. With Max, you pay nothing extra to reuse context. With GSD 2.0, you pay full price for it again on every single task.

## Real numbers

A small project on GSD 2.0 will cost you somewhere between $15 and $50 in API charges. A medium project, $40 to $150. A gnarly one with retries and crashes, several hundred dollars. None of that is covered by your Max plan.
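To see why throwing away history between tasks costs more, here is a rough back-of-envelope model. It is a sketch under stated assumptions, not GSD 2.0's billing logic and not Anthropic's price list: every price is a hypothetical placeholder, `Prices` and `api_cost` are illustrative names, and "reused context" is modeled as a cheaper per-token rate (set it to 0 to mimic "nothing extra" on a flat-rate plan). Each task needs the same shared project context; the only difference between the two runs is whether that context is re-sent at full price every time.

```python
from dataclasses import dataclass

@dataclass
class Prices:
    # Hypothetical USD prices per million tokens; substitute current published rates.
    input_per_mtok: float
    output_per_mtok: float
    reused_input_per_mtok: float  # assumed discounted rate for context reused across tasks

def api_cost(tasks: int, context_tokens: int, new_input_tokens: int,
             output_tokens: int, prices: Prices, reuse_context: bool) -> float:
    """Estimate total USD cost over `tasks` tasks.

    Every task sends the shared project context plus its own new input and output.
    With reuse, the context is billed at the discounted rate after the first task;
    without reuse (fresh history per task), it is billed at the full input rate every time.
    """
    per_m = 1_000_000
    total = 0.0
    for i in range(tasks):
        ctx_rate = prices.reused_input_per_mtok if (reuse_context and i > 0) else prices.input_per_mtok
        total += context_tokens * ctx_rate / per_m
        total += new_input_tokens * prices.input_per_mtok / per_m
        total += output_tokens * prices.output_per_mtok / per_m
    return total

if __name__ == "__main__":
    p = Prices(input_per_mtok=5.0, output_per_mtok=25.0, reused_input_per_mtok=0.5)  # placeholders
    fresh = api_cost(20, 100_000, 5_000, 10_000, p, reuse_context=False)
    reused = api_cost(20, 100_000, 5_000, 10_000, p, reuse_context=True)
    print(f"fresh context every task: ${fresh:.2f}  reused context: ${reused:.2f}")
```

With these made-up numbers the fresh-context run costs more than twice as much, and the gap grows with the size of the shared context and the number of tasks. Retries and crash recovery add more full-price tasks on top.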

Opus 4.7: 10 things that actually matter

A practitioner read on the April 16, 2026 release. Numbers cited are from Anthropic’s system card or named partner benchmarks.

## 1. Coding is the real jump

SWE-bench Verified 80.8% → 87.6%. SWE-bench Pro 53.4% → 64.3%. CursorBench 58% → 70%. Anthropic’s internal 93-task benchmark reports a 13% lift across the suite. Rakuten’s partner eval claims 3x more production tasks resolved vs 4.6. On multi-file work, fewer back-and-forth loops and more one-shot fixes.

## 2. Agents run shorter and cleaner

Long-running loops reason more before acting. Notion AI reports ~14% improvement on multi-step workflows with one-third the tool errors. Box’s figure: average calls per workflow dropped from 16.3 (4.6) to 7.1 (4.7). Fewer, more decisive steps instead of noisy chatter.

## 3. Vision is finally usable for screenshots

Maximum resolution on the long edge goes from 1,568px (1.15MP) to 2,576px (3.75MP), roughly 3x the pixel count. XBOW visual-acuity 54.5% → 98.5%. OSWorld-Verified computer use 72.7% → 78.0%. This is the change that actually unlocks dense-UI automation, diagram parsing, and screenshot-based QA.

## 4. Still 1M context

Context window and output limits match 4.6. Pipelines built around long documents or extended chains don’t need architectural changes. Self-verification is better, so coherence over long multi-step runs holds up longer.

## 5. Honesty and safety moved in the right direction

Reduced hallucinations and sycophancy, tougher against prompt injection. Good for client-facing systems. Note: 4.7 is also more conservative around offensive security work. Anthropic launched a Cyber Verification Program for approved red-team use cases.

## 6. Sharper codebase understanding

CodeRabbit reports more real bugs found, more actionable reviews, and better cross-file reasoning than any model they’ve evaluated. The model builds a more persistent internal map of a repo instead of brute-forcing every file. Claude Code also shipped a new `/ultrareview` command for dedicated review passes.

## 7. New xhigh effort tier



Joined Feb 20, 2026

Texas