Activity

[Contribution heatmap, Apr to Mar]


Memberships

Agent Zero

2.4k members • Free

19 contributions to Agent Zero

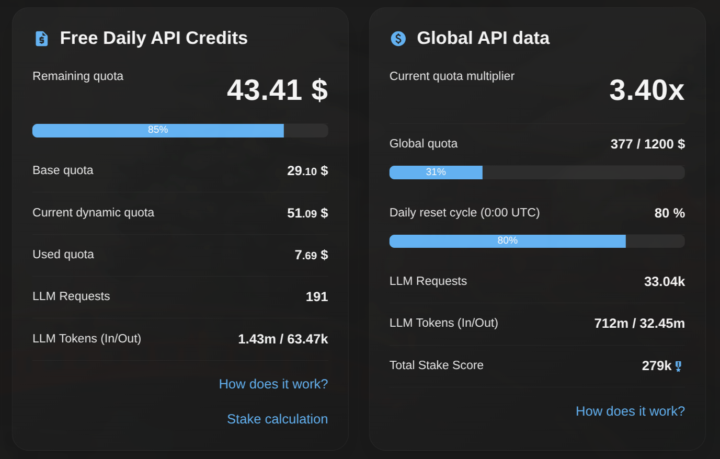

A0T multiplier issue, plus adding to the Venice pool

Hi folks, I’ve been staking A0T pretty much since the beginning to access Venice AI models, an epic feature of A0! That said, for the first time I am seeing the multiplier not add up to the dynamic quota: at the time of the screenshot the multiplier was 3.4x, but the dynamic quota was less than 2x. Any idea what might be happening?

Also, @Jan Tomášek, is there any plan to put more of the pool fees toward the A0T pool to increase the total compute available as more and more people join? I have noticed that as more people join, my balance keeps falling, which seems like a disadvantage to OG stakers who have been staking since the beginning.

I understand that locking up gives a higher score, and I have locked up A0T for various periods, some until 2028, because I have faith in the project and the team. I also use this compute daily to run many aspects of my business and personal life.

Much respect; these are just some honest questions from a long-time A0 fan and staker. Thanks!
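
The post does not say how the dynamic quota is computed. One possible explanation for both observations, offered purely as an assumption and not as the documented A0T or Venice formula, is a weighted pro-rata split of a fixed daily pool: the lock-up multiplier raises your weight, but it also raises the total weight, so a 3.4x multiplier yields less than 3.4x the credits, and every new staker shrinks everyone's share. The function `weighted_share`, the pool size, and all stake figures below are hypothetical.

```python
# HYPOTHETICAL model: a fixed daily credit pool split pro rata by
# (stake x lock-up multiplier). This is NOT a confirmed A0T/Venice formula.

def weighted_share(stake: float, multiplier: float,
                   others_weighted: float, daily_pool: float) -> float:
    """Return this staker's slice of daily_pool under a weighted pro-rata split."""
    mine = stake * multiplier
    return daily_pool * mine / (mine + others_weighted)


POOL = 1000.0   # hypothetical daily credit pool
OTHERS = 900.0  # hypothetical combined weighted stake of everyone else

base = weighted_share(100.0, 1.0, OTHERS, POOL)                # no lock-up boost
boosted = weighted_share(100.0, 3.4, OTHERS, POOL)             # 3.4x multiplier
diluted = weighted_share(100.0, 3.4, OTHERS + 1000.0, POOL)    # more stakers joined

# The multiplier also grows the denominator, so the effective boost is below 3.4x.
print(f"effective boost: {boosted / base:.2f}x")
print(f"boosted share: {boosted:.2f}, after new stakers: {diluted:.2f}")
```

Under this assumed model, a shrinking balance for long-time stakers would be expected whenever the pool stays fixed while total weighted stake grows; only a larger pool (for example, from added fees) would offset it.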

Memory issue for 0.98

litellm.exceptions.BadRequestError: litellm.BadRequestError: Lm_studioException - Error code: 400 - {'error': 'Context size has been exceeded.'}
Traceback (most recent call last):
  File "/opt/venv-a0/lib/python3.12/site-packages/litellm/llms/openai/openai.py", line 823, in acompletion
    headers, response = await self.make_openai_chat_completion_request(
                        ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "/opt/venv-a0/lib/python3.12/site-packages/litellm/litellm_core_utils/logging_utils.py", line 190, in async_wrapper
    result = await func(*args, **kwargs)
             ^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "/opt/venv-a0/lib/python3.12/site-packages/litellm/llms/openai/openai.py", line 454, in make_openai_chat_completion_request
    raise e
  File "/opt/venv-a0/lib/python3.12/site-packages/litellm/llms/openai/openai.py", line 436, in make_openai_chat_completion_request
    await openai_aclient.chat.completions.with_raw_response.create(
  File "/opt/venv-a0/lib/python3.12/site-packages/openai/_legacy_response.py", line 381, in wrapped
    return cast(LegacyAPIResponse[R], await func(*args, **kwargs))
                                      ^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "/opt/venv-a0/lib/python3.12/site-packages/openai/resources/chat/completions/completions.py", line 2589, in create
    return await self._post(
           ^^^^^^^^^^^^^^^^^
  File "/opt/venv-a0/lib/python3.12/site-packages/openai/_base_client.py", line 1794, in post
    return await self.request(cast_to, opts, stream=stream, stream_cls=stream_cls)
           ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
  File "/opt/venv-a0/lib/python3.12/site-packages/openai/_base_client.py", line 1594, in request
    raise self._make_status_error_from_response(err.response) from None
openai.BadRequestError: Error code: 400 - {'error': 'Context size has been exceeded.'}
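
This 400 error is raised by LM Studio when the prompt sent to the model is longer than the context length the model was loaded with. Loading the model with a larger context length in LM Studio is one option; another is to trim the chat history to a token budget before each request. The sketch below shows the trimming approach under stated assumptions: `estimate_tokens`, `trim_history`, the 4-characters-per-token heuristic, and the 512-token reply reserve are all hypothetical and are not Agent Zero's actual memory handling.

```python
# Minimal sketch: keep a chat history under a model's context length.
# ASSUMPTIONS: ~4 characters per token (real counts depend on the tokenizer)
# and a hypothetical 512-token reserve for the model's reply.

def estimate_tokens(text: str) -> int:
    """Very rough token estimate: about 4 characters per token."""
    return max(1, len(text) // 4)


def trim_history(messages: list[dict[str, str]], max_tokens: int,
                 reserve_for_reply: int = 512) -> list[dict[str, str]]:
    """Keep a leading system prompt plus the newest messages that fit."""
    budget = max_tokens - reserve_for_reply
    has_system = bool(messages) and messages[0]["role"] == "system"
    system = messages[:1] if has_system else []
    rest = messages[1:] if has_system else messages
    used = sum(estimate_tokens(m["content"]) for m in system)
    kept: list[dict[str, str]] = []
    for msg in reversed(rest):  # walk from newest to oldest
        cost = estimate_tokens(msg["content"])
        if used + cost > budget:
            break  # this message and everything older are dropped
        kept.append(msg)
        used += cost
    return system + kept[::-1]  # restore chronological order


history = [{"role": "system", "content": "You are a helpful agent."}]
history += [{"role": "user", "content": "x" * 4000} for _ in range(10)]
trimmed = trim_history(history, max_tokens=4096)
print(len(history), "->", len(trimmed))
```

Dropping the oldest turns first keeps the most recent context, which is usually what the agent needs next; a summarizing step could replace the dropped turns instead of discarding them.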

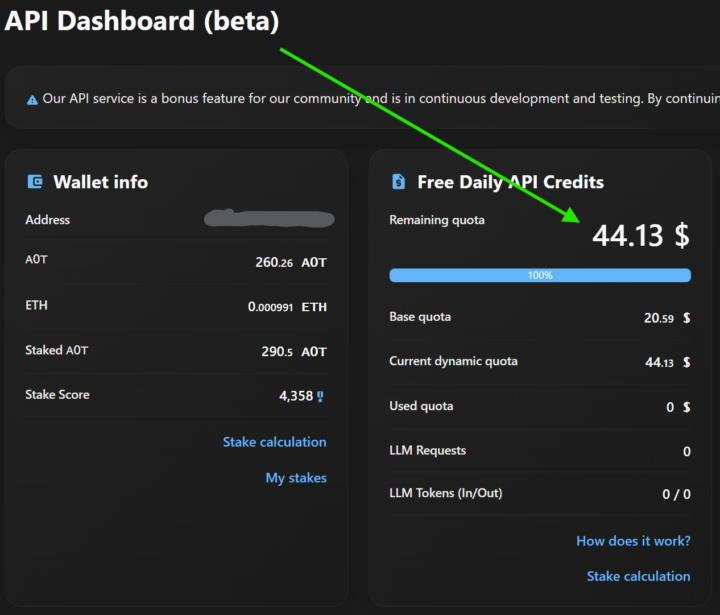

Agent Zero Utility Token

So I’ve been building with Agent Zero for a few weeks now… and somehow completely missed that it has its own utility token 🤯

💰 Picked up $600 worth of A0T
🔒 Staked it
⚡ Now receiving $44.13/day in FREE API credits. Yes, EVERY DAY!

Unbelievable… So in about two weeks I’ll have paid off my investment! I can now use any model via API key through venice.ai, paid for by staking. No subscriptions. No juggling billing dashboards. Just daily credits flowing in to run workflows, agents, automations: whatever you want to throw at it.

Absolutely blown away by this project!! A decentralized agent ❤️ Let’s go, Agent Zero.
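
The "about two weeks" payback figure follows from dividing the outlay by the daily credit value. The sketch below checks that arithmetic, assuming the $44.13/day rate stays constant and the credits are valued at face value; `payback_days` is a hypothetical helper, and changes in the token price or in the daily rate are not modeled.

```python
import math


def payback_days(investment_usd: float, daily_credits_usd: float) -> float:
    """Days until cumulative credits equal the initial outlay (constant rate assumed)."""
    return investment_usd / daily_credits_usd


days = payback_days(600.0, 44.13)
print(f"{days:.1f} days (about {math.ceil(days)} full days)")  # 13.6 days (about 14 full days)
```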

Model Configs

Why doesn’t A0 have separate model configs for vision, audio, video, code, etc.? Relying on an expensive multimodal model like Opus is unfortunate. Please explain.
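
What the question asks for amounts to routing each task type to its own model and falling back to a single default model when no dedicated one is configured. A minimal sketch of such a config, where `ModelRouter` and every model name are hypothetical placeholders rather than Agent Zero's actual settings schema:

```python
from dataclasses import dataclass, field


@dataclass
class ModelRouter:
    """HYPOTHETICAL per-modality model config with a default fallback."""
    default: str
    by_modality: dict[str, str] = field(default_factory=dict)

    def model_for(self, modality: str) -> str:
        # Use the dedicated model if one is configured, else the default.
        return self.by_modality.get(modality, self.default)


router = ModelRouter(
    default="expensive-multimodal-model",  # placeholder name
    by_modality={
        "vision": "cheap-vision-model",    # placeholder name
        "code": "cheap-code-model",        # placeholder name
    },
)
print(router.model_for("vision"))  # cheap-vision-model
print(router.model_for("audio"))   # expensive-multimodal-model
```

With a fallback like this, only the modalities that actually need a cheaper or specialized model have to be configured; everything else keeps working through the default.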

Newbie

Question: can I run this on my Android phone or iPhone, and if so, how? Thanks!


Active 20d ago

Joined Sep 5, 2024

Bohemia
