Memberships

AI Automation Agency Hub

316.4k members • Free

Clief Notes

29.2k members • Free

35 contributions to Clief Notes

Folder structure do-loop

The Issue

I'm struggling with folder structure, specifically where to put different projects within a workspace or workflow.

Background

I needed to build a presentation to guide a workshop. The outputs of that workshop then drove a second presentation to roll out a new governance model for a type of Project Management Office (PMO). I did this using concepts I've learned, but before I had a solid understanding of folder structure. Here's what I ended up with:

Current Folder Structure

    Company-Name/                                          ← Client/customer folder
    ├── drafts/
    ├── resources/
    │   └── Company-Name - Template.potx                   ← PowerPoint template
    ├── IMO_Governance_System/                             ← Project 1
    │   ├── CONTEXT.md
    │   ├── Governance_Model.md                            ← Brain dump of current thinking
    │   ├── Phases.md                                      ← 5 phases of the to-be model (reference)
    │   └── drafts/
    │       ├── Escalation_Methodology_Infographic.html    ← Draft slide output
    │       ├── Opportunity_Management_Infographic.html    ← Draft slide output
    │       ├── IMO_Governance_Workshop.pptx               ← Workshop deck (draft)
    │       ├── IMO_Governance_Workshop_as_presented.pptx  ← Workshop deck (final)
    │       ├── IMO_Governance_Deck.pptx                   ← Final report-out deliverable
    │       └── build_deck.py
    └── Other-Project/                                     ← Completely separate project, same client
        ├── Final-output-document.docx
        ├── Background-document.docx
        ├── Background-email.eml
        └── drafts/
            ├── Draft.docx
            └── Draft.md

One top-level company folder with shared elements (drafts, resources) and one subfolder per project, so everything related to a project lives together.

Proposed To-Be Structure

Using a content creation workflow model as a reference, I'm wondering if projects should be broken apart across workflow stages rather than kept together:

    Company-Name/
    ├── CLAUDE.md                          ← Always loaded
    ├── CONTEXT.md                         ← Task router
    │
    ├── writing-room/
    │   ├── CONTEXT.md
    │   ├── drafts/
    │   │   ├── IMO_Governance_System/     ← Project 1 working files
    │   │   └── Other-Project/             ← Project 2 working files
    │   └── final/
    │       ├── IMO_Governance_System/     ← Project 1 finished writing
    │       └── Other-Project/             ← Project 2 finished writing
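
To make the stage-first layout easier to try out, here is a minimal Python sketch that creates the visible part of the proposed tree in a temporary directory. The folder and file names come from the post; the helper name `scaffold_workspace` and the empty placeholder files are assumptions for illustration, and anything past the visible part of the tree is left out.

```python
from pathlib import Path
import tempfile

# Names taken from the proposed tree in the post.
PROJECTS = ["IMO_Governance_System", "Other-Project"]
STAGES = ["drafts", "final"]


def scaffold_workspace(root: Path) -> list[Path]:
    """Create the stage-first layout under root (hypothetical helper).

    Returns the per-project folders that were created, in stage order.
    """
    root.mkdir(parents=True, exist_ok=True)
    (root / "CLAUDE.md").touch()    # always-loaded file, left empty here
    (root / "CONTEXT.md").touch()   # task router, left empty here

    room = root / "writing-room"
    room.mkdir(exist_ok=True)
    (room / "CONTEXT.md").touch()

    created = []
    for stage in STAGES:
        for project in PROJECTS:
            folder = room / stage / project
            folder.mkdir(parents=True, exist_ok=True)
            created.append(folder)
    return created


if __name__ == "__main__":
    # Build the tree in a throwaway directory and list what was made.
    with tempfile.TemporaryDirectory() as tmp:
        for folder in scaffold_workspace(Path(tmp) / "Company-Name"):
            print(folder.relative_to(tmp))
```

Building it in a scratch directory first makes it cheap to compare against the project-first layout before moving any real client files.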

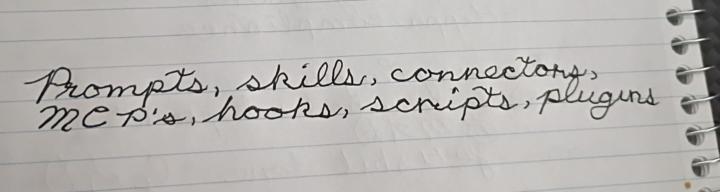

Just Dropped - Secret Code Behind Better Results

Most people think they have an AI problem. What they actually have is a packaging problem. You don’t need to become a developer to use AI effectively. This guide breaks down the real “code” behind better results: prompts, skills, connectors, MCPs, hooks, scripts, and plugins — and shows you exactly when (and why) to use each one. Check it out in Davids Corner: https://www.skool.com/cliefnotes/classroom/c7f102c7?md=9a0d198e1c0140578f37f1c93873c2b6

🧪 New benchmark out

New benchmark out of Meta FAIR, Stanford, and Harvard called ProgramBench.

The setup: you get a compiled executable plus its docs. Source code stripped. Rebuild the program from scratch in any language you want. Tests check input/output behavior against the original binary. 200 tasks, from small CLI tools up to FFmpeg, SQLite, and the PHP interpreter.

📊 Results across 9 models: Zero tasks fully solved. Opus 4.7 was the best, passing 95% of tests on only 3% of tasks. GPT 5.4, Gemini 3.1 Pro, and Haiku 4.5 hit 0% in that bucket.

The interesting part is section 5. Even the model solutions that "worked" looked nothing like the human reference. Median 1,173 lines vs 3,068 in the original. Flat directories. Fewer functions, each one longer. GPT 5.4 wrote 96% of its final code in a single turn on most tasks and never modified existing files on roughly 40% of runs.

🎯 Why it matters for us: The benchmark separates writing code from designing software. Models can produce syntax all day. They cannot yet decompose a real system into coherent modules, pick the right abstractions, or organize a codebase the way a working engineer would. That gap is what computational orchestration points at. It is also where the durable value lives.

🛠 Try it: Pick an easier task from the repo (the paper flags nnn, fzf, gron, and jq as more tractable). Run it against Claude or your model of choice. Watch where you and the model split. Note the design decisions you make that the model never even raises. Post your runs and attempts to create a harness that would allow the model to do it. Wins, failures, weird outputs, all of it.

📍 Paper and Repo: ProgramBench

I'm building something on top of this right now. More soon.
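
For anyone attempting the harness idea, here is a rough Python sketch of the input/output comparison the post describes: run the original and the rebuild on the same inputs and count how often they agree. This is not ProgramBench's actual harness. The function names, the case format, and the two Python one-liners standing in for a real binary and its rebuild are all assumptions for illustration.

```python
import subprocess
import sys


def run(cmd: list[str], stdin_text: str) -> tuple[int, str]:
    """Run a command with the given stdin; return (exit code, stdout)."""
    proc = subprocess.run(
        cmd, input=stdin_text, capture_output=True, text=True, timeout=10
    )
    return proc.returncode, proc.stdout


def compare(
    reference: list[str],
    candidate: list[str],
    cases: list[tuple[list[str], str]],
) -> float:
    """Return the fraction of cases where the candidate matches the reference.

    Each case is (extra command-line args, stdin text). A case passes only
    when both the exit code and stdout are identical.
    """
    if not cases:
        return 0.0
    passed = sum(
        run(reference + args, stdin_text) == run(candidate + args, stdin_text)
        for args, stdin_text in cases
    )
    return passed / len(cases)


if __name__ == "__main__":
    # Stand-ins only: swap in the original binary and your rebuilt program.
    upper = "import sys; print(sys.stdin.read().upper(), end='')"
    reference_cmd = [sys.executable, "-c", upper]
    candidate_cmd = [sys.executable, "-c", upper]
    cases = [([], "hello\n"), ([], "jq and gron\n"), ([], "")]
    print(f"pass rate: {compare(reference_cmd, candidate_cmd, cases):.0%}")
```

Comparing only exit code and stdout is the simplest possible check; a fuller harness would likely also look at stderr and at files the program writes.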

I know where you're coming from. I grasped parts, but not the whole. So, I copied and pasted the post into ChatGPT and asked it to explain it to me like I'm in 7th grade. This is what I got back:

It means this: Researchers gave AI models a really hard coding test. They gave the AI:

- a working computer program
- the instructions for how the program is supposed to work
- but not the original code

Then they said: Now rebuild this program from scratch. The AI could write the new program in any coding language it wanted. The goal was not to copy the old code. The goal was to make a new program that worked the same way.

But the AI models did not do very well. None of them completely solved the tasks. Some models got parts right, but even when the program kind of worked, the code was not organized like a real programmer would usually organize it.

A human programmer might build a project like this:

- one file for commands
- one file for settings
- one file for testing
- one file for helpers
- clear sections
- small pieces that work together

But the AI often did something more like this:

- put almost everything in one big file
- write long messy sections
- skip planning
- try to do the whole thing in one giant answer
- not go back and improve much

So the big lesson is: AI can write code, but it does not always know how to design a whole software project well.

It is kind of like this: A student might know how to write good sentences, but that does not mean they know how to organize an entire research paper with an introduction, sections, evidence, transitions, and a conclusion.

What Do You Put In Your Database?

First post here, so be gentle, lol. I'm having a hard time wrapping my head around what kind of data you can, or should, put into your database for your AI memory. I've been messing around with computers, and by extension data manipulation, since the 70s. I have a good understanding of how a relational database runs. But we're not building that kind of ecosystem(?), are we? We can go bigger.

Before I dug deeper, I always pictured an LLM like ChatGPT as having this huge, massive brain that held all of the Internet, and when I asked, it would wave its virtual hands to say "Here it is". I know that's incorrect.

I'm using AI as a research assistant and junior co-writer. I'm doing a non-fiction book on what kind of skills you'll need to make money in the next 20 years. I'm doing a ton of digging for trends and possibilities and...all of you have a good idea of what that means, I'm sure. I went into this thinking it would be more like a wiki compiled from all my research and conclusions, but is that what I want? Seems like there's so much more than just a glorified book list. I do want to have a folder-style system with the full transcripts, complete articles, or other important documentation that I need. That's doable too.

But recently, I wanted to start at least collecting the base data. If I don't start doing that, I'm just digging my hole deeper. So I laid down a basic schema, and then asked GPT to pull me 5-6 highlights that it thought should go into the database. Once I get the workflow built, I'm pretty sure I'll try to automate it, so as I research, the AI formats and stores any highlighted data I come across. It pulled those six, then another three from our discussions on the first six, and we were in a side chat at the time; the main chat was on markdown files. We got 10 entries from that. So I had nineteen.

I haven't done any more, but when I look at what I have, I get this weird vibe that the majority of them aren't on the book subject, but are more about how the LLM views the way I work? That's a poor description of it, but I hope it works.
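
One way to check that vibe is to tag each entry with what it is about and then count. The post does not show the actual schema, so the Python sketch below is purely an assumption: the `highlights` table, its columns, the two `kind` values, and the helper names are all hypothetical.

```python
import sqlite3
from typing import Optional


def open_memory_db(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) a small highlights database. Hypothetical schema."""
    conn = sqlite3.connect(path)
    conn.execute(
        """
        CREATE TABLE IF NOT EXISTS highlights (
            id          INTEGER PRIMARY KEY,
            kind        TEXT NOT NULL
                        CHECK (kind IN ('book_topic', 'working_style')),
            summary     TEXT NOT NULL,
            source_path TEXT  -- optional pointer to a transcript or article
        )
        """
    )
    return conn


def add_highlight(
    conn: sqlite3.Connection,
    kind: str,
    summary: str,
    source_path: Optional[str] = None,
) -> int:
    """Insert one highlight and return its row id."""
    cur = conn.execute(
        "INSERT INTO highlights (kind, summary, source_path) VALUES (?, ?, ?)",
        (kind, summary, source_path),
    )
    conn.commit()
    return cur.lastrowid


def count_by_kind(conn: sqlite3.Connection) -> dict[str, int]:
    """Count entries per kind, to see what the database is mostly about."""
    rows = conn.execute("SELECT kind, COUNT(*) FROM highlights GROUP BY kind")
    return dict(rows.fetchall())


if __name__ == "__main__":
    conn = open_memory_db()
    # Made-up entries for illustration only.
    add_highlight(conn, "book_topic", "Example trend note", "articles/example.md")
    add_highlight(conn, "working_style", "Example note about research habits")
    print(count_by_kind(conn))
```

If most rows land under the working-style label, that would back up the impression that the entries describe the workflow more than the book subject.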

I'm understanding about 5% of what I see here 😄

Still, I'm new here. So hopefully in a month or so I will get to 10%. Anyone else feeling the same?

@carla-bosteder-7722

M.Ed., Developing apps and other digital products.

Active 18h ago

Joined Apr 22, 2026

TX