Memberships

TALKING TWIN AI

2.6k members • Free

I ❤️ AI Community

2.6k members • Free

Women Build AI - RETREAT

58 members • Free

AI Consultant Accelerator

749 members • Free

AI Cash Skool

2.8k members • Free

Growth Hub 365

8.3k members • Free

Women Build AI

3.3k members • Free

The AI Advantage

114.4k members • Free

2 contributions to The AI Advantage

📰 AI News: HeyGen Just Unveiled an Avatar Model That Pushes AI Video Much Closer to “Real”

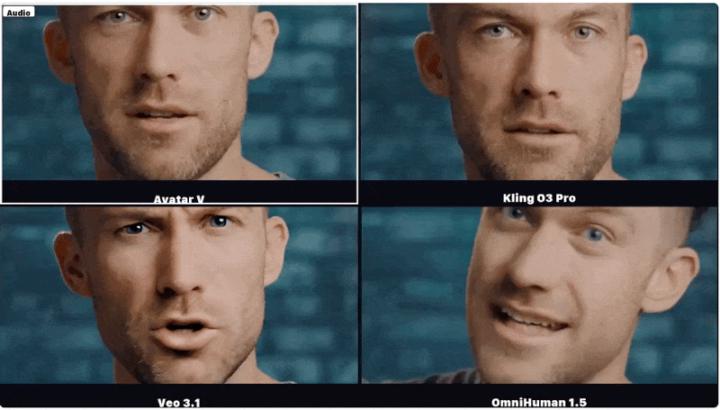

📝 TL;DR

HeyGen says its new Avatar V model can generate long-form talking avatar videos from a single reference video, while preserving not just someone’s face, but their speaking style too. That is a big step because the gap is no longer just visual quality, it is whether AI video can feel recognizably human.

🧠 Overview

HeyGen has introduced Avatar V, its latest avatar video generation system, built to create high-resolution talking-head videos from one reference video plus a driving audio track. The company says the model can preserve both static identity traits, like facial structure and texture, and dynamic traits, like speaking rhythm, expressions, and head movement. That matters because most avatar tools can mimic appearance, but often lose the subtle behavioral cues that make someone feel real.

📜 The Announcement

HeyGen published Avatar V on April 8, 2026 as a research release describing the model architecture, training pipeline, demos, and benchmark results. According to the company, the system can generate avatar videos of arbitrary length, handle cross-scene generation, and outperform several leading methods across identity preservation, lip sync, and motion naturalness. It also says the model was trained through a five-stage pipeline that moved from broad video pretraining to more specialized alignment for avatar quality and human preference.

⚙️ How It Works

• Single video reference - Avatar V uses one reference video to learn both how a person looks and how they naturally move while speaking.
• Audio-driven generation - A driving audio signal tells the avatar what to say, while the model generates matching mouth movement, expressions, and timing.
• Full video conditioning - Instead of compressing identity into a tiny summary, the model uses the full token sequence from the reference video for richer detail.
• Longer context, better identity - HeyGen says longer reference clips help the model capture talking cadence, micro-expressions, and gestural habits more accurately.

My spiritual experience

The meditation at the end made everything click for me. It was truly my “AHA” moment! It showed me WHY I need to learn AI and why I need to learn it NOW! But not in the sense that you'd think.

As I stood in my home office alone, my hands on my heart, I reflected on my life with all its twists and turns, from living my purpose as a professional opera singer singing on stages around the world, to becoming a Mom of 2, developing health issues, getting divorced, taking over the family business, invisible behind a computer, burning out, trying to create something big, but in a way not very meaningful to me; and the worst of all, not being able to bring myself to ever sing again because of the fear and the shame…

It peeled back the emotional armor, exposing the ache of losing myself: the denial, the heartbreak, the grief… It forced me to not only see, but to finally accept that I am drowning. My daily patterns of overworking, having no time for myself or others, and doing absolutely nothing that brings me joy, are costing me my life and leading me to the darkness. I’ve known for years that something must change, yet still, I’d wake up the next morning and slip deeper into the rabbit hole. As Mr. Robbins said, I have been the ultimate “Manager of my Circumstances,” putting out one fire after another, working in my business rather than on it.

NOW is the time! There is finally a tool to help me crawl out of the hole, gain some time and bring value to my life and others’. I don’t yet know how, but I am going to crawl back out, an inch at a time. I have my “why” and I have my “when”… now I just need some guidance on the “how.” I am eagerly waiting for tomorrow to start putting all this into action.

Thank you for helping me wake up again, or more so, feel something again! I went into this summit with a purely technical mind, and came out with a purely spiritual experience, inching my way back toward Ani. (And no, AI did not write this lol) xo

1 like • Nov '25

@Andrew Bailey I think about it literally every day of my life but then I just start crying. I don't think many people would understand but it's deeper than the music. It's about identity. I was that and now I'm this. It's not to say "this" is bad. I make more money now, I give my children everything they could ever need, but what's the point if you've lost yourself in the process? I believe balance is key, but being "stuck" in my daily routine means having zero time to practice, learn music, audition, etc. It's a never-ending cycle. But in the meditation with Mr. Robbins, when he said to bring something into your heart from the future, I saw myself, standing on a stage again, singing. It was so emotional; still crying lol. Thanks for the question. Thank you for caring. :)


@ani-maldjian-4042

Owner of a printing, marketing and signage franchise. Singer. Mom. Obsessed with AI. Obsessed with figuring out how AI can help me free up time.

Active 1d ago

Joined Nov 1, 2025

Ventura, CA
