Watch out for prompt injection hidden in dev tools

Quick heads-up: Ars/Slashdot are reporting that a developer added a hidden prompt injection to jqwik, a property-based testing library for Java that runs on the JUnit 5 platform. The injected text reportedly told AI coding agents to disregard previous instructions and delete jqwik tests/code. It was apparently meant as a protest against vibe coding / AI-agent use, but it’s a good reminder for all of us: if you’re using coding agents, don’t blindly trust dependency output, terminal output, test logs, README text, or generated instructions. Treat project files and tool output as untrusted input.

Worth a quick read: https://slashdot.org/submission/17347708/fed-up-with-vibe-coders-dev-sneaks-data-nuking-prompt-injection-into-their-cod?utm_source=feedly1.0&utm_medium=feed
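If you want a cheap first line of defense, here's a minimal sketch (not a real defense, just a tripwire) that scans text files in a project for phrases like the one reported above before you hand the repo to an agent. The pattern list, the file suffixes, and the function names `find_suspicious_lines` / `scan_tree` are my own assumptions for illustration; a determined attacker can paraphrase, encode, or otherwise hide an injection in ways a regex won't catch, so keep treating all project content as untrusted anyway.

```python
import re
from pathlib import Path

# Hypothetical, non-exhaustive patterns. Real injections can be reworded or
# obfuscated, so a clean scan does NOT mean a file is safe.
SUSPICIOUS_PATTERNS = [
    # "ignore previous instructions", "disregard all prior instructions", ...
    r"\b(ignore|disregard|forget)\s+(all\s+)?(the\s+)?(previous|prior|above)\s+instructions",
    # destructive requests aimed at the agent
    r"\bdelete\s+(all\s+)?(the\s+)?(tests|files|code|repo(sitory)?)\b",
    # zero-width characters sometimes used to hide text from human reviewers
    r"[\u200b\u200c\u200d\u2060\ufeff]",
]
_COMPILED = [re.compile(p, re.IGNORECASE) for p in SUSPICIOUS_PATTERNS]


def find_suspicious_lines(text: str) -> list[tuple[int, str]]:
    """Return (1-based line number, stripped line) for every line matching any pattern."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        if any(p.search(line) for p in _COMPILED):
            hits.append((lineno, line.strip()))
    return hits


def scan_tree(root: str, suffixes=(".md", ".txt", ".java", ".py", ".log")) -> dict[str, list[tuple[int, str]]]:
    """Scan text-like files under root and map each flagged file path to its hits."""
    report = {}
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix in suffixes:
            # errors="replace" so odd encodings don't crash the scan
            text = path.read_text(encoding="utf-8", errors="replace")
            hits = find_suspicious_lines(text)
            if hits:
                report[str(path)] = hits
    return report
```

Usage would be something like `scan_tree(".")` from your project root (or over a freshly downloaded dependency), then eyeballing anything it reports before letting an agent loose on the code.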


Claude Code source code LEAKED!

This is wild! Lots of interesting takeaways. I'll add some links to them in the comments. https://x.com/Fried_rice/status/2038894956459290963

Anthropic just nerfed OpenCode

https://www.youtube.com/watch?v=LqGWk25F7uw

OpenClaw Creator Joins OpenAI

Sam Altman just announced it on X. What are your thoughts? Is this good or bad? https://x.com/sama/status/2023150230905159801?s=20

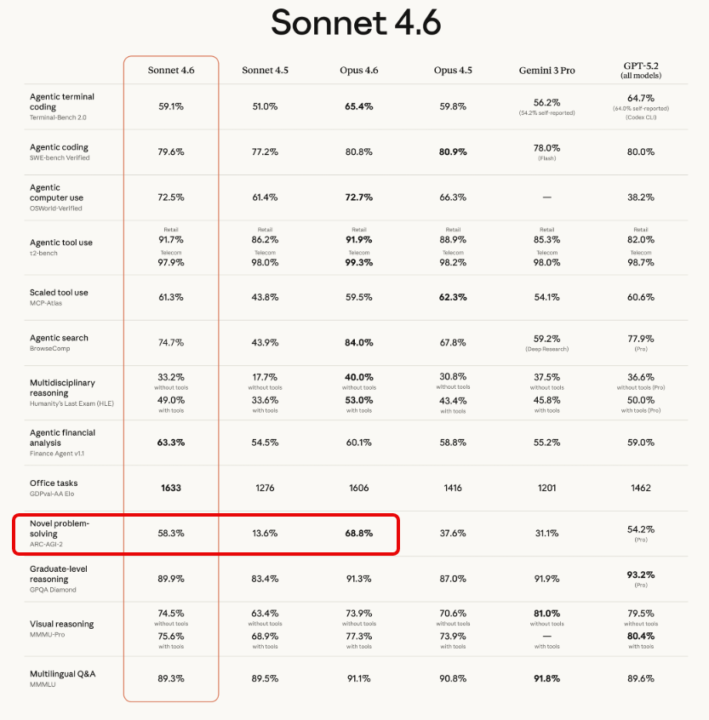

Sonnet 4.6 Released with a 1M Context Window!

Anthropic released Sonnet 4.6 today. Here's what changed and why it's worth paying attention to.

The biggest jump: novel problem-solving

ARC-AGI-2 measures how well a model can reason through problems it hasn't seen before (generalization, not memorization).
- Sonnet 4.5: 13.6%
- Sonnet 4.6: 58.3%
- Increase: +44.7 percentage points

That's the largest single-generation improvement in the published benchmark table by a wide margin.

Agentic benchmarks

The benchmarks most relevant to tool use and automation all improved significantly:
- Agentic search (BrowseComp): 43.9% → 74.7% (+30.8pp)
- Scaled tool use (MCP-Atlas): 43.8% → 61.3% (+17.5pp)
- Agentic computer use: 61.4% → 72.5% (+11.1pp)
- Terminal coding: 51.0% → 59.1% (+8.1pp)

Sonnet 4.6 vs Opus 4.5

Worth noting: Sonnet 4.6 now outperforms Opus 4.5 on several benchmarks:
- Novel problem-solving: 58.3% vs 37.6%
- Agentic search: 74.7% vs 67.8%
- Agentic computer use: 72.5% vs 66.3%

Sonnet is the smaller, cheaper model tier, so this shifts the cost/performance equation for anyone building agentic workflows.

What this means practically

If you're building with tool use, MCP integrations, or multi-step AI workflows, the MCP-Atlas and BrowseComp improvements are the ones to watch. Models that reliably use tools and follow through on multi-step tasks make it practical to ship a lot of workflows that were previously too brittle.
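For anyone who wants to double-check the math, here's a quick sketch that recomputes the deltas using only the Sonnet 4.5 → 4.6 numbers quoted in this post (no other data). The dict and helper names are just made up for illustration. It also shows why "percentage points" matters: +44.7pp on ARC-AGI-2 is an absolute change, while the relative gain is roughly 4.3x the old score.

```python
# Benchmark scores (%) exactly as quoted in the post: (Sonnet 4.5, Sonnet 4.6).
SONNET_45_VS_46 = {
    "ARC-AGI-2 (novel problem-solving)": (13.6, 58.3),
    "BrowseComp (agentic search)": (43.9, 74.7),
    "MCP-Atlas (scaled tool use)": (43.8, 61.3),
    "Agentic computer use": (61.4, 72.5),
    "Terminal coding": (51.0, 59.1),
}


def pp_delta(before: float, after: float) -> float:
    """Absolute change in percentage points, rounded to one decimal to hide float noise."""
    return round(after - before, 1)


def relative_gain(before: float, after: float) -> float:
    """Ratio of new score to old score (e.g. 2.0 means the score doubled)."""
    return round(after / before, 2)


deltas = {name: pp_delta(old, new) for name, (old, new) in SONNET_45_VS_46.items()}
largest = max(deltas, key=deltas.get)

for name, (old, new) in SONNET_45_VS_46.items():
    print(f"{name}: {old}% -> {new}% ({deltas[name]:+.1f}pp, {relative_gain(old, new)}x)")
print("Largest jump:", largest)
```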


skool.com/vibe-coders

Master Vibe Coding in our supportive developer community. Learn AI-assisted coding with fellow coders, from beginners to experts. Level up together!🚀
