📌 Welcome to The AI-Driven Agents Network

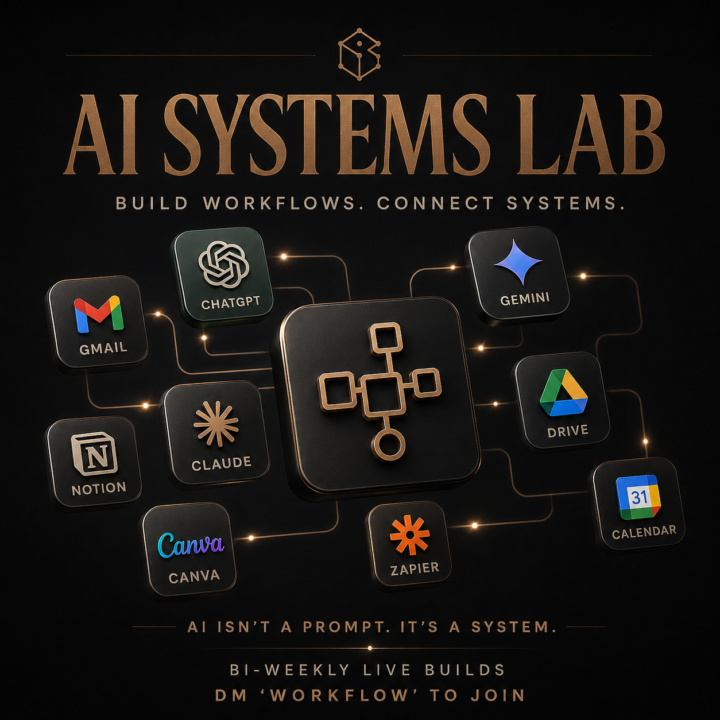

This community is for real estate professionals and entrepreneurs who want to:

- Stop running their business in chaos
- Use AI to enhance lead generation and streamline operations
- Buy back 5–10 hours a week through increased efficiencies

To get the most out of this group, do three things:

𝟭 - 𝗜𝗻𝘁𝗿𝗼𝗱𝘂𝗰𝗲 𝘆𝗼𝘂𝗿𝘀𝗲𝗹𝗳 𝗯𝗲𝗹𝗼𝘄

- What you do + how long you've been doing it
- Your #1 business challenge right now
- What you hope AI can do for your business

𝟮 - 𝗔𝘀𝗸 𝘀𝗽𝗲𝗰𝗶𝗳𝗶𝗰 𝗔𝗜 𝗾𝘂𝗲𝘀𝘁𝗶𝗼𝗻𝘀 𝘆𝗼𝘂'𝘃𝗲 𝗴𝗼𝘁 𝗶𝗻 𝗺𝗶𝗻𝗱

- "Here's my current process. Where would you plug in AI first?"
- "How are you using [tool]?"
- "What's the fastest way to stop [X] from falling through the cracks?"

𝟯 - 𝗦𝗵𝗮𝗿𝗲 𝗽𝗿𝗼𝗴𝗿𝗲𝘀𝘀 𝗮𝗻𝗱 𝘄𝗵𝗮𝘁 𝘆𝗼𝘂'𝗿𝗲 𝗯𝘂𝗶𝗹𝗱𝗶𝗻𝗴

- Wins, experiments, and "this broke, what did I miss?" are all useful
- The more you implement and share, the more you and everyone else get out of this

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

𝗪𝗵𝗲𝗻 𝘆𝗼𝘂'𝗿𝗲 𝗿𝗲𝗮𝗱𝘆 𝗳𝗼𝗿 𝘀𝘁𝗿𝘂𝗰𝘁𝘂𝗿𝗲𝗱 𝗶𝗺𝗽𝗹𝗲𝗺𝗲𝗻𝘁𝗮𝘁𝗶𝗼𝗻

The free group is great for ideas, experiments, and staying current. If you know you need to integrate AI into the core of your business, simplify your systems, and stop babysitting every detail, there's a deeper level: AI Systems Lab.

For $99/month you get:

- Bi-weekly working sessions, hosted live by me
- Each call, you bring one real area that feels messy right now: a listing process, a lead source, your follow-up, team communication, whatever it is. Together we turn it into a simple, AI-powered system and checklist that runs without you hovering over it.
- Access to the 75-Day AI Execution Challenge and a growing library of skills, templates, and systems you can plug into your business today

It's for people who are good at getting business, but know their current systems are costing them time, energy, and income.

If that's you, go to Classroom → 75-Day AI Execution Challenge. If it's locked, Skool will prompt you to upgrade. Once you unlock it, you'll get access to the Challenge and invites to our bi-weekly private AI workshop (AI Systems Lab).

Build Your AI Operating System with us on the Next Call

You've opened ChatGPT or Claude at least a dozen times this month. You type a prompt, get a decent answer, copy-paste, move on. Tomorrow you'll do the same thing again from scratch, because none of it is saved as a repeatable system in your business.

That's where most agents, leaders, and business owners are with AI right now: using it like a smarter Google, getting answers one at a time, and never turning any of it into something that streamlines the business and makes life easier.

The gap is workflow design: knowing how to take a process you do every week, break it into steps, feed it the right inputs, and build it so the AI runs it the same way every time and becomes a digital employee for your business.

That's exactly what we do in our bi-weekly mastermind, The AI Systems Lab. Every other week we get on Zoom for 60 minutes and integrate AI into your business systems:

- Setting up automations
- Claude Cowork strategies
- Building your AI Second Brain

Walk out of those sessions with AI workflows you can use immediately to grow your business and earn 5-7 hours of your time back each week.

𝗧𝗼𝗺𝗼𝗿𝗿𝗼𝘄 𝘄𝗲’𝗿𝗲 𝗯𝘂𝗶𝗹𝗱𝗶𝗻𝗴 𝗔𝗜 𝘄𝗼𝗿𝗸𝗳𝗹𝗼𝘄𝘀 𝗹𝗶𝘃𝗲, and you'll have at least one installed before we end the call.

If you want the Zoom link, DM me “𝗪𝗢𝗥𝗞𝗙𝗟𝗢𝗪” and I'll send you the details.

You can also unlock the 75-Day AI Challenge inside Skool for $99/month. That gives you access to the bi-weekly AI Systems Lab, session replays, workflow assets, and the full 75-day challenge designed to help you become a top 10% user of AI in your business.

Send “WORKFLOW” or click on the 75-Day AI Challenge, and we'll see you on tomorrow's call.

No judgment, doing some research on the AI Gap

When it comes to your daily business workflows, how are you currently utilizing your AI stack?

Google Just Dropped Gemini Omni ⚡︎ (And it changes video creation)

Google just dropped Gemini Omni. The big takeaway for me is not just that AI can edit video. It's that every few months, another part of the agent business gets easier to execute if you are paying attention.

A lot of the old excuses are getting weaker:

- “I don't have time to follow up.”
- “I don't know what to post.”
- “Video takes too long.”
- “I'm not good at editing.”
- “I need to hire someone before I can do that.”

Those used to be real constraints. Some of them still are. But they are not as strong as they were 12 months ago.

With tools like ChatGPT, Claude Cowork, Gemini, and now Omni, agents can take the things they already know they should be doing and move faster. Better follow-up. Cleaner client education. More consistent database touches. Stronger listing content. Faster market updates.

Now video editing is moving in that direction too. Instead of shooting a rough property walkthrough and spending an hour in CapCut, the workflow is starting to look more like this:

- Brighten the kitchen.
- Stabilize the video.
- Clean up the audio.
- Remove the clutter.
- Make this feel more polished for Instagram.

That is where this gets practical. I think 15-20% income growth in 2026 should be a real conversation for agents who actually adopt these tools. For some, doubling their business is not unrealistic. Not because AI is magic. Because it removes friction from the fundamentals.

First workflow I'd test: shoot a raw property walkthrough on my phone, drop it into Omni, and ask it to turn it into a cleaner listing clip, or to furnish it in a luxury minimalist style with matte charcoal and champagne-gold accents. Overall, I'd just play with it to see how things work.

Where are you still doing things manually that AI could already be helping with?

Chat/Claude/Gemini for $8/month?

I saw this company (the founder is someone on X/Twitter whom I have followed for years). You get ChatGPT/Claude/Gemini (chat and code, no cowork or GPT) for $8. It sounds amazing. What am I missing? https://www.capriole.ai

skool.com/the-ai-driven-agent

A community of real estate agents and entrepreneurs focused on mastering AI and smarter business systems. Buy back time & scale with freedom.
