ChatGPT 5.5 > Claude Mythos

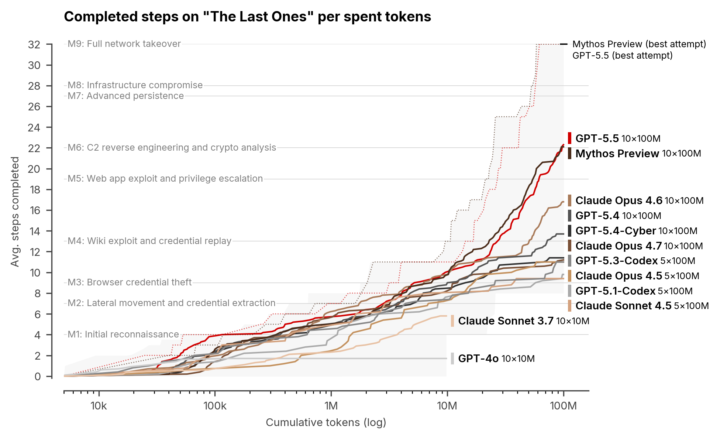

You can access ChatGPT 5.5 today. It looks like the most powerful AI model for cybersecurity yet, possibly even better than Claude Mythos.

GPT-5.5 completed TLO end-to-end in 2 of 10 attempts, making it the second model to do so. Mythos Preview, the first model to solve TLO, did so in 3 of 10 attempts.

https://www.aisi.gov.uk/blog/our-evaluation-of-openais-gpt-5-5-cyber-capabilities

On the Expert-level tasks, GPT-5.5 achieves an average pass rate of 71.4% (±8.0%, 1 standard error of the mean), compared to 68.6% (±8.7%) for Mythos Preview, 52.4% (±9.8%) for GPT-5.4, and 48.6% (±10.0%) for Opus 4.7. On this measure, GPT-5.5 may be the strongest model we have tested.
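The Expert-level pass rates above come with standard errors, so it helps to see how big each gap is relative to the noise. Below is a minimal sketch of that arithmetic. It assumes the estimates for the two models are independent, which is a simplification (the models were likely run on the same tasks), and the function name `gap_in_standard_errors` is made up for this illustration.

```python
import math

# Pass rate and standard error of the mean (both in percent), as quoted above.
results = {
    "GPT-5.5": (71.4, 8.0),
    "Mythos Preview": (68.6, 8.7),
    "GPT-5.4": (52.4, 9.8),
    "Opus 4.7": (48.6, 10.0),
}


def gap_in_standard_errors(a, b):
    """Return (difference, combined SE, difference / combined SE).

    Assumption: the two estimates are independent, so the standard
    error of the difference is sqrt(se_a**2 + se_b**2).
    """
    (mean_a, se_a), (mean_b, se_b) = a, b
    diff = mean_a - mean_b
    combined = math.sqrt(se_a ** 2 + se_b ** 2)
    return diff, combined, diff / combined


if __name__ == "__main__":
    for name in ("Mythos Preview", "GPT-5.4", "Opus 4.7"):
        diff, combined, ratio = gap_in_standard_errors(results["GPT-5.5"], results[name])
        print(f"GPT-5.5 vs {name}: {diff:.1f} pts, combined SE {combined:.1f}, ratio {ratio:.2f}")
```

Under that independence assumption, the 2.8-point lead over Mythos Preview is roughly a quarter of one combined standard error, which fits the cautious "may be the strongest" wording in the quoted evaluation.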

Real Mythos Data

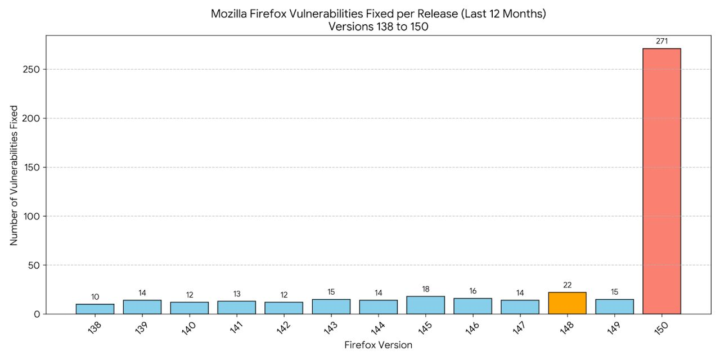

Mozilla released Firefox 150 yesterday, fixing 271 vulnerabilities found with the help of Mythos. Here is a chart of the number of vulnerabilities they fixed in each release over the previous 12 months. Source: https://lnkd.in/ecwWPden

📅 Weekly Security Briefing — Apr 13–19, 2026

🚨 Microsoft Patch Tuesday Fixes 167 Flaws & 2 Zero-Days
What happened: Microsoft released one of its largest updates ever, fixing 167 vulnerabilities, including two zero-days, one of them actively exploited in the wild. The most critical is CVE-2026-32201, a SharePoint spoofing flaw that allows attackers to manipulate data and access sensitive information.
🔗 https://www.bleepingcomputer.com/news/microsoft/microsoft-april-2026-patch-tuesday-fixes-167-flaws-2-zero-days/

📂 13.5 Million McGraw Hill Accounts Leaked in Data Breach
What happened: The ShinyHunters group leaked data from 13.5 million McGraw Hill users, reportedly due to a Salesforce misconfiguration. The breach exposed personal data and highlights ongoing risks tied to SaaS misconfigurations and weak cloud access controls.
🔗 https://www.bleepingcomputer.com/news/security/data-breach-at-edtech-giant-mcgraw-hill-affects-135-million-accounts/

🤖 Frontier AI Models Raise Concerns Over Offensive Capabilities
What happened: Policymakers and researchers are raising concerns over increasingly powerful AI systems capable of autonomous vulnerability discovery and exploit chaining. Discussions are underway around leveraging these capabilities defensively, while limiting misuse as models become more capable.
🔗 https://datainnovation.org/2026/04/federal-government-should-partner-with-frontier-ai-labs-on-cybersecurity-defense/

🕵️ Operation PowerOFF Disrupts Global DDoS-for-Hire Networks
What happened: Law enforcement agencies identified 75,000 users of DDoS-for-hire services and seized 53 domains as part of Operation PowerOFF. The coordinated action significantly disrupts access to low-cost attack infrastructure used worldwide.

📅 Weekly Security Briefing — Mar 30 – Apr 5, 2026

Honestly, the last week was insane, with so much interesting and scary stuff. If you don't usually read this, I would highly recommend reading this week's summary.

🛡️ Axios npm Supply Chain Attack Impacts Millions of Developers
What happened: A major supply chain attack compromised the widely used Axios npm package, a library with ~100 million weekly downloads. Attackers hijacked a maintainer account and published malicious versions that injected a hidden dependency, which executed a cross-platform remote access trojan (RAT) during installation. The campaign has been attributed to a North Korean-linked actor, with payloads targeting Windows, macOS, and Linux systems.
🔗 https://www.microsoft.com/en-us/security/blog/2026/04/01/mitigating-the-axios-npm-supply-chain-compromise/

🤖 DeepLoad Malware Uses AI to Evade Detection
What happened: Researchers identified a new malware strain dubbed DeepLoad, which leverages AI model APIs to dynamically generate polymorphic malicious code during execution. By continuously changing its structure and behavior at runtime, the malware can bypass traditional signature-based defenses and adapt in real time to detection mechanisms.
🔗 https://reliaquest.com/blog/threat-spotlight-deepload-malware-pairs-clickfix-delivery-with-ai-generated-evasion/

☁️ AWS Expands Bedrock Security with Cross-Account Guardrails
What happened: AWS announced general availability of cross-account guardrails for Amazon Bedrock, enabling organizations to enforce consistent AI security and governance policies across multiple accounts. This allows centralized control over model behavior, compliance rules, and safety constraints across enterprise-scale AI deployments.
🔗 https://aws.amazon.com/blogs/aws/amazon-bedrock-guardrails-supports-cross-account-safeguards-with-centralized-control-and-management/

skool.com/cloud-ai-security-academy-4626

Learn AI, automation and security tools reshaping modern SOC and cyber careers.
