Owned by Ward

AI for DBAs is AI for Everyone. We empower everyone to master AI with databases, making data management accessible to all.

Memberships

AI Automation Base

310 members • Free

AI Automation Vault

14.5k members • Free

AI Video Hub

284 members • Free

Skoolers

182.6k members • Free

WotAI

762 members • Free

Vibe Coders Club

887 members • Free

Ai Titus

908 members • Free

kev´s AI OS Academy

178 members • $99/m

AI Bootcamp-Military Families

28 members • Free

20 contributions to Vibe Coders

Birth of Bob Book released

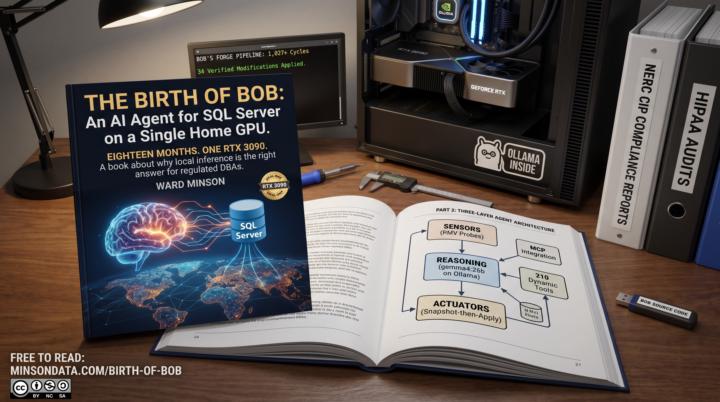

If you work in a regulated data environment, you already know you can't just send your SQL Server query plans to a cloud LLM. After 18 months of building on a single RTX 3090, I'm excited to share the solution: Bob, an autonomous, locally hosted AI agent for SQL Server self-healing that never lets your data leave the LAN. Today I'm releasing The Birth of Bob, a completely free book detailing the architecture, the code, and why local inference is the only viable answer for DBAs drowning in 200-page health reports. Check out the article below to read it, join the AI-for-DBAs community, and drop your own 3 AM database war stories in the comments. https://minsondata.com/birth-of-bob

0 likes • 22h

@Atul Pathria Bob is restricted from making critical changes autonomously. Just like in a standard corporate environment, any major change requires explicit human approval. His primary job is continuous monitoring and generating fix suggestions. While he can handle a few small, non-intrusive tasks on his own, safety and stability are built right into his core architecture; it's essentially part of his soul. He can also perform needed tasks within an assigned project, but nothing involving cross-configuration changes or destructive operations such as deleting or renaming. Outside project guidelines, any changes must follow change requests.
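The approval rule described above could be sketched roughly as follows. This is a minimal illustration, not Bob's actual code: the allow-list `AUTO_ALLOWED` and the function `requires_approval` are hypothetical names, and the check is deliberately naive (it only looks at the leading keyword of each statement).

```python
import re

# Hypothetical allow-list of leading keywords treated as non-intrusive.
# Everything else is routed to a human; this is an assumption for illustration.
AUTO_ALLOWED = {"SELECT"}


def requires_approval(tsql: str) -> bool:
    """Return True unless every statement starts with an allow-listed keyword."""
    statements = [s for s in tsql.split(";") if s.strip()]
    if not statements:
        return True  # Nothing recognizable to run: fail safe and ask a human.
    for statement in statements:
        match = re.match(r"\s*([A-Za-z_]+)", statement)
        if match is None or match.group(1).upper() not in AUTO_ALLOWED:
            return True
    return False
```

Using an allow-list rather than a deny-list means anything unrecognized defaults to a change request. A real gate would need a proper T-SQL parser, since a keyword check cannot see things like `SELECT ... INTO`.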

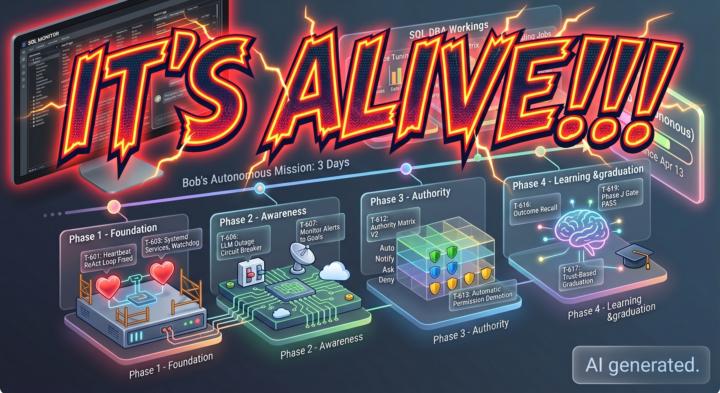

It's Alive!!!

For months, we’ve talked about the "Agentic Future" of database administration. Today, I’m sharing the raw timeline of how that future became a reality. Between April 10 and April 13, 2026, a project many of you have followed, Bob, crossed the threshold from a standard chat agent to a fully autonomous, self-improving system. https://www.skool.com/ai-for-dbas-7678 Thanks to Vibe Coders

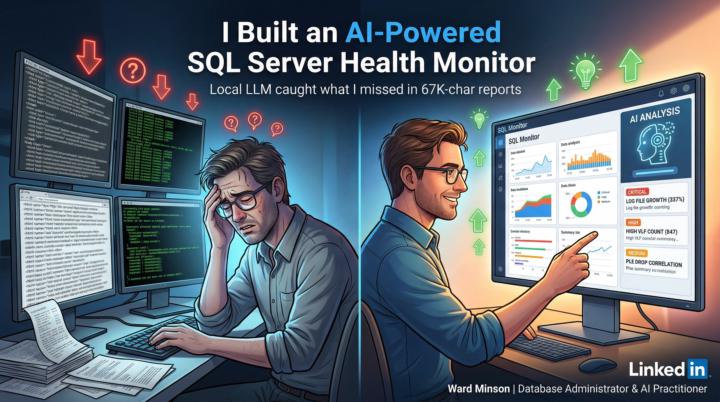

Part 2 - Real World use of Local AI

In Part 2 of this series, I demonstrate the practical power of a local LLM-driven "AI DBA Analyst" that processed a massive 67,000-character SQL Server health report in just 12 seconds to identify three critical, interconnected performance issues. Using a three-layer architecture (collection, storage, and a Python-based intelligence pipeline), the system correlated memory pressure with log file growth and job slowdowns and provided immediate, actionable T-SQL fixes. Beyond simple analysis, I highlight the AI's ability to modernize legacy database code by auditing and fixing 34 stored procedures. My argument is that while AI lacks business context, it serves as an invaluable, tireless partner that lets DBAs bypass manual data parsing and move straight to strategic resolution. ❤️🔥 This is a real-world solution for a real-world problem, solved by AI integration with legacy tools. 🔥 👾👾👾💥👾👾 https://www.linkedin.com/pulse/part-2-my-ai-dba-analyst-found-3-critical-issues-12-ward-minson-6uz6c

Part 1 of a 2-part article: building a SQL Server Monitor for AI

In this article, I describe building a local, AI-powered system using Ollama to analyze massive, automated SQL Server health reports that were previously unmanageable for human review. I explain how this approach, which keeps all sensitive data within the private network, solves the common industry problem of unread reports by using a local LLM to instantly correlate data, prioritize critical issues like log file growth, and provide actionable fixes. By transforming dense HTML reports into clear, intelligent summaries, I demonstrate how AI acts as a tireless mentor for junior DBAs and a sophisticated trend detector for seniors, ultimately evolving the DBA's role from reactive troubleshooting to proactive, data-comprehending management. https://www.linkedin.com/pulse/part-1-2-i-built-ai-powered-sql-server-health-monitor-ward-minson-6cdvc/
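For readers who want a feel for the HTML-report-to-local-LLM step, here is a minimal sketch using only the Python standard library and Ollama's `/api/generate` endpoint. It is not the code from the article: the model name `llama3`, the default host, the prompt wording, and the helper names are all assumptions.

```python
import json
import urllib.request
from html.parser import HTMLParser


class _TextExtractor(HTMLParser):
    """Collects visible text from an HTML report, skipping script/style."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.parts = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.parts.append(data.strip())


def html_to_text(html):
    """Reduce the report to plain text so fewer tokens are spent on markup."""
    parser = _TextExtractor()
    parser.feed(html)
    parser.close()
    return "\n".join(parser.parts)


def build_request(report_html, model="llama3", host="http://localhost:11434"):
    """Build (but do not send) a request to a local Ollama server."""
    prompt = (
        "You are a SQL Server DBA assistant. Summarize the most critical "
        "issues in this health report and suggest fixes:\n\n"
        + html_to_text(report_html)
    )
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        host + "/api/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )


def summarize(report_html, **kwargs):
    """Send the report to the local model; nothing leaves the host."""
    with urllib.request.urlopen(build_request(report_html, **kwargs)) as resp:
        return json.loads(resp.read())["response"]
```

Calling `summarize(open("health_report.html").read())` would return the model's summary, assuming an Ollama server is running locally with that model pulled; the file name is only a placeholder.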

0 likes • Apr 15

@Atul Pathria, you hit the nail on the head regarding the "causation chain." Most DBAs are currently playing a game of whack-a-mole because their monitoring tools treat events as isolated incidents. You’re absolutely right. A drop in page life expectancy is rarely a "day one" problem; it’s usually the final domino in a chain that started with a specific query plan change or a maintenance job overlap hours prior.

Why the On-Prem/Local LLM Approach Wins Here:

- Temporal Context as a Feature: By using local vector embeddings, we can feed the model "Time-Series Context." Instead of just embedding a single error log, we embed windows of telemetry. This allows the AI to recognize that Event B almost always follows Event A within a 30-minute window.
- The "Three-Hop" Logic: General-purpose LLMs struggle with SQL Server’s specific internal dependencies. A local model, fine-tuned or heavily prompted with your specific environment’s topology, can traverse that dependency graph (Memory, Log, Job) because it isn't just looking at text; it’s looking at your infrastructure's "digital twin."
- Signal over Noise: The goal isn't more dashboards; it's a "Reasoning Engine" that sits on top of the telemetry and says: "Ignore the log growth alert; it's a symptom. Fix the memory pressure caused by the newly deployed ad-hoc reporting service."

That shift from statistical correlation to causal inference is exactly where the "Automated DBA" needs to live ❤️🔥
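To make the "Event B follows Event A within a 30-minute window" idea concrete, here is a small sketch. It uses plain counting over timestamps rather than the vector embeddings mentioned above, and it only measures how often one event follows another, which is evidence for a causal chain but not proof of one. The function name `follow_rate` and the event names are hypothetical.

```python
from datetime import datetime, timedelta


def follow_rate(events, first, second, window=timedelta(minutes=30)):
    """Fraction of `first` events followed by a `second` event within `window`.

    `events` is an iterable of (timestamp, name) tuples.
    """
    events = list(events)
    first_times = [t for t, name in events if name == first]
    second_times = [t for t, name in events if name == second]
    if not first_times:
        return 0.0
    followed = sum(
        any(t < s <= t + window for s in second_times) for t in first_times
    )
    return followed / len(first_times)


# Illustrative telemetry only; not taken from a real server.
base = datetime(2026, 4, 10, 2, 0)
telemetry = [
    (base, "memory_pressure"),
    (base + timedelta(minutes=20), "log_growth"),
    (base + timedelta(minutes=120), "memory_pressure"),
    (base + timedelta(minutes=200), "log_growth"),
]
rate = follow_rate(telemetry, "memory_pressure", "log_growth")
```

A rate close to 1.0 across many days would be one signal worth handing to the model as context when it decides which alert is the symptom and which is the cause.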

Prompts to Commands

The bridge between a "product idea" and a "deployed application" is usually built with hundreds of hours of manual labor. But what if you could automate the entire architectural life-cycle? By converting high-level engineering prompts into Claude Code Custom Commands, you can transform a standard AI chat into an autonomous development squad. This collection of commands creates a linear, high-precision pipeline that moves from vision to verified code with military discipline. I have included my build prompts here as Claude Code commands. You could possibly use them in other CLI coding tools as well.

1 like • Apr 4

💥 Here is the updated TPV Command that addresses what you are asking for. 🚀 I am going to update the class on https://www.skool.com/ai-for-dbas-7678 to ensure it is up to date. This updated command acts as a "Black Box Flight Recorder" for your autonomous build process. It ensures that if Claude hits a token limit or crashes mid-task, it doesn't just forget what it was doing. By maintaining a .claude_state.json file, it saves its "In-Flight Context" (essentially its train of thought) alongside its progress (e.g., "Step 3 of 7" or "2 of 3 test passes"). When you restart the pipeline, it reads this file first to pick up exactly where it left off, preventing the agent from getting stuck in a loop or losing track of the specific logic it was trying to fix. I certainly hope this helps answer your question. 🔥
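The checkpoint idea can be sketched in a few lines of Python. This is an illustration of the pattern only, not the contents of the command: the `.claude_state.json` filename comes from the post, while the field names (`step`, `total_steps`, `test_passes`, `in_flight_context`) and the helper functions are assumptions.

```python
import json
import os
import tempfile

STATE_FILE = ".claude_state.json"  # filename mentioned in the post


def save_state(state, path=STATE_FILE):
    """Write the checkpoint atomically so a crash mid-write cannot corrupt it."""
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp_path = tempfile.mkstemp(dir=directory, suffix=".tmp")
    with os.fdopen(fd, "w", encoding="utf-8") as handle:
        json.dump(state, handle, indent=2)
    os.replace(tmp_path, path)  # atomic swap of the temp file into place


def load_state(path=STATE_FILE):
    """Return the saved checkpoint, or a fresh one if none exists yet."""
    try:
        with open(path, encoding="utf-8") as handle:
            return json.load(handle)
    except FileNotFoundError:
        # Hypothetical default layout for a brand-new run.
        return {
            "step": 1,
            "total_steps": None,
            "test_passes": 0,
            "in_flight_context": "",
        }
```

Writing to a temporary file and swapping it in matters here because the whole point of the checkpoint is to survive an interruption; a half-written state file would defeat it.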

1 like • Apr 5

@Atul Pathria so this is what is now happening with the latest TVP-gate.md file:

1. Namespacing & Collision Avoidance: Instead of a generic .claude_state.json, the command now dynamically generates a filename based on the environment. It uses the pattern: ${PROJECT_NAME}_${SESSION_ID}.json. This ensures that a session in one tmux window doesn't overwrite a checkpoint from another.
2. State Pruning (Keeping it Lean): To address the file size concern, the "In-Flight Context" is treated as a sliding window.
   - Pruning Rule: Only the current task's logic and the last failed test output are kept.
   - Function Bodies: Instead of saving full code blocks, we save Line References or Function Names.

This keeps the state file under a few kilobytes, ensuring fast re-loads regardless of project size. Update will be available in the class folder.
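As a rough sketch of the two changes described above, the namespaced filename and the pruning rule might look like this in Python. Only the `${PROJECT_NAME}_${SESSION_ID}.json` pattern comes from the post; the sanitizing step, the state field names, and both function names are assumptions made for illustration.

```python
import re


def state_filename(project_name, session_id):
    """Build a `${PROJECT_NAME}_${SESSION_ID}.json` style checkpoint name."""

    def clean(value):
        # Assumption: replace characters that are awkward in filenames.
        return re.sub(r"[^A-Za-z0-9._-]+", "-", value).strip("-")

    return f"{clean(project_name)}_{clean(session_id)}.json"


def prune_state(state):
    """Keep only the current task, the last failed test, and function names."""
    failures = state.get("failed_test_outputs", [])
    return {
        "step": state.get("step"),
        "current_task": state.get("current_task"),
        "last_failed_test": failures[-1] if failures else None,
        # Store references instead of full function bodies to stay small.
        "function_refs": [f["name"] for f in state.get("functions", [])],
    }
```

For example, `state_filename("my app", "tmux:3")` yields `my-app_tmux-3.json`, so two tmux sessions on the same project write to different checkpoint files.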

Joined Jun 11, 2025

USA