Memberships

Your First $5k Club w/ARLAN

28.3k members • Free

Bedroom Boss Academy

112 members • Free

Skoolers Dopamine reset

22 members • Free

Automation Network

1.2k members • Free

TaskMagic Empire

4.3k members • Free

Editor Accelerator

5.9k members • Free

Full-Time Editors

2.2k members • Free

The Edit Club

492 members • Free

Make $1k-$10k in 30 days

18.4k members • Free

66 contributions to AI Automation Society

Honest question for this community...

Who in here has been thinking about building a personal brand or getting into content creation? I'm 4 months into posting consistently. Still learning. Still figuring it out. But the conversations it's started have been worth more than any credential I could've waited to earn. I'm just curious if anyone else here is sitting on something they haven't posted yet... and what's actually stopping you.

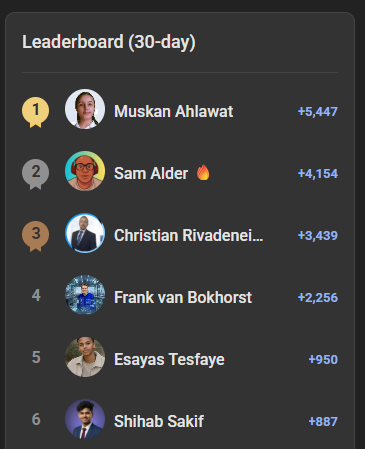

April MVPs Are Here! 🏆

Huge shoutout to the Top 5 Members of AI Automation Society for April! These are the people leading the way, sharing knowledge, and helping spread the AIS culture:

1️⃣ @Muskan Ahlawat – Back on top and absolutely dominating this month with incredible contributions! 🔥
2️⃣ @Sam Alder
3️⃣ @Christian Rivadeneira
4️⃣ @Frank van Bokhorst
5️⃣ @Esayas Tesfaye

And a special mention to @Shihab Sakif, who landed in 6th place and just missed the Top 5. We're rooting for you to break through next month! 👏

Keep contributing, sharing wins, and helping others grow! Our community gets better because of you. Let's keep building, keep learning, and keep spreading that AIS energy. 🚀

Cheers, Nate

April 7-Day Challenge Graduate Cohort! 🎓

Massive congrats to the first-ever class of 7-Day AIS Challenge graduates! These builders showed up, put in the work, and shipped 7 builds in 7 days, from beginner foundations all the way through advanced territory.

April 2026 Graduates:

🎓 Antra Verma
🎓 Darshan Patel
🎓 Grant G.
🎓 Takumi Nozawa

Finishing the challenge isn't easy. It means watching the lessons, doing the actual builds, hitting every checkpoint, and submitting a capstone that proves you can put it all together. These four did exactly that.

If you've been on the fence about starting the challenge, let this be your sign. 7 days, 7 builds, zero fluff. You can move at your own pace, but the structure is there to take you from day-one beginner to advanced builder by the end.

Huge respect to our April grads. Can't wait to see who joins the Graduates wall in May. 🚀

Cheers, Nate

#AISChallenge

your 67% discount expires today

Quick heads up. Your 67% discount on One Person AI Agency expires today.

This is the complete playbook for building an AI agency to $100K/month and selling it: the client acquisition system, the pricing, the delivery process. Everything.

It normally runs $299. Right now it's $99. That changes tonight at midnight.

PS: If you are an AIS+ member, this is included in the Scale module after 90 days. No need to purchase separately.

- Nate

Most real estate agents don’t have a lead problem… they have a prospecting + consistency problem.

Leads exist. But finding the *right* ones consistently is where things break.

I’ve been working on a system that delivers **verified real estate developer leads (actual decision-makers)** directly into your CRM every day.

No manual searching
No guessing emails
No inconsistency

Basically: I help real estate agents get ready-to-contact, high-quality developer leads daily without doing the prospecting themselves.

Curious: would this be useful for your business?



@shiv-pratap-singh-5671

I am interested in automation and systems.

I believe in helping people, sharing value, and keeping communities active and supportive.

Active 11h ago

Joined Aug 29, 2025

India
