Memberships

Early AI-dopters

1.3k members • $77/month

AI Automation Society

351.6k members • Free

3 contributions to AI Automation Society

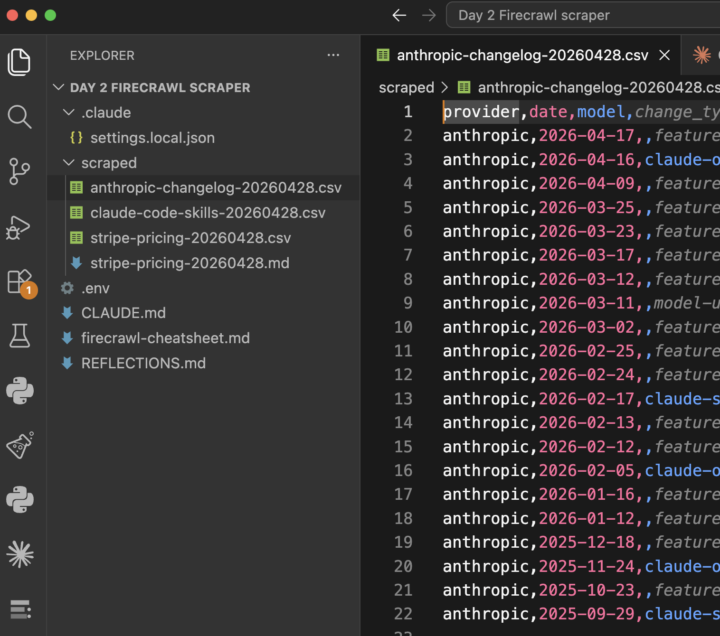

#7dayAISChallenge — Day 2 🕷️

Built a Firecrawl scraper today using its MCP server in Claude Code. A core lesson for me: how to make MCP servers actually reliable.

🔌 MCP servers ship "thin" on purpose. Each tool comes with just a one-line description, because those descriptions get loaded into Claude's context on every turn. Keeping them short avoids bloating context across the many MCPs you might install. The tradeoff: the server can't ship opinions about your budget, your use case, or your project conventions.

📋 Fix: a project-local cheatsheet. A markdown file in the repo with an intent → tool decision matrix, cost annotations (cheap vs expensive calls), anti-patterns ("don't use X for Y"), and gotchas you've hit. Then wire it into CLAUDE.md with a line like "consult firecrawl-cheatsheet.md before calling any mcp__firecrawl__* tool." That trigger is what forces Claude to read it every time, not just when it feels like it.

🎯 Why this matters: LLMs are non-deterministic by default. Give one the same prompt twice and it might make different tool choices. Without rails, Claude might reach for the most powerful-sounding tool when a cheap scrape would do the job for 1/10th the cost. A cheatsheet collapses the decision space: predictable tool choice, predictable cost, fewer credit surprises.

⚡ But don't pre-cache. Not every MCP deserves a cheatsheet. Writing and maintaining one has its own cost. My rough test: does it have >5 overlapping tools, a real cost per call, non-obvious gotchas, and will I reuse it across many sessions? 2+ yes → worth writing. Otherwise skip it and let Claude figure things out from the built-in descriptions. If you're not sure yet: install the MCP, use it raw, and let actual friction tell you what to encode. Don't speculate.

On to Day 3. 🚀
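The rough test above is simple enough to write down as code. This is only a sketch of that checklist: the `McpProfile` class, its field names, and the `cheatsheet_worth_writing` function are hypothetical names made up for illustration, not part of any MCP or Claude Code API.

```python
from dataclasses import dataclass


@dataclass
class McpProfile:
    """Hypothetical summary of one MCP server, filled in by hand."""
    overlapping_tools: int          # how many tools have overlapping purposes
    has_cost_per_call: bool         # do calls consume paid credits?
    has_nonobvious_gotchas: bool    # have you hit surprises worth recording?
    reused_across_sessions: bool    # will you use it in many sessions?


def cheatsheet_worth_writing(profile: McpProfile, threshold: int = 2) -> bool:
    """Apply the post's rule of thumb: 2 or more 'yes' answers means write one."""
    answers = [
        profile.overlapping_tools > 5,
        profile.has_cost_per_call,
        profile.has_nonobvious_gotchas,
        profile.reused_across_sessions,
    ]
    # True counts as 1 and False as 0, so sum() gives the number of 'yes' answers.
    return sum(answers) >= threshold


if __name__ == "__main__":
    # Example values are assumptions for illustration, not measurements.
    scraper_like = McpProfile(8, True, False, False)
    one_off_tool = McpProfile(2, False, False, True)
    print(cheatsheet_worth_writing(scraper_like))
    print(cheatsheet_worth_writing(one_off_tool))
```

The threshold is a parameter because the "2+" cutoff is a personal rule of thumb; a stricter team might raise it to 3.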

#7dayAISchallenge — Day 1: Newsletter Automation 📰

Built an AI newsletter pipeline. Biggest unlock: structure beats raw prompting.

WAT framework (Workflows / Agents / Tools) changed the game. Instead of one giant blob of run-it-all code, the LLM gets a clean split: markdown SOPs for what to do, Python scripts for the deterministic work, and the LLM itself just orchestrating. Way more reliable, way easier to debug, and the codebase actually stays organised as it grows.

Stack:
- 🔍 Tavily for web search (solid free tier; Perplexity now needs a $50 min top-up 😬)
- 🧠 Claude for reasoning + summarising
- 🎨 Kie.ai (Nano Banana wrapper) for image generation
- 📬 Gmail for sending the final newsletter out

The cool part: watching a structured agent stitch all four together end-to-end (search → summarise → illustrate → assemble → send) without me babysitting each step. The CLAUDE.md does the heavy lifting upfront, so the AI doesn't drift mid-task.

Takeaway: the difference between "AI that kinda works" and "AI that ships" is almost entirely structure. Give it a mental model, guardrails, and a place for everything, then let it cook 👨‍🍳

6 days to go. 🚀
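To make the "clean split" concrete, here is a minimal sketch of the search → summarise → illustrate → assemble → send chain as small, separately testable steps. Everything in it is a stand-in: the function names, the `State` dictionary, and the stub return values are assumptions for illustration, and none of the steps calls Tavily, Claude, Kie.ai, or Gmail. A plain loop plays the orchestrator role that the LLM and the markdown SOPs play in the setup described above.

```python
from typing import Callable, Dict, List

State = Dict[str, object]
Step = Callable[[State], State]


def search(state: State) -> State:
    # Stub for the web-search tool; returns canned text, makes no network call.
    return {**state, "sources": [f"stub result for {state['topic']}"]}


def summarise(state: State) -> State:
    # Stub for the LLM summarisation step.
    count = len(state["sources"])
    return {**state, "summary": f"Summary of {count} source(s)"}


def illustrate(state: State) -> State:
    # Stub for image generation; only records a placeholder file name.
    return {**state, "image": "placeholder.png"}


def assemble(state: State) -> State:
    # Deterministic work: combine the pieces into the newsletter body.
    body = f"{state['summary']}\n[image: {state['image']}]"
    return {**state, "newsletter": body}


def send(state: State) -> State:
    # Stub for the email step; a real version would hand off to a mail client.
    return {**state, "sent": True}


PIPELINE: List[Step] = [search, summarise, illustrate, assemble, send]


def run_pipeline(topic: str, steps: List[Step] = PIPELINE) -> State:
    """Run each step in order, passing the accumulated state along."""
    state: State = {"topic": topic}
    for step in steps:
        state = step(state)
    return state


if __name__ == "__main__":
    result = run_pipeline("AI news")
    print(result["newsletter"])
```

Because each step only reads and extends a shared state dictionary, any single step can be swapped for a real tool call or debugged in isolation without touching the others.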

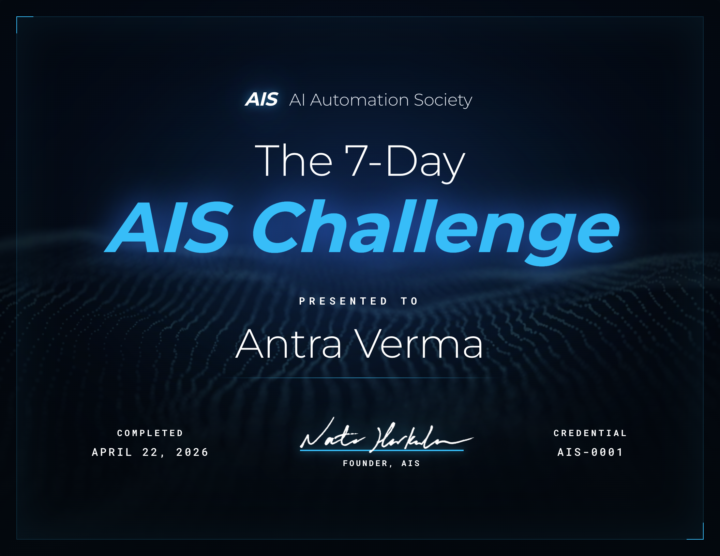

🎉 We have our FIRST graduate of the 7-Day Challenge!

Huge congrats to @Antra Verma for being the first to cross the finish line 👏

To celebrate, we're hooking her up with a FREE AIS shirt, and her official completion certificate is attached below 🏆

Let's give her a massive round of applause in the comments. She set the bar! Can't wait to see more of you submit your projects and join the graduate club.

👉 Want to take on the challenge? Head to the Classroom section or jump in HERE

👕 And if you want to grab some AIS merch for yourself, check it out HERE

Cheers everyone!
- Nate


@sherlynn-tan-1155

Software engineer focused on leveling up in AI. Here to learn, build, and stay relevant in a fast-changing tech world

Active 3h ago

Joined Apr 19, 2026
