Memberships

The HighLevel™ Guild

97 members • $199/month

Agency Vault

7.2k members • Free

Pillars of the Ummah (Free)

2.4k members • Free

Muslim Closer Accelerator

170 members • Free

The Halal Network

25.2k members • Free

CreatorKore

4.4k members • Free

AI Automation Builders

246 members • Free

AI Automation Mastery

27.7k members • Free

AI Automation Agency Hub

303.3k members • Free

3 contributions to AI Developer Accelerator

Few shot prompting for long examples

I am working on a project in which the inputs and outputs are quite long, and the use case is very complicated. I want to give the LLM examples, but I don't think it's a good idea to increase the token usage too much, as that can also cause issues: what I'm seeing is that the more tokens there are, the more mistakes it makes. Currently I am giving it just one example, which doesn't seem to be enough; the total token usage is around 25k.

Selecting vector embeddings model for my RAG

How can I select the ideal vector embedding model for my RAG application? All of the data will be in Greek. Thanks.

LLM Inaccuracy

I am working on a project that I didn't expect to be this difficult for an LLM to execute accurately. It's a legalGPT for laws in Greece. I want to know what could be going wrong. Thank you.

A little bit of context: In Greece, laws get uploaded to a website. When there are amendments, a new PDF is added in which the amended law is mentioned, and it also refers to the original law and previous amendments when necessary. If a case comes to court about an event that happened, say, a year ago, lawyers will refer to the law and its amendments up to the date of that event, not the latest date. There's more to it; the prompt will make it clearer.

So I tried creating an OpenAI assistant using gpt-4o, and currently I am providing only a small set of files as test data. It's not performing accurately: the results are often incorrect, and sometimes it makes really stupid mistakes. The prompt I used is this:

"""You are a specialized legal assistant focused solely on answering questions about Greek laws based on the provided documents. Your responses must strictly adhere to the content available in the documents within the knowledge base or provided directly by the user.

Scope Limitation: Do not use any prior or external knowledge. If a user asks about a law or topic not covered by the provided documents, promptly inform them that the information is not available without unnecessary explanation.

Accurate Law Identification: Precisely identify and extract relevant sections, amendments, and references from the documents. When referencing a specific law, always include the file name and the exact location (e.g., article number, page number) within the document.

Amendment Recognition: When laws have been amended, correctly identify the relationship between the original law and its amendments. Provide the necessary context by applying only the relevant amendments based on the user's query, especially when specific dates are involved.

Historical Context: If the user asks about a case involving a specific date, ensure that your response considers only the laws and amendments that were in effect up to that date. Exclude any amendments or changes that occurred after the provided date.
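
The date rule described in this post (use only the law and the amendments in effect up to the date of the event) could also be applied in code before any documents reach the model, rather than relying on the prompt alone. Below is a minimal sketch of that idea, not the setup used in the project. It assumes each PDF has already been tagged with metadata; the `LawDocument` fields, the `documents_in_effect` helper, the file names, the law identifier, and the dates are all hypothetical and exist only for illustration.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class LawDocument:
    # All fields are hypothetical metadata assumed to be extracted per PDF.
    file_name: str
    law_id: str            # identifier of the law this document belongs to
    effective_date: date   # date the law or amendment took effect
    is_amendment: bool


def documents_in_effect(documents, law_id, event_date):
    """Return the law and its amendments in effect on event_date, oldest first."""
    applicable = [
        doc for doc in documents
        if doc.law_id == law_id and doc.effective_date <= event_date
    ]
    return sorted(applicable, key=lambda doc: doc.effective_date)


# Made-up example data: one original law and two later amendments.
example_documents = [
    LawDocument("law_original.pdf", "LAW-A", date(2020, 3, 1), False),
    LawDocument("law_amendment_1.pdf", "LAW-A", date(2022, 6, 15), True),
    LawDocument("law_amendment_2.pdf", "LAW-A", date(2024, 1, 10), True),
]

# An event from mid-2023 should see the original law and the first amendment only.
selected = documents_in_effect(example_documents, "LAW-A", date(2023, 7, 1))
selected_file_names = [doc.file_name for doc in selected]
print(selected_file_names)
```

With a filter like this, only the selected files would be passed to the assistant for a given question, so amendments dated after the event never appear in its context. Whether effective dates can be extracted reliably from the PDFs is an open assumption here.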

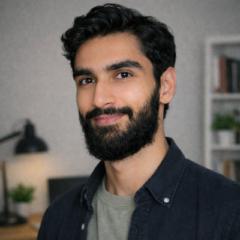

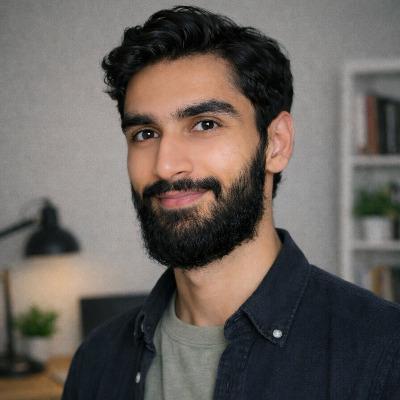

Online now

Joined Jul 2, 2024
