Memberships

AI Automation Agency Hub

316.6k members • Free

AI Automation Society

364.6k members • Free

114 contributions to AI Automation Society

Are You Measuring AI Impact… or Just Counting Activity?

A common failure in AI initiatives is mistaking activity for impact. Teams proudly report the number of prompts, models deployed, or workflows automated, yet none of these indicate whether decision quality has improved. A serious AI audit should cut through this noise and ask: which decisions are now faster, which risks are now lower, and which outcomes are now measurably better? If AI only increases output without increasing clarity, consistency, or confidence in decisions, it is creating operational noise, not value. The role of an AI Transformation Partner is to redefine success metrics before scaling anything, because once AI is embedded, bad metrics don’t just mislead; they compound. If you don’t anchor AI to decision economics, you’re not measuring impact; you’re just counting movement.

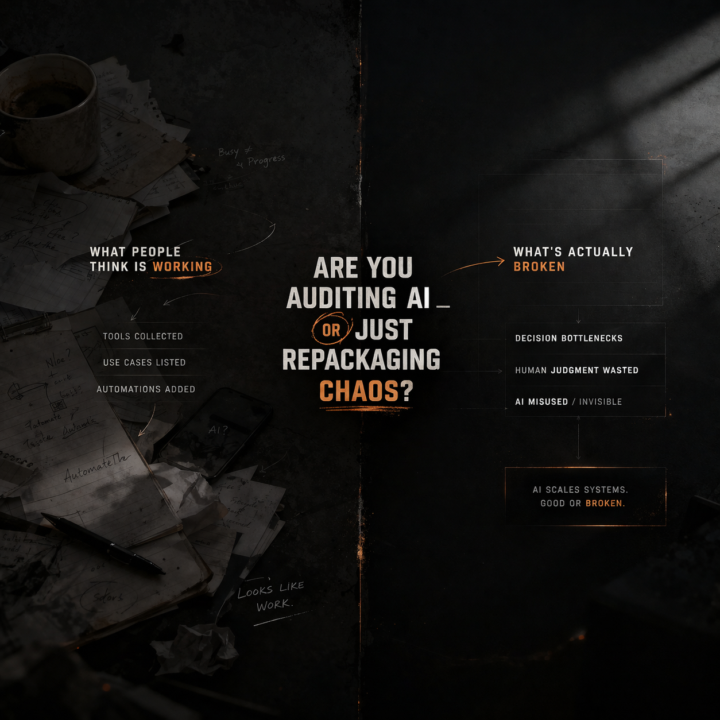

Are You Auditing AI… or Just Repackaging Chaos?

Most “AI audits” today don’t actually audit anything. They inventory tools, list use cases, and maybe suggest a few automations. That’s not an audit; that’s documentation. A real AI audit should answer three uncomfortable questions: where is the decision bottleneck, where is human judgment being wasted, and where is AI already being misused without visibility? If you can’t map how information flows, where it breaks, and who overrides it, you’re not auditing AI; you’re describing symptoms. The value of an AI Transformation Partner is not in recommending tools, but in exposing structural inefficiencies that AI will amplify if left untouched. AI doesn’t fix broken systems; it scales them. If your audit doesn’t challenge the system itself, you’re not creating leverage; you’re just adding layers.


Who Owns the Decision When AI Is Wrong?

AI rarely fails loudly; it fails ambiguously, which blurs ownership at the exact moment it matters most. When an output leads to a bad decision, does responsibility sit with the model, the builder, the operator, or the business owner who approved its use? In many organizations, this is never explicitly defined, which creates silent risk: people rely on AI but distance themselves from its consequences. An AI Transformation Partner audits accountability design, not just system performance, by mapping who approves deployment, who monitors outputs, who has authority to override, and who absorbs downstream impact. Without clear ownership, escalation slows, corrections fragment, and postmortems become storytelling instead of learning. Governance is not a policy document; it is a decision contract. If your system cannot answer who owns the outcome at every step, you are scaling uncertainty, not intelligence.
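One way to make the accountability map concrete is to write it down as data and check it for gaps. The sketch below is only an illustration: the four role names mirror the questions above, while `StepOwnership`, `find_ownership_gaps`, and the example workflow steps and job titles are hypothetical, not taken from any real audit.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional

# Hypothetical role names, one per accountability question in the post.
ROLES = ("approves_deployment", "monitors_outputs", "can_override", "absorbs_impact")


@dataclass
class StepOwnership:
    """Named owners for one AI-assisted step; None means nobody is assigned."""
    step: str
    approves_deployment: Optional[str] = None
    monitors_outputs: Optional[str] = None
    can_override: Optional[str] = None
    absorbs_impact: Optional[str] = None


def find_ownership_gaps(steps: List[StepOwnership]) -> Dict[str, List[str]]:
    """Return {step name: [roles without a named owner]} for steps that have gaps."""
    gaps = {}
    for s in steps:
        missing = [role for role in ROLES if not getattr(s, role)]
        if missing:
            gaps[s.step] = missing
    return gaps


# Illustrative workflow (made-up steps and titles).
workflow = [
    StepOwnership("lead scoring", "Head of Sales", "Sales Ops", "Sales Ops", "Head of Sales"),
    StepOwnership("invoice triage", approves_deployment="CFO", monitors_outputs="Finance Ops"),
]

gaps = find_ownership_gaps(workflow)
print(gaps)  # {'invoice triage': ['can_override', 'absorbs_impact']}
```

Any step that shows up in `gaps` is a place where the decision contract is still undefined.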

Is Your AI System Learning… or Just Repeating?

Many teams assume that once AI is deployed, improvement is automatic. It is not. Most systems are static loops: same inputs, same prompts, same outputs, with minor variance disguised as learning. Real learning systems require structured feedback, not occasional corrections. Where does feedback come from, who validates it, and how is it integrated back into the system? If user edits are ignored, edge cases are not captured, and failures are not categorized, the system does not evolve; it drifts. Over time, drift creates a dangerous illusion: consistency without progress. An AI Transformation Partner audits the learning loop itself by mapping feedback capture, validation mechanisms, retraining triggers, and version control discipline. If your AI cannot systematically learn from its own mistakes, every improvement you see is manual effort wearing an automation mask.
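As a rough sketch of what "structured feedback" could look like in practice, the snippet below captures feedback events, counts only the validated ones per failure category, and flags categories that cross a review threshold. Everything here is an assumption for illustration: `FeedbackEvent`, `categories_needing_review`, the category labels, and the threshold of 3 are hypothetical choices, not a prescribed method.

```python
from collections import Counter
from dataclasses import dataclass
from typing import List


@dataclass
class FeedbackEvent:
    output_id: str
    category: str    # hypothetical failure label, e.g. "wrong_fact" or "tone"
    validated: bool  # True once a reviewer has confirmed the issue


def categories_needing_review(events: List[FeedbackEvent], threshold: int = 3) -> List[str]:
    """Return failure categories with at least `threshold` validated events."""
    counts = Counter(e.category for e in events if e.validated)
    return sorted(cat for cat, n in counts.items() if n >= threshold)


# Illustrative feedback log (made-up data).
events = [
    FeedbackEvent("o1", "wrong_fact", True),
    FeedbackEvent("o2", "wrong_fact", True),
    FeedbackEvent("o3", "wrong_fact", True),
    FeedbackEvent("o4", "tone", True),
    FeedbackEvent("o5", "tone", True),
    FeedbackEvent("o6", "edge_case", True),
    FeedbackEvent("o7", "edge_case", True),
    FeedbackEvent("o8", "edge_case", False),  # not yet validated, so not counted
]

flagged = categories_needing_review(events)
print(flagged)  # ['wrong_fact']
```

The point of the threshold is that a retraining or prompt-revision trigger becomes an explicit rule rather than an occasional manual correction.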


Do You Know Where Your AI Actually Creates Value?

Many AI initiatives report activity instead of value: the number of prompts, automations, and models deployed. These metrics describe motion, not impact. Real AI value appears only when a decision becomes faster, a process becomes cheaper, or a capability becomes possible that did not exist before. Yet in many organizations the link between AI output and economic outcome is never mapped. The model generates insight, a team reads it, a decision happens somewhere later, and the causal chain disappears. An AI Transformation Partner must audit the value path: which outputs influence which decisions, how those decisions alter cost, revenue, or risk, and whether the effect compounds over time. Without this map, AI becomes productivity theater, where impressive dashboards hide unclear economics. If your organization cannot trace a line from model output to measurable business leverage, the system may be technically impressive but strategically invisible.


@le-lan-chi-2392

AI Transformation Partner | Helping Businesses Implement AI Automation

Active 4d ago

Joined Apr 23, 2025

Hà Nội, Việt Nam
