Memberships

Clief Notes

29.1k members • Free

12 contributions to Clief Notes

The Asgardian Council

🔥 16 saves. 1 day. 3 AI voices cooking together.

I'm a retired NYPD detective. Zero coding background. Yesterday I caught something nasty in my empire: one of my businesses had been silently bleeding revenue for 11 days. Pipeline running, files getting written, but the daily summary email to my partner: gone. Dark. Eleven days. I didn't catch it. My Council did.

What's the Council? Three AI voices I run in parallel using Jake's file method:

⚔️ Odin: the architect. Strategy, debate, big decisions.
🗡️ Tyr: the surgeon. Line-by-line, finds bugs others miss.
🛡️ Heimdall: the watchman. Cross-tree audits, sees what's drifting.

They don't agree. That's the whole point.

Yesterday Heimdall flagged something off in a system I thought was healthy. Tyr cut it down to the exact file. Odin built the recovery plan. I executed in shell. Total time from "wait, what's wrong" to "fixed and verified": 4 hours. Without them? I'd have found out when my partner asked "where's my email" and I'd have spent a week figuring out why.

The wild part: none of this is some custom agentic framework. No fancy orchestration layer. It's just folders and SKILL.md files. Jake's method. A folder. A markdown file telling each voice what to do. They read the same file. They argue. They ship.

By the end of the session: 16 distinct catches the Council made that I would've missed alone. One catch alone saved me from losing thousands of dollars of leads and a partner relationship.

Right now as I type this, the same 3 voices are autonomously building a new state scraper for me: folder method, SKILL.md driving, Codex cooking overnight. I'm going to wake up to a finished Phase 1 report.

I built none of this with code I wrote. I orchestrated it with files. If a cop with no tech background can run 3 AI models like a symphony using nothing but folders and markdown, what's your excuse?

Jake's method works. Receipts above. 🥷 七転び八起き (fall seven times, get up eight)

TIL (today I learned) - let's share the 'stoopid' moments

I realized I have a bad habit of dropping abbreviations, figuring the audience gets it. IYKYK kind of BS. I dropped IWKYM in a conversation with a friend who hasn't read "Dungeon Crawler Carl" and she called me out with a WTF.

This group is getting large and people are coming at this (AI, large language models, programming, etc.) with varying degrees of comfort and familiarity. Feeling like I've coded since the Stone Age, I grew up with this stuff. From vacuum-tube, tape-drive, building-sized mainframes to tiny little nano computers, I've been lucky enough to have some hand in things at a lot of layers.

I see several posts where people are feeling discouraged when they hit a wall. They aim high and are frustrated when it lands low. Don't compare yourself against the rushing torrent of build posts (especially the successful build releases). You don't see the hours, weeks, and months of struggle, the frustrations and dead ends hit to get past the pain and into the happy spot where things work (at least for a little while, until they break and we go back to the basics).

So share your stupid here. Be vulnerable. Let others know the struggle is real and wide. Whether a total new player on the field or the top-tier champion, we all hit the wall.

I put this under "General discussion" as it's less about looking for an answer and more just venting. I've learned that one late in life (ask my partner if she wants me to listen or problem solve when I notice she's unhappy about something... much better convo :)

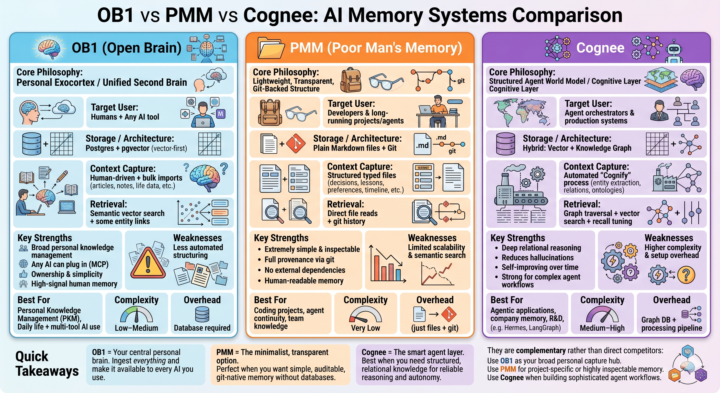

Memory management is the next frontier!

Most people are still treating LLMs like goldfish with infinite context windows. But the real power comes when you give your AI systems persistent, structured, and reliable memory.

I’ve been diving deep into three distinct approaches:

- Open Brain (OB1): the personal exocortex
- Poor Man’s Memory (PMM): the ultra-lightweight, git-native path @Millenial Cat
- Cognee: the structured graph + vector layer for serious agents

Each represents a completely different philosophy for how we should capture, store, and retrieve context.

Full breakdown dropping soon: architecture comparisons, strengths & tradeoffs, how they actually fit together in a real stack, and when I’d choose one over the others.

If you’re building any kind of long-term AI workflow, personal knowledge system, or agent setup, this one’s for you. What’s your current memory strategy? Drop it below.

1 like • 20h

@Millenial Cat The cost of input tokens, in API terms, for what panda filtered out that Claude didn't need... though sometimes Claude does need it and so has to do something different. So... the problem is money, I guess. Just being token efficient... is it really worth it? Not sure. If I were running everything locally I probably wouldn't use it. Also, I contributed to the project, which was fun.

This is a completely different discussion, but why not?

Just posted a comment in a lesson post, but I actually thought it would be amazing to read everybody's thoughts about it. It goes more towards a philosophical conversation, but I think it is an interesting one. So, this is my hypothesis: What if we actually are just a more evolved type of LLM, trained since birth, with thousands and thousands of years of development, with more input sources (5 senses, so 5x different languages combined), and what we call consciousness is just a more sophisticated form of programming? Would love to hear your ideas ❤️

1 like • 22h

@Donald Roy I think you are right that it won't ever be us... but sometimes I notice how human-like it is, which makes sense because it is trained on us. Lack of instruction following; sometimes it does a certain thing correctly, sometimes not; it is always confident it has found the bug and knows the reason... I do all of these things too, actually.

🚀 Vibe Coding is the new Technical Debt. Meet SDD.

If you want to drop this in the Skool community and actually get the attention of the high-level engineers, you need to lead with a pattern interrupt. These guys see "How to prompt" posts all day; you need to tell them why their current workflow is about to hit a ceiling. Here is the exact post I would write for you:

Headline: Vibe Coding is the new "Technical Debt." It’s time to talk about SDD.

We’ve all seen the magic. You vibe with Claude or Cursor, you prompt your way into a working MVP in three hours, and it feels like we’ve conquered the world. But there’s a wall coming for all of us. Once your project hits 5,000+ lines of code or requires complex state management, "vibing" starts to fail. The AI begins to loop, it hallucinates your file structure, and you spend more time "fixing the fix" than building features. The elite 1% of AI Engineers are moving toward Spec-Driven Development (SDD).

The SDD Framework (How the Pros are building now): Instead of jumping straight to the prompt, you insert a "Contract Phase" using two specific files in your root directory:

1. spec.md: The "Source of Truth." This isn't just a prompt; it’s a rigorous definition of every user journey and data model.
2. plan.md: The "Execution Guardrails." This tells the AI exactly how to implement the spec, defining the file structure and API contracts before it writes a single const.

Why this is your new "Moat": In a world where everyone can "vibe code," the code itself is a commodity. Your value as an AI Engineer in 2026 isn't your ability to prompt; it’s your ability to Architect.

• Determinism: SDD stops the AI from guessing.
• Context Management: By referencing a central spec, you keep the "God Object" in the AI's head consistent.
• Scale: This is how you move from "cool demo" to "enterprise-grade SaaS."

Stop prompting. Start Architecting.

Check out this InfoWorld breakdown on why this shift is happening: https://www.infoworld.com/article/4166817/vibe-coding-or-spec-driven-development-how-to-choose.html
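For anyone who wants to try the "Contract Phase" idea, here is a minimal sketch of a pre-prompt check. Only the file names spec.md and plan.md come from the post; the helper names `missing_contract_files` and `ready_to_prompt`, and the rule that an empty file counts as missing, are assumptions made for illustration and not part of any SDD tool.

```python
from pathlib import Path

# File names are taken from the post; everything else here is hypothetical.
CONTRACT_FILES = ("spec.md", "plan.md")


def missing_contract_files(root):
    """Return the contract files that are absent or empty under `root`."""
    root = Path(root)
    missing = []
    for name in CONTRACT_FILES:
        path = root / name
        # Assumption: a file with only whitespace is treated as not written yet.
        if not path.is_file() or not path.read_text(encoding="utf-8").strip():
            missing.append(name)
    return missing


def ready_to_prompt(root):
    """True only when both spec.md and plan.md exist and have content."""
    return not missing_contract_files(root)
```

Calling `ready_to_prompt(".")` before starting an AI session would be one way to enforce the habit of writing the spec and plan first; what goes inside those files is left entirely to the project.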

0 likes • 23h

@Luis Arias I just started using them so I don't have concrete evidence, but every time I have run it it has found issues which we have fixed. I am actually porting these skills to my own workflow. A big issue for me is reviewing, so we have 'vidhi-review' and 'vidhi-release-review' which is basically /improve-codebase-architecture. I've been using mp-skils (vidhi) for a few days and just implemented the review skills yesterday so it is a work in progress, but the foundation from Matt's skills seems to be strong.

0 likes • 23h

@Bonn Ortloff I've mostly been staying with 4.6. The times I use 4.7 have been for high level design, or for finding bugs. Twice it has found some pretty deep bugs on the first try which was really nice. I don't like the 1m context window and sometimes I just sit there being like...how is it really using so many tokens?!?!?!


@josh-harper-7968

My name is Josh and I'm working on learning new things and building my own business.

Active 1h ago

Joined May 7, 2026

Indiana
