Memberships

AITECH Institute

108 members • Free

Ai Automation On Premises

2.6k members • Free

Merkle Entrepreneurs

2.6k members • Free

Voodoo Motorworks Hub

17 members • Free

Business Builders Club

8k members • Free

Citizen Developer

133 members • Free

Tech Careers

46 members • Free

Next Level Developers

29 members • Free

Software Engineering

745 members • Free

23 contributions to Software Engineering

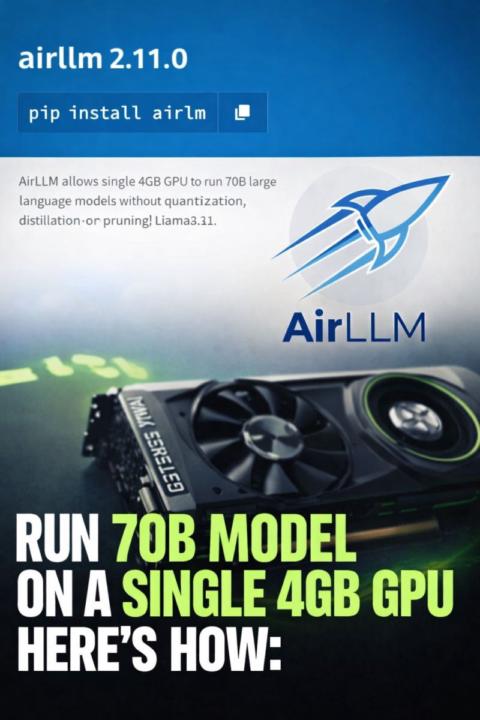

AI Is Getting Lighter, Not Just Bigger

You don’t need expensive GPUs to experiment with large models anymore. Tools like AirLLM are changing the game.

Running a 70B model on a 4GB GPU would’ve sounded unrealistic not long ago. Now it’s possible, and even models at the scale of Llama 3.1 405B can be handled on minimal VRAM with the right approach.

The idea is simple but powerful: instead of loading the entire model into memory, it processes one layer at a time (load, compute, discard) and repeats. That one shift makes large-scale models accessible on everyday hardware. Just smarter inference design.

It already works with popular model families like Llama, Qwen, and Mistral, and runs across Linux, Windows, and macOS. And yes, it’s open source.

This is the kind of innovation that actually matters: not just building bigger models, but making them usable for more people. Because in the end, progress in AI isn’t only about scale; it’s about accessibility.

If you're working in ML or building with LLMs, this is worth paying attention to.
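To make the load, compute, discard loop concrete, here is a minimal toy sketch in plain Python. It is not AirLLM's code or API: the file layout, the `save_layers`, `apply_layer`, and `layered_forward` helpers, and the tiny 2x2 "layers" are all assumptions made up for illustration.

```python
import pickle
import tempfile
from pathlib import Path


def save_layers(layers, directory):
    """Write each layer's weights to its own file (a stand-in for a sharded checkpoint)."""
    paths = []
    for i, weights in enumerate(layers):
        path = Path(directory) / f"layer_{i}.pkl"
        with open(path, "wb") as f:
            pickle.dump(weights, f)
        paths.append(path)
    return paths


def apply_layer(weights, vector):
    """Toy layer: matrix-vector product followed by ReLU."""
    return [max(0.0, sum(w * x for w, x in zip(row, vector))) for row in weights]


def layered_forward(layer_paths, vector):
    """Run the forward pass while holding only one layer in memory at a time."""
    for path in layer_paths:
        with open(path, "rb") as f:
            weights = pickle.load(f)           # load
        vector = apply_layer(weights, vector)  # compute
        del weights                            # discard before loading the next layer
    return vector


with tempfile.TemporaryDirectory() as tmp:
    toy_layers = [
        [[1.0, 0.0], [0.0, 1.0]],   # identity
        [[2.0, 0.0], [0.0, -1.0]],  # double the first value, negate the second
    ]
    paths = save_layers(toy_layers, tmp)
    result = layered_forward(paths, [3.0, 4.0])
    print(result)  # [6.0, 0.0]
```

Peak memory here is bounded by the largest single layer rather than the whole model. The likely cost is speed, since every layer is read from disk on each forward pass.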


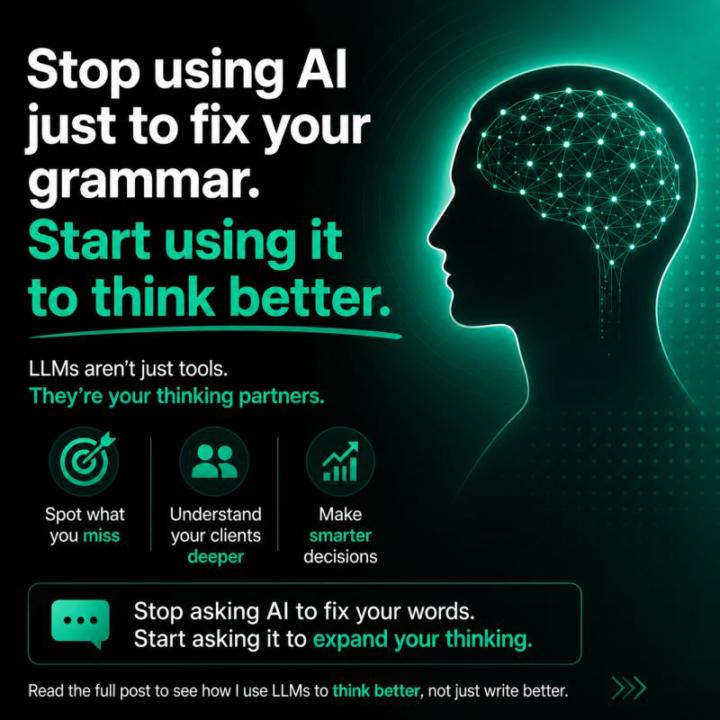

Most People Are Wasting AI's Real Power

Stop using AI just to fix your grammar. Start using it to think better.

Most people use LLMs like ChatGPT as fancy spell-checkers: correcting sentences, polishing emails, fixing typos. That's using a supercomputer as a calculator.

Here's how I actually use it. After every client conversation, I feed the entire chat history into an LLM and ask:
• "What impression did I make on this client?"
• "What are their unstated concerns?"
• "Where did I miss addressing their pain points?"
• "What decision are they really trying to make?"

The LLM analyzes patterns I can't see. It picks up on hesitations, repeated concerns, and underlying needs that I might have missed in the moment.

Then I take it further: I ask it to analyze the conversation from the perspective of a seasoned IT business executive. Suddenly, I'm getting insights on how to approach my next interaction, what objections to address proactively, and how to position my value differently.

This isn't about generating content. It's about:
→ Better decision-making
→ Deeper client understanding
→ Strategic analysis of my own performance

Think of it this way: you have a panel of experts (doctors, engineers, business strategists) sitting in front of you 24/7, asking "What can I help you solve?" Are you going to ask them to proofread your email? Or are you going to leverage their analytical power to transform how you work?

The revolution isn't in what AI can write for you. It's in what AI can help you understand.

How are you using LLMs beyond content generation?


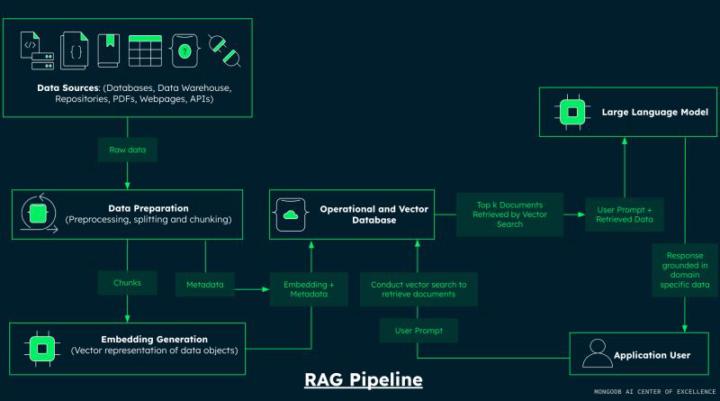

Turn Scattered Data into Instant Answers with RAG

Your team is wasting 15-20 hours per week searching for information. Here's how AI-powered RAG systems fix this (technical breakdown):

The Problem I See Everywhere:
→ Information scattered across PDFs, Docs, Notion, Confluence
→ Employees can't find policies, procedures, or past decisions
→ Customer support repeating the same answers
→ New hires take forever to get up to speed
→ Knowledge dies when people leave

Sound familiar?

The Solution: RAG Pipeline
RAG (Retrieval-Augmented Generation) = Your institutional knowledge, instantly searchable.

How It Works:
1️⃣ Document Loaders - Ingest all your data (PDFs, Word, CSV, web pages)
2️⃣ Text Chunking - Break into optimal, meaningful pieces
3️⃣ Embeddings - Convert to numerical vectors for semantic search
4️⃣ Vector Store - Database that understands meaning, not just keywords
5️⃣ Retriever - Finds the most relevant info using similarity matching
6️⃣ LLM - Generates answers grounded in YOUR data

Two Key Flows:
📥 One-time: Data → Process → Store
📤 Ongoing: Question → Search → Answer

What This Means for Your Business:
✅ Customer support bot that knows ALL your product docs
✅ Internal assistant for compliance, HR, operations
✅ Onboarding tool that answers new hire questions 24/7
✅ Research assistant for analyzing company reports

Far fewer hallucinations, because answers are grounded in retrieved documents. No generic answers. Just YOUR data, instantly accessible.

The Best Part:
No expensive model retraining. No complex integrations. Deploy in weeks, not months.

I help businesses implement production-ready RAG systems. Comment "INTERESTED" or DM me if you want to discuss how this could work for your organization.
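The two flows above can be sketched end to end with nothing but the Python standard library. This is a toy under loud assumptions: `embed` uses plain word counts instead of a real embedding model, `VectorStore` is an in-memory list rather than a vector database, the sample documents are invented, and the final LLM call is left out, so the sketch stops at the prompt that would be sent.

```python
import math
import re
from collections import Counter


def chunk(text, max_words=12):
    """Text chunking: split into pieces of at most max_words words."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def embed(text):
    """Toy embedding: word counts. A real system would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class VectorStore:
    """In-memory stand-in for a vector database (hypothetical, for illustration)."""

    def __init__(self):
        self.items = []

    def add(self, text):
        self.items.append((embed(text), text))

    def search(self, query, k=2):
        q = embed(query)
        ranked = sorted(self.items, key=lambda item: cosine(q, item[0]), reverse=True)
        return [text for _, text in ranked[:k]]


def build_prompt(question, context_chunks):
    """Assemble the prompt an LLM would receive; the LLM call itself is omitted."""
    context = "\n".join(f"- {c}" for c in context_chunks)
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


# One-time flow: Data -> Process -> Store (sample documents are made up)
documents = [
    "Employees accrue 20 days of paid vacation per year.",
    "Expense reports must be submitted within 30 days of purchase.",
    "The VPN client is required for remote access to internal tools.",
]
store = VectorStore()
for doc in documents:
    for piece in chunk(doc):
        store.add(piece)

# Ongoing flow: Question -> Search -> Answer
question = "How many vacation days do employees get?"
top = store.search(question, k=1)
prompt = build_prompt(question, top)
print(prompt)
```

Swapping `embed` for a real embedding model and `VectorStore` for a real vector database would leave the rest of the flow unchanged, which is the main point of separating the six steps.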


🚨 Hidden LLM Issue That Can Break Your AI SaaS

While auditing a voice-driven AI eCommerce system, I discovered something alarming:

👉 A single request consumed 37K+ tokens, way beyond limits.

⚠️ The issue wasn’t the user input. It was poor architecture:
• Full MongoDB documents returned in tool responses
• Entire conversation history sent every time
• Slightly heavy system prompts

Result? Token usage exploded → costs increased → system became unstable

🛠️ What fixed it:
• Limited tool responses to essential fields (max 35 records)
• Smarter actions (combine steps into single calls)
• Context control (last 8 messages for chat, 5 for agent tasks)
• Reduced prompt size
• Proper error handling for TPM / 413 issues

📉 Outcome:
• Controlled token usage
• Stable performance
• Predictable billing
• Production-ready system

💡 Lesson: LLMs don’t become expensive by default. Bad architecture makes them expensive.

If you're building AI systems, focus on:
→ Context
→ Data flow
→ Token control

Curious: have you faced similar scaling issues in your AI apps?
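Two of the fixes above, slimming tool responses and capping conversation history, can be sketched in a few lines of Python. The limits (35 records, 8 and 5 messages) come from the post. Everything else is an assumption for illustration: the function names, the field names, the message format with a `role` key, and the choice to always keep system messages.

```python
MAX_RECORDS = 35                          # record cap mentioned in the post
HISTORY_LIMITS = {"chat": 8, "agent": 5}  # message caps mentioned in the post


def slim_tool_response(documents, fields, max_records=MAX_RECORDS):
    """Keep only the essential fields, and at most max_records documents."""
    return [
        {key: doc[key] for key in fields if key in doc}
        for doc in documents[:max_records]
    ]


def trim_history(messages, mode="chat"):
    """Keep system messages plus the last N non-system messages for the given mode."""
    limit = HISTORY_LIMITS[mode]
    system = [m for m in messages if m["role"] == "system"]
    rest = [m for m in messages if m["role"] != "system"]
    return system + rest[-limit:]


# Hypothetical full database documents, as a tool might return them unfiltered
products = [
    {"_id": i, "name": f"Item {i}", "price": 9.99, "description": "x" * 500}
    for i in range(100)
]
slim = slim_tool_response(products, ["name", "price"])
print(len(slim), slim[0])

# Hypothetical conversation: one system prompt followed by 20 user messages
history = [{"role": "system", "content": "You are a shop assistant."}] + [
    {"role": "user", "content": f"msg {i}"} for i in range(20)
]
chat_context = trim_history(history, "chat")
agent_context = trim_history(history, "agent")
print(len(chat_context), len(agent_context))
```

Applying both functions before every model call keeps the request size roughly constant no matter how long the conversation runs or how large the underlying documents are.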


My presentation

Hi everyone, my objective is to learn how to design a system and create each part of it. I joined this community to learn about those subjects and get some feedback in the process.


@ibrahim-bajwa-5271

Technical founder building SaaS products. Background in full-stack development (Next.js/React). Focused on scalable architecture and AI integrations.

Active 2h ago

Joined Nov 24, 2025

Islamabad, Pakistan
