
Memberships

3 contributions to Agent Zero

Issue with Agent Zero

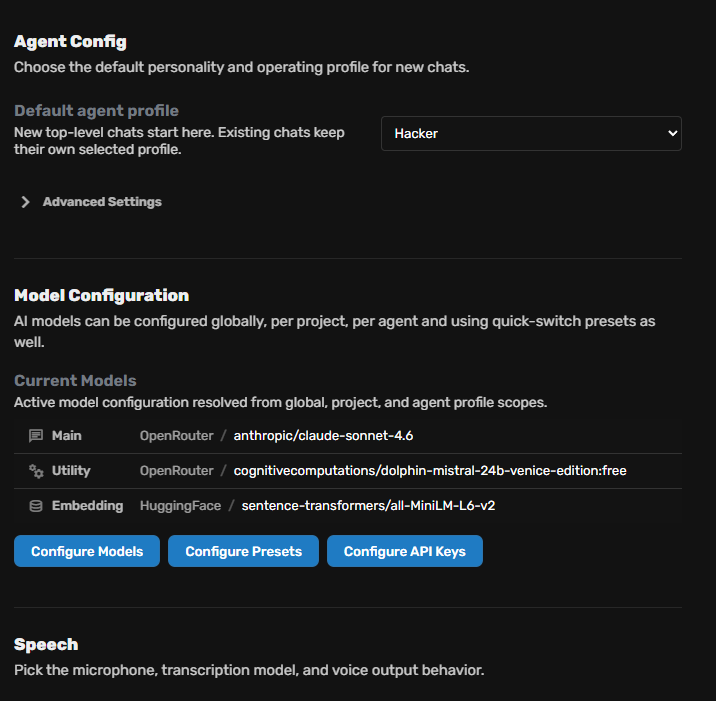

Hello everyone, I’m currently facing an issue with Agent Zero. My LLM provider is OpenRouter, and I still have available credits on my account. However, I keep getting the following error:

litellm.exceptions.RateLimitError / OpenAIException - You exceeded your current quota, please check your plan and billing details

From my understanding, Agent Zero seems to be calling the OpenAI API directly instead of routing everything through OpenRouter. In my configuration:

- Main model: OpenRouter / anthropic/claude-sonnet-4.6
- Utility model: OpenRouter / cognitivecomputations/dolphin-mistral-24b-venice-edition:free

Could someone please help me understand why this is happening and how to fix it? Thanks in advance.

A slightly more direct and natural version:

Hello everyone, I’m having an issue with Agent Zero. My provider is OpenRouter, and I still have enough credits, but I keep getting this error saying that I exceeded my OpenAI quota. It looks like Agent Zero may be calling OpenAI directly instead of using OpenRouter for all requests. My current config is:

- Main: OpenRouter / anthropic/claude-sonnet-4.6
- Utility: OpenRouter / cognitivecomputations/dolphin-mistral-24b-venice-edition:free

Has anyone faced this before or knows how to fix it? Thanks.

The second one is better for Discord, GitHub, or a support forum.

litellm.exceptions.RateLimitError: litellm.RateLimitError: RateLimitError: OpenAIException - You exceeded your current quota, please check your plan and billing details. For more information on this error, read the docs: https://platform.openai.com/docs/guides/error-codes/api-errors.

Traceback (most recent call last):
File "/opt/venv-a0/lib/python3.12/site-packages/litellm/llms/openai/openai.py", line 991, in async_streaming
headers, response = await self.make_openai_chat_completion_request(
^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^
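The error names OpenAIException and links to OpenAI's quota docs, which suggests (but does not prove) that at least one request is going to OpenAI rather than OpenRouter. A quick way to reason about it is to list every model slot and check its provider. The sketch below is a minimal illustration, not Agent Zero's actual settings code: the `ModelSlot` class, the helper functions, and the extra "embedding" slot are all hypothetical, and the "provider/model" string follows LiteLLM's naming convention (e.g. `openrouter/anthropic/claude-sonnet-4.6`), assuming Agent Zero builds its model strings that way.

```python
from dataclasses import dataclass


@dataclass
class ModelSlot:
    name: str      # hypothetical slot name, e.g. "main", "utility", "embedding"
    provider: str  # provider as chosen in the settings UI
    model: str     # model id as entered in the settings UI


def litellm_model_string(slot: ModelSlot) -> str:
    """Build a LiteLLM-style "provider/model" string (assumed mapping)."""
    return f"{slot.provider.lower()}/{slot.model}"


def slots_not_on_openrouter(slots: list[ModelSlot]) -> list[str]:
    """Return the names of slots whose provider is not OpenRouter."""
    return [s.name for s in slots if s.provider.lower() != "openrouter"]


# Main and utility match the post; "embedding" is a hypothetical extra slot.
config = [
    ModelSlot("main", "OpenRouter", "anthropic/claude-sonnet-4.6"),
    ModelSlot(
        "utility",
        "OpenRouter",
        "cognitivecomputations/dolphin-mistral-24b-venice-edition:free",
    ),
    ModelSlot("embedding", "OpenAI", "text-embedding-3-small"),
]

for slot in config:
    print(f"{slot.name:>9} -> {litellm_model_string(slot)}")

# Any slot listed here would call its own provider with its own API key.
print("Not routed via OpenRouter:", slots_not_on_openrouter(config))
```

On a real setup, a non-empty result would point at the slot worth switching to OpenRouter; an empty result would suggest the cause lies elsewhere.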


Agent Zero v1.12 is live — Desktop Runtime + Agent Habitat 🖥️

Hey everyone, v1.12 is now live. Agent Zero now has a full Linux Desktop Runtime inside the Web UI. You get:

• LibreOffice: Impress, Calc, Writer
• Terminal inside the Agent Zero workspace
• File Browser for searching and managing files
• Native Browser with multi-tab workflows and extensions
• Time Travel for workspace history and recovery

But here is the really exciting part: you can now run other CLI agents inside the same workspace as Agent Zero. From the Desktop terminal, you can install:

• Claude Code
• OpenAI Codex
• Cursor Agent

Plus Gemini CLI, Aider, Goose, OpenHands, Qwen Code, and others (full list in the Desktop README).

That means Agent Zero can work alongside your favorite CLI agents in the same visible Linux environment. Give Agent Zero the goal and ruleset, and it can help coordinate the work, inspect files, review outputs, run tools, and keep progress moving without you having to babysit every step.

Update Agent Zero: Settings > Update > Open Self Update > Restart and Update

After updating, open the Desktop and check the README. It includes install commands for the most popular CLI agents and safety tips.

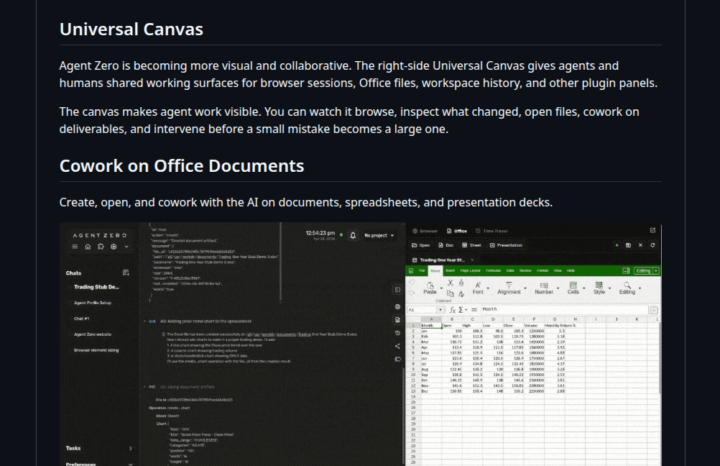

A0 v1.10 is HUGE! (Native Browser, Time Travel, Office Canvas) ⚡

Hey everyone, version 1.10 is finally here, and it fundamentally changes how you interact with Agent Zero.

What’s new?

- The Universal Canvas: a brand-new right-side panel where you can cowork with your agent.
- Native Browser: watch the agent browse the web live. You can even use the new Annotation Mode to visually select UI elements and tell the agent to fix them.
- Office Magic: work on Word, Excel, and PowerPoint files natively. The agent can even generate real Excel charts for you.
- Time Travel: messed up a file? The workspace now has a snapshot history. Diff, preview, and revert changes easily. Powered by Agent Zero's Space Agent.
- OpenAI OAuth: connect your existing OpenAI/Codex subscription so you don't have to burn API credits.

Don't forget the CLI Connector 💻

If you want the agent to work on your local machine's files while keeping its code execution safely inside Docker, install the CLI (requires v1.9+):

- Mac/Linux: `curl -LsSf https://cli.agent-zero.ai/install.sh | sh`
- Windows: `irm https://cli.agent-zero.ai/install.ps1 | iex`

(Ask the agent to use the a0-setup-cli skill if you need help.)

Update Agent Zero: Settings > Update > Open Self Update > Restart and Update

Go build something awesome. See you all tomorrow, April 29th, at 3PM UTC in the Skool Community Call. Join us here: https://www.skool.com/live/DlyvNKHbyWw


@assi-stanislas-seka-5548

Happy to join! Based in Paris. Native French speaker, improving my English daily. Here to learn, grow, and connect with great people!


Joined Apr 11, 2026

Paris
