
Owned by Tom

We help founders build AI content systems so they stop being the bottleneck in their own marketing. No hype. Just what works. 👽

Memberships

useful AI

8.8k members • Free

The AI Advantage

120.2k members • Free

AI Automation Agency Hub

313.4k members • Free

AI Automation (A-Z)

153.2k members • Free

AI Automation Society

346.3k members • Free

Skoolers

193.2k members • Free

16 contributions to AI Automation Society

I Tried Something Instead of Explaining It…

Lately I’ve noticed something while talking to business owners. Whenever I explain ideas (websites, funnels, automation, etc.), it makes sense… but it doesn’t really click. People understand it, but they don’t fully feel the difference.

So I tried something different. Instead of explaining, I started building small demos based on their business. Not full projects. Not anything crazy. Just something simple where they can actually see:

“Oh… this is how it would look.”
“This is how a user would move through it.”

And honestly, the reaction is completely different. Way more clarity. Way better conversations.

Now I’m curious: if someone showed you a live, improved demo of your own website or flow, would that be more helpful than just advice? Or do you prefer just getting feedback?

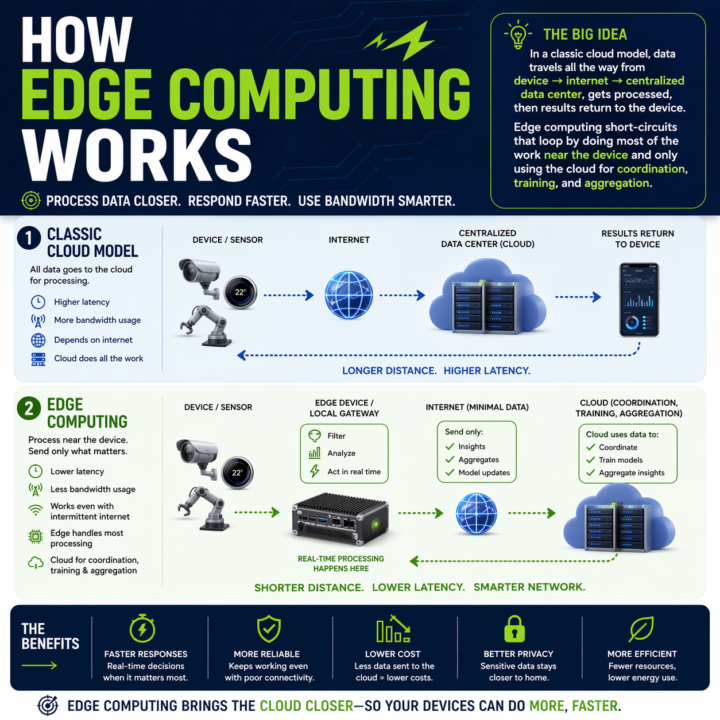

Your Claude API call is eating 1.2 seconds. Here is when that stops being acceptable.

Every automation you build has a round trip baked in: a Claude API call, an OpenAI call, an n8n webhook, a vector DB lookup. Data leaves the device, travels to a data center, gets processed, and comes back. On a good day a cached Claude call runs 600ms to 1.2s. On a bad day you are watching a spinner.

Where that round trip breaks:

- Real-time perception in vehicles or robotics
- Industrial control loops that cannot wait on a network
- Anywhere connectivity is spotty or intermittent
- High-volume sensor data, where shipping everything to the cloud burns bandwidth and budget

Edge changes the math. Compute runs where the data is generated. Local work stays local. The cloud only sees what needs cross-site context.

The split that is emerging:

- Edge: real-time inference, event detection, filtering, local control
- Cloud: model training, cross-site analytics, long-term storage, heavy compute

On-device SLMs (small language models) are usable now. Llama 3.1 8B on an M-series Mac via Ollama hits sub-100ms first token. Haiku-class reasoning is running on phones with NPUs.

If your automation touches physical systems, live audio, live video, or anything latency-sensitive, you have a real placement decision to make: edge, cloud, or hybrid.

The pattern I keep reaching for: route by cost of latency. If a one-second delay breaks the experience, run it locally. If the step needs cross-context memory or a frontier model, send it up. Cheap decisions at the edge, expensive ones in the cloud, with the edge doing the filtering so the cloud only sees signal.

The architecture question has changed. It is no longer "which cloud model do I call," but "where does each step of this pipeline need to run."

What is running in your stack right now that should not be making a cloud round trip? What is stopping you from moving it?
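The "route by cost of latency" rule can be sketched in a few lines. This is a minimal illustration, not a production router: the `Step` fields, the `place` and `edge_filter` helpers, and the 1,200 ms cutoff are hypothetical choices for the example, with the cutoff borrowed from the upper end of the round-trip range quoted above. The order of the checks is a design choice: a tight latency budget wins over the need for cross-context, so a step that has both stays local in this sketch.

```python
from dataclasses import dataclass
from typing import List, Literal

Placement = Literal["edge", "cloud"]

# Assumption: worst-case cloud round trip, taken from the 600ms to 1.2s range in the post.
CLOUD_ROUND_TRIP_MS = 1200.0


@dataclass
class Step:
    """One step of a pipeline (hypothetical shape, for illustration only)."""
    name: str
    latency_budget_ms: float   # how long this step can wait before the experience breaks
    needs_cross_context: bool  # needs cross-site memory or a frontier model


def place(step: Step) -> Placement:
    """Route a step by the cost of latency."""
    # A hard latency budget wins: if a cloud round trip would blow it, stay local.
    if step.latency_budget_ms < CLOUD_ROUND_TRIP_MS:
        return "edge"
    # Latency is affordable, so send steps that need cross-context or a frontier model up.
    if step.needs_cross_context:
        return "cloud"
    # Everything else is a cheap decision: keep it at the edge.
    return "edge"


def edge_filter(readings: List[float], threshold: float) -> List[float]:
    """Drop low-magnitude readings locally so the cloud only sees signal."""
    return [r for r in readings if abs(r) >= threshold]


if __name__ == "__main__":
    pipeline = [
        Step("detect_obstacle", latency_budget_ms=50, needs_cross_context=False),
        Step("weekly_cross_site_report", latency_budget_ms=60_000, needs_cross_context=True),
        Step("tag_local_event", latency_budget_ms=5_000, needs_cross_context=False),
    ]
    for step in pipeline:
        print(f"{step.name} -> {place(step)}")
    # Only readings at or above the threshold would be shipped upstream.
    print(edge_filter([0.1, 2.5, -3.0, 0.4], threshold=1.0))  # [2.5, -3.0]
```

Running it prints `edge`, `cloud`, `edge` for the three steps: the obstacle check cannot wait on a network, the cross-site report can, and the local tagging step has slack but no reason to leave the device.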

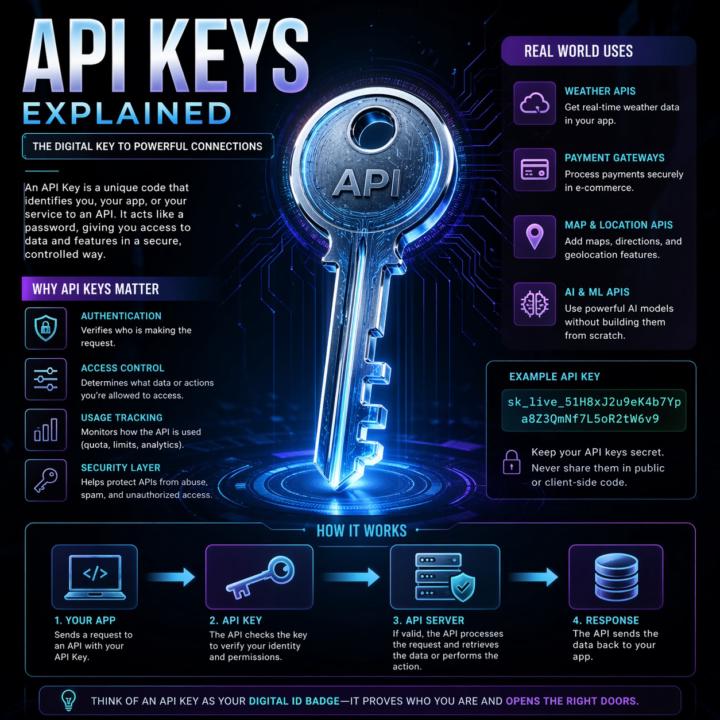

APIs, explained the way I explain them to clients

Most automation problems I see trace back to a fuzzy mental model of what an API actually is. So here's the frame I use with clients.

An API is a remote control for software. Your app presses a button (sends a request). Another app does something and sends back a result (a response). You don't see how the other app works inside. You just follow the rules printed on the buttons (the docs). That's it. That's the whole concept.

Two analogies that work in client calls:

Restaurant menu. The menu lists what you can order and how to ask for it. The kitchen is hidden. The meal is the response.

Light switch. Flip the switch (request). The wiring, grid, and power plant are hidden. The light turns on (response).

Same idea either way: clear inputs, clear outputs, hidden complexity.

The actual call pattern:

1. The client asks (your app, browser, script).
2. The request goes out with a URL, a method (GET, POST, etc.), and any data the server needs.
3. The server does the thing.
4. The response comes back, usually as JSON.

Break the rules in the docs (wrong URL, wrong method, missing data) and you get an error, not data.

Why this matters for builders:

- Reuse beats rebuild. Use Stripe's API instead of building payments from scratch.
- Complexity stays hidden. You don't need to know how Twitter stores tweets to pull the last 20.
- Access is controlled. APIs decide what's exposed, who can call it, and how often. Security still depends on the implementation, but the boundary exists by design.
- Apps mix APIs like ingredients: maps, payments, email, auth, all stitched together.

When two pieces of software talk in a structured, agreed way, they're using an API. Every n8n node, every Claude Code tool call, every trigger: all APIs under the hood.

What analogy do you use when a non-technical client asks what an API is? Share it in the comments so other builders can see what lands.

Highly recommended related information: check out @Michael Wacht's Daily Dose: https://www.skool.com/ai-automation-society-plus/ai-terms-daily-dose-api-use?p=5c08d0bf
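To make the four-step call pattern concrete, here is a minimal, self-contained sketch using only Python's standard library. It is not any real service's API: the routes `/status` and `/orders`, the `item` field, and the names `TinyAPI`, `start_server`, and `call` are hypothetical. The local server plays the hidden kitchen, `call` plays the client, and the last two requests show what breaking the documented rules looks like: an error status and message instead of data.

```python
import json
import threading
import urllib.error
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer
from typing import Optional, Tuple


class TinyAPI(BaseHTTPRequestHandler):
    """The 'kitchen': callers only see the documented routes, never this code."""

    def _send_json(self, status: int, payload: dict) -> None:
        body = json.dumps(payload).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def do_GET(self) -> None:
        # Hypothetical documented route: GET /status
        if self.path == "/status":
            self._send_json(200, {"status": "ok"})
        else:
            self._send_json(404, {"error": "unknown route"})

    def do_POST(self) -> None:
        # Hypothetical documented route: POST /orders with a JSON body like {"item": "..."}
        if self.path != "/orders":
            self._send_json(404, {"error": "unknown route"})
            return
        length = int(self.headers.get("Content-Length", 0))
        try:
            item = json.loads(self.rfile.read(length))["item"]
        except (ValueError, KeyError, TypeError):
            # The caller broke the rules: send an error, not data.
            self._send_json(400, {"error": "body must be JSON with an 'item' field"})
            return
        self._send_json(201, {"received": item})

    def log_message(self, format: str, *args) -> None:
        pass  # silence per-request logging for the demo


def start_server() -> Tuple[HTTPServer, str]:
    """Run the API on a free local port (port 0) in a background thread."""
    server = HTTPServer(("127.0.0.1", 0), TinyAPI)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server, f"http://127.0.0.1:{server.server_address[1]}"


# An opener with an empty ProxyHandler, so proxy env vars don't intercept local calls.
_opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))


def call(base: str, method: str, path: str, data: Optional[dict] = None) -> Tuple[int, dict]:
    """Client side: send URL + method + optional JSON data, get back (status, JSON)."""
    body = json.dumps(data).encode("utf-8") if data is not None else None
    req = urllib.request.Request(
        base + path, data=body, method=method,
        headers={"Content-Type": "application/json"},
    )
    try:
        with _opener.open(req, timeout=5) as resp:
            return resp.status, json.loads(resp.read())
    except urllib.error.HTTPError as err:
        # 4xx/5xx responses still carry a JSON body explaining what went wrong.
        return err.code, json.loads(err.read())


if __name__ == "__main__":
    server, base = start_server()
    print(call(base, "GET", "/status"))                      # (200, {'status': 'ok'})
    print(call(base, "POST", "/orders", {"item": "pizza"}))  # (201, {'received': 'pizza'})
    print(call(base, "POST", "/orders", {"dish": "pizza"}))  # 400: missing 'item'
    print(call(base, "GET", "/menu"))                        # 404: not a documented route
    server.shutdown()
    server.server_close()
```

Pointing `call` at a real service works the same way in principle; that service's docs define which URLs, methods, fields, and auth headers it accepts.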

Looking for partner

Hi everyone, I can build and sell. I'm looking for a partner for growth.

🚀New Video: I Tested GPT 5.5 vs Opus 4.7: What You Need to Know

OpenAI just dropped GPT 5.5 and the benchmarks look strong against Opus 4.7, but benchmarks only tell part of the story. I ran four head-to-head experiments in Codex and Claude Code to see how the models actually compare on speed, cost, and output quality. The results were not what I expected.


@41683790

Building AI content systems for founders. neonaliens.com


Joined Apr 17, 2026
