Pinned

Want to start your own Skool community?

Use this link: Download the Skool App

Pinned

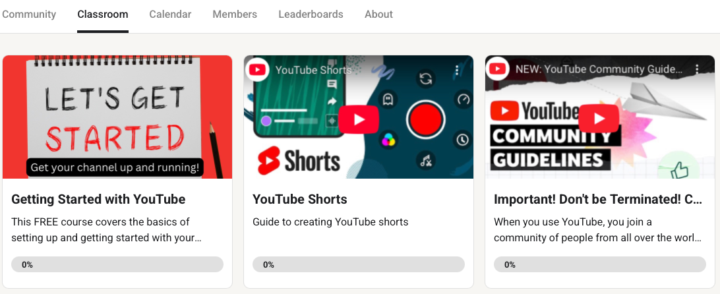

Check out the Classroom tab for Tutorials

I am continuing to add tutorials to the Classroom area of our Skool group. Currently, tutorials include:
1. Getting started on YouTube
2. Community Guidelines, so you can keep your account from being banned
3. YouTube Shorts
More to follow...

Pinned

Group Rules - Please Read - or risk being BANNED!

THIS COMMUNITY IS FOR PEOPLE WHO WANT TO LEARN ABOUT YOUTUBE. IT IS NOT FOR PEOPLE WHO ALREADY KNOW YOUTUBE AND ARE TRYING TO SELL THEIR STUFF. IF THAT’S YOU, THEN LEAVE BEFORE YOU GET BANNED!

❌NO Soliciting leads
❌NO Selling/promoting your agency services, products, groups, etc.
❌NO Bait posts - Ex: "Look at these results, DM me for more info."
❌NO Referral links, affiliate links, etc.
❌NO Pointless comments posted just to build your interaction score

✅General discussion
✅Sharing experiences from your journey
✅Networking with others
✅Finding accountability partners
✅Sharing feedback & ideas
✅Asking questions
✅Sharing your wins & progress
✅Sharing your insights
✅Supporting others

Real Feedback please

I've made some YouTube content, but getting views seems insanely difficult. I see channels with 10,000+ followers that have nothing but still images: no real content, just random slides. I know my channel is still barebones. Is that why nothing gets shown, or is it my thumbnails? Help please: https://youtu.be/VxUzxTXN4Ck?si=-_yUSRQqd7ddRiD-

YouTube’s AI deepfake detection opens up to Hollywood

YouTube announced that its AI-powered likeness detection tool, previously available only to a small, select group of creators, now extends to actors, athletes, musicians, and other public figures who might be impersonated, including figures who don’t even have YouTube channels. The expanded rollout was coordinated with major talent agencies CAA, UTA, and WME and works similarly to Content ID: it scans uploaded videos for simulated faces, then gives rights holders the option to request removal or flag the content for a privacy policy violation. Parody and satire are, of course, permitted. Audio detection is on the roadmap.

Why It Matters

The practical implication for most working creators is straightforward: if your content uses footage, images, or AI-generated representations of celebrities or public figures, that content is now subject to automated detection and potential removal by the rights holder. The reach of the system has meaningfully expanded in a single update.

The broader story is about precedent. YouTube is building Content ID for faces. The architecture that made it possible for major labels to manage their catalogs at scale is now being adapted for likeness rights, and that’s a significant structural expansion of who can control what appears on the platform. Creators building in adjacent niches (commentary, reaction, parody, fan content) should watch this one closely, because the enforcement boundaries are still being drawn and they’re being drawn quickly.

My thanks to TechCrunch for the above...


skool.com/youtube-academy

The Community for Entrepreneurs and Business Owners who want to grow their business via YouTube Marketing for Lead Generation and subscriber growth.
