
Pinned

🧭 Developers Are Using AI to Think Better, Not Just Type Faster, and Every Team Should Notice

A lot of people still describe AI in narrow productivity terms. It writes faster. It drafts faster. It autocompletes faster. Those gains are real, but they can understate what is actually changing for some of the most advanced users.

Developers, in particular, are increasingly using AI not simply to type faster, but to think better. The system helps frame the problem, explore alternatives, test assumptions, surface edge cases, and reduce the time spent circling around uncertainty before useful progress begins.

That matters far beyond software. It signals a broader shift in how professionals may begin using AI. The deepest time win may not come from faster output alone. It may come from shorter thinking loops, clearer framing, and less time lost wandering before the real work starts.

------------- Context -------------

Many work tasks are not slowed by execution as much as by ambiguity. A person knows something needs to be done, but they are still trying to figure out what the problem really is, what constraints matter, what direction makes sense, and what trade-offs will likely appear. That is thinking work. And thinking work often takes longer than the visible output it eventually produces.

In software development, this dynamic is especially visible. A coding problem may require understanding the intent, the structure, the failure mode, and the likely edge cases before writing anything meaningful. If AI can help a developer reason through those dimensions earlier, the time savings are not just in typing fewer lines. They are in reducing the loops of uncertainty that surround the task.

That is the broader lesson every team should notice. Most professionals do not only need faster execution. They need faster clarity. They need to get to a better problem frame sooner. They need to stop spending so much time in low-certainty wandering.

That is where AI as cognitive leverage becomes so interesting. It supports progress not only by producing, but by helping people think with more structure and less friction.


Pinned

It’s Hard to Feel Grateful and Angry at the Same Time

One thing I’ve learned the hard way: It’s really difficult to feel gratitude and anger at the same time. Not impossible. But difficult. Because whatever emotion you feed tends to shape the lens you see your life through.

When you stay stuck in frustration long enough, your brain starts scanning for more proof that things aren’t working. More proof that people are disappointing. More proof that you’re behind. And the scary part is… you’ll find it.

But gratitude shifts your focus completely. Not fake positivity. Not pretending hard things aren’t real. I mean intentionally zooming out long enough to remember:

• what’s still working
• what you’ve already overcome
• what opportunities are still in front of you
• who’s still in your corner
• how far you’ve actually come

The people who build great businesses and great lives aren’t the people who never get frustrated. They’re the people who don’t stay there. They know how to reset their perspective before resentment becomes their identity.

And honestly, this matters even more as entrepreneurs because this journey will give you endless reasons to focus on what’s broken. The algorithm changed. Sales slowed down. Someone copied your idea. A launch flopped. A partnership fell apart. People unsubscribed. Cool. Welcome to building something meaningful.

But if you lose your ability to access gratitude in the middle of the mess, this game gets really heavy really fast.

So here’s the question: What’s something in your life right now that you were once praying for… but have slowly started treating as normal?

Pinned

🔥 Quick Clarification: Which AI Advantage Community Should You Be In?

We've been getting a few questions in the community and inbox from members who are unsure about the difference between our communities and which one they should be in. Here's a quick breakdown to help you find your home base.

--------------------------------------------------

1. This Skool Community (Free)

You're already here. This is our free hub where our team and members share value, ask questions, and grow together.

What's inside:
- Free trainings and resources in the Classroom
- Ongoing community conversations and support
- The latest AI news and AIA updates
- Practical insights to help you grow with AI

Best for: Anyone exploring AI, building community connections, and staying current without a monthly commitment.

The Summit may be over, but this group isn't going anywhere.

--------------------------------------------------

2. AI Advantage Club (Paid Membership)

Our premium membership for members ready to go deeper and build their AI skillset consistently.

How you may already have access:
- VIP members: 30-day trial included
- Bootcamp members: 3 months included
- VIP + Bootcamp: 4 months free

What's inside:
- Advanced trainings and step-by-step guides
- "Hacks of the Week" you can apply immediately
- AI workflows and copy-and-paste prompt libraries
- Real business use cases and time-saving systems
- Ongoing implementation support
- A Technical Support Team for when you hit roadblocks
- New resources added regularly

Think of it as your AI gym membership: the place to train those AI muscles and really implement AI into your life and business.

Best for: Members ready to move past learning and into hands-on implementation with structured support.

Where is the AI Advantage Club? Right here: https://app.aiadvantage.com/login

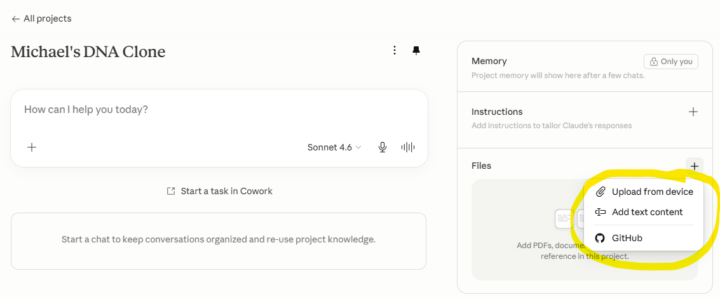

DNA document question in Claude

I do not have an option to link the Google DNA doc to my project in Claude. How do I get it to show up? As you will see in my highlight, the option is missing where the classroom example shows a drop-down. Please advise how I can solve this. I asked Claude, but after I went through the steps it still did not appear. Any input is appreciated!



skool.com/the-ai-advantage

Founded by Tony Robbins, Dean Graziosi & Igor Pogany - AI Advantage is your go-to hub to simplify AI and confidently unlock real & repeatable results
