Fine-Tuning Models for Task-Specific Jobs

A bit technical, but super important. Would you use a 400B model just to send an email? That's what a lot of people are doing right now, and it's a complete waste of compute and energy! What you should do instead is fine-tune a smaller model, say 8B in size.

Fine-tuning means further training a model on your own examples so it learns how you want it to respond (and picks up new knowledge), ideally without forgetting what it already knows.

- Take 100 of the best email replies you've written yourself.
- Put them in a spreadsheet.
- Use 2 columns: Prompt and Response.
- Prompt column: write the message you'd usually send to an AI when you need an email drafted.
- Response column: paste in the AI-style response containing your email, formatted exactly the way you want the model to master.
- Fine-tune your model with Unsloth on Windows & Linux, or MLX on macOS.

That's it! You've trained a small model to write your emails for you :)
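Once the spreadsheet is done, most fine-tuning tools want the examples as a JSONL file (one JSON object per line) rather than a spreadsheet. Below is a minimal Python sketch of that conversion, assuming you've exported the sheet as a CSV with exactly the headers `Prompt` and `Response`. The chat-style `messages` layout, the `train.jsonl`/`valid.jsonl` file names, and the 10% validation split are assumptions, so check what your Unsloth or MLX setup actually expects before training.

```python
import csv
import json
import random
from pathlib import Path


def rows_to_chat_records(rows):
    """Turn spreadsheet rows with 'Prompt' and 'Response' columns into chat-style records."""
    records = []
    for row in rows:
        prompt = (row.get("Prompt") or "").strip()
        response = (row.get("Response") or "").strip()
        if not prompt or not response:
            continue  # skip half-filled rows instead of training on empty text
        records.append({
            "messages": [
                {"role": "user", "content": prompt},
                {"role": "assistant", "content": response},
            ]
        })
    return records


def write_jsonl(records, path):
    """Write one JSON object per line (newlines inside emails are escaped by json.dumps)."""
    with open(path, "w", encoding="utf-8") as f:
        for record in records:
            f.write(json.dumps(record, ensure_ascii=False) + "\n")


def convert(csv_path, out_dir, valid_fraction=0.1, seed=0):
    """Read the exported CSV and write a shuffled train/valid split into out_dir."""
    # utf-8-sig also handles the byte-order mark that spreadsheet apps often add.
    with open(csv_path, newline="", encoding="utf-8-sig") as f:
        records = rows_to_chat_records(csv.DictReader(f))
    random.Random(seed).shuffle(records)  # fixed seed so the split is reproducible
    n_valid = max(1, int(len(records) * valid_fraction)) if len(records) > 1 else 0
    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)
    write_jsonl(records[n_valid:], out / "train.jsonl")
    write_jsonl(records[:n_valid], out / "valid.jsonl")
    return len(records) - n_valid, n_valid
```

For example, `convert("emails.csv", "data")` would write `data/train.jsonl` and `data/valid.jsonl` (the CSV name is hypothetical). Holding back a few examples for validation lets you see whether the model is actually learning your style or just memorizing the training set.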

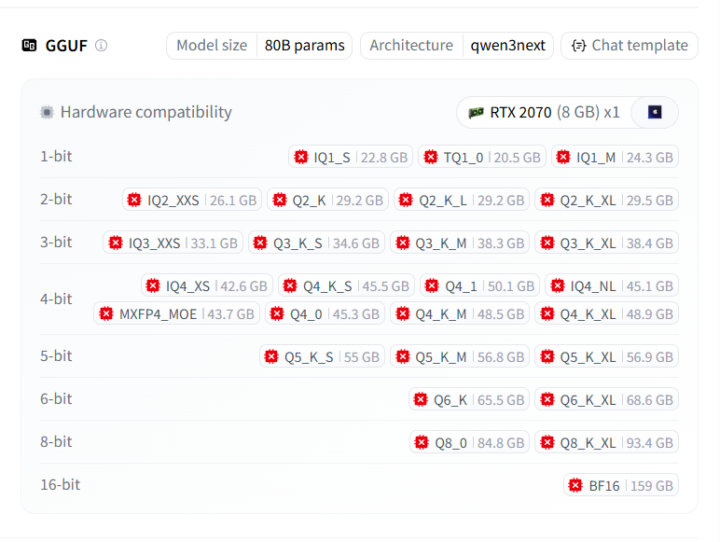

Qwen 3 Coder Next: An 80B Model That Can Run on Just 32GB of RAM!

Yes, you read that correctly, folks: Qwen has smashed this one out of the park. Thanks to the power of quantization (storing the model's weights in fewer bits, somewhat like compressing a file into a ZIP, except some precision is lost), we can shrink the model from 93GB to just 22GB while retaining roughly 90% of its intelligence. Since it's an 80B model, you'll still have a fantastic time even with a Q1 or Q2 version. I'm using Q2 K_M and it hasn't failed once. You too can run this incredible model completely locally, offline, and without paying a single dollar. We'll cover this in depth in tomorrow's live, so don't forget to tune in!
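The size numbers above follow from simple arithmetic: a model file is roughly parameters × bits per weight ÷ 8 bytes. Here's a small Python sketch of that back-of-the-envelope math. It ignores metadata and the tensors that quantizers often keep at higher precision, and the 6GB of headroom for context (KV cache) and the OS is an assumption, so treat the results as estimates rather than exact file sizes.

```python
def quantized_size_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight size in GB (1 GB = 1e9 bytes): params x bits / 8."""
    return params_billion * bits_per_weight / 8


def implied_bits_per_weight(file_size_gb: float, params_billion: float) -> float:
    """Invert the formula: average bits stored per parameter for a given file size."""
    return file_size_gb * 8 / params_billion


def fits_in_ram(file_size_gb: float, ram_gb: float, headroom_gb: float = 6.0) -> bool:
    """Rough check: weights plus assumed headroom (KV cache, OS, other apps) must fit in RAM."""
    return file_size_gb + headroom_gb <= ram_gb


if __name__ == "__main__":
    params = 80  # 80B parameters, per the post
    for size in (93, 22):  # file sizes quoted in the post, in GB
        print(f"{size} GB -> ~{implied_bits_per_weight(size, params):.1f} bits per weight")
    print(f"2-bit estimate: ~{quantized_size_gb(params, 2):.0f} GB")
    print("22 GB fits in 32 GB of RAM:", fits_in_ram(22, 32))
```

By this estimate, 22GB for 80B parameters works out to about 2.2 bits per weight, which lines up with a ~2-bit quant and still leaves some room to spare in 32GB of RAM.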

Using Terminal Agents like Claude Code

Quick one: if you're not using terminal-based agents yet, you're already behind. Here's why. Terminal agents:

- Are lightweight: the agent itself needs only minimal compute to run
- Can run for hours, if not days, without any human intervention (if you know what you're doing)
- Can work on multiple projects at once
- Let you walk away and come back once the build is complete

And so much more. Big question though: do I have to pay? No. That's why you're here :) You can use Ollama with Claude Code and get shit done! Here's how it works.

Claude Code is Anthropic's agentic coding tool that can read, modify, and execute code in your working directory. Open models can be used with Claude Code through Ollama's Anthropic-compatible API, so you can use models such as qwen3-coder, gpt-oss:20b, and others.

Install

Install Claude Code:

macOS / Linux:
curl -fsSL https://claude.ai/install.sh | bash

Windows (PowerShell):
irm https://claude.ai/install.ps1 | iex

Usage with Ollama

Claude Code connects to Ollama using the Anthropic-compatible API. Set the environment variables:

export ANTHROPIC_AUTH_TOKEN=ollama
export ANTHROPIC_BASE_URL=http://localhost:11434

Run Claude Code with an Ollama model:

claude --model gpt-oss:20b

Or set the environment variables inline:

ANTHROPIC_AUTH_TOKEN=ollama ANTHROPIC_BASE_URL=http://localhost:11434 claude --model gpt-oss:20b

Cloud models also work, and they work very well!

Note: Claude Code requires a large context window. We recommend at least 32K tokens.

Free. Unlimited coding. All at your disposal. Happy building!

PS. Do you want a live stream for this?
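Before launching Claude Code, it can help to confirm that Ollama's Anthropic-compatible endpoint actually answers. Here's a minimal Python sketch using only the standard library. It assumes Ollama is running at the default URL from the post, that the endpoint follows the Anthropic Messages API path (`/v1/messages`) and response shape, and that you've already pulled the model you name (`gpt-oss:20b` by default here). The token is just the placeholder value from the post.

```python
import json
import os
import urllib.request

# Same settings as the environment variables in the post, with the post's values as fallbacks.
BASE_URL = os.environ.get("ANTHROPIC_BASE_URL", "http://localhost:11434")
AUTH_TOKEN = os.environ.get("ANTHROPIC_AUTH_TOKEN", "ollama")


def build_request(model: str, prompt: str, base_url: str = BASE_URL) -> urllib.request.Request:
    """Build a Messages-API-style POST request aimed at the local Ollama server."""
    body = {
        "model": model,
        "max_tokens": 64,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/v1/messages",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            # Placeholder token sent as a bearer token; a local Ollama server isn't expected to check it.
            "Authorization": f"Bearer {AUTH_TOKEN}",
            "anthropic-version": "2023-06-01",
        },
        method="POST",
    )


def smoke_test(model: str = "gpt-oss:20b") -> bool:
    """Send one short prompt; return True if the server replies with any text content."""
    try:
        request = build_request(model, "Reply with the single word: ok")
        with urllib.request.urlopen(request, timeout=120) as response:
            reply = json.load(response)
    except (OSError, ValueError) as err:  # connection/HTTP errors, or a non-JSON body
        print(f"Request to {BASE_URL} failed: {err}")
        return False
    texts = [
        block.get("text", "")
        for block in reply.get("content", [])
        if block.get("type") == "text"
    ]
    print("Model replied:", " ".join(texts).strip())
    return any(t.strip() for t in texts)
```

With Ollama running, call `smoke_test()` (or `smoke_test("qwen3-coder")` for another model you've pulled). If it prints a reply, Claude Code should be able to reach the same URL; if the request fails, check that `ollama serve` is running and that your Ollama version includes the Anthropic-compatible API.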

skool.com/open-source-ai-builders-club

The #1 Club for all developers, builders and innovators in Open Source AI Models, Apps and FREE Alternatives to Paid & Expensive tools!
