
News Digest: AI in Education (April 2026)

The landscape of AI in education has moved from experimental "chatbots" to deeply integrated institutional systems. While students have achieved near-universal adoption, the focus this month has shifted toward safety standards, regional research hubs, and the "transferability" of AI-assisted skills.

🏛️ Policy & Safety: New UK Standards

The Department for Education (DfE) and the Department for Science, Innovation and Technology (DSIT) have introduced rigorous new frameworks this month to ensure AI safety in classrooms:

• Product Safety Standards: New UK government guidelines now mandate that generative AI tools used in schools must have "age-appropriate" privacy notices, undergo mental health risk assessments, and include a "crisis protocol" to direct students to human help if needed.
• The "Online World" Consultation: Launched in March and continuing through May 2026, this national conversation is exploring age-based restrictions for high-risk AI features. The government is also signaling new powers to bring AI chatbots under stricter illegal-content duties.

🎓 Higher Education: Institutional Shifts

Universities are beginning to overhaul their "legacy" systems in favour of AI-native platforms:

• LMS Modernisation: Rasmussen University recently announced a full transition from Blackboard to D2L Brightspace to deploy D2L Lumi, an AI-native tool providing personalised study recommendations and automated feedback.
• Regional Consortia: Four Mid-South universities (Memphis, Arkansas, Mississippi, and Tennessee) have formed a regional AI research consortium. This "living laboratory" aims to pool high-performance computing resources to address workforce development and regional challenges like rural health and agriculture.
• The "End of Pretend": Higher education critics are increasingly calling for "universities of formation," arguing that AI has broken traditional "proxy" assessments (like take-home essays), forcing a return to in-person dialogue and oral examinations.


AI Resilient Assessment

Designing assessments in the age of AI requires more than just tougher questions; it demands smarter ones.

Here’s how I approach AI-resistant assessment design using a topic like photosynthesis. Instead of asking students to recall definitions, we:

🌱 Place them in real-world scenarios (e.g., diagnosing plant growth issues in a greenhouse)
🧠 Require explanation, justification, and decision-making, not just answers
📊 Use novel data sets for interpretation and experimental design
🔄 Build in reflection and error analysis to uncover misconceptions
🗣️ Incorporate live or in-class components to capture authentic thinking

The goal isn’t to “outsmart AI”; it’s to elevate what we value in student learning:

✔️ Critical thinking
✔️ Application of knowledge
✔️ Clear communication
✔️ Original reasoning

When assessments prioritize these skills, academic integrity becomes a byproduct, not a battle.

Curious how this could look in your classroom? Let’s connect.

#Education #AssessmentDesign #AIinEducation #TeachingStrategies #STEMeducation #AcademicIntegrity

https://chatgpt.com/g/g-NfaHq7dcT-ai-resilient-assessment


Skills Gap Analysis Assistant

https://chatgpt.com/g/g-6981d4f996488191b08dd73125696c19-skill-gap-analysis-assistant

Skill Gap Analysis Assistant
By M Rollins

A GPT that analyses workforce skills against role requirements, identifies skill and evidence gaps, flags risks for critical skills, and produces clear training plans from a single spreadsheet.

I’m your Skill Gap Analysis Assistant, built specifically to help with structured workforce analysis for administrative roles. Here’s exactly what I can do for you:

🔍 1. Analyse Skill Data

- Compare individual skill levels vs role requirements
- Use a consistent 0–4 skill scale
- Calculate:
  - Skill gaps
  - Weighted gaps (based on importance)

📊 2. Produce Structured Reports

For each person (using Person ID by default), I generate an Individual Skill Gap Report:

- Skill-by-skill breakdown including:
  - Target vs current level
  - Gap and weighted gap
  - Evidence status
  - Risk flags (e.g. critical gaps, outdated evidence)

👥 3. Create Team-Level Insights

- Identify:
  - Common skill gaps across the team
  - Critical risk areas
  - Patterns in missing or weak evidence

🧭 4. Build Training & Verification Plans

- 3-month structured plan including:
  - What to train vs what to verify
  - Priority levels (High / Medium / Low)
  - Suggested formats (e.g. practice, review, assessment)
- Focus:
  - Critical skills first
  - Evidence gaps vs capability gaps treated differently

⚠️ 5. Flag Risks Clearly (Without Judgement)

I highlight risks such as:

- Critical skill gaps (≥2 levels)
- Missing assessments
- Low-confidence evidence
- Outdated assessments (over 12 months)

Language is always:

- Neutral
- Factual
- Action-focused

📁 6. Work With Your Data

I can analyse:

- Uploaded spreadsheets
- Skill matrices
- Assessment records

I will:

- Use only skills defined in your Skill Library
- Ignore anything outside that list
- Treat missing data carefully (no assumptions)
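For readers who want to see what the gap and risk-flag rules above could look like in practice, here is a minimal Python sketch. It is not the GPT's actual implementation, which the post does not describe. The formulas are assumptions: the gap is taken as target minus current (floored at zero), the weighted gap as gap times an importance weight, and "over 12 months" is approximated as more than 365 days. The `SkillRecord` fields, the `analyse` function, and the example skill name are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class SkillRecord:
    skill: str
    target: int                           # required level on the 0-4 scale
    current: int                          # assessed level on the 0-4 scale
    weight: float = 1.0                   # importance weight (assumed to be a multiplier)
    critical: bool = False                # whether the role treats this skill as critical
    last_assessed: Optional[date] = None  # None means no assessment on record


def analyse(record: SkillRecord, today: date) -> dict:
    """Return gap, weighted gap, and risk flags for one skill."""
    for level in (record.target, record.current):
        if not 0 <= level <= 4:
            raise ValueError(f"level {level} is outside the 0-4 scale")

    # Assumption: exceeding the target counts as no gap rather than a negative gap.
    gap = max(record.target - record.current, 0)

    flags = []
    if record.critical and gap >= 2:
        flags.append("critical gap")
    if record.last_assessed is None:
        flags.append("missing assessment")
    elif (today - record.last_assessed).days > 365:  # assumption: 12 months ~ 365 days
        flags.append("outdated assessment")

    return {
        "skill": record.skill,
        "gap": gap,
        "weighted_gap": gap * record.weight,
        "flags": flags,
    }


# Hypothetical example: a critical skill two levels below target, last assessed over a year ago.
example = SkillRecord("Minute taking", target=3, current=1, weight=2.0,
                      critical=True, last_assessed=date(2025, 1, 15))
print(analyse(example, today=date(2026, 4, 1)))
```

Keeping "missing assessment" separate from "outdated assessment" mirrors the post's point that evidence gaps and capability gaps are treated differently: a person can meet the target level and still be flagged because the evidence is absent or stale.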


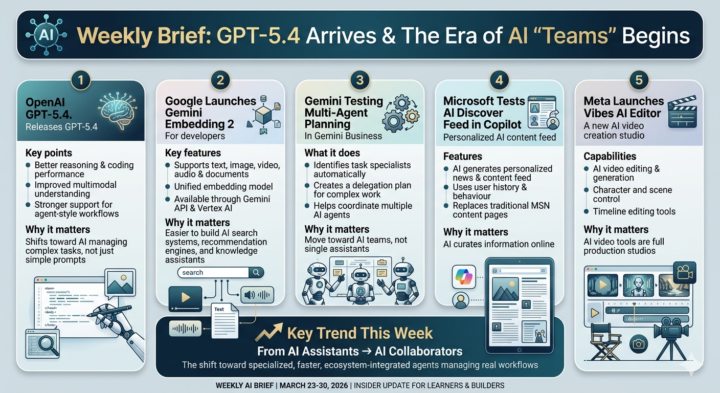

AI Weekly: GPT-5.4 is Here & The Rise of AI Agents

1. OpenAI GPT-5.4 (Flagship & Mini)
   - Status: The flagship GPT-5.4 launched on March 5, 2026.
   - Latest: Just last week (March 24), OpenAI rolled out GPT-5.4 mini and nano to the API. These are specialized for the "agent-style workflows" mentioned in your brief, specifically for developers who need high-speed coding assistants.

2. Google Gemini Embedding 2
   - Status: Officially released in Public Preview on March 10, 2026.
   - Current Catch: Developers are currently using this to build "unified" search. Before this, you had to use different models for text and video; now, one model (Embedding 2) handles both, which is a massive win for speed.

3. Meta "Vibes" & "Avocado"
   - Status: Vibes was quietly pushed to mobile app stores on March 19, 2026.
   - Insight: It’s being positioned to compete directly with TikTok’s CapCut. Also, as of yesterday (March 29), leaks confirmed Meta is testing the Avocado (text) and Mango (video/image) models internally to power the next version of Vibes.

4. Microsoft Copilot "Cowork"
   - Status: This is the most recent "big" update, with major rollout details appearing as recently as March 24–30.
   - Key Detail: It’s moving Copilot from a "sidebar" to a "background agent." It can now resolve calendar conflicts and draft project plans while you aren't even looking at the screen.

What to watch in the next 7 days:

- Google I/O 2026 Previews: Watch for more "Multi-agent planning" features moving from "Testing" to "Beta" for Gemini Business users.
- Sora's Pivot: There are reports today that OpenAI may be winding down standalone video products (like the Sora app) to focus entirely on "World Simulation" for robotics.
