Memberships

BuilderzGym

53 members • Free

AI SEO | Rank & Rent Lead Gen

1.5k members • Free

Zero to AI

1.6k members • $9/month

Organic Maps Domination

19 members • Free

Future Proof

354 members • Free

TheJourneyClub

350 members • $210/y

AI Skill Growth Lab

222 members • Free

Vibe Coder

421 members • Free

Webido CTR

47 members • Free

5 contributions to Future Proof

How often do LLMs re-check citations?

Most people working on LLM visibility right now are making one big assumption: that fixing a citation works the same way as fixing something on Google. It doesn't. Not even close. Let me break down what's actually happening with citation accuracy in LLMs and why you need to rethink your timelines.

THE CITATION ACCURACY PROBLEM

Here's the reality: LLM citations are not reliable to begin with. Research published in Nature Communications in 2025 and papers on arXiv that analyzed over 366,000 citations across ChatGPT, Claude, and Perplexity found that 50 to 90% of LLM citations fail to fully support the claims being made. We're talking fabricated references, outdated sources, and heavy bias toward older papers that were overrepresented in training data.

So the first thing you need to understand is this: the citations you're seeing in LLM outputs right now? A huge chunk of them are already inaccurate.

WHY FIXING A CITATION ISN'T INSTANT

Now let's say you spot a wrong citation about your client or your brand. You reach out to the source and get it corrected. On Google, you can request a recrawl, check the status, and within a reasonable time the updated version is live in search results. It's not instant, but it's relatively fast and trackable.

With LLMs, that's not how it works. Think about how ChatGPT can read 50+ landing pages, process them in under 10 seconds, and give you a summary. That leaves a lot of questions on the table. What if the website is slow? How is consensus verified in such a short amount of time? Without a cached version, this isn't possible for an LLM. To compare, give ChatGPT links to 10 newly published articles, ask it to summarize them, and time it yourself.

LLMs run on what's basically a cached version of the web. Crawlers like GPTBot and ClaudeBot are scraping the web, but they're not doing it in real time. GPTBot recrawls roughly every 30 to 60 days.

And here's a wild stat: ClaudeBot has a crawl-to-refer ratio of about 38,000 to 1. That means it's scraping constantly but rarely sending traffic back or updating its understanding of those sources.
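To make those two numbers concrete, here is a minimal Python sketch. It assumes the 30 to 60 day recrawl figure quoted above holds for your site, which is not guaranteed, and the function names `recrawl_window` and `crawl_to_refer_ratio` are hypothetical helpers made up for illustration. The counts you feed into the ratio would come from your own server logs and analytics.

```python
from datetime import date, timedelta


def recrawl_window(fixed_on: date, min_days: int = 30, max_days: int = 60) -> tuple[date, date]:
    """Return the (earliest, latest) dates a crawler might next fetch a corrected page.

    The 30/60 day defaults are the rough GPTBot interval quoted in the post,
    treated here as an assumption, not a guarantee.
    """
    return fixed_on + timedelta(days=min_days), fixed_on + timedelta(days=max_days)


def crawl_to_refer_ratio(crawl_hits: int, referral_visits: int) -> float:
    """Crawler requests per referred visit; infinite if no visits were referred."""
    if referral_visits == 0:
        return float("inf")
    return crawl_hits / referral_visits


if __name__ == "__main__":
    earliest, latest = recrawl_window(date(2025, 1, 1))
    print(f"Correction may be recrawled between {earliest} and {latest}")
    print(f"Crawl-to-refer ratio: {crawl_to_refer_ratio(38_000, 1):,.0f} to 1")
```

The recrawl window only tells you when the cached copy might refresh. It says nothing about when a model's answers will actually change, so treat it as a lower bound on your timeline.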

Bing indexing issue!

Why do the same posts that are doing great on Google struggle to index on Bing? Is there anything that I'm missing? BTW, robots.txt and schema are good. Any tips on this one?

The Unfiltered Guide to LLM Tracking

Today I want to give you the unfiltered reality of what's going on in the market: why there are so many LLM rank trackers, why some are cheap, some are expensive, and some are now going to extremes. Let me break it down.

THE TWO TYPES OF LLM TRACKING

Whenever someone says they're going to "track LLMs," there are really only two ways anyone in the world can do it:

1. API calls: with web search enabled, or without web search
2. UI-based (frontend): which has two versions, logged-in session and non-logged-in session

That's it. Whatever tools you hear about on the internet, they're just picking one of these and building their infrastructure on top of it. And the cheaper tools? They're almost always making API calls. So you might ask: why are API calls different from the UI-based versions?

HOW LLMs ACTUALLY WORK (CORE PRINCIPLES)

To understand the difference, we need to understand how LLMs work at the core. Let's say you're building an LLM from scratch. You'd find a massive amount of data, push it into your model, and process it.

The simplest way I can explain it: imagine you turned off your internet and hit the search button in the Windows file finder. You're just trying to find a file on your own machine. That's essentially what an LLM does with its training data.

Offline data: whenever a non-web API call is made, this offline training data is what gets used.

Web-enabled data: whenever a web-enabled API call is made, the LLM can go search the live internet.

"SEARCH THE WEB" BUT WHERE ARE THEY SEARCHING?

You and I have Google or Bing to search. But where are LLMs actually looking? Think of it like this: imagine the entire internet is your PC, and all that data has been converted into an index, like the index pages of a book. You look at the index and it says "if you want to learn about X, go to page 247." That's how the LLM extends its knowledge.

The LLM starts with its initial training data. Let's say it has 10 documents. Those 10 documents contain links, external references, and bits of information. From those, it pulls more. Now think about the compounding effect: 10 documents with 10 links each becomes 100, then 1,000, then millions, then billions of connections. An entire network gets created.
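The compounding effect can be worked through in a few lines of Python. This is a rough sketch under simplifying assumptions that are mine, not the post's: every document links to exactly the same number of new documents and no link ever points to a page already seen, so the result is an upper bound. The name `reachable_documents` is a hypothetical helper for illustration.

```python
def reachable_documents(seed_docs: int, links_per_doc: int, hops: int) -> int:
    """Upper bound on documents discovered after following links `hops` times.

    Assumes every document links to `links_per_doc` previously unseen
    documents, which real link graphs never do; duplicates shrink the total.
    """
    if hops < 0:
        raise ValueError("hops must be non-negative")
    return seed_docs * links_per_doc ** hops


if __name__ == "__main__":
    # 10 seed documents, 10 links each, matching the numbers used in the post.
    for hops in range(9):
        print(f"after {hops} hops: {reachable_documents(10, 10, hops):,} documents")
```

With 10 seed documents and 10 links each, one hop gives 100 documents, two hops give 1,000, and eight hops already reach a billion, which is why a small starting set can fan out into a web-scale index.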

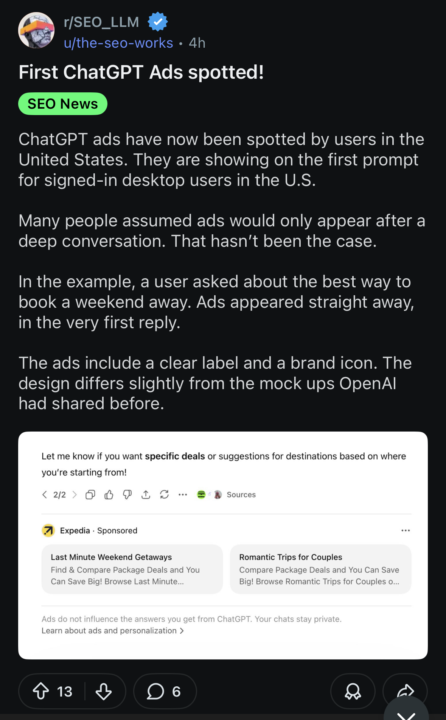

Ads started showing up

Looks like they are implementing the Google sitelinks type of ads
