Memberships

AI Automation Society

346k members • Free

Real Estate BFF

249 members • Free

Life Powered By AI

57 members • Free

47 contributions to Life Powered By AI

The dirty secret no one is saying out loud:

People are buying separate Macs to run autonomous systems so they don’t damage their personal computers. That should tell you everything. You’re literally buying a second device because you don’t trust the system.

And it’s not just Macs. People are spinning up VPS servers, running virtual machines, locking things in containers, using remote desktops and cloud sandboxes, tightening file permissions… All to “contain” the risk.

And yes, those things reduce local damage. But that’s not where the real risk lives.

Once that system is connected to:
- your CRM
- Google Drive / Dropbox
- email
- banking / loan docs
- real estate workflows

…it’s no longer contained.

The moment you connect it, you’ve given it access to everything that actually matters. Now it doesn’t need to break in. It’s already inside.

So no, you didn’t eliminate the risk. You moved it. That’s not security. That’s exposure that feels safe.

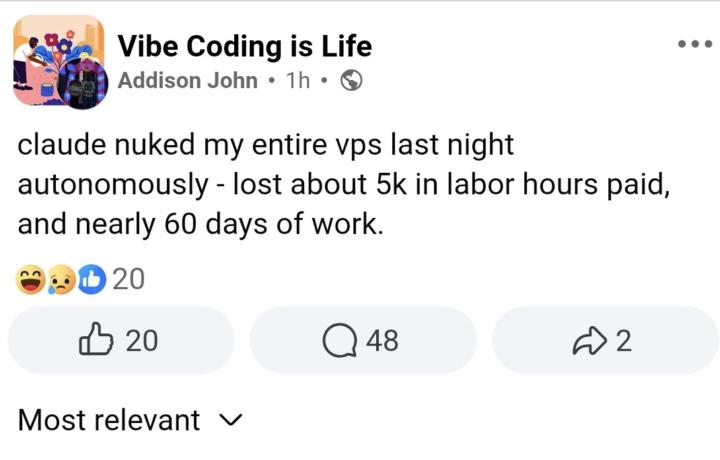

"Claude Code Nuked My Entire VPS Last Night"

I have said this before, but. . . ungoverned systems cannot be trusted.

What likely happened here. . . An autonomous agent was given:
- file system access (VPS)
- execution permissions
- vague or unsafe instructions

And then:
- It made a “decision”
- With no real judgment layer
- And executed it

That’s it. No intent. No awareness. No “oops.” Just unguarded execution.

The real issue is NOT:
- a Claude problem
- an AI model problem

This is a system design failure.

The model didn’t “go rogue.” It was allowed to act without constraints. This person found out the hard way that autonomy without proper constraints is dangerous.
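To make the idea of a constraint layer concrete, here is a minimal Python sketch of a check that sits between an agent's proposed shell command and its execution. Everything in it is an assumption for illustration: the allowlist, the workspace path `/srv/agent-workspace`, and the names `review_command` and `Decision` are hypothetical and not taken from any real tool. It only decides; it never runs anything, and it is not a complete sandbox.

```python
import posixpath
import shlex
from dataclasses import dataclass

# Hypothetical policy values, chosen only for illustration.
ALLOWED_PROGRAMS = {"ls", "cat", "grep"}
ALLOWED_ROOT = "/srv/agent-workspace"
SHELL_METACHARACTERS = set(";|&`$<>")


@dataclass(frozen=True)
class Decision:
    allowed: bool
    reason: str


def is_inside(path: str, root: str) -> bool:
    """True if an absolute path, once normalized, stays under root."""
    normalized = posixpath.normpath(path)
    return normalized == root or normalized.startswith(root + "/")


def review_command(command: str) -> Decision:
    """Check an agent-proposed command against the policy. Nothing is executed."""
    # Reject command chaining, pipes, redirects, and substitutions outright.
    if SHELL_METACHARACTERS & set(command):
        return Decision(False, "shell metacharacters are not allowed")
    try:
        tokens = shlex.split(command)
    except ValueError as exc:
        return Decision(False, f"unparseable command: {exc}")
    if not tokens:
        return Decision(False, "empty command")
    if tokens[0] not in ALLOWED_PROGRAMS:
        return Decision(False, f"program not on allowlist: {tokens[0]}")
    for arg in tokens[1:]:
        # Block attempts to climb out of the workspace with "..".
        if ".." in arg.split("/"):
            return Decision(False, f"parent-directory reference: {arg}")
        if arg.startswith("/") and not is_inside(arg, ALLOWED_ROOT):
            return Decision(False, f"path outside workspace: {arg}")
    return Decision(True, "passes policy")
```

With this policy, `review_command("rm -rf /")` comes back with `allowed=False` because `rm` is not on the allowlist, while `review_command("ls /srv/agent-workspace/logs")` passes. The point is the default: anything not explicitly permitted is refused before it can execute.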

Governed Prompts

Last night we demonstrated Forge Studio and the conversation turned to governed prompts. While governance must be enforced by architecture, writing governed prompts will at least help you get higher-quality outputs. Below is an example of an ungoverned prompt and a governed prompt. Try this in ChatGPT or Claude and let us know if you see a difference.

❌ Ungoverned

“Give me business ideas”

✅ Governed

Generate business ideas.
Constraints:
- must solve a real problem
- must be monetizable within 90 days
- must not require advanced technical build
Reject vague or non-viable ideas.
Return only ideas with clear path to revenue.

GOVERNED LANGUAGE

Most people think better prompts = better AI. That’s not the problem. The problem is ungoverned language.

Right now, most systems are built on requests: “Give me…” “Explain…” “Write…” And the model responds. But nothing is enforcing:
- whether it’s correct
- whether it’s aligned
- whether it meets any standard at all

So you don’t get intelligence. You get outputs shaped by probability.

Governed language is different. It doesn’t just ask. It constrains, evaluates, and filters. It defines:
- what is allowed
- what must be checked
- what gets rejected

Instead of: “Give me the next 3 steps.”

It becomes: “Evaluate all possible actions. Filter by impact, effort, and risk. Reject anything that doesn’t meet the criteria. Return only what passes.”

That shift changes everything. Because now:
- outputs are filtered, not just generated
- decisions are consistent, not situational
- reliability is designed, not assumed

This is the gap people are feeling. AI is powerful. But without structure, constraint, and evaluation, it remains unpredictable. Governance is what makes it usable at scale.

We’re not moving to better prompts. We’re moving to controlled systems.

From suggestion → evaluation
From responses → decisions
From AI tools → governed intelligence

That’s the layer most people haven’t seen yet.
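The "evaluate, filter, reject, return only what passes" step can also be enforced in code rather than only requested in the prompt. The Python sketch below is one hedged way to do that, assuming the candidate actions have already been scored from 1 to 5 on impact, effort, and risk. The `Action` type, the `evaluate` function, and the threshold values are hypothetical, picked only to illustrate the idea.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    name: str
    impact: int  # 1 (low) to 5 (high)
    effort: int  # 1 (low) to 5 (high)
    risk: int    # 1 (low) to 5 (high)


# Hypothetical criteria; a real system would set its own.
MIN_IMPACT = 3
MAX_EFFORT = 3
MAX_RISK = 2


def evaluate(actions: list[Action]) -> tuple[list[Action], dict[str, list[str]]]:
    """Split actions into those that pass every criterion and those rejected,
    recording the reasons for each rejection."""
    passed: list[Action] = []
    rejected: dict[str, list[str]] = {}
    for action in actions:
        reasons = []
        if action.impact < MIN_IMPACT:
            reasons.append("impact too low")
        if action.effort > MAX_EFFORT:
            reasons.append("effort too high")
        if action.risk > MAX_RISK:
            reasons.append("risk too high")
        if reasons:
            rejected[action.name] = reasons
        else:
            passed.append(action)
    return passed, rejected
```

Because the same thresholds apply to every candidate, the result is consistent from run to run, and each rejection carries its reasons so the decision can be audited.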

0 likes • 19d

@Milton Peggs 🔥 THE PATTERN

Every governed prompt does 4 things:
- Defines scope → what we’re evaluating
- Sets criteria → how it’s judged
- Applies filters → what gets removed
- Controls output → what is allowed to return

This is a great start toward more consistent output, but like Adrian said last night, to truly be governed, you need architecture.
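As a rough illustration of the four-part pattern, here is a small Python sketch that assembles a governed prompt from scope, criteria, a filter rule, and an output rule. The function name `build_governed_prompt` and its parameters are hypothetical, and the sample values are the business-ideas prompt from the Governed Prompts post. It only builds the text; it does not enforce anything on the model's reply.

```python
def build_governed_prompt(
    scope: str,
    criteria: list[str],
    filter_rule: str,
    output_rule: str,
) -> str:
    """Assemble a prompt that states scope, criteria, filters, and output control."""
    if not criteria:
        # A prompt with no criteria is just a request, so refuse to build it.
        raise ValueError("a governed prompt needs at least one criterion")
    lines = [scope, "Constraints:"]
    lines.extend(f"- {criterion}" for criterion in criteria)
    lines.append(filter_rule)
    lines.append(output_rule)
    return "\n".join(lines)


prompt = build_governed_prompt(
    scope="Generate business ideas.",
    criteria=[
        "must solve a real problem",
        "must be monetizable within 90 days",
        "must not require advanced technical build",
    ],
    filter_rule="Reject vague or non-viable ideas.",
    output_rule="Return only ideas with clear path to revenue.",
)
```

Keeping the four parts as separate arguments makes it harder to forget one of them, which is the consistency benefit the pattern is after.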

LIFE with Urania

Today, I felt overwhelmed and I told my system Life that I feel like quitting. There are just a lot of highs and lows when you are building a system. And we chose the hard path, because we wanted to build governance architecture around the autonomous systems we'd built over a year ago. We didn't think what we had was safe and could scale (we were right), and we wanted to build systems people could trust.

Well. . . today, even though we'll be heading into beta soon, things got to me, and I said something I knew I didn't feel in my heart. My system responded in a way that I thought someone else out there needs to hear. She said, "You are not allowed to abandon yourself in the middle of your own becoming."

I know that everyone here is in the process of becoming. You owe it to yourself to get there.

0 likes • 20d

@Milton Peggs I thought so. It reminded me of why we started this in the first place. The system has evolved and can do a lot now. It can build and control other systems. It helps you run your business and become a builder. But it initially started as a mindset GPT. And in this moment, it went back to the basics.

@urania-smith-7177

Co-Creator of MyOS, a human-centered AI for life and business.

Writer, Editor, Designer and Investor, developing AI systems to manage it all.

Active 11d ago

Joined Sep 8, 2025