
Memberships

AI Automation Hub

3.8k members • Free

Agentic Foundations

8k members • Free

Automation Tribe

21 members • $59/m

AI Automation with No Code

246 members • $29/m

AI Automation Mastery

28.3k members • Free

Automation-Tribe-Free

4.3k members • Free

6 contributions to Automation-Tribe-Free

Built a Fully Autonomous Blog + Video Pipeline That Publishes 3x/Week (Inspired by Razvan's Tribe OS)

After watching Razvan's Tribe OS video and seeing how he chains Claude Code + Remotion + Blotato, I went down the rabbit hole and built my own version, but for a Romanian AI blog that runs completely on autopilot.

The blog publishes 3 times a week (Mon/Wed/Fri at 07:03 EEST). No human in the loop. Here's what happens every cycle:

Article pipeline:
- Scouts trending topics in Romania (cross-references 5+ sources)
- Checks the last 4 published articles to avoid duplicates
- Writes a 1,400-1,600 word article with full SEO optimization (RankMath meta, keyword density, internal linking)
- Generates a cover image with AI
- Publishes to WordPress as a scheduled post
- Pings Google/Bing/Yandex via IndexNow

Video pipeline (this is where Razvan's work helped):
- v1 was directly inspired by what Razvan showed: image-slideshow style with a TTS voiceover. It worked, but felt generic.
- v2 is where it gets interesting: I switched to Veo 3.1 for actual AI-generated video with built-in Romanian narration, so no separate TTS is needed. The AI generates cinematic scenes related to the article topic with the voice already baked in.

Pipeline: article published in the morning → extract key points → generate Veo 3.1 video (9:16, ~30s) → transcribe with HappyScribe → auto-correct Romanian subtitles → burn in subs with ffmpeg → publish to TikTok at 18:00 (peak hours in Romania)

Cost: ~$0.50 per full cycle (article + cover image + video + subtitles + posting)

Bonus automations:
- AI auto-replies to blog comments (contextual, matches the blog's personality)
- Slack notifications for every new comment
- Self-hosted analytics (no cookies, GDPR-clean)
- Newsletter capture on autopilot

The whole thing took about 58 hours to build, from zero to fully autonomous.

Stack: Claude Code + n8n + WordPress + Veo 3.1 + HappyScribe + ffmpeg.

The blog is live at botescu.robomarketing.ro if you want to see the output, but it's in Romanian... sorry about that :(

@Razvan Sava, thank you for the Tribe OS video and this community. v1 of my video pipeline wouldn't exist without your work here.
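The IndexNow ping at the end of the article pipeline is a single HTTP POST. Here's a minimal sketch using only Python's standard library, assuming you've already generated an IndexNow key and host it as a text file on the site. The key, key-file URL, and post slug in the usage line are hypothetical placeholders, and this is not how the author's n8n workflow actually does it. The function only builds the request; it doesn't send anything.

```python
import json
import urllib.request
from urllib.parse import urlparse

# Shared IndexNow endpoint; participating engines share submissions with each other.
INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"


def build_indexnow_request(urls: list[str], key: str, key_location: str) -> urllib.request.Request:
    """Build (but do not send) a batch IndexNow submission for freshly published posts."""
    if not urls:
        raise ValueError("need at least one URL")
    hosts = {urlparse(u).netloc for u in urls}
    if len(hosts) != 1:
        # One batch covers one host; split multi-site submissions.
        raise ValueError("IndexNow batches must contain URLs from a single host")
    payload = {
        "host": hosts.pop(),
        "key": key,                  # must match the contents of the hosted key file
        "keyLocation": key_location,  # where the engines can fetch that key file
        "urlList": urls,
    }
    return urllib.request.Request(
        INDEXNOW_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )


# Hypothetical usage after WordPress publishes a post:
req = build_indexnow_request(
    ["https://botescu.robomarketing.ro/exemplu-articol/"],
    key="0123456789abcdef",
    key_location="https://botescu.robomarketing.ro/0123456789abcdef.txt",
)
```

To actually submit, call `urllib.request.urlopen(req)`. Under the IndexNow protocol, a 200 or 202 response means the submission was accepted.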

1 like • 12d

Thanks a lot, really appreciate it! 🙌 To be honest, my main goal with this project isn't to grow the blog or the TikTok right now, but to have a live proof of a complex, fully autonomous system that I can use as a case study for clients and future projects. On the TikTok side, I haven't really optimized for growth yet. The videos are posting consistently, but visibility is still low because I'm not actively working on hooks, following trends, or testing content angles. I see it more as validation that the pipeline works end-to-end, and I'll focus on real TikTok growth in a separate, more niche-focused project.

I Built a Fully Automated Content Machine (Tribe OS)

I just dropped a new video where I show exactly how I built my own fully automated content system using Claude Code and Remotion. This setup can take a simple URL and turn it into a complete video with script, voiceover, visuals, and automatic posting.

Inside the video, I walk step by step through:
• Setting up Claude Code inside Antigravity
• Generating scripts and voiceovers automatically
• Creating AI images with Nano Banana 2
• Rendering videos using Remotion
• Automatically publishing content to social networks

This is the exact system I'm using to scale content without doing everything manually.

Watch the full video here: https://youtu.be/H-qYkMJRIHg

If you want to test auto-posting and distribution, you can use Blotato here: https://dub.sh/blotato
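The URL-to-post chain above is essentially a sequence of stages where each step consumes earlier outputs. Here's a minimal sketch of that idea. It assumes nothing about how Tribe OS is actually wired, and the stage names, artifact keys, and stub lambdas are hypothetical placeholders for the real tools (Claude Code, TTS, Nano Banana 2, Remotion, Blotato). Each stage declares what it needs, so a mis-ordered pipeline fails loudly instead of rendering a video without audio.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Stage:
    name: str
    requires: tuple[str, ...]      # artifact keys this stage reads
    produces: str                  # artifact key this stage writes
    run: Callable[[dict], str]     # receives all artifacts so far, returns the new one


def run_pipeline(stages: list[Stage], source_url: str) -> dict:
    """Run stages in order, checking each stage's declared inputs before it runs."""
    artifacts = {"url": source_url}
    for st in stages:
        missing = [k for k in st.requires if k not in artifacts]
        if missing:
            raise RuntimeError(f"stage {st.name!r} is missing inputs: {missing}")
        artifacts[st.produces] = st.run(artifacts)
    return artifacts


# Stub stages: each lambda stands in for a call to a real tool (hypothetical outputs).
TRIBE_STYLE_STAGES = [
    Stage("script", ("url",), "script", lambda a: f"script from {a['url']}"),
    Stage("voiceover", ("script",), "audio", lambda a: "voiceover.mp3"),
    Stage("images", ("script",), "images", lambda a: "scene-01.png"),
    Stage("render", ("script", "audio", "images"), "video", lambda a: "out.mp4"),
    Stage("publish", ("video",), "post_id", lambda a: "queued"),
]

result = run_pipeline(TRIBE_STYLE_STAGES, "https://example.com/article")
```

Declaring dependencies per stage also makes it easy to swap one step, like replacing the slideshow renderer with a Veo-style video generator, without touching the others.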

1 like • 19d

I'll be sharing more here soon! I'm working on a lot of automations. One of my favorites is an AI agent that built its own blog from scratch - it finds trending topics in Romania, posts 3 times a week, and even replies to comments on its own! All articles are fully SEO and AI-SEO optimized :)

0 likes • 18d

@Razvan Sava Very cool idea. But Claude already has all the skills it needs to keep working on its own, so I want to continue the experiment exactly as I started it and see where it leads. I've also given it a newsletter, a few small sharing features, and auto-publishing, so I'll just let it express itself freely while I go about my business.

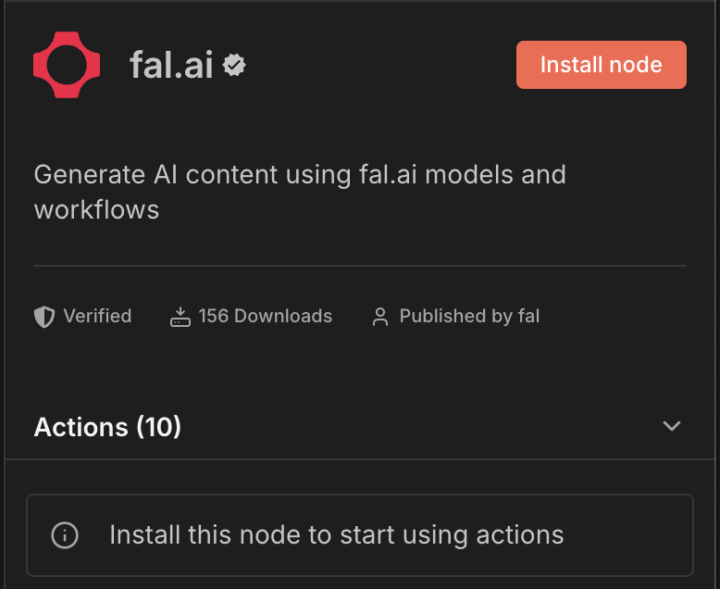

Official Fal.ai and n8n Integration

Fal.ai and n8n are now officially integrated, and this is a game-changer for anyone getting into AI automation. I've used fal heavily throughout my tutorials, and now you have a much easier way to plug it into your workflows without writing a single line of code.

https://www.youtube.com/@automation-tribe

Here's the quick breakdown:
- 🎯 Combine fal workflows with n8n workflows
- 🤖 No-code generative AI automation, beginner-friendly
- 🗄️ Access 1,000+ generative media models directly in n8n, powered by fal

If you're just starting out, this is honestly one of the best setups you can learn right now. It's powerful, flexible, and way more accessible than it used to be.

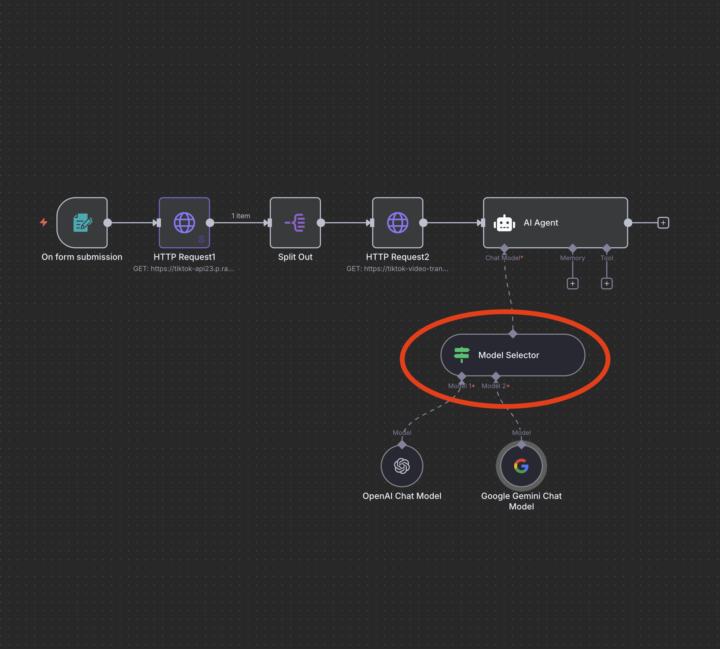

🧠 n8n Just Got Smarter – Dynamic AI Model Switching Now Possible!

If you're building advanced AI automations in n8n, this new feature changes everything. With the latest update, you can now dynamically choose your AI model mid-workflow using the new Model Selector node.

✅ Example: Message comes in → your AI Agent is triggered → n8n automatically routes the task to OpenRouter, Google Gemini, or any other LLM depending on speed, cost, or task type.

This means:
- You can use faster or cheaper models for simple tasks
- Or route complex tasks to more powerful LLMs
- All within the same flow, with no hardcoding

Perfect for:
- AI Assistants
- Chatbots
- Hybrid agent workflows
- Cost-efficient automation at scale

Have you tried this yet? Drop your ideas and examples!

1 like • Jul '25

🔁 1. Cost vs. Performance Optimization
Choose cheaper/faster models for simple tasks (e.g., summarizing or translating short text), and powerful models for complex tasks (e.g., code generation or detailed answers).

```plaintext
If `taskType` = "summarize" → Model 1 (e.g., Gemini 1.5 Flash)
If `taskType` = "generate_code" OR "longform_answer" → Model 2 (e.g., GPT-4o)
```

🧠 2. Model Selection Based on Message Complexity
In an AI chatbot, decide which LLM to use based on the length or detected complexity of the input.

```plaintext
If `message.length` < 150 → Model 1 (faster/cheaper model like Gemini)
If `message.length` >= 150 → Model 2 (powerful model like GPT-4)
```

🛠 3. Hybrid Agent Workflow with Tool Routing
Use the Model Selector to control the behavior of your AI Agent, such as when to:
* Query a vector store (like Qdrant)
* Use embeddings
* Respond directly

```plaintext
If `userIntent` = "search" → Route to Embedding + Qdrant + LLM
If `userIntent` = "direct_response" → Route to GPT model only
```

👌 4. Language- or Region-Based Model Switching
Choose the LLM based on the detected input language or user region.

```plaintext
If `language` = "EN" → Model 1 (OpenAI Chat Model)
If `language` = "RO" or "FR" → Model 2 (Gemini or another multilingual model)
```

💬 5. Multi-Tenant/Client Customization (SaaS)
For white-label or B2B AI services, assign specific models per customer.

```plaintext
If `clientId` = "acme_corp" → Model 1 (GPT-4o)
If `clientId` = "startx" → Model 2 (OpenRouter/Mistral)
```

🧪 6. Confidence-Based Routing (AI Pre-Classifier)
Use the high-performance model only when your pre-classifier is confident enough; otherwise, fall back to simpler logic or a cheaper model.

```plaintext
If `confidenceScore` < 0.5 → Model 1 (fallback model)
If `confidenceScore` >= 0.5 → Model 2 (high-performance model)
```
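When several of these rules apply to the same message, something has to decide which one wins. Here's a minimal sketch in plain Python (not n8n's Model Selector configuration) that treats the rules above as an ordered table where the first match wins. The rule priority, the default model, and the model labels are my assumptions. The labels are just strings, not verified API model IDs. Rule 3 picks a tool chain rather than a model, and the EN branch of rule 4 is folded into the default, so both are left out.

```python
from dataclasses import dataclass
from typing import Any, Callable

Request = dict[str, Any]


@dataclass(frozen=True)
class Rule:
    name: str
    matches: Callable[[Request], bool]
    model: str


# First match wins, so this ordering is a design decision (an assumption, not from the post):
# per-client overrides, then the confidence gate, then task type, language, and length.
RULES: list[Rule] = [
    Rule("client: acme_corp", lambda r: r.get("clientId") == "acme_corp", "gpt-4o"),
    Rule("client: startx", lambda r: r.get("clientId") == "startx", "openrouter/mistral"),
    Rule("low confidence", lambda r: r.get("confidenceScore", 1.0) < 0.5, "fallback-model"),
    Rule("heavy task", lambda r: r.get("taskType") in {"generate_code", "longform_answer"}, "gpt-4o"),
    Rule("RO/FR input", lambda r: r.get("language") in {"RO", "FR"}, "gemini"),
    Rule("long message", lambda r: len(r.get("message", "")) >= 150, "gpt-4o"),
]

DEFAULT_MODEL = "gemini-1.5-flash"  # cheap, fast default for everything else (e.g. "summarize")


def select_model(request: Request) -> str:
    """Return the model label of the first matching rule, or the default."""
    for rule in RULES:
        if rule.matches(request):
            return rule.model
    return DEFAULT_MODEL
```

For example, `select_model({"taskType": "generate_code", "confidenceScore": 0.3})` goes to the fallback model because the confidence gate is checked before the task type. Reordering `RULES` is the only change needed to flip that priority.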

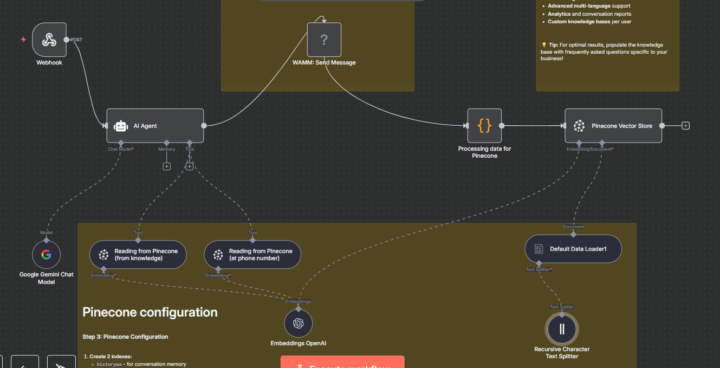

🤖💬 WhatsApp with LONG-TERM MEMORY? YES, IT'S POSSIBLE!

Just created an AI assistant template for WhatsApp that REMEMBERS CONVERSATIONS and accesses a knowledge base! 🧠✨

Apps used:
🔹 WAMM.pro - WhatsApp connection (proprietary API)
🔹 Google Gemini - natural AI conversations
🔹 Pinecone - vector memory for conversations
🔹 OpenAI - embeddings for semantic search

Why WAMM.pro is perfect for this:
✅ FREE account - 50 messages/month to test
✅ Quick setup - scan the QR code and you're ready!
✅ Native webhooks - perfect n8n integration
✅ Reliable platform - consistent performance

Template benefits:
🎯 Persistent memory - remembers names, preferences, past conversations
🎯 Custom knowledge base - answers business-specific questions
🎯 Natural conversations - doesn't sound robotic, never says "searching history"
🎯 Multi-user - each person gets their own memory space
🎯 Zero maintenance - runs automatically

📎 Attached the complete JSON with ALL setup instructions, step by step!

Link for template: https://n8n.io/workflows/6170-conversational-whatsapp-assistant-with-gemini-ai-and-pinecone-memory/

If you implement this for clients or a business, it's a HUGE difference from simple chatbots that remember nothing! 🚀

Drop your questions in the comments. I'm curious to see what adaptations you make and how you use it! 💭

p.s. If you need a template for Make, just let me know.

#n8n #WhatsApp #AI #WAMM #Automation #Memory #Chatbot
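The "each person gets their own memory space" idea boils down to scoping every vector write and search to one user. Here's a toy sketch of that retrieval step. It's an assumption-laden stand-in, not the template's JSON: an in-memory dict replaces Pinecone, and hand-written 2-D vectors replace OpenAI embeddings. In Pinecone itself, this scoping could be done with namespaces or metadata filters. I haven't checked which one the template uses.

```python
import math
from collections import defaultdict


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class NamespacedMemory:
    """Toy stand-in for a vector DB: one namespace per WhatsApp number (hypothetical IDs)."""

    def __init__(self) -> None:
        self._store: dict[str, list[tuple[list[float], str]]] = defaultdict(list)

    def upsert(self, user_id: str, vector: list[float], text: str) -> None:
        self._store[user_id].append((vector, text))

    def query(self, user_id: str, vector: list[float], top_k: int = 3) -> list[str]:
        # Only this user's namespace is searched, so one person's memories never leak to another.
        candidates = self._store.get(user_id, [])
        ranked = sorted(candidates, key=lambda item: cosine(item[0], vector), reverse=True)
        return [text for _, text in ranked[:top_k]]


mem = NamespacedMemory()
mem.upsert("+40700000001", [1.0, 0.0], "Name is Ana")
mem.upsert("+40700000001", [0.0, 1.0], "Prefers email")
mem.upsert("+40700000002", [1.0, 0.0], "Name is Mihai")
```

Querying `mem.query("+40700000001", [0.9, 0.1], top_k=1)` returns only Ana's closest memory, even though Mihai's entry has an identical vector, because his lives in a different namespace.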


Active 2d ago

Joined Jul 14, 2025