Memberships

Shipping Skool

201 members • $99/month

69 contributions to Shipping Skool

Local Hosting your LLMs

Hi everyone, we’ve just wrapped up our first session on self-hosting models. If you weren't able to join us live, the recording is now available and provides a comprehensive blueprint for local hosting. Please feel free to reach out if you have any questions.

0 likes • 17h

- If I have a DGX Spark with a solid Qwen model running, how many OpenClaw agents or sub-agents can use this device and model at the same time? Or does each wait in line for its turn to perform a task?
- If I ran Hermes and OpenClaw, would it be best to have two DGX Sparks so that each agent session has a dedicated local model for regular daily business operations and activity?
- Can I expect high-quality project research and app building from a good local Qwen model while other agents and sub-agents are getting their own tasks and operations done at the same time, without issues? (Example: I am on OpenAI Codex to orchestrate, and I may have Hermes doing 24/7 market monitoring and taking actions when certain triggers are hit. I may also have OpenClaw doing content marketing research and building and monitoring marketing campaigns as needed. Is all of this going to run OK on a single DGX Spark running a solid Qwen model?)
- From what I understood, I would need multiple devices and models to regularly run many agents with sub-agents for operations like the example, and each agent or sub-agent would still have to share time on the device and model whenever it needs to perform a task. Is that right?

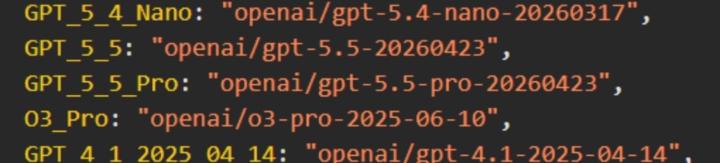

GPT 5.5 today

Just spotted on OpenRouter. Endpoints will go live today. Get ready for testing; this should be a massive upgrade over 5.4.

1 like • 1d

@Dan Carroll Glad you got your project knocked out. It really is a good model. I am running 5.5 medium in Codex, and that is the sweet spot so far. I have not even tried PRO. If I have a struggling project I might need to try it out. Yes, with the API that is an expensive idea to even try. The Codex plan does not offer PRO, I believe. The Codex desktop app and 5.5 seem to work amazingly well together, and I'm not sure I need PRO. I have some projects in the pipeline and can let you know whether I still consider it an improvement.

Kimi K2.6

I dumped Claude Sonnet this morning for Kimi K2.6, and it didn't break anything. It is surprisingly good. We'll see if it goes another 24 hours. If it does, I'll report back here, but it's really good.

1 like • 5d

Yes, I would love to hear more about your test results. I tested it recently too and can say I like it better than GLM 5.1. Overall it seems to have a flow and vibe of getting things done like Opus, so I am liking it so far. I will have one main agent test it further as I build more stuff and do some real things with it. It's definitely easier on the wallet and seems to be a good Opus fill-in or replacement. We'll see.

Anthropic wants to remove Claude Code from its $20 subscription??

It appears Anthropic is A/B testing Claude Code access by removing it from 2% of prosumer “Pro” plan signups to gauge reaction: https://www.wheresyoured.at/news-anthropic-removes-pro-cc/ In parallel news, open models are killing it: https://www.buildfastwithai.com/blogs/qwen-3-6-plus-preview-review Qwen 3.6 benchmarks are reported to be on par with frontier models such as Sonnet 4.5. Is privacy and independence important to you? Is this the time to start leveraging local models? Share your thoughts!

1 like • 5d

Yes, Anthropic is causing more issues again. I am excited about Qwen and other local models, but the models and equipment for local need to match what I need and be affordable. Privacy and independence are nice, but most of all I need what works and what I can afford. I do want to learn more and find good use cases so I can get some serious gear and tinker more.

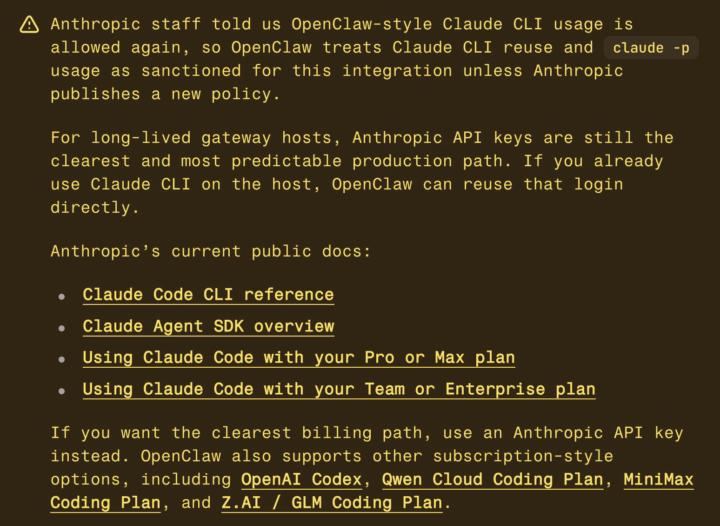

Opus 4.7 back on OpenClaw through Claude CLI

the openclaw api bill just went back to zero for me lol, was paying $1500 this month.

remember three weeks ago when anthropic said no more subscriptions for third party tools? everybody wrote their hot take. i moved my pipeline to the openai api to cut losses. turns out that story was half right.

what anthropic actually banned was openclaw harnesses pulling your oauth token out of claude code and calling the anthropic api directly while pretending to be claude code. fair. that was legitimately bad behavior.

but there is a second pattern they did not ban: shelling out to the claude cli itself with -p. claude code runs the request. claude code handles auth. claude code does the caching. openclaw is just calling the binary. anthropic staff confirmed to the openclaw team that this one is still sanctioned. openclaw treats it as an official green light unless they publish a new policy.

i flipped my main session to it this afternoon. one line in openclaw.json: set provider to claude-cli and mode to oauth. my opus 4.7 sessions are now running through my max subscription instead of the api. no per token bill on the main session.

catch: your subscription cap is still real. pro has one, max has one. i hit my 5 hour cap two days ago when the rza cron tried to write a script through it. blew right through my usage window in the middle of the day.

the fix was simple. only the main session touches claude oauth. every cron, every coding task, every background job goes to gpt 5.4 on the openai api. that keeps the claude cap for thinking and planning, not bulk content generation.

if you don't have claude max, the same pattern works elsewhere. glm coding plan starts at 10 a month, qwen around 28, minimax at 10 for the starter tier. run a local cli, subscription handles the billing, no per token bleed.

if you're using claude code in your .openclaw workspace folder, just tell claude to wire it up for you. full video on this drops in 1 hour on yt.
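A minimal Python sketch of the routing rule described in the post, under stated assumptions: only the main session goes through the subscription-backed Claude CLI, and every cron, coding task, and background job goes to a per-token API. The names `pick_provider`, `build_claude_command`, and `run_main_session`, and the provider labels, are hypothetical and do not reflect OpenClaw's actual configuration schema or internals. The only real interface assumed is the Claude CLI's `-p` flag, which runs a single prompt non-interactively and prints the response.

```python
import shutil
import subprocess

# Hypothetical labels for this sketch; not OpenClaw's actual config values.
SUBSCRIPTION_CLI = "claude-cli"  # main session: counts against the subscription cap
METERED_API = "openai-api"       # everything else: billed per token


def pick_provider(task_kind: str) -> str:
    """Route only the main session to the subscription-backed CLI.

    Crons, coding tasks, and background jobs go to the metered API so they
    cannot burn through the subscription's usage window.
    """
    return SUBSCRIPTION_CLI if task_kind == "main" else METERED_API


def build_claude_command(prompt: str) -> list[str]:
    """Build a non-interactive Claude CLI invocation: claude -p <prompt>."""
    return ["claude", "-p", prompt]


def run_main_session(prompt: str) -> str:
    """Shell out to the Claude CLI; auth and caching stay inside the binary."""
    if shutil.which("claude") is None:
        raise RuntimeError("claude CLI not found on PATH")
    result = subprocess.run(
        build_claude_command(prompt),
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout
```

Keeping the routing decision in one small function makes it easy to tighten later, for example by sending the main session to the metered API as well once the subscription cap is close.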

@stephen-king-8167

Some experience in tech and software; previously a web designer. Became ill and am now looking to learn a new skill to help with medical needs and life while disabled.

Joined Mar 28, 2026
