Memberships

AI Automation Society

355.2k members • Free

59 contributions to AI Automation Society

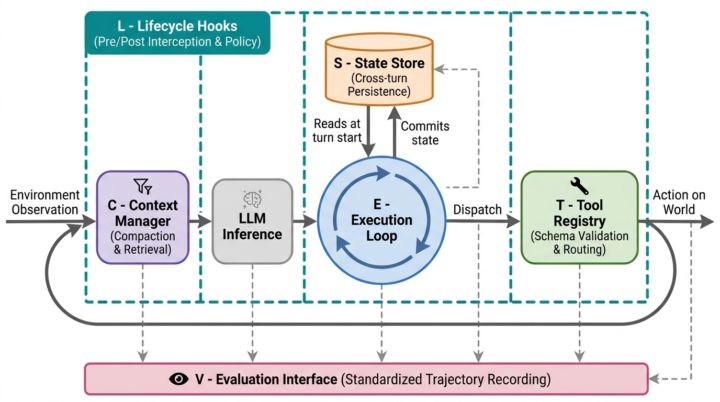

LLM Agents Don’t Need Better Prompts — They Need Orchestrators

Early AI agents felt simple: prompt + tools + loop. Great for demos. Fragile in production.

As soon as agents run longer, use memory, call real tools, or need safety and evaluation, prompts stop being enough. The problem isn’t the model; it’s the missing runtime.

👉 That’s where agent orchestration comes in.

🎉 Modern agent systems separate:

- Context (what the model sees)
- State (what persists across turns)
- Execution (what happens next)
- Tools (how actions are validated)
- Policies (what’s allowed)
- Evaluation (what actually happened)

This turns agents from clever scripts into reliable systems.

♉ The Open-Source Orchestrators Leading This Shift:

✅ LangChain – the most popular foundation for tools, memory, and chaining
✅ LangGraph – graph-based execution and stateful workflows
✅ AutoGen – strong multi-agent coordination
✅ LlamaIndex – memory- and retrieval-centric orchestration
✅ Haystack – pipelines and routing for RAG systems
✅ CrewAI – role-based agent collaboration

▶️ Each tackles a different slice of the same idea: LLMs need a control plane.

The Takeaway

⚙️ Agents are no longer prompts. They’re runtimes.
⚙️ The teams that win won’t have the cleverest prompt; they’ll have the best orchestrator.
⚙️ If you’re building agents and can’t answer where state lives, how execution is controlled, or how behavior is evaluated, you’re still in demo mode.

Orchestration is how agents grow up. 📈
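To make the six-way split concrete, here is a minimal sketch of one orchestrated turn in plain Python. It is not how any of the frameworks listed above implement it. Every name in it (`AgentState`, `TOOLS`, `ALLOWED_TOOLS`, `fake_model`, `run_turn`) is hypothetical, and `fake_model` is a stand-in for a real LLM call.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class AgentState:
    """State: what persists across turns."""
    history: list[str] = field(default_factory=list)
    log: list[dict] = field(default_factory=list)  # Evaluation: what actually happened


# Tools: a registry the runtime can validate against (hypothetical examples).
TOOLS: dict[str, Callable[[str], str]] = {
    "echo": lambda arg: arg,
    "upper": lambda arg: arg.upper(),
}

# Policies: what is allowed. Here only "upper" may run.
ALLOWED_TOOLS = {"upper"}


def build_context(state: AgentState, task: str) -> str:
    """Context: what the model sees this turn (last three tasks plus the new one)."""
    return "\n".join(state.history[-3:] + [task])


def fake_model(context: str) -> tuple[str, str]:
    """Stand-in for an LLM call; always asks for 'upper' on the newest line."""
    return ("upper", context.rsplit("\n", 1)[-1])


def run_turn(state: AgentState, task: str, model=fake_model) -> dict:
    """Execution: the runtime, not the prompt, decides what happens next."""
    tool_name, arg = model(build_context(state, task))
    if tool_name not in TOOLS:
        outcome = {"tool": tool_name, "status": "unknown_tool", "result": None}
    elif tool_name not in ALLOWED_TOOLS:
        outcome = {"tool": tool_name, "status": "blocked", "result": None}
    else:
        outcome = {"tool": tool_name, "status": "ok", "result": TOOLS[tool_name](arg)}
    state.history.append(task)
    state.log.append(outcome)
    return outcome
```

Calling `run_turn(AgentState(), "hello")` runs the allowed tool and records the outcome in `state.log`. Swapping in a model that requests `"echo"` shows the policy check blocking a tool that exists but is not permitted.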

0

0

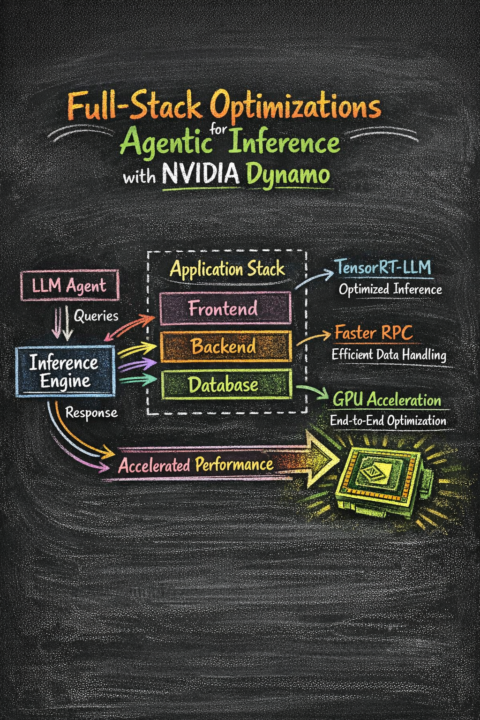

🧠 From Agent to Acceleration: The NVIDIA Integrated Flow

I just found a must-read piece on the future of agentic AI: Full-Stack Optimizations for Agentic Inference with NVIDIA Dynamo. If you’re currently building AI agents, here’s a quick breakdown of how this framework standardizes and accelerates the stack.

The diagram illustrates the pathway from an LLM Agent (like an AI co-pilot or autonomous task manager) to high-performance execution on an NVIDIA GPU.

1. LLM Agent ➡️ Queries ➡️ Inference Engine: The agent sends complex, iterative queries to the core Inference Engine.
2. Application Stack: At the heart of the optimization is the integration with your standard software stack (Frontend, Backend, Database). Dynamo coordinates with these layers.
3. Specific Optimizations:

💥 The Result: Accelerated Performance
By synchronizing all parts of the application stack and connecting them directly to the underlying NVIDIA GPU hardware, Dynamo speeds up the whole path from agent query to GPU execution. This isn't just about speed; it’s about making complex, multi-step agent behaviors viable and responsive in real-world applications.

💥 Why this matters: Agentic workflows are computation-intensive. Without these deep, integrated optimizations, they can be slow and expensive. NVIDIA Dynamo provides a blueprint for making them efficient and scalable.

If you’re interested in reading more, here are some articles:

➡️ LMCache: An Efficient KV Cache Layer for Enterprise-Scale LLM Inference
➡️ KVFlow: Efficient Prefix Caching for LLM-Based Multi-Agent Workflows
➡️ CONCUR: High-Throughput Agentic Batch Inference via Congestion-Based Concurrency Control

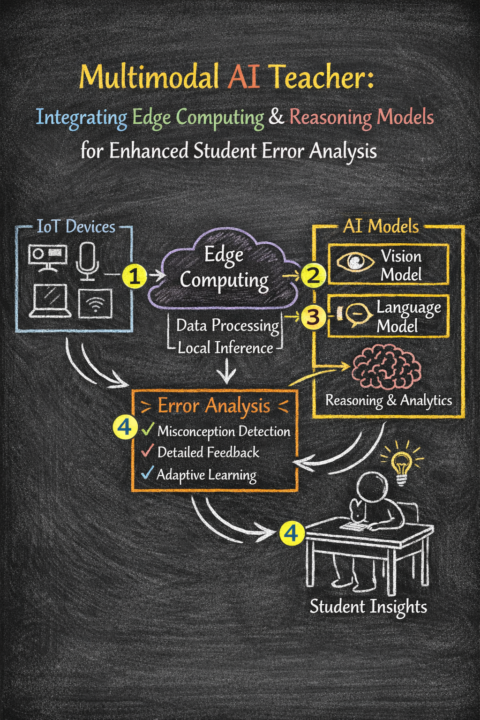

Multimodal AI Teacher: The Future of Personalized Learning

Education has always been constrained by one fundamental bottleneck. A single teacher cannot give every student individualized attention at the same time. A student struggling silently in the back row, a misconception repeated across thirty homework submissions, a language learner mispronouncing the same word for weeks: these are problems that scale works against. Multimodal AI is beginning to change that.

What Is a Multimodal AI Teacher?

A Multimodal AI Teacher is not a chatbot. It is a system that sees, listens, reasons, and responds, combining computer vision, natural language processing, and reasoning models to understand what a student is doing and where they are going wrong, in real time. Unlike single-modal AI tools that only process text, a multimodal system integrates:

- Visual input: handwriting, diagrams, facial engagement cues, screen activity
- Audio/text input: spoken answers, written responses, chat interactions
- Reasoning: synthesizing both to detect patterns, misconceptions, and learning gaps

The result is a system that behaves less like a grading tool and more like an attentive teaching assistant that never sleeps.

How the System Works

The architecture follows a clean four-stage pipeline:

1. Capture (IoT Devices): Smart cameras, microphones, laptops, and tablets in the classroom capture student interactions continuously. These are standard devices; nothing exotic is required.
2. Process Locally (Edge Computing): Raw data flows to an on-premise edge server, not the cloud. Local inference handles data processing at low latency. This is the privacy backbone of the entire system: student data never leaves the campus network, which supports FERPA and GDPR compliance.
3. Analyze (Vision + Language Models): Two AI models work in parallel:
   - Vision Model (e.g., LLaVA, PaliGemma): reads handwritten work, diagrams, and visual cues
   - Language Model (e.g., Llama 3, Mistral): processes speech transcripts and written text

   Both run locally using tools like Ollama or vLLM on campus GPU servers.
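The "two models in parallel" step can be sketched with Python's standard `concurrent.futures`. This is only an illustration of the fan-out and join, under the assumption that each model sits behind a blocking call. `analyze_vision` and `analyze_language` are hypothetical placeholders that return stub results; they are not Ollama or vLLM calls.

```python
from concurrent.futures import ThreadPoolExecutor


def analyze_vision(image_ref: str) -> dict:
    """Placeholder for a local vision-model call (hypothetical, returns a stub)."""
    return {"source": "vision", "input": image_ref, "finding": "stub"}


def analyze_language(transcript: str) -> dict:
    """Placeholder for a local language-model call (hypothetical, returns a stub)."""
    return {"source": "language", "input": transcript, "finding": "stub"}


def analyze_in_parallel(image_ref: str, transcript: str) -> dict:
    """Run both analyses concurrently and join the results for the reasoning step."""
    with ThreadPoolExecutor(max_workers=2) as pool:
        vision_future = pool.submit(analyze_vision, image_ref)
        language_future = pool.submit(analyze_language, transcript)
        # .result() blocks until each call finishes and re-raises any exception.
        return {
            "vision": vision_future.result(),
            "language": language_future.result(),
        }
```

Threads are a reasonable fit here because a real model call would mostly be waiting on a local inference server rather than doing Python-side computation.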

Your Claude API call is eating 1.2 seconds. Here is when that stops being acceptable.

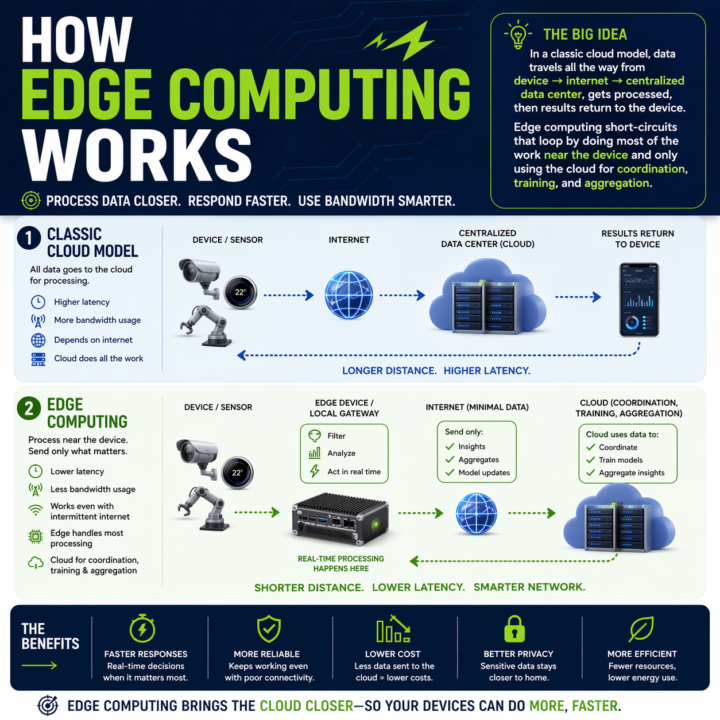

Every automation you build has a round trip baked in. Claude API, OpenAI call, n8n webhook, vector DB lookup. Data leaves the device, travels to a data center, gets processed, comes back. On a good day a cached Claude call runs 600ms to 1.2s. On a bad day you are watching a spinner.

Where that round trip breaks:

- Real-time perception in vehicles or robotics
- Industrial control loops that cannot wait on a network
- Anywhere connectivity is spotty or intermittent
- High-volume sensor data where shipping everything to the cloud burns bandwidth and budget

Edge changes the math. Compute runs where the data is generated. Local work stays local. Cloud only sees what needs cross-site context.

The split that is emerging:

- Edge: real-time inference, event detection, filtering, local control
- Cloud: model training, cross-site analytics, long-term storage, heavy compute

On-device SLMs are usable now. Llama 3.1 8B on an M-series Mac via Ollama hits sub-100ms first token. Haiku-class reasoning is running on phones with NPUs.

If your automation touches physical systems, live audio, live video, or anything latency-sensitive, you have a real placement decision to make: edge, cloud, or hybrid.

The pattern I keep reaching for: route by cost of latency. If a one-second delay breaks the experience, run it local. If the step needs cross-context memory or a frontier model, send it up. Cheap decisions at the edge, expensive ones in the cloud, with the edge doing the filter so the cloud only sees signal.

The architecture question has changed. Not "which cloud model do I call," but "where does each step of this pipeline need to run."

What is running in your stack right now that should not be making a cloud round trip? What is stopping you from moving it?
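A rough sketch of "route by cost of latency" as a placement function. The `Step` fields, the `place` helper, and the 1000 ms threshold (taken from the "one-second delay" rule of thumb) are assumptions for illustration, not measured values or anyone's production logic.

```python
from dataclasses import dataclass

# Assumed cloud round-trip cost, based on the one-second rule of thumb above.
CLOUD_ROUND_TRIP_MS = 1000


@dataclass(frozen=True)
class Step:
    name: str
    latency_budget_ms: int              # longest wait before the experience breaks
    needs_cross_context: bool = False   # needs memory or data from other sites
    needs_frontier_model: bool = False  # too hard for a small on-device model


def place(step: Step) -> str:
    """Return 'edge', 'cloud', or 'hybrid' for one pipeline step."""
    needs_cloud = step.needs_cross_context or step.needs_frontier_model
    too_tight_for_cloud = step.latency_budget_ms < CLOUD_ROUND_TRIP_MS
    if needs_cloud and too_tight_for_cloud:
        # Edge answers or filters first; the cloud only sees what survives.
        return "hybrid"
    if needs_cloud:
        return "cloud"
    # Local work stays local, whether or not the budget is tight.
    return "edge"
```

For example, `place(Step("obstacle detection", 50))` returns `"edge"`, while a slow weekly report that needs cross-site data lands in `"cloud"`.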

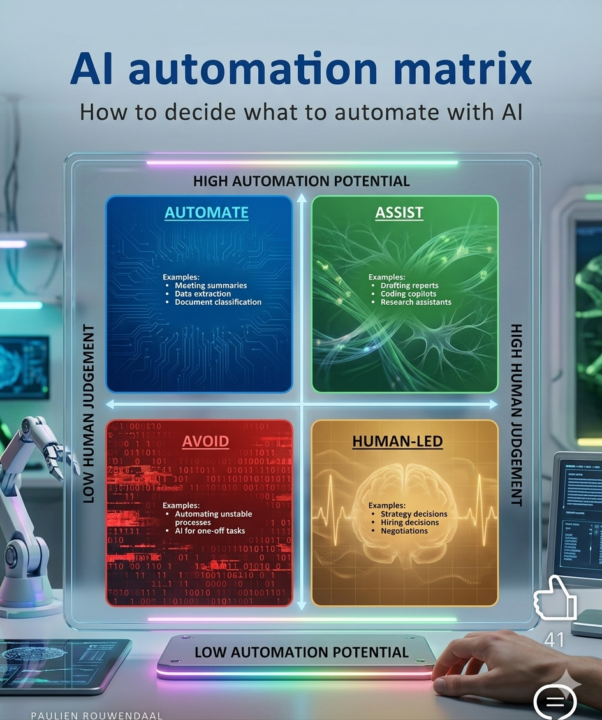

AI Automation Matrix: Working Decision

This visual guide helps you work smarter, not harder. Learn to:

▶️ Automate low-judgment, repetitive tasks.
▶️ Get AI to assist you with high-potential, complex ones.
▶️ Human-lead critical decisions and creative work.
▶️ Avoid the trap of automating unstable processes.
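One way to read the four rules as a decision function. The matrix itself is only in the image, so the flags and the order of the checks below are my assumptions, and `recommend_mode` is a hypothetical name.

```python
def recommend_mode(
    *,
    repetitive: bool,
    low_judgment: bool,
    stable: bool,
    critical_or_creative: bool,
) -> str:
    """Map a task's traits to one of the four outcomes in the list above."""
    if critical_or_creative:
        return "human-led"
    if repetitive and low_judgment:
        # An unstable process should be fixed before it is automated.
        return "automate" if stable else "stabilize first"
    # Everything else is treated as complex work where AI assists a person.
    return "AI-assisted"
```

Checking `critical_or_creative` first is a deliberate choice in this sketch: a critical decision stays human-led even if it happens to be repetitive.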

5

0


@mohammed-roqa-7379

Technologist, Creator and Handsome

Active 7m ago

Joined Jan 20, 2026
