
Memberships

Human AI Alliance

520 members • Free

L'Alliance 🤝

269 members • Free

AI SoftLife Society

699 members • Free

Learning Pretty Academy + AI

395 members • Free

Poised 4 Profits in AI™©️

237 members • Free

Future Proof

352 members • Free

The Prompt Playground

725 members • Free

OpenClaw and Autonomous AI

127 members • Free

AI & Free Intelligence

199 members • Free

5 contributions to AI Automation Network

🚀 New Video: Build Powerful AI Dashboards with n8n & Lovable (Full Guide)

In this video, I’ll show you how to build an AI-powered dashboard that visualizes Google Sheets data and enables real-time interaction with an AI agent, using Lovable and n8n.

We’ll cover:
- Frontend AI dashboard setup
- n8n backend workflow design
- Google Sheets data integration
- AI agent with data context
- Chat interface for data queries

🚀 Perfect for AI builders, automation engineers, and founders who want to create interactive data dashboards with AI-driven insights. Use this as a foundation and start customizing your own workflows.

Resources: Lovable, n8n, Google Sheet

How Chatbots Actually Work: From User Message to AI Response

I have previously given lectures on LLM orchestration, the RAG pipeline, multi-modal models, and multi-agent architecture. Building on those lectures, I will explain how to implement chatbot functionality.

A chatbot MVP is essentially a system that takes a user message → understands it → optionally looks things up → generates a response → returns it.

You can express this as a simple loop:

    User
     ↓
    UI
     ↓
    Orchestrator
     ├── LLM
     └── Knowledge Base (RAG)
     ↓
    Response

The 5 Core Components of a Chatbot MVP

Break the system into 5 understandable parts:

① User Interface (UI)
- Chat screen (web, app, Slack, etc.)
- Where users type messages

② Backend Controller (Orchestrator)
- The “brain” that decides what to do next
- Routes requests between components
- Connection to the previous lectures: this is where **LLM orchestration logic** lives.

③ Large Language Model (LLM)
- Generates responses
- Understands natural language

④ Knowledge / Data Layer (optional but critical for MVP+)
- Documents, database, APIs
- Used in **RAG (Retrieval-Augmented Generation)**

⑤ Memory (optional but powerful)
- Conversation history
- User preferences

Contact information: Telegram: @kingsudo7, WhatsApp: +81 80-2650-2313
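The five components described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the lecture's implementation: every name here (`Memory`, `KnowledgeBase`, `Orchestrator`, `placeholder_llm`) is hypothetical, the knowledge lookup is a naive keyword match standing in for real RAG retrieval, and the LLM is a placeholder function where a real system would call a model API.

```python
import re
from dataclasses import dataclass, field
from typing import Callable


def tokens(text: str) -> set[str]:
    """Lowercased word set, used for the naive keyword match below."""
    return set(re.findall(r"\w+", text.lower()))


@dataclass
class Memory:
    """(5) Memory: conversation history as (role, text) pairs."""
    turns: list[tuple[str, str]] = field(default_factory=list)

    def add(self, role: str, text: str) -> None:
        self.turns.append((role, text))


class KnowledgeBase:
    """(4) Knowledge layer: keyword lookup standing in for RAG retrieval."""

    def __init__(self, documents: list[str]) -> None:
        self.documents = documents

    def search(self, query: str) -> list[str]:
        query_words = tokens(query)
        # Keep every document that shares at least one word with the query.
        return [doc for doc in self.documents if query_words & tokens(doc)]


def placeholder_llm(prompt: str) -> str:
    """(3) LLM: hypothetical stand-in; a real system would call a model here."""
    return f"(model reply to a {len(prompt)}-character prompt)"


class Orchestrator:
    """(2) Backend controller: routes a message through memory, lookup, and LLM."""

    def __init__(self, llm: Callable[[str], str], kb: KnowledgeBase, memory: Memory) -> None:
        self.llm = llm
        self.kb = kb
        self.memory = memory

    def handle(self, message: str) -> str:
        self.memory.add("user", message)
        context = "\n".join(self.kb.search(message))      # optional lookup
        history = "\n".join(f"{role}: {text}" for role, text in self.memory.turns)
        prompt = f"Context:\n{context}\n\nConversation:\n{history}\nassistant:"
        reply = self.llm(prompt)                          # generate a response
        self.memory.add("assistant", reply)
        return reply                                      # returned to the UI


# (1) UI: a fixed message stands in for the chat screen in this sketch.
demo_bot = Orchestrator(
    placeholder_llm,
    KnowledgeBase(["Opening hours are 9 to 5.", "Refunds take 3 days."]),
    Memory(),
)
print(demo_bot.handle("What are your opening hours?"))
```

Passing the LLM in as a plain callable keeps the orchestrator independent of any particular model provider, so the placeholder can later be swapped for a real client without touching the routing logic.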


Can this be used to promote mobile apps too?

Would this automation also work well for fitness mobile apps? I need promotional content for my mobile app that is aimed at people who use the app to reach their fitness goals. Would I be able to simulate characters using the phone with the app and talking about it too?

🚀 New Lecture: Multi-Agent Architecture (Production Systems)

Today I’m starting a lecture on Multi-Agent Architecture, focusing on how modern AI systems move beyond single LLM prompts and into coordinated agent ecosystems. In real-world AI products, the challenge isn’t generating text; it’s orchestrating multiple agents that can plan, reason, and execute tasks reliably.

In this session we’ll break down:
• Core architecture patterns for multi-agent systems
• Agent orchestration, routing, and task decomposition
• Tool usage and memory management
• Building reliable pipelines instead of fragile prompt chains
• Real production use cases from modern AI systems

The goal is simple: move from demos to production-grade AI architectures. If you're building with LLMs, AI agents, or automation pipelines, understanding multi-agent design patterns will be one of the most important skills going forward.

More details and implementation walkthrough coming in the lecture. Let’s build systems that actually scale. ⚙️


🔮🚀🔜💡 For the future 🔮🚀🔜💡

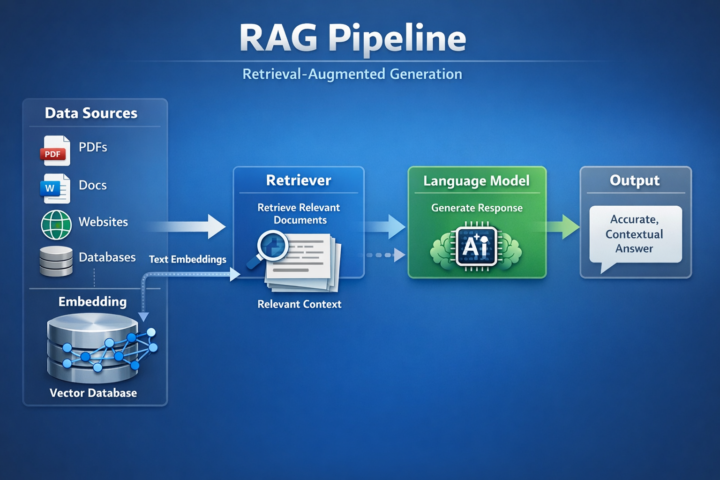

Today, following our discussion of LLM orchestration, we introduce the RAG pipeline. The RAG (Retrieval-Augmented Generation) pipeline is a key element in building AI systems that give accurate, context-aware answers. It combines the generative capabilities of language models with document search, so that AI responses are grounded in the user's data rather than relying solely on the model's prior knowledge.

The accompanying diagram illustrates the RAG pipeline: how data is retrieved, processed, and used to generate answers. This approach improves answer quality and also lets you extend what the system can answer by adding new content.

We welcome any questions related to software, including issues encountered during learning and development. Our goal is "for the future".
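The retrieve-then-generate flow described in this post can be sketched as follows. This is a rough illustration under stated assumptions rather than the pipeline from the diagram: the function names (`embed`, `cosine`, `retrieve`, `build_prompt`) are hypothetical, and a bag-of-words count vector stands in for a real embedding model. The resulting prompt is what would be passed to a language model in the generation step.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': word counts (assumption; real systems use a model)."""
    return Counter(re.findall(r"\w+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two count vectors; 0.0 if either is empty."""
    dot = sum(a[term] * b[term] for term in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, documents: list[str], top_k: int = 2) -> list[str]:
    """Retrieval step: rank documents by similarity to the query."""
    query_vec = embed(query)
    ranked = sorted(documents, key=lambda d: cosine(query_vec, embed(d)), reverse=True)
    return ranked[:top_k]


def build_prompt(query: str, passages: list[str]) -> str:
    """Augmentation step: place the retrieved passages in front of the question."""
    context = "\n".join(f"- {p}" for p in passages)
    return (
        "Answer using only the context below.\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}\nAnswer:"
    )


docs = [
    "The warranty lasts two years.",
    "Shipping takes five business days.",
    "Our office is in Osaka.",
]
question = "How long does the warranty last?"
# The prompt below would be sent to the language model for generation.
print(build_prompt(question, retrieve(question, docs, top_k=1)))
```

Adding a new document to `docs` changes what the system can answer without touching the model, which is the extensibility point the post makes.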


Active 6h ago

Joined Mar 16, 2026
