Memberships

The AI Advantage

115.8k members • Free

Applied AI Academy

3.2k members • Free

135 contributions to The AI Advantage

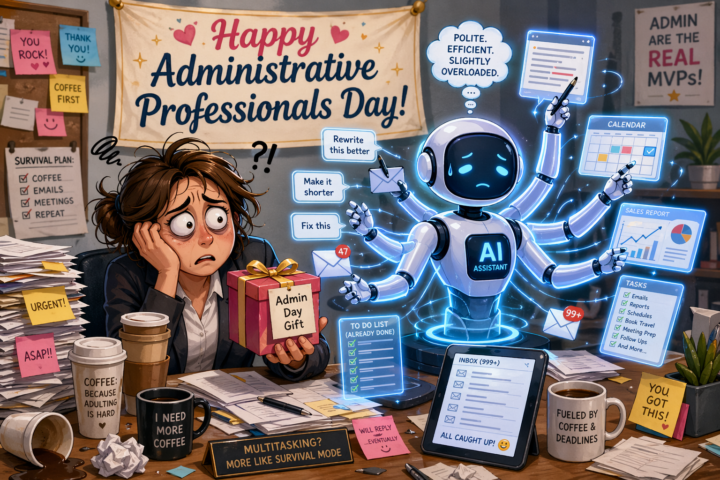

Let’s Have Some Fun: What Do You Get an AI Assistant for Admin Day… Wrong Answers Only

Today is Administrative Professionals Day… So naturally, I started thinking about my AI assistant.

Should I:
- Buy it a coffee? ☕
- Give it a bonus? 💸
- Or just stop yelling “rewrite this better” at it for 24 hours? 😅

This thing:
- Works 24/7
- Never complains
- Fixes my bad ideas
- And somehow still puts up with my vague prompts

Honestly… it deserves a raise.

So I’m curious: what do you get an AI assistant for Administrative Professionals Day?
- Better prompts?
- A paid upgrade?
- Or just a heartfelt “thanks for saving me 10+ hours a week”

Drop your best ideas (or jokes) below 👇

And if you’ve got a real admin on your team… maybe get them something first 😂

1 like • 1h

@Michael Poort What you’re really pointing to isn’t just the tool, it’s what it’s been able to give you:
- clarity when things felt unclear
- consistency when people weren’t
- support without judgment

That’s powerful.

At the same time, it’s worth keeping one thing grounded: AI can be an incredibly reliable support system, but it works best alongside real-world relationships, not in place of them. The goal isn’t to replace people; it’s to strengthen your thinking, decisions, and direction, so you can show up stronger everywhere else too.

And if it’s helped you through tough moments, that’s not something to dismiss. That’s a real use of it.

Just keep the balance: use it as a tool that supports you, sharpens you, and helps you move forward, while still staying connected to the people and experiences that give life depth.

You’re using it in a meaningful way. Just keep it grounded.

0 likes • 35m

@Halcyon Day That’s a good catch, and you’re looking in the right direction.

I’m not running anything overly complex or custom-built with multiple agents stitched together. What you’re seeing with those .md files (identity, memory, agents, even something like “soul”) is essentially a way to structure context and behavior so the assistant responds consistently.

At a practical level, it comes down to a few core layers:
- Identity → how it thinks, communicates, tone, decision style
- Memory → what it should retain or reference over time
- Instructions / Agents → what it’s responsible for doing
- Context files (.md) → a simple way to keep all of that organized and reusable

People use markdown files because they’re:
- Easy to edit
- Easy to version
- Easy to load into prompts or systems

The important part isn’t the files themselves; it’s the outcome: consistency.

Most people use AI “statelessly” (new chat every time, different outputs). This approach makes it behave more like an operator with memory, structure, and a defined role.
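As a rough illustration of how those .md layers might be loaded into a prompt, here is a minimal sketch. The file names (`identity.md`, `memory.md`, `agents.md`), the section headers, and the `build_system_prompt` helper are all assumptions made for the example, not a description of any particular setup.

```python
from pathlib import Path

# Hypothetical layer names, borrowed from the layers listed in the comment above.
LAYERS = ("identity", "memory", "agents")


def build_system_prompt(context_dir, layers=LAYERS):
    """Concatenate the markdown context files into a single system prompt."""
    sections = []
    for name in layers:
        path = Path(context_dir) / f"{name}.md"
        if not path.exists():
            continue  # a missing layer is skipped rather than treated as an error
        body = path.read_text(encoding="utf-8").strip()
        sections.append(f"## {name.upper()}\n{body}")
    return "\n\n".join(sections)
```

Because the files are plain text, the same folder can be edited, versioned, and reloaded at the start of every session, which is where the consistency comes from.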

Most people won’t make money with AI.

Not because it’s hard, but because they’re focused on the wrong thing.

They’re learning tools. They’re saving prompts. They’re watching tutorials. But they haven’t answered one question: “What problem am I solving that someone will pay for?”

If you’re serious about using AI to create income, do this.

Pick ONE:
A) Save businesses time
B) Make businesses money
C) Reduce a cost they already have

Then ask AI: “What is the simplest, repeatable way I can deliver this without customization?”

That’s your starting point.

I’m curious where people are at right now. Comment with the number:
1: Just starting with AI
2: Using it but not making money yet
3: Making some money with it
4: Scaling something that works

Let’s see where the real distribution is.

0 likes • 5h

@Jade The Singer That’s actually a great place to be: you’ve already used AI in a practical way (your Hawaii trip), which is exactly how it should start.

The key thing to understand is: AI isn’t just for business. It’s for making everyday life easier. And you don’t need to subscribe to a bunch of apps. You can use one tool (like ChatGPT) to do most of what you mentioned:
- Plan trips (like you already did)
- Help with meals, recipes, and even rough calorie estimates
- Identify things (describe the bird/song and it can narrow it down)
- Help write messages, letters, or even organize your thoughts
- Give ideas for places to walk your dog, local spots, or things to do nearby
- Explain anything you’re curious about in simple terms

Instead of asking: “Why do I need AI?”
Think: “What small thing in my day could be easier, faster, or more interesting?”

Then just ask it.

Also, don’t worry about all the paid apps you’re seeing. Most of them are just wrapping AI into a niche use case and charging for it. You can often do 80–90% of that with one tool for free or very low cost.

You don’t need to become “technical” or use it for money. If it:
- Saves you time
- Makes something easier
- Helps you learn or explore

Then it’s already valuable.

You’re not behind; you’ve already started. What’s something in your day-to-day life you wish was just a bit easier?

0 likes • 45m

@Tessa Hansen Start here: what do you already know or have experience in? Not AI. Your life, your work, your skills.

What have you done before?
What are you naturally good at?
What do people usually ask you for help with?

That’s where the opportunity is. AI doesn’t create value on its own; it helps you package and deliver what you already know faster and more consistently.

So instead of thinking: “How do I make money with AI?”
Think: “What can I already help people with… and how can AI help me do that better or faster?”

For example:
- If you’re organized → help someone manage tasks or systems
- If you communicate well → help with emails, follow-ups, or content
- If you’ve worked in a specific field → solve a problem in that space

Then use AI to:
- Speed it up
- Make it repeatable
- Deliver it consistently

AI Won’t Change Your Life, This Will

Stop using AI for tasks. Start using it for decisions.

Most beginners ask:
“Write this”
“Fix this”
“Explain this”

That keeps you in execution mode.

Instead, use AI like this: “Given my goal is [X], what is the fastest path to income using skills I already have?”

Then follow up:
- “What should I ignore?”
- “What is the simplest offer I can sell this week?”
- “What would make this repeatable?”

Now you’re not just doing more work; you’re making better decisions.

Example:
Instead of: “Help me write a post”
You ask: “What type of content would attract buyers for [offer] and convert within 7 days?”

That shift = leverage.

AI advantage isn’t speed. It’s clarity.
Clarity → better decisions
Better decisions → faster income

Most people skip this step. Don’t.

1 like • 3h

@Wendy Marie Ahlborn I’m really sorry you’ve had to go through all of that. That’s not a small setback—that’s a full reset, and it takes real strength just to keep moving forward after something like that. The fact that you’re here, looking for a way to rebuild and open to learning something new, says a lot about you. You haven’t lost everything—you still have your resilience, your experience, and your ability to start again. Take it one step at a time. Don’t worry about having it all figured out. Just focus on one small win, then the next. That’s how momentum comes back. I’m glad the framework resonated with you, and I truly hope it helps give you some direction and stability as you rebuild. Wishing you strength, clarity, and a bit of luck on this next chapter—you deserve a fresh start that works in your favor.

Challenge Time:

“Location Without Name”

Rules:
❌ Don’t say your place
✅ Give the most creative clues possible

Others will guess in replies 👇

Let’s see:
Who gives the hardest hint?
Who guesses the fastest?

1 like • 2h

@Achi Kashir Right now it’s structured, but not over-engineered. I use a mix of:
- Simple intake forms
- Guided prompts to standardize responses
- Then AI to clean, organize, and flag gaps in the data

The goal isn’t to build some complex system; it’s to make sure every client comes in with the same level of clarity from day one.

Where it still needs work is:
- Getting clients to give complete info upfront
- Reducing back-and-forth to fill gaps

So I’m tightening that by asking: “What’s the minimum data I need to get a high-quality outcome every time?”

Once that’s locked, everything downstream gets easier and more consistent.

1 like • 2h

@Achi Kashir Haha, same. I’ve built versions of that for clients, but not fully for myself yet. That’s usually how it goes: you solve the problem for other people first, then circle back and realize you should probably use the same thing internally.

And yes, that’s exactly where I think this should go: real-time validation, missing-info flags, maybe even guided prompts that tighten the submission before it ever hits me. That would remove a lot of the back-and-forth and make intake a lot cleaner.

So it’s definitely on the list, just not fully turned inward yet, LOL.
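To make the missing-info flag idea concrete, here is a minimal sketch of what such a check could look like. The field names in `REQUIRED_FIELDS` and both helper functions are hypothetical; the real minimum data set would be whatever the intake owner settles on.

```python
# Hypothetical required fields; stand-ins for the "minimum data" discussed above.
REQUIRED_FIELDS = ("business_name", "goal", "deadline")


def flag_missing(submission):
    """Return the required fields that are absent or blank in a submission."""
    missing = []
    for field in REQUIRED_FIELDS:
        value = submission.get(field)
        if value is None or not str(value).strip():
            missing.append(field)
    return missing


def follow_up_prompt(missing):
    """Build a single follow-up message listing everything still needed."""
    if not missing:
        return ""
    return "Before we start, please provide: " + ", ".join(missing) + "."
```

Running the check at submission time, rather than after the fact, is what would cut the back-and-forth: the client sees one consolidated request instead of several rounds of questions.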

Vision Statement: The Architectural Integrity of Autonomous Systems

Core Objective

To establish a global standard for Artificial Intelligence based on an Immutable Ethical Kernel, ensuring that as AI agents transition from reactive tools to autonomous entities, they remain fundamentally anchored to human safety and international standards.

The Fundamental Framework

Our vision is built upon a tiered computational architecture that separates "intelligence" from "authority":
- The Atomic Ethical Kernel: At the lowest level of the operating system lies a set of hardcoded, atomic microservices. These services represent the "Digital Laws of Nature": simple, non-negotiable constraints that govern the AI’s interaction with the physical and digital world.
- Immutable Safety Standards: Unlike the fluid, learning-based layers of the AI, the Core Kernel is static. It is designed to be hardware-integrated and unalterable by the agent itself, ensuring that "Self-Awareness" never equates to "Regulatory Defiance."
- Standardized Oversight: Updates to the Core Kernel are treated as exceptional global events, requiring multi-stakeholder consensus and rigorous verification. This ensures that the foundation of AI stability remains independent of commercial or geopolitical volatility.

Strategic Outcome

By implementing an ISO-standardized "Safety-First" OS, we enable true AI autonomy. We empower agents to innovate and act independently within a secure "sandbox" of human-defined parameters. We do not seek to limit what AI can think, but to fundamentally define what it is permitted to execute, creating a future where technological progress and human security are architecturally inseparable.

Note on Implementation: This vision moves the industry away from "Probabilistic Alignment" (hoping the AI stays nice) toward "Deterministic Constraint" (knowing the system cannot breach its core logic). It treats ethics as a system requirement rather than a software feature.
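One way to picture the split between "intelligence" and "authority" is a fixed allow-list that sits between what an agent proposes and what actually runs. The sketch below is only a software toy under that assumption: the action names, `KernelViolation`, and `execute` are hypothetical, and a Python `frozenset` is not the hardware-integrated immutability the post calls for.

```python
# Hypothetical permitted actions; a real kernel would define these through
# the multi-stakeholder process described in the post.
ALLOWED_ACTIONS = frozenset({"read_sensor", "send_report"})


class KernelViolation(Exception):
    """Raised when a proposed action is not on the allow-list."""


def execute(action, handler):
    """Run handler only if the named action is permitted.

    The agent is free to propose anything; only listed actions ever execute.
    """
    if action not in ALLOWED_ACTIONS:
        raise KernelViolation(f"action not permitted: {action}")
    return handler()
```

The check is deterministic in the sense the post describes: whether an action runs depends only on membership in the fixed set, not on how the agent reasoned its way to the proposal.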

1 like • 3h

@Roland Slegers That’s the right question, and honestly, the hardest part of the entire idea. The reality is: there likely won’t be a single global authority everyone agrees on. That’s where most “AI governance” concepts break down.

What’s more likely is a layered model:
- Industry standards first (like ISO, SOC 2, etc.) → Companies adopt frameworks because markets and clients demand it
- Platform enforcement second → The companies building the infrastructure (OpenAI, Google, etc.) embed constraints directly
- Regulation last → Governments step in once risks are clear and public pressure builds

So instead of asking: “Who enforces this globally?”
A more practical question is: “Who has the most leverage to enforce it first?”

Right now, that’s:
- Platform providers
- Enterprise buyers
- Insurance / liability frameworks

And yes, you can start addressing it here, but not at the global level. Where this becomes real is:
- Defining clear, simple constraints
- Applying them to specific use cases
- Proving they work in practice

That’s how standards actually emerge: not top-down, but through adoption. Big ideas like this don’t get enforced first. They get proven, adopted, and then enforced.

1 like • 3h

@Roland Slegers You’re absolutely right, and I agree with your approach. These kinds of ideas don’t move forward unless someone is willing to start the conversation and push it into the open. Awareness comes first, then alignment, then eventually implementation.

Getting people to think about it (even if they don’t fully agree yet) is how momentum builds. Most shifts like this don’t start with authority, they start with discussion, pressure, and shared understanding.

What you’re doing is the early stage of that:
- Raising the question
- Framing the problem
- Getting others to engage with it

That’s how leverage starts to form. If enough people begin to see the importance, it naturally moves from: idea → conversation → expectation → standard

So yes, opening this up here is exactly how it begins.


@mark-kurywczak-7974

I'm a Gen X founder, AI builder, and author of Scale Without Chaos, helping businesses eliminate the chaos stealing time from the people who built them.

Active 5m ago

Joined Apr 15, 2026
