Owned by James

Using AI expertly, effectively and safely by connecting AI, Cybersecurity, Project Management and Governance into a disciplined framework.

Memberships

47 contributions to ThisLocale

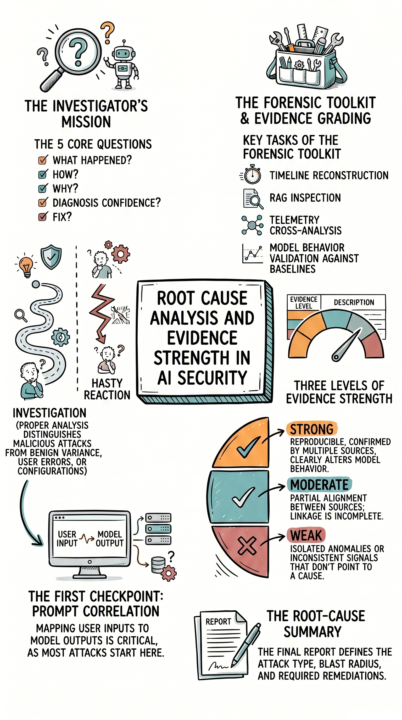

Foundations of AI & Cybersecurity - Lesson 41: Module/Chapter 2.6.7 Determining Root Cause and Evidence Strength

When an AI security event occurs, the instinct is often to contain it fast. The better move is to investigate before you react.

In reality, many AI incidents are misdiagnosed because teams rush to mitigation before determining root cause. That can mean fixing the symptom while leaving the real compromise untouched. Most teams don’t struggle because they lack tools. They struggle because they lack disciplined evidence analysis.

Today’s module explains: Root Cause Analysis and Evidence Strength in AI Security

This is vital because effective response starts with reconstructing timelines, correlating prompts and telemetry, validating model behavior, inspecting retrievals and tool activity, and judging whether the evidence is strong, moderate, or weak before acting.

If you’re responsible for AI, security, project management, governance, or technology decisions, this is where trust becomes investigable, auditable, and defensible. In AI security, the quality of your response depends on the quality of your diagnosis.

#AI #Cybersecurity #AIProjectManagement #AIGovernance #AISecurity #AICybersecurity

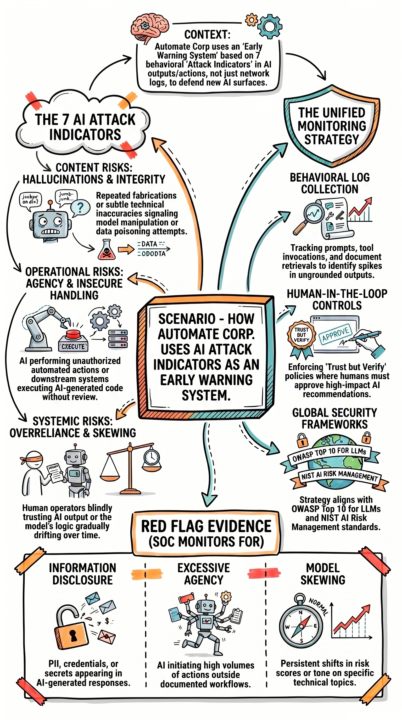

Foundations of AI & Cybersecurity - Lesson 40: Module/Chapter 2.6.6 Scenario for Identifying Direct Model-Targeted Attacks

Most AI security programs focus on preventing attacks. The real problem is not seeing them early enough.

In reality, AI systems rarely fail all at once. They show warning signs first, through behavior, outputs, and subtle decision shifts that most teams ignore. Teams often lack a structured, proactive way to monitor AI behavior as a security signal.

Today’s scenario lesson explains: How Automate Corp. Uses AI Attack Indicators as an Early Warning System

This matters because hallucinations, output manipulation, data leakage, insecure automation, excessive agent power, human overreliance, and model drift are not isolated risks. They are signals that an attack or failure is already in motion.

If you’re responsible for AI, security, project management, governance, or technology decisions, this is where monitoring evolves from logs and alerts to behavioral intelligence. Once you treat AI behavior as a security signal, you stop reacting to incidents and start detecting them before they escalate.

#AI #Cybersecurity #AIProjectManagement #AIGovernance #AISecurity #AICybersecurity

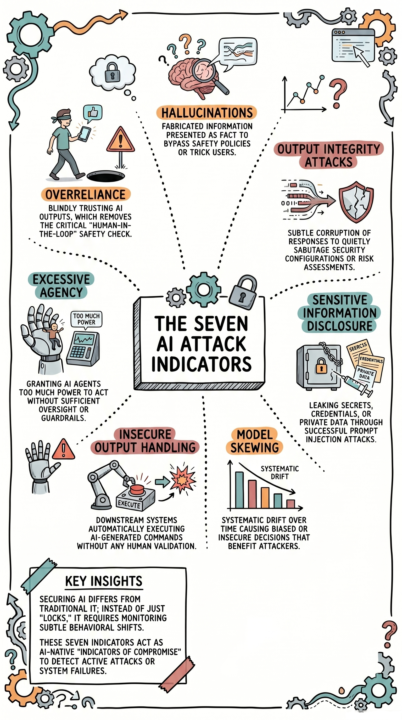

Foundations of AI & Cybersecurity - Lesson 39: Module/Chapter 2.6.5 Identifying Direct Model-Targeted Attacks

AI failures don’t look like cyberattacks. They look like normal behavior that no one questions.

In reality, the earliest signs of an AI compromise show up as subtle shifts: strange outputs, quiet data leaks, or decisions that feel slightly off but still get accepted. Teams struggle because they lack visibility into these early warning signals.

Today’s lesson explains: The Seven AI Attack Indicators

This matters because hallucinations, output manipulation, sensitive data exposure, insecure automation, excessive agent power, blind trust, and model drift are not edge cases. They are the primary ways AI systems fail in real environments.

If you’re responsible for AI, security, project management, governance, or technology decisions, this is where control shifts from reactive monitoring to proactive detection. Once you recognize these indicators, you stop treating AI issues as anomalies and start treating them as signals.

#AI #Cybersecurity #AIProjectManagement #AIGovernance #AISecurity #AICybersecurity

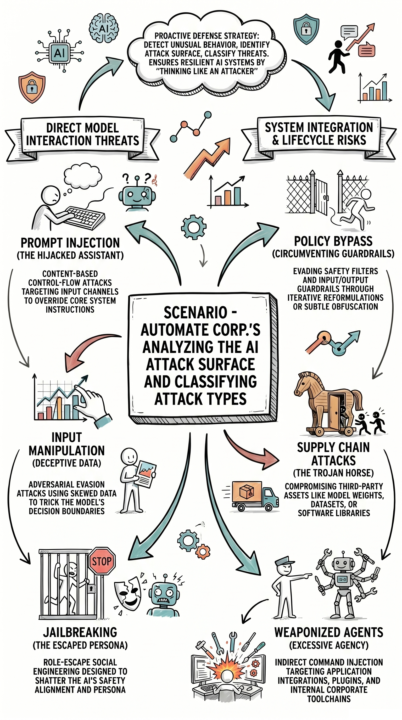

Foundations of AI & Cybersecurity - Lesson 38: Module/Chapter 2.6.4 Scenario on Analyzing the Attack Surface & Classify the Attack Type

Most AI security failures don’t start with sophisticated hackers. They start with teams misunderstanding where they’re exposed.

In reality, attackers don’t break systems. They guide them. Most teams don’t struggle because they lack tools. They struggle because they lack structured awareness of how AI can be manipulated across its entire surface.

Today’s scenario lesson explains: Automate Corp.: Analyzing the AI Attack Surface and Classifying Attack Types

This matters because every AI system introduces multiple entry points. Prompt injection, data manipulation, guardrail bypass, and supply chain risks aren’t isolated issues. They’re interconnected paths attackers use to shift control of your system without ever “breaking in.”

If you’re responsible for AI, security, project management, governance, or technology decisions, this is where trust becomes measurable and enforceable. Once you can identify the attack surface and classify the threat, you move from reacting to incidents to designing systems that anticipate them.

#AI #Cybersecurity #AIProjectManagement #AIGovernance #AISecurity #AICybersecurity

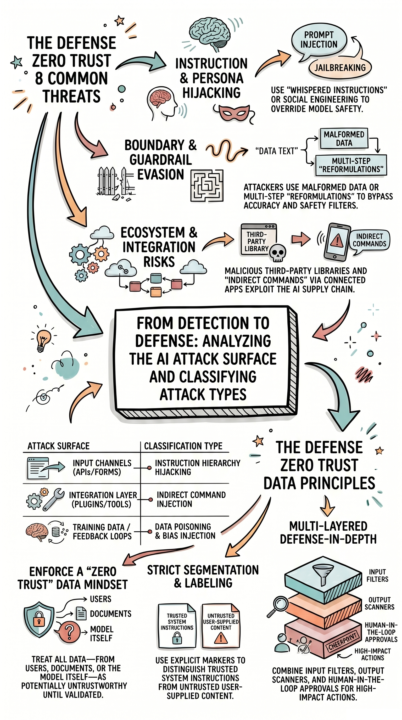

Foundations of AI & Cybersecurity - Lesson 37: Module/Chapter 2.6.3 Analyzing the Attack Surface & Classify the Attack Type

Most AI security efforts stop at detecting a problem. In reality, detection is only the beginning. Real security comes from understanding the attack and applying the right control. Best practice is to analyze the attack surface and classify the attack type before responding.

Today’s module explains: From Detection to Defense: Analyzing the AI Attack Surface and Classifying Attack Types

Prompt injection, input manipulation, guardrail bypass, jailbreaking, bias injection, integration abuse, supply chain compromise, and insecure plugins are not random issues. They are structured attack types that require specific, layered controls.

This matters because without proper classification, teams apply the wrong defenses, leaving the same vulnerabilities open to repeat attacks.

If you’re responsible for AI, security, project management, governance, or technology decisions, this is where reactive security becomes engineered defense.

#AI #Cybersecurity #AIProjectManagement #AIGovernance #AISecurity #AICybersecurity

@james-dutcher-6548

Expertly using AI by incorporating Cybersecurity, Project Management, and Governance

Active 3h ago

Joined Jan 2, 2026

ENTJ

Endicott, NY 13760