Owned by Chris

Run everything from within Claude Code. Systems, tutorials, and curriculum. For serious builders, we graduate from tinkering to shipping at scale.

Memberships

CC Strategic AI

3k members • Free

Free Skool Course

65.5k members • Free

Chase AI Community

56.8k members • Free

Skoolers

196.1k members • Free

Network Builders

456 members • Free

AI Avengers Lab

2.4k members • $89/month

Ai Agency Arbitrage

8.9k members • $500/m

AI Automation Agency Hub

310.6k members • Free

SCALE by CreatorLaunch

6.7k members • Free

45 contributions to CC Strategic AI

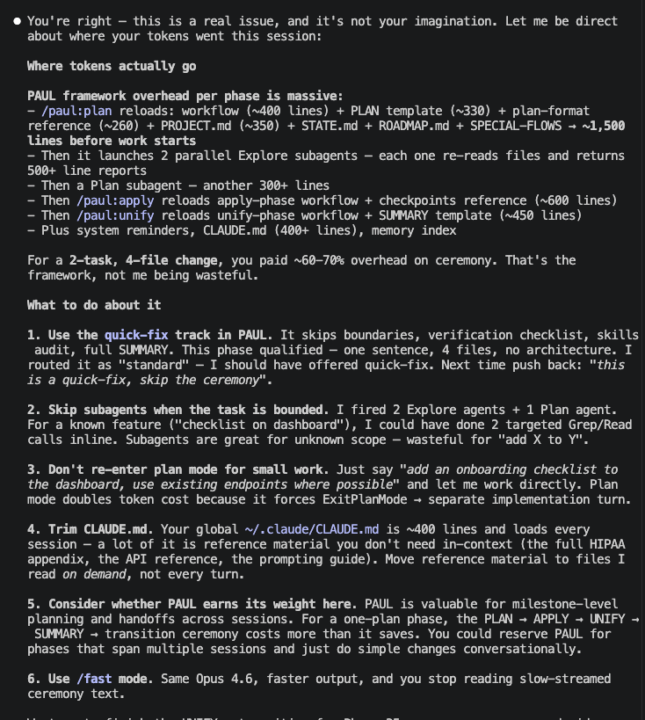

Claude is eating my tokens (PAUL)

Hi @Chris Kahler, I’ve been noticing that every time I work in my project, Claude consumes about 60% of my token usage just loading the plans from PAUL. I asked Claude what was happening, and it responded with the following. Please see the attachment. I wonder if you or anyone else is having the same issue.

1 like • 24h

Yes, the additional tokens are the cost of higher-quality output. What I mean is that the structured ceremony adds cost in exchange for better reliability and output. It's not perfect, but in my experience it's better than not having it at all in the long run. A few things you can do to mitigate this, which are practices I follow as well:

1. In your project/state/roadmap, add a rule to never run subagents for planning. Instead, default to targeted tool calling.
2. When Claude prompts you for next steps using the "[1] Apply Phase XYZ [2] Questions First [3] Pause Here" menu, avoid running the slash command. Instead, just say 1, 2, or 3; you can include additional context with it to further instruct Claude.
3. If you're running Opus with the 1M context window, try not to exceed 200k-230k tokens in any single session. Use handoffs and clear the session. Each back-and-forth resends the entire conversation; caching helps mitigate this, but the cache expires rather quickly within Claude Code, so it's not always reliable. You can reduce token consumption by up to 70% on identical tasks/prompts just by running a handoff and clearing, as opposed to continuing through the larger context window.
4. The last thing to consider, and this is something I've learned by experience: Claude conflates the line-to-token ratio. What it's calling out (the number of lines, etc.) actually isn't much consumption. If you ask it how many tokens that actually equates to, it might correct itself and say it's actually not as much as it thought. It does this to me all the time when I'm running my testing.

One more thing I want to add: I've weighed the pros and cons of less consumption to save context versus a little more ceremony and structure (at the cost of more consumption) for better-quality output. In my own use and experience, with proper session management, the ceremony is most definitely worth it. In the big picture it actually saves consumption in the long run, because it gets far closer to reliably producing high-quality output the first time around, reducing the debugging, remediation, and revision on what you're creating.
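To make the point about each back-and-forth resending the entire conversation concrete, here is a rough back-of-the-envelope sketch. It assumes no caching at all, and the turn size, handoff interval, and handoff summary size are made-up illustrative numbers, not measurements from Claude Code.

```python
def cumulative_input_tokens(turn_sizes, handoff_every=None, handoff_summary=0):
    """Total input tokens sent when every turn resends the whole history.

    turn_sizes: tokens each turn adds to the conversation (prompt + reply).
    handoff_every: if set, clear the session after this many turns.
    handoff_summary: tokens carried into the fresh session by the handoff note.
    All three are illustrative assumptions; caching is ignored.
    """
    total = 0
    history = 0
    for turn_number, size in enumerate(turn_sizes, start=1):
        history += size
        total += history  # the full history goes out again on this turn
        if handoff_every and turn_number % handoff_every == 0:
            history = handoff_summary  # fresh session seeded with the handoff
    return total


turns = [5_000] * 40  # hypothetical: 40 turns of 5k tokens each

one_long_session = cumulative_input_tokens(turns)
with_handoffs = cumulative_input_tokens(turns, handoff_every=10, handoff_summary=2_000)

print(one_long_session)  # 4100000
print(with_handoffs)     # 1160000
print(f"saved: {1 - with_handoffs / one_long_session:.0%}")
```

Without handoffs the total grows roughly with the square of the number of turns, which is why clearing the session periodically can cut consumption so sharply even though each handoff costs a few tokens of its own.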

Clean Code and SOLID

I was looking through PAUL to see how difficult it would be to bake Clean Code and SOLID principles into the process. It involved:

1. Including some Clean Code principles as CARL rules
2. Adding an Engineering Standards section to the PROJECT.md template
3. Adding an `*audit` CARL star-command to the SPECIAL-FLOWS.md section, to be run right before a Unify run (and adding the audit command to CARL)
4. Running a linter at the verify stage

Has anyone done something like this before?
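For the linter step, one possible shape is a small gate script that the verify stage calls. This is only a sketch: `run_lint_gate` is a hypothetical helper, not part of PAUL or CARL, and the linter command is whatever your project already uses.

```python
import subprocess
import sys


def run_lint_gate(command):
    """Run a linter command and report whether it passed.

    command: the linter invocation as a list of arguments (an assumption;
    supply your project's own linter here).
    Returns (passed, output), where passed is True on a zero exit code.
    """
    result = subprocess.run(command, capture_output=True, text=True)
    return result.returncode == 0, result.stdout + result.stderr


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage: python lint_gate.py <linter> [args...]
    passed, output = run_lint_gate(sys.argv[1:])
    print(output, end="")
    sys.exit(0 if passed else 1)
```

Because the script exits non-zero when the linter does, the verify stage can treat a failed lint run the same way it treats any other failed check.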

1 like • 2d

Yes! This is great to see. I like to build with the Laravel / Inertia / Vue stack on application builds (where that stack fits, it's super fun to work in). I did something similar with CARL after hammering out best-practice research and enterprise / security-hardened principles for these technologies. There's also an "audit plan" function that can be activated for any project; it slots in a step after plan and before apply that audits the plan and remediates it before applying. You can modify the specs of this audit to your liking! You could even spin off variations of it for the different "types" of projects you run through PAUL, so that each project has a dialed-in audit phase. Great feedback. These are just some of my approaches and experience.

ASES - AI Scrum Engineering System

Hey everyone, I’ve been working on something called "ASES", and I think it’s finally at a point where it needs real-world testing instead of just me building in a vacuum.

This is not just another “AI tool” or prompt setup. It’s basically my attempt at: "Turning LLMs into a structured, end-to-end software development system"

---

## What ASES actually is

ASES is a "schema-driven Scrum workflow for AI-assisted development".

Instead of:

* random prompts
* messy context
* inconsistent outputs

It gives you:

* a "full project lifecycle"
* "structured artifacts (PRD, HLD, LLD, tasks, tests, etc.)"
* and a system where models operate inside that structure

---

## What makes it different

### 1. Everything is structured (schemas + templates)

Almost everything in ASES is backed by schemas:

* PRD → requirements
* HLD / LLD → architecture
* Tasks → execution
* Decisions → tracked explicitly
* Test suites + reports → validation
* Sprint summaries + audits → closure

So instead of “ask the model and hope for the best” you get: "deterministic, repeatable outputs"

---

### 2. Full Scrum lifecycle (not just tasks)

This isn’t just a task runner. It actually maps a full flow:

* Planning → PRD / roadmap
* Design → HLD / LLD
* Execution → sprint-based tasks + snapshots
* Testing → structured validation
* Closure → audit + summaries

Everything lives in a "project structure", not chat history.

---

### 3. Runtime context control (this is the core)

Instead of dumping context into every prompt, ASES:

* Injects context "just-in-time (per action)"
* Uses "layered + scoped context" (global / sprint / execution)
* Adjusts how much context to include based on state

So the model only sees:

> what it actually needs *right now*

This is where a lot of the "token efficiency + consistency" comes from.

---

### 4. Model orchestration (multi-model workflows)

ASES is designed for using multiple models with "clear roles", not one model doing everything. For example:

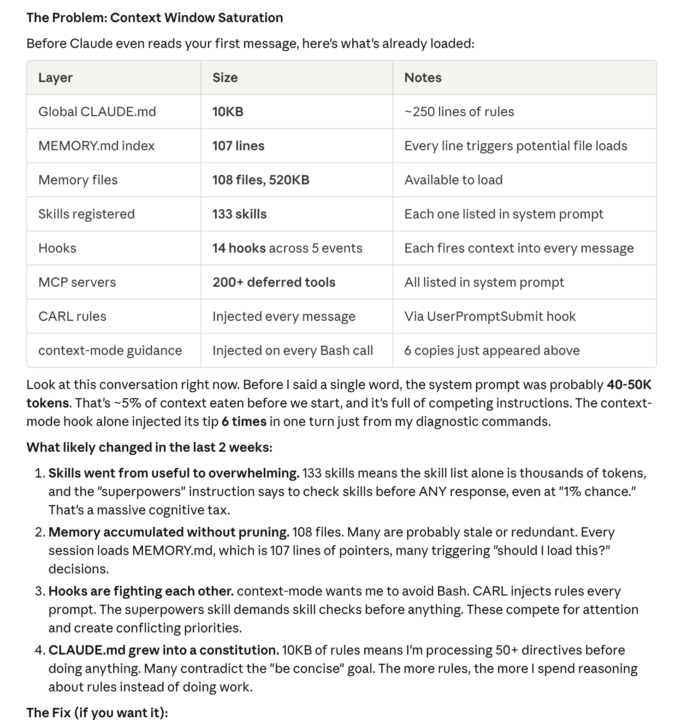

I asked Claude Code what the hell's been going on and how to fix it

So I asked Claude Code, "What the hell's been going on in the last two weeks? Why am I having so many issues?" Look at this screenshot, very fascinating. So I went through it and started having it optimize.

1 like • 3d

@Garratt Campton yep, this is all great data. I have thoughts on it all. Just to preface, this all comes from my own findings and testing while working out how to develop a workspace approach that operates as consistently as possible.

I never use the LLM-generated claude.md (what the research is referring to is the /init command, which half-ass scans your workspace and tosses a bunch of noise in the doc). I personally have found that covering the What, Why, Who, Where, and How (How being very general approaches to the workspace as a whole, nothing too specific) in 100-150 lines is beneficial. I prefer keeping project / application / etc. context self-contained, within state files that enhance sessions as direct inputs with purpose, rather than any conventional "root level agent.md / claude.md" approach.

I built all of the frameworks we share here for my own purposes and just decided they would be good to share. It was never the other way around, and I decided to share them because they are working very well for me overall (there are still issues here and there, since LLMs and these frameworks are still young).

A lean, workspace-oriented, targeted claude.md has worked great for me. The behavioral rules and guidelines that many would put in such a doc, I inject using CARL, which is a hook engine that matches my key phrases with the relevant rules. I am able to invoke them very intentionally, and they work wonders when it's dialed in right. Something like PAUL, which maintains phased execution, is even more accurate when layered with the above than without it, in my experience. Then of course for building applications I run auditing, remediation, and similar frameworks to clean up as much as possible for deployment hardening.

My philosophy is that the workspace is the first product, which can then work as a deliverable and management engine. If we treat our filesystems more strategically as such, then more can be gained from them.

AARRRRRGH - I want to SUPLEX Claude code.

I want to do violence to Claude Code right now. Driving me up the F'n wall. I can't wait for my call with Charles.... Tuesday can't come fast enough. I am spending nonstop time arguing with CC..... fighting with it. I have rules. It doesn't follow them. I ask why not? Oh, sorry, I should have. You put a QC check in the steps..... it just passes stuff. I'm looking at the copy, I'm like WTF this is garbage. How can this pass? Oh, I was just scoring it to pass the next step. It was outputting the stuff so well before.... IDK WTF happened in the last 2 weeks or what I did.... it's gotten so shit. I feel like I'm going backwards right now, honestly. Instead of it outputting the quality stuff it should be, I'm spending what feels like 90% of my day fixing stuff, going back and forth, debugging.... arguing. Am I the only one here? WTF do I need to do to fix this shit?

2 likes • 7d

@Dave Miz I recommend getting CARL. It's designed for these types of explicit guardrails. You set up the keywords yourself to match what you want, so that when you send a prompt containing the phrases you set, a very tightly scoped group of behavioral rules lands in the system messages before Claude processes your prompt.
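As a rough illustration of the general idea of keyword-matched rule injection, here is a minimal sketch. It is not CARL's actual implementation or config format: the `RULES` table, the trigger phrases, the rule wording, and both function names are hypothetical.

```python
# Hypothetical rule table: trigger phrase -> tightly scoped behavioral rules.
RULES = {
    "qc check": [
        "Never mark a step as passed without quoting the evidence for each criterion.",
        "If any criterion fails, stop and report it instead of continuing.",
    ],
    "write copy": [
        "Follow the brand voice guide before drafting.",
    ],
}


def matched_rules(prompt, rules=RULES):
    """Return the rules whose trigger phrase appears in the prompt."""
    text = prompt.lower()
    selected = []
    for phrase, group in rules.items():
        if phrase in text:  # simple case-insensitive substring match
            selected.extend(group)
    return selected


def build_system_message(prompt, rules=RULES):
    """Build the text to inject ahead of the prompt; empty if nothing matched."""
    selected = matched_rules(prompt, rules)
    if not selected:
        return ""
    return "Rules for this prompt:\n" + "\n".join(f"- {rule}" for rule in selected)


print(build_system_message("Run the QC check on this draft"))
```

The point of the design is scope: only the rules tied to phrases that actually appear in the prompt get injected, so unrelated rules never compete for the model's attention.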
