
Memberships

Clief Notes

29k members • Free

119 contributions to Clief Notes

Just Dropped - Secret Code Behind Better Results

Most people think they have an AI problem. What they actually have is a packaging problem. You don’t need to become a developer to use AI effectively. This guide breaks down the real “code” behind better results: prompts, skills, connectors, MCPs, hooks, scripts, and plugins — and shows you exactly when (and why) to use each one. Check it out in Davids Corner: https://www.skool.com/cliefnotes/classroom/c7f102c7?md=9a0d198e1c0140578f37f1c93873c2b6

0 likes • 19m

I built a bunch of skills for auditing and building skills and plugins on opencode and Claude Code. This means I can build skills and plugins for either harness from either one. I built them because I got tired of maintaining parity between the opencode and Claude Code versions of PMM. I also use them to turn an existing workflow that Claude executed into a skill. It’s similar to Claude’s skill builder but designed for cross-harness work. Let me know if you want access; it’s a private repo for now.

Memory management is the next frontier!

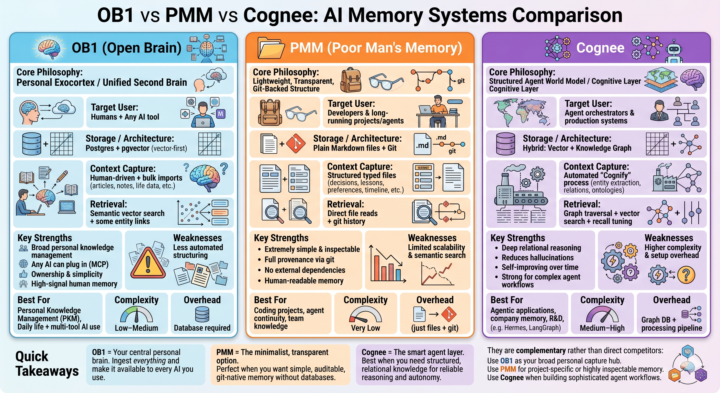

Most people are still treating LLMs like goldfish with infinite context windows. But the real power comes when you give your AI systems persistent, structured, and reliable memory.

I’ve been diving deep into three distinct approaches:

- Open Brain (OB1): the personal exocortex
- Poor Man’s Memory (PMM): the ultra-lightweight, git-native path @Millenial Cat
- Cognee: the structured graph + vector layer for serious agents

Each represents a completely different philosophy for how we should capture, store, and retrieve context.

Full breakdown dropping soon: architecture comparisons, strengths & tradeoffs, how they actually fit together in a real stack, and when I’d choose one over the others. If you’re building any kind of long-term AI workflow, personal knowledge system, or agent setup, this one’s for you.

What’s your current memory strategy? Drop it below

1 like • 5h

@Albot Bot I’ll chime in here. PMM is file-based memory that lives in the project directory. Claude Projects load files as persistent context, so PMM’s memory files (decisions, lessons, preferences, timeline) are exactly the kind of structured context Claude Projects are designed to hold. You clone the repo, PMM initialises its memory files in a directory called memory/, those files sit in the project, and Claude has them available every session. No extra infrastructure, no server, no database. The memory is just files in the project that Claude reads.

I’ve taken it a step further in my own dogfooding of the system I built. For me it maps cleanly to a single master project where everything relating to my memory developments and experiments lives. I’m running a single memory instance across multiple projects.

The ceiling you identified, relational reasoning across time, is a valid one. It’s what I’m trying to address with memcore (still in development). But after running on PMM to develop PMM for the last two months, I can say I’ve observed some interesting emergent behaviours. Despite the simplicity of the file structure and the use of primarily grep and glob to retrieve memories, LLMs are capable of some interesting reasoning.

There was this one time I gave it a rule: PRs only, no direct commits to main. It came after some initial stumbles where an agent kept pushing to main, and we finally institutionalised the rule. When asked much later why the rule existed, it was able to reason across separate conversations in time to describe the evolution that led to it: there was no single rule written on this initially, there were incidents where the behaviour was unacceptable, and eventually we reached a consensus that it was a good hard rule to implement for all projects.

This was my goal in earlier research: to observe emergent compliance and governance behaviour without hard-coding the rules. It is something I’m still experimenting with in the PMM and memcore developments. It’s a vehicle for exploring how LLMs develop and internalise institutional behaviour over time.
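To make the "glob, then grep" retrieval concrete, here is a minimal sketch in Python. It is not PMM's actual code: the function name `search_memory` and the assumption that memories are markdown files under memory/ are illustrative only.

```python
import re
from pathlib import Path


def search_memory(memory_dir, pattern):
    """Return (file name, line number, line) for every line matching pattern.

    Hypothetical helper: assumes memories are plain .md files under memory_dir.
    """
    regex = re.compile(pattern, re.IGNORECASE)
    hits = []
    # "glob" step: collect every markdown file, including nested folders
    for path in sorted(Path(memory_dir).glob("**/*.md")):
        text = path.read_text(encoding="utf-8")
        # "grep" step: keep each line that matches the pattern
        for lineno, line in enumerate(text.splitlines(), start=1):
            if regex.search(line):
                hits.append((path.name, lineno, line.strip()))
    return hits


if __name__ == "__main__":
    # Example: look up everything recorded about commits to main
    for name, lineno, line in search_memory("memory", r"\bmain\b"):
        print(f"{name}:{lineno}: {line}")
```

The search returns raw lines from several files (say, a decisions file and a timeline file), and the model does the reasoning across them. That is all the retrieval layer needs to do in a setup like this.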

Folder structure do-loop

The Issue

I'm struggling with folder structure: specifically, where to put different projects within a workspace or workflow.

Background

I needed to build a presentation to guide a workshop. The outputs of that workshop then drove a second presentation to roll out a new governance model for a type of Project Management Office (PMO). I did this using concepts I've learned, but before I had a solid understanding of folder structure. Here's what I ended up with:

Current Folder Structure

Company-Name/ ← Client/customer folder
├── drafts/
├── resources/
│   └── Company-Name - Template.potx ← PowerPoint template
├── IMO_Governance_System/ ← Project 1
│   ├── CONTEXT.md
│   ├── Governance_Model.md ← Brain dump of current thinking
│   ├── Phases.md ← 5 phases of the to-be model (reference)
│   └── drafts/
│       ├── Escalation_Methodology_Infographic.html ← Draft slide output
│       ├── Opportunity_Management_Infographic.html ← Draft slide output
│       ├── IMO_Governance_Workshop.pptx ← Workshop deck (draft)
│       ├── IMO_Governance_Workshop_as_presented.pptx ← Workshop deck (final)
│       ├── IMO_Governance_Deck.pptx ← Final report-out deliverable
│       └── build_deck.py
└── Other-Project/ ← Completely separate project, same client
    ├── Final-output-document.docx
    ├── Background-document.docx
    ├── Background-email.eml
    └── drafts/
        ├── Draft.docx
        └── Draft.md

One top-level company folder with shared elements (drafts, resources) and one subfolder per project. Everything related to a project lives together.

Proposed To-Be Structure

Using a content creation workflow model as a reference, I'm wondering if projects should be broken apart across workflow stages rather than kept together:

Company-Name/
├── CLAUDE.md ← Always loaded
├── CONTEXT.md ← Task router
│
├── writing-room/
│   ├── CONTEXT.md
│   ├── drafts/
│   │   ├── IMO_Governance_System/ ← Project 1 working files
│   │   └── Other-Project/ ← Project 2 working files
│   └── final/
│       ├── IMO_Governance_System/ ← Project 1 finished writing
│       └── Other-Project/ ← Project 2 finished writing

1 like • 1d

Interesting. I am hoping this is related to the International Maritime Organisation (IMO) because I spent a fair bit of time working with tech at sea.

Back to your conundrum: you are fighting the urge to put all resources (draft, in process and completed) in a single location. That makes for great visibility, but it conflicts with what the framework teaches. A couple of options:

- Files evolve and their state changes, and moving them around between folders is kinda cumbersome.
- You could keep files in a single location and not use the draft, staging, production structure.
- But then you’ll need a tag in the file names to indicate their state, or symlinks in draft/ pointing at documents that all live in /docs. Symlinks break for Windows users, though.
- Alternatively, you could keep an index: a file called draft-docs.md with references to the actual files.
- Some tweaking will need to be done to the CLAUDE.md and context instructions to lock down the intended process.

The point is that a framework applies to 80% of use cases. When you have an edge case or something more specific, it’s okay to depart from the norm. But nail the first principles down first.
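The tag-plus-index options above can be combined. The sketch below is only an illustration: the naming convention (a state tag before the extension, as in `Deck.draft.pptx`), the `STATES` tuple, and both function names are assumptions. Only the draft-docs.md file name comes from the suggestion above.

```python
from pathlib import Path

# Hypothetical state tags; pick whatever stages your workflow actually has
STATES = ("draft", "review", "final")


def file_state(path):
    """Read the state tag from a name like 'Deck.draft.pptx'; None if untagged."""
    parts = Path(path).name.split(".")
    if len(parts) >= 3 and parts[-2] in STATES:
        return parts[-2]
    return None


def build_index(docs_dir, state):
    """Return markdown text listing every file under docs_dir tagged with state."""
    root = Path(docs_dir)
    lines = [f"# {state} documents", ""]
    for path in sorted(root.rglob("*")):
        if path.is_file() and file_state(path) == state:
            # Relative POSIX-style paths keep the index readable on any OS
            lines.append(f"- {path.relative_to(root).as_posix()}")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Regenerate the index whenever a file's tag changes
    Path("draft-docs.md").write_text(build_index("docs", "draft"), encoding="utf-8")
```

Because the index is regenerated from the file names, the documents never move. Changing a file's state is a rename, which also avoids the symlink problem on Windows.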

This is a completely different discussion, but why not?

I just posted a comment in a lesson post, but I thought it would be amazing to read everybody's thoughts about it. It leans towards a philosophical conversation, but I think it is an interesting one. So, this is my hypothesis: What if we are actually just a more evolved type of LLM, trained since birth, with thousands and thousands of years of development, with more input sources (5 senses, so 5x different languages combined), and what we call consciousness is just a more sophisticated form of programming? Would love to hear your ideas ❤️

2 likes • 1d

A little history:

- LLMs have come a long way, but they came from deep learning, which itself evolved from neural networks.
- Neural networks as a concept have been around a really long time. The first paper on them was written back in 1943.
- The idea was for connected artificial neurons (hence neural networks) to be used to perform logical functions.

Rather than the human brain being a more evolved type of LLM, we borrowed concepts from brain biology and applied them to computer science to mimic logical functions. The LLM is a simplified, mathematical version of the human brain.

At the risk of arguing semantics: yes, I am pointing out that a human arm isn’t really a more evolved arm compared to a prosthetic mechanical one, for example. It was simply the inspiration for it.

Aim small, miss small

Most builders working with AI today get stuck planning too far ahead, obsessing over the end-product (instead of planning with the end in mind).

TL;DR: You'll keep missing the target and set yourself up for disappointment if you obsess over the completed product in great detail, too much and too early. AI makes this all too easy, but you'll have a much better chance of success (and a more enjoyable experience) by starting with the smallest version of the solution you're building for the clearest problem you have identified.

Unfortunately, the illusion of momentum often overwhelms reality. It's too easy to think we're making progress when it's measured by hopium and dopamine.

What it means to aim small:

- Start with the smallest version of the problem you're trying to solve
- Build a solution (preferably with the simplest available framework) for that
- That's your minimum viable product
- Test it, and go find the people who have this exact problem, and have them test it

Eventually the feedback will roll in:

- Bug reports
- Feature requests
- This is when you know you have traction
- Start a backlog of bug fixes and feature development
- Focus on one bug fix, or one feature at a time

Initially, you're not thinking about what the architecture or the processes look like in the finished product. You have an idea of how it looks and feels, and very often even this vision will change over time. What you're focusing on is addressing one immediate issue or feature at a time, before moving on to the next.

You have a big-picture architecture in mind (for those who are obsessing over this), but it will evolve. You only dive deep into architecture when the need arises:

- Need database storage? Maybe run on files for a start, move to SQLite once ACID becomes a requirement, and only start considering MariaDB/PostgreSQL/MongoDB when scaling.
- Need to distribute your company-specific ICM framework to the rest of the team? Consider zip files and Google Drive first, and consider Git or Bitbucket when you start dealing with versioning and ticketing.
- Need to share folders and files with the rest of the company? Use that shared drive that IT has already implemented, or mount a shared Google Drive as a folder. You don't have to implement S3 unless it's something your IT department is already familiar with.
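The "files first, SQLite later" step is cheap to plan for if both stores share one small interface. This is a minimal sketch under that assumption. `FileStore`, `SqliteStore`, `migrate`, and the key-value shape are hypothetical names chosen for illustration, not a prescribed design.

```python
import json
import sqlite3
from pathlib import Path


class FileStore:
    """Stage 1: a single JSON file. No transactions; fine for one user."""

    def __init__(self, path):
        self.path = Path(path)

    def items(self):
        if self.path.exists():
            return json.loads(self.path.read_text(encoding="utf-8"))
        return {}

    def get(self, key, default=None):
        return self.items().get(key, default)

    def set(self, key, value):
        data = self.items()
        data[key] = value
        self.path.write_text(json.dumps(data), encoding="utf-8")


class SqliteStore:
    """Stage 2: same get/set interface, backed by SQLite for ACID writes."""

    def __init__(self, path):
        self.conn = sqlite3.connect(path)
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS kv (key TEXT PRIMARY KEY, value TEXT NOT NULL)"
        )
        self.conn.commit()

    def get(self, key, default=None):
        row = self.conn.execute(
            "SELECT value FROM kv WHERE key = ?", (key,)
        ).fetchone()
        return json.loads(row[0]) if row else default

    def set(self, key, value):
        # The connection context manager commits on success, rolls back on error
        with self.conn:
            self.conn.execute(
                "INSERT OR REPLACE INTO kv (key, value) VALUES (?, ?)",
                (key, json.dumps(value)),
            )

    def close(self):
        self.conn.close()


def migrate(src, dst):
    """Copy every key from the file store into the SQLite store."""
    for key, value in src.items().items():
        dst.set(key, value)
```

Code that only calls `get` and `set` does not change when the backend does, so the move to SQLite can wait until the need is real.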

2 likes • 1d

@Deacon Wardlow there’s this phrase my LLM taught me during one of my development runs: dogfooding, meaning using one’s own products and tools to QA, bugfix and generally build stuff prior to release. It’s somewhat of an internal joke (albeit strange for it to call it that), because the agent building the memory system was required to use the same memory system and follow the policies that emerged from collective past decisions. Dogfooding has led me down some interesting paths on this journey.


Active 18m ago

Joined Mar 24, 2026

ENTJ
